From Copilot Mode Experiment to Always‑On Edge Intelligence

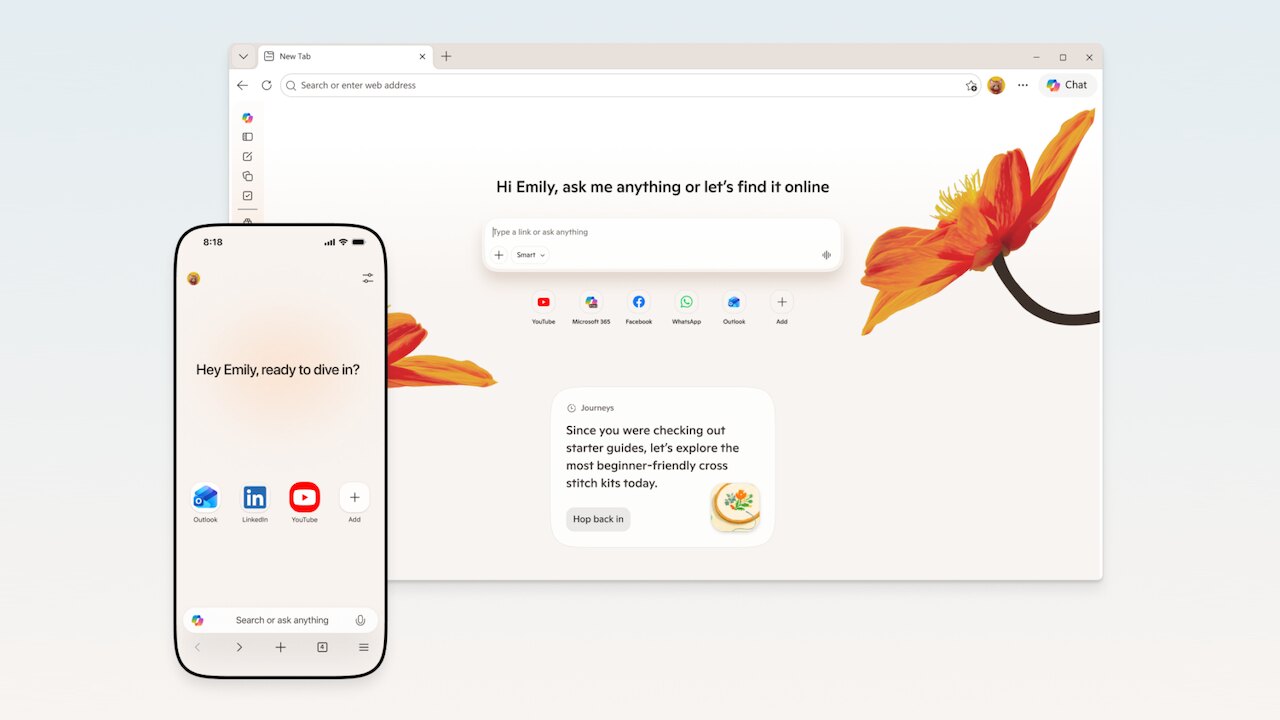

Microsoft is phasing out the separate Copilot Mode in Edge and folding its AI tools directly into the core browser interface. Instead of switching into a dedicated environment, users now tap the Copilot button in Edge to access multi-tab reasoning, browsing context, and writing help as part of their normal sessions. Microsoft positions this shift as a workflow upgrade: Edge becomes the starting point where search, chat, and navigation converge, rather than a portal to a standalone AI experiment. The move also aligns desktop and mobile, with features like Journeys and AI-assisted navigation designed to follow users across Windows, Mac, Android, iPhone, and iPad. Availability still varies by market and subscription tier, but the strategic direction is clear: Edge is being rebuilt as an AI browser, with Edge tab intelligence quietly underpinning everyday research, planning, and decision-making.

How Multi‑Tab Reasoning and Tab Comparison Change Web Research

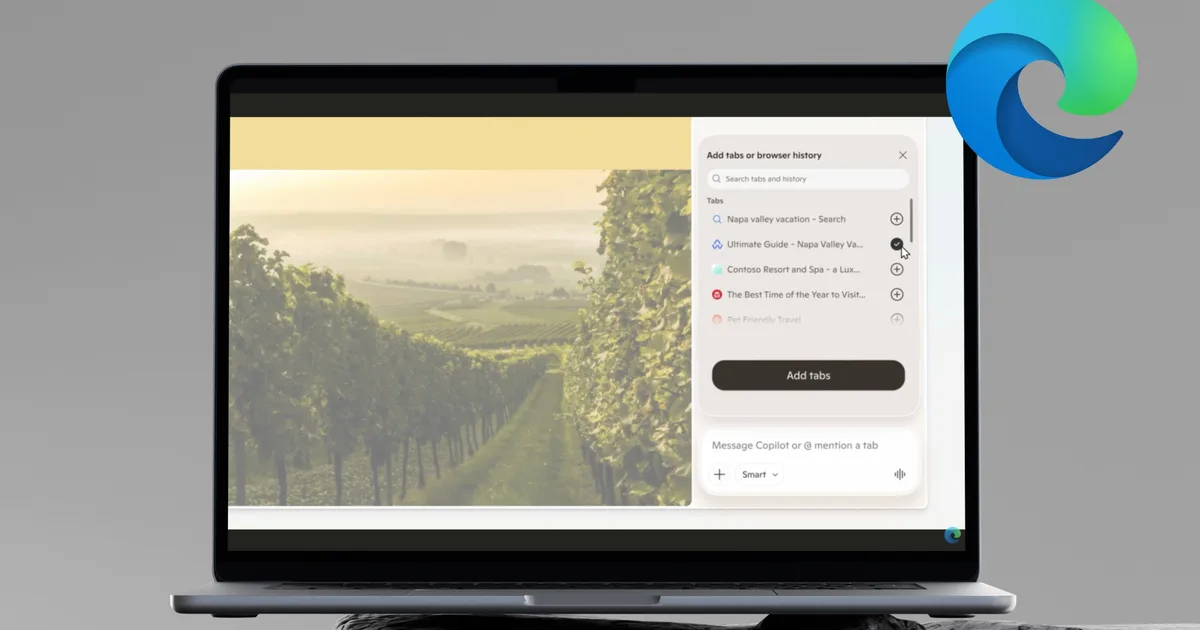

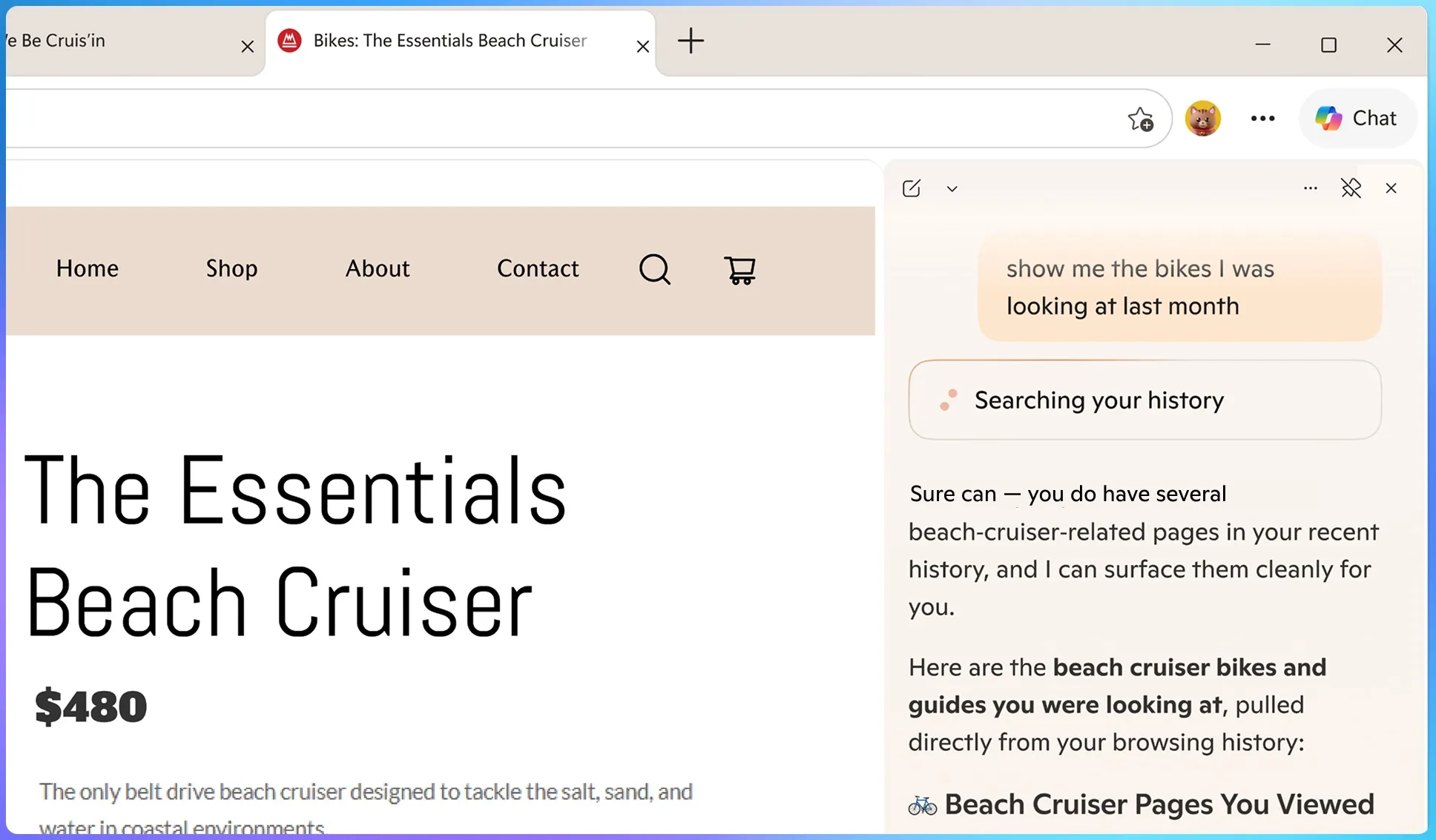

The standout upgrade is Copilot multi-tab reasoning, effectively turning Edge into a tab comparison tool that understands your full workspace. Instead of manually bouncing among 15 open pages, you can ask Copilot to compare what’s already in front of you: hotels, smart TVs, research papers, SaaS products, or competing news coverage. Edge tab intelligence lets Copilot read across all open tabs, extract key details, and present a consolidated summary or side‑by‑side breakdown on demand. For example, a user can ask, “Compare the hotel bookings across my open tabs,” and get a structured list of prices, amenities, and cancellation policies without clicking through each site. With permission, Copilot can also incorporate related browsing history and past chats, reconnecting research you did days earlier with the tabs you just opened. The result is a browser that reasons over your session, not just a single page.

Browsing Memory, Journeys, and Study Tools for Different User Types

Beyond tab comparison, Edge’s new AI browser features add long‑term context and learning aids tailored to distinct workflows. Power researchers and knowledge workers gain from Copilot’s optional “long‑term memory,” which uses previous conversations and, with consent, browsing history to refine answers over time. Journeys groups related pages into project‑style timelines, making it easier to resume complex planning sessions for work, travel, or personal purchases. Students and lifelong learners get Study and Learn mode: Copilot can turn any article or textbook page into interactive quizzes when you ask, “Quiz me on this topic,” helping with exam prep or skill building. Casual users benefit too: writing assistance can polish emails or posts directly inside the browser, while summarization tools quickly condense long reads. Collectively, these capabilities position Edge less as a passive tab container and more as an active organizer of your online research and learning.

Vision, Voice, and Cross‑Device AI Browsing on Mobile

Microsoft is bringing many of these desktop innovations to mobile, aiming for seamless cross‑device AI browsing. On phones and tablets, Copilot in Edge can now reason across open mobile tabs with user permission, offering the same multi‑tab reasoning and tab comparison experience on a smaller screen. Mobile Edge adds Vision and Voice, so you can share your screen directly with Copilot, speak your questions, and get answers back without typing a word. This real‑time, voice‑driven assistance resembles other conversational AI experiences but is anchored in what you're actually viewing in the browser. Journeys is also expanding to mobile, letting you pick up desktop research on your phone after you leave your desk. Visual activity cues show when assistant tools are active, providing transparency as AI features become more deeply embedded. For on‑the‑go users, Edge effectively becomes a voice‑enabled research partner in your pocket.

From Tabs to Podcasts: Edge as a Personal Research Broadcaster

One of the more novel additions turns static pages into audio companions. Microsoft is introducing an AI feature that can generate podcast‑style audio from web content you’re browsing. Combined with Edge tab intelligence, this means you can research a topic across multiple tabs, ask Copilot for a synthesized summary, and then have that overview rendered as an audio episode you can listen to while commuting, exercising, or multitasking. For busy professionals, this tab‑to‑podcast flow transforms long reports or product comparisons into digestible briefings. Students can review study summaries away from their screens, reinforcing material covered in Study and Learn sessions. Creators and analysts might even use the feature as a rapid way to audit their own research notes. Together with multi‑tab reasoning, browsing memory, and mobile Vision and Voice, it pushes Edge beyond traditional browsing into a personalized, cross‑modal research environment.