How RAG Pipelines Turn Documents into Invisible Risk

Retrieval-augmented generation (RAG) pipelines ingest internal content, split it into chunks and convert each chunk into an embedding stored in a vector database. This enables semantic search and more accurate, context-aware responses, as described in IBM’s content-aware storage work, where document vectorization is embedded directly into the storage layer to support large-scale RAG workflows. What looks like a performance win is also a new compliance surface. Sensitive contracts, medical records or policy documents stop being files that legal can track and instead become millions of opaque vectors spread across infrastructure. Engineering teams often treat these vector stores as plumbing rather than as regulated data repositories, while legal may not even know they exist. The result is a growing gap between what the enterprise believes it has under governance and what actually feeds its AI systems, creating discovery blind spots and unclear accountability.
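To make the fan-out concrete, here is a minimal Python sketch of the chunk-and-embed step. It is illustrative only: `chunk_text`, `toy_embed` and `VectorRecord` are hypothetical names, the chunk sizes are arbitrary, and `toy_embed` is a hash-based stand-in rather than a real embedding model. The point is that, unless lineage is added deliberately, what reaches the vector store carries nothing that identifies the source file.

```python
import hashlib
from dataclasses import dataclass


@dataclass(frozen=True)
class VectorRecord:
    # Only the vector is stored here: no file name, custodian or
    # classification travels with it unless the pipeline adds one.
    vector: tuple[float, ...]


def chunk_text(text: str, size: int = 40, overlap: int = 10) -> list[str]:
    """Split text into fixed-size character windows that overlap."""
    if overlap >= size:
        raise ValueError("overlap must be smaller than size")
    step = size - overlap
    return [text[i:i + size] for i in range(0, len(text), step)]


def toy_embed(chunk: str, dims: int = 8) -> tuple[float, ...]:
    """Stand-in for an embedding model (assumption): hash bytes scaled to [0, 1]."""
    digest = hashlib.sha256(chunk.encode("utf-8")).digest()
    return tuple(b / 255 for b in digest[:dims])


contract = "Supplier shall indemnify Customer for all losses arising from breach. " * 3
chunks = chunk_text(contract)
records = [VectorRecord(toy_embed(c)) for c in chunks]
print(f"1 document -> {len(chunks)} chunks -> {len(records)} vectors")
```

Real pipelines typically chunk by tokens or document structure and call an actual embedding model, but the governance problem is the same: one tracked document becomes many untracked records.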

The Hidden RAG Compliance Risks in Vector Databases

RAG compliance risks emerge because embeddings preserve meaning from sensitive text without looking like traditional documents. Industry practitioners report security reviews failing solely because a vector database lacked native audit logging, and regulated deployments being delayed for months because compliance teams were brought in late in the process. Regulators are signaling that organizations using AI must show where content came from, how it was retrieved and how it influenced outputs. Yet once documents enter a RAG pipeline, they are broken into thousands of embeddings that don’t map cleanly back to files, pages or custodians. Legal concepts like document holds and chain of custody have no straightforward equivalents in many current RAG stacks. Without explicit ownership, vector databases can accumulate shadow copies, untracked regulated data and retention practices that bear no relation to official records policies, exposing enterprises to discovery failures and regulatory scrutiny.

Accuracy, Governance and the Enterprise RAG Reality Check

Technical limitations in enterprise RAG compound compliance exposure. Allganize’s RARE framework shows that RAG systems performing well on clean benchmarks can collapse when faced with real-world corporate data, where documents are highly redundant and overlapping. A model achieving 77.9% accuracy in a wiki-style setting dropped to 8.5% on finance data and 5.0% on legal data, underscoring how fragile retrieval quality can be in practice. Poor retrieval means the system may surface the wrong policy version, an outdated contract clause or irrelevant guidance, all of which can be problematic in regulated contexts. From an AI data governance perspective, this undermines both accuracy and defensibility: it is harder to prove you applied the right source, and harder to explain why an answer was produced. Weak governance and control around RAG data pipelines therefore become not just an engineering issue, but a material enterprise AI security and compliance concern.

What a Basic Vector Database Audit Should Cover

A vector database audit is the starting point for bringing RAG data pipelines under governance. First, map data lineage: for each embedding, track its source system, document ID, version, chunking logic and embedding model used. Next, enforce access controls that treat the vector store as a system of record, not just a search index, aligning roles and permissions with those on the underlying content. Logging is critical: capture who queried what, which vectors were retrieved, and which documents were presented to the model. Deletion and retention workflows must ensure that removing or expiring a source document cascades to its embeddings and any derived indexes. Finally, validate that RAG outputs can be tied back to specific retrieval events and source artifacts. This level of traceability supports both regulatory expectations and internal e-discovery needs, turning opaque embeddings into auditable, governed assets.
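The audit items above can be sketched as a small in-memory store. This is a hedged illustration, not a reference design or any vendor's API: `Lineage`, `Entry`, `GovernedStore`, the role names and the `example-embedder-v1` label are all hypothetical, and a plain dot product stands in for whatever similarity search a real vector database performs. It shows lineage attached to every vector, role-filtered retrieval, an audit log of who retrieved what, and deletion that cascades from a source document to its embeddings.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass(frozen=True)
class Lineage:
    source_system: str
    document_id: str
    version: str
    chunker: str          # description of the chunking logic used
    embedding_model: str  # hypothetical model label


@dataclass
class Entry:
    vector: list[float]
    lineage: Lineage
    allowed_roles: frozenset[str]  # should mirror permissions on the source


@dataclass
class GovernedStore:
    entries: dict[str, Entry] = field(default_factory=dict)
    audit_log: list[dict] = field(default_factory=list)

    def _log(self, event: str, **details) -> None:
        stamp = datetime.now(timezone.utc).isoformat()
        self.audit_log.append({"at": stamp, "event": event, **details})

    def add(self, vector_id: str, entry: Entry) -> None:
        self.entries[vector_id] = entry
        self._log("add", vector_id=vector_id,
                  document_id=entry.lineage.document_id)

    def query(self, user: str, role: str, query_vector: list[float],
              top_k: int = 3) -> list[str]:
        # Access control first: only entries this role may see are ranked.
        visible = [(vid, e) for vid, e in self.entries.items()
                   if role in e.allowed_roles]

        def score(pair: tuple[str, Entry]) -> float:
            return sum(q * v for q, v in zip(query_vector, pair[1].vector))

        ranked = sorted(visible, key=score, reverse=True)[:top_k]
        hits = [vid for vid, _ in ranked]
        docs = sorted({e.lineage.document_id for _, e in ranked})
        # Record who asked, which vectors came back, and their source documents.
        self._log("query", user=user, role=role,
                  vector_ids=hits, document_ids=docs)
        return hits

    def delete_document(self, document_id: str) -> int:
        # Cascade: removing a source document removes all of its embeddings.
        doomed = [vid for vid, e in self.entries.items()
                  if e.lineage.document_id == document_id]
        for vid in doomed:
            del self.entries[vid]
        self._log("delete_document", document_id=document_id, removed=doomed)
        return len(doomed)


store = GovernedStore()
contract = Lineage("dms", "contract-42", "v3", "fixed-512", "example-embedder-v1")
handbook = Lineage("wiki", "handbook-1", "v1", "fixed-512", "example-embedder-v1")
store.add("c42-0", Entry([1.0, 0.0], contract, frozenset({"legal"})))
store.add("c42-1", Entry([0.0, 1.0], contract, frozenset({"legal"})))
store.add("hb-0", Entry([0.7, 0.7], handbook, frozenset({"legal", "staff"})))

staff_hits = store.query("alice", "staff", [1.0, 0.0])
legal_hits = store.query("bob", "legal", [1.0, 0.0], top_k=2)
removed = store.delete_document("contract-42")
print(staff_hits, legal_hits, removed, len(store.audit_log))
```

In a production system the log would go to tamper-evident storage and the cascade would also need to cover caches, replicas and any derived indexes. The sketch only shows that each audit question (where did this vector come from, who retrieved it, is it gone) needs a field or an event to answer it.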

A Pragmatic Checklist for CIOs and the Future of RAG Storage

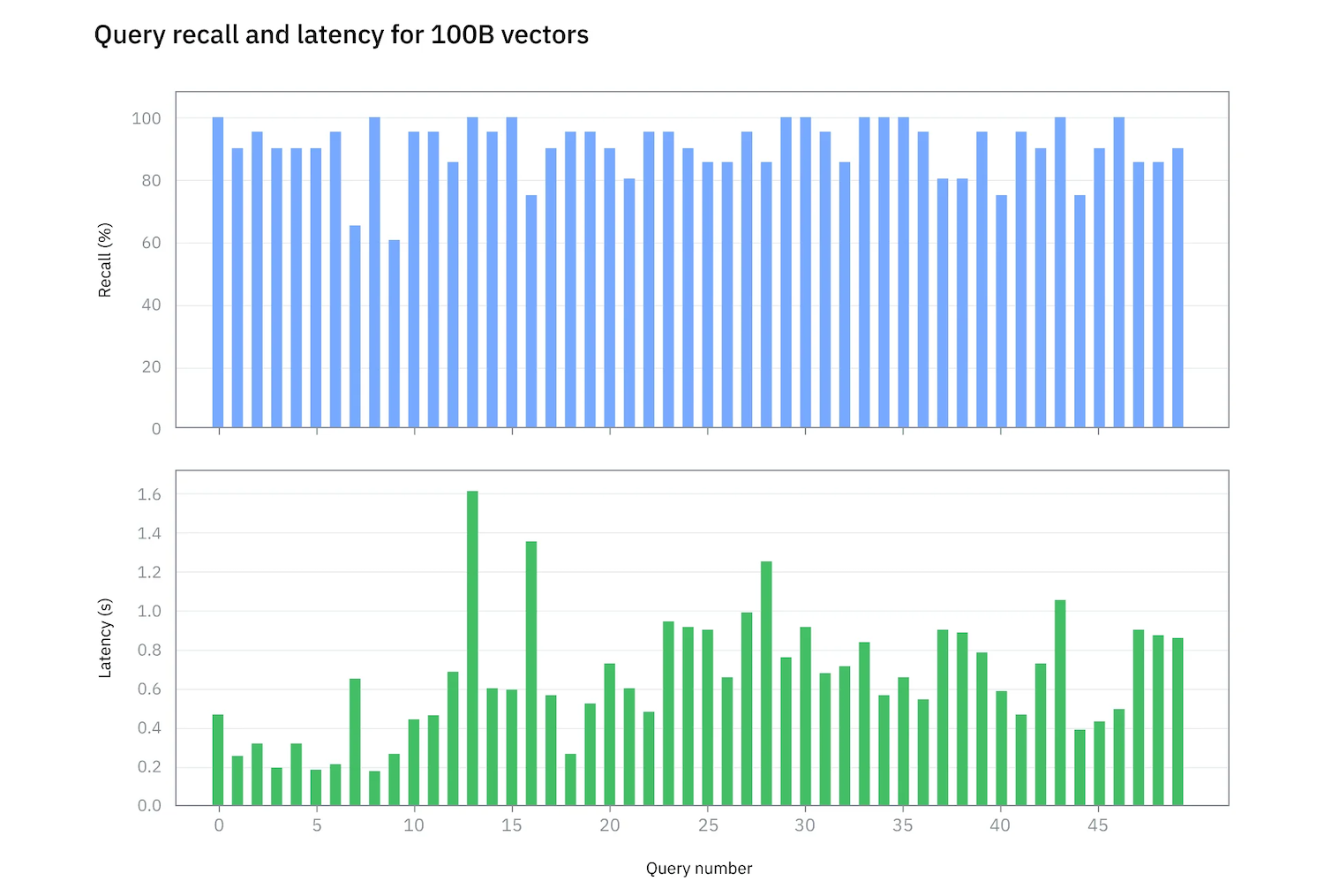

CIOs and heads of data/IT should formalize ownership of RAG systems and integrate them into AI data governance. Start with a vector database audit and update policies to explicitly classify embeddings and vector stores as regulated data where applicable. Establish a cross-functional council spanning legal, information governance, security and AI engineering to review new RAG use cases. Vendor due diligence should ask about audit logging, access control integration, data residency, deletion guarantees and the ability to reconstruct retrieval trails for investigations. Looking ahead, emerging content-aware storage architectures embed vectorization and indexing directly in the storage layer, reducing data movement and potentially simplifying governance by keeping processing close to the source corpus. IBM’s work with large-scale vector databases illustrates how future infrastructure may combine performance with richer control surfaces, helping enterprises better contain, observe and regulate the RAG workloads that now underpin their AI initiatives.