Gemini in Chrome on Android: From Search Tool to Agentic Web Assistant

Google is turning Chrome on Android into more than a browser. Built on the Gemini 3.1 model, the new integration brings contextual AI directly into the mobile experience, positioning Chrome as a proactive web assistant rather than a passive page viewer. Tap the Gemini icon in the toolbar and a panel slides up, ready to interpret whatever is on the screen. Instead of juggling apps, copying text, or switching tabs, users can ask Gemini to summarize articles, clarify complex explanations, or answer questions tied to the current page. It goes beyond basic Q&A: Gemini in Chrome on Android also connects with Google services such as Calendar, Keep, and Gmail, so it can act on what you are reading rather than only describe it. The result is a browser that understands content in context and begins to function like a personal assistant embedded in everyday browsing.

How Auto Browse Brings Agentic AI to Chrome on Android

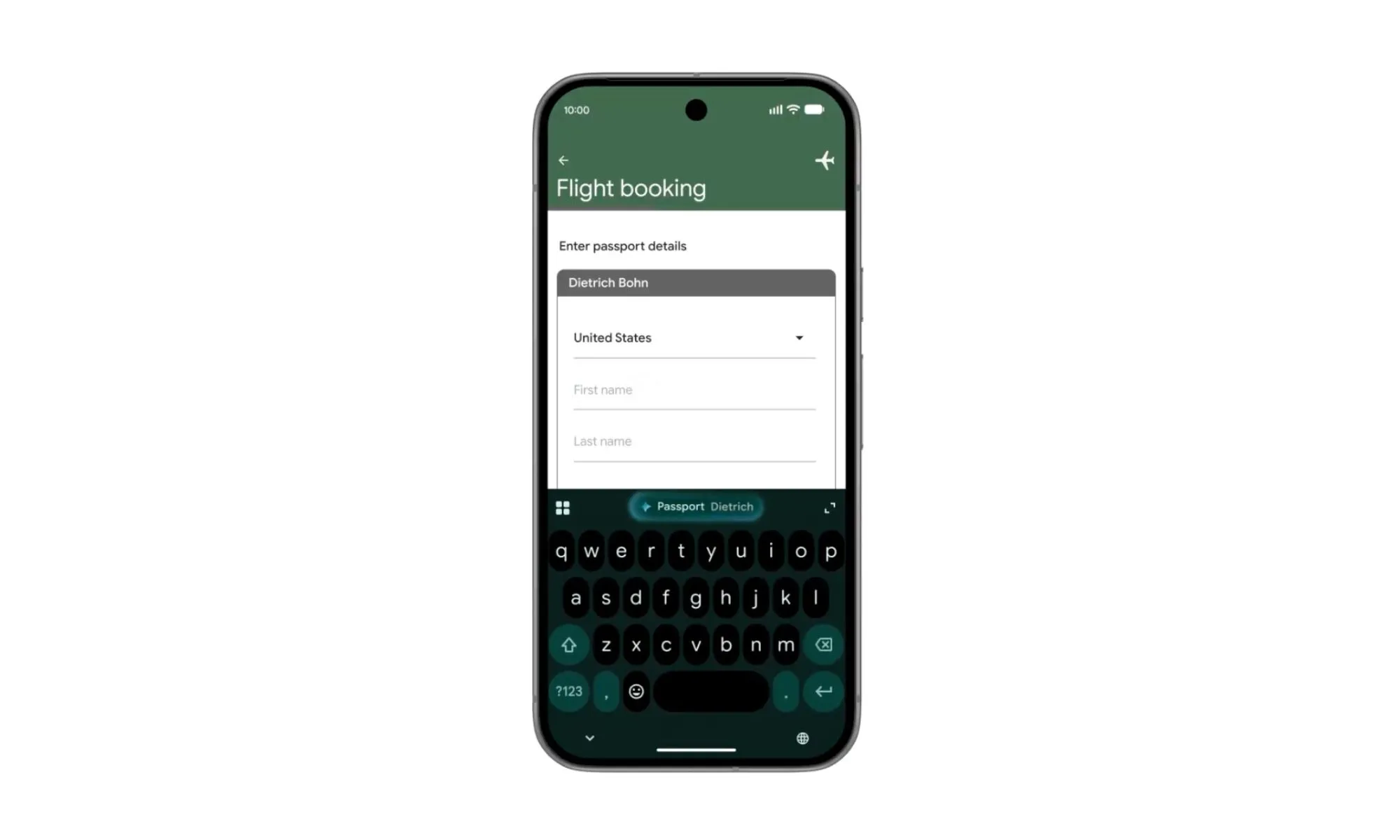

Auto browse is the feature that moves Gemini from helpful companion to agent in Chrome on Android. Instead of manually clicking through links and forms, you describe an objective, such as finding parking for an upcoming event, and Gemini handles the legwork. The system can read details from your ticket confirmation, open relevant pages, and collect the key information you need, all in the background. In other words, auto browse works from goals rather than individual queries. Google still requires you to manually approve sensitive actions, such as making purchases or using saved passwords, which keeps you in control. Auto browse offloads repetitive, tedious steps while preserving those guardrails, so the browser plans and executes tasks instead of only fetching pages.
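To make the approval guardrail concrete, here is a minimal Python sketch of the general pattern: an agent works through a plan and pauses for confirmation before any sensitive step. This is an illustration only, not Google's implementation. The names `Step`, `SENSITIVE_KINDS`, `run_plan`, and `approve` are hypothetical, and the steps are simulated rather than executed in a real browser.

```python
from dataclasses import dataclass
from typing import Callable, List

# Hypothetical action categories. The article names purchases and saved
# passwords as examples of actions that need manual approval.
SENSITIVE_KINDS = {"purchase", "use_saved_password"}


@dataclass
class Step:
    kind: str         # e.g. "read_page", "open_page", "purchase"
    description: str  # human-readable summary shown to the user


def run_plan(steps: List[Step], approve: Callable[[Step], bool]) -> List[str]:
    """Walk the plan in order, asking `approve` before any sensitive step."""
    log = []
    for step in steps:
        if step.kind in SENSITIVE_KINDS and not approve(step):
            # The user declined, so the step is skipped, not executed.
            log.append(f"skipped: {step.description}")
            continue
        # A real agent would act in the browser here; this sketch only logs.
        log.append(f"done: {step.description}")
    return log


plan = [
    Step("read_page", "read event details from ticket confirmation"),
    Step("open_page", "open parking listings near the venue"),
    Step("purchase", "reserve a parking spot"),
]

# Simulate a user who declines every sensitive action.
result = run_plan(plan, approve=lambda step: False)
```

With this plan, the two read-only steps run without interruption, while the reservation is skipped unless the `approve` callback returns `True`.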

From Desktop to Mobile: How the Rollout Changes Everyday Browsing

Auto browse first appeared in Chrome on desktop as a preview, and it is now making its way to Android, bringing a desktop-grade productivity tool to phones. The rollout starts at the end of June for select devices running Android 12 or higher and is initially limited to Google AI Pro and Ultra subscribers. That staged release mirrors how the feature debuted on desktop, but the move to mobile is arguably more transformative. On phones, users are more likely to multitask on the go and rely on their browser as a hub for tickets, directions, and bookings. By bringing the same agentic capabilities to Android, Gemini in Chrome narrows the gap between desktop and mobile workflows. Chrome begins to feel consistent across devices, with a shared AI layer that can understand context, automate steps, and maintain continuity as you move between screens.

Real-World Use Cases: Parking, Planning, and Personal Productivity

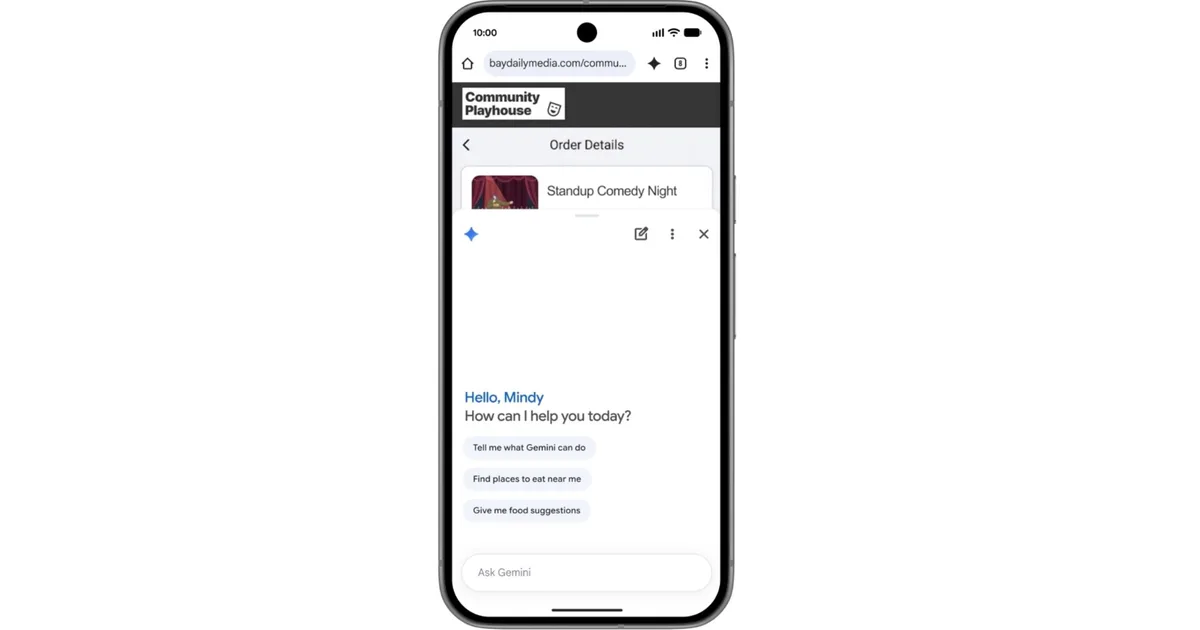

The most compelling part of auto browse is how it simplifies everyday web errands. Imagine you’re heading to a comedy show: instead of manually searching for nearby parking, opening multiple tabs, and cross-checking times, you can share your event details with Gemini and let it find parking options automatically. The same pattern applies to other scenarios, such as planning a visit to a venue, gathering logistics like opening hours or access information, or pulling together travel details scattered across emails and sites. Beyond auto browse, Gemini in Chrome can add events to your calendar, drop recipe ingredients into Keep, or surface specific information from Gmail based on what you are reading. Individually these automations are small, but together they trim friction from mobile browsing and let users focus on decisions rather than the mechanics of getting there.

Why Chrome’s Agentic Future Matters for the Browser

By embedding Gemini deeply into Chrome, Google is redefining what a browser does. Instead of being a neutral window to the web, Chrome is evolving into an active participant in how you think, plan, and act online. Auto browse, context-aware summaries, and cross-app actions together push the browser toward an agentic model, where it can interpret goals and execute multi-step workflows. This shift also raises questions of safety and trust, so Google emphasizes protections against issues like prompt injection and requires user confirmation for sensitive operations. If successful, Chrome’s new AI capabilities could set expectations for browsers as true assistants, not just search front ends. For users, that means a future where describing an outcome, rather than performing each click, becomes the primary way to interact with the web on both desktop and mobile.