Why Memory Has Become AI’s Hidden Constraint

As AI models grow larger and more complex, memory has overtaken raw compute as the primary scaling bottleneck. Training and inference pipelines now span racks of GPUs and CPUs, but their effectiveness is constrained by memory capacity, bandwidth, and power budgets. Fast tiers like HBM and DRAM deliver sub-microsecond latency, yet their limited capacity and high cost force architects to trade speed against scale. At the other extreme, traditional storage systems offer virtually limitless capacity but operate at millisecond latencies, too slow for real-time inference and long-context reasoning. The gap shows up as underutilized GPUs, elevated latency, and spiraling energy consumption. The result is a growing focus on AI memory infrastructure as a first-class design problem: how to expand effective memory capacity, raise DDR5 RDIMM capacity and bandwidth, and scale memory efficiency without exceeding power envelopes or data center footprint.

Compute Express Link and the Rise of Disaggregated Memory

Compute Express Link (CXL) is emerging as a foundational technology for breaking the tight coupling between CPUs, GPUs, and local DRAM. New “memory godboxes” pool DRAM into shared appliances that multiple servers can access over a CXL fabric, treating memory as a fungible resource rather than a fixed per-node asset. Earlier CXL specifications enabled simple memory expansion and pooling, but next-generation CXL 3.0 adds larger topologies and memory sharing, allowing multiple machines to operate on common datasets. Built on PCIe 6.0, CXL 3.0 can deliver up to 16 GB/s of bidirectional bandwidth per lane, potentially adding hundreds of GB/s of aggregate memory bandwidth per CPU. Latency remains higher than directly attached DDR5, roughly comparable to a NUMA hop, but the ability to dynamically allocate and share memory capacity across nodes directly targets AI memory infrastructure constraints in large-scale training and inference clusters.
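The per-lane figure above follows from the PCIe 6.0 signaling rate. As a rough sketch, assuming raw link rates with no allowance for encoding or protocol overhead (the function names are illustrative, not part of any CXL tooling), the arithmetic looks like this:

```python
# Back-of-the-envelope CXL 3.0 link bandwidth.
# Assumption: raw PCIe 6.0 rates only; real links lose some bandwidth
# to encoding and protocol overhead, so treat these as upper bounds.

PCIE6_GT_PER_S = 64  # PCIe 6.0 raw rate per lane, in gigatransfers per second


def raw_lane_gb_per_s(gt_per_s: float = PCIE6_GT_PER_S) -> float:
    """Raw bandwidth of one lane in one direction, in GB/s (8 bits per byte)."""
    return gt_per_s / 8


def link_gb_per_s(lanes: int, bidirectional: bool = True) -> float:
    """Raw bandwidth of a link with the given lane count, in GB/s."""
    per_direction = raw_lane_gb_per_s() * lanes
    return per_direction * 2 if bidirectional else per_direction


if __name__ == "__main__":
    print(link_gb_per_s(1))                         # 16.0
    print(link_gb_per_s(16))                        # 256.0
    print(link_gb_per_s(16, bidirectional=False))   # 128.0
```

On these assumptions a single x16 link tops out at about 256 GB/s in both directions combined, which is where the "hundreds of GB/s per CPU" estimate comes from once a processor exposes more than one such link.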

Micron’s 256GB DDR5 RDIMMs Push Capacity and Power Efficiency

On the server node itself, Micron is attacking DDR5 RDIMM capacity and power constraints with new 256GB modules built on its 1-gamma (1γ) DRAM process. These registered DIMMs combine high-density DRAM with 3D stacking and through-silicon via (TSV) packaging to reach transfer rates of up to 9,200 megatransfers per second (MT/s), more than 40 percent faster than the memory shipping in volume today. Crucially, they also improve energy efficiency: replacing two 128GB RDIMMs with a single 256GB RDIMM can cut operating power by more than 40 percent. For AI servers packed with CPU cores and accelerators, this matches the push for higher bandwidth and capacity within tight thermal envelopes. By raising per-socket memory ceilings while reducing power draw, such modules help AI infrastructure scale without a proportional increase in energy and cooling demands, easing a central AI inference bottleneck from within the traditional DDR5 memory hierarchy.
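To put the transfer rate in perspective, the sketch below converts it into theoretical peak bandwidth per module and into a percentage uplift. It assumes the quoted rate corresponds to 9,200 MT/s, a 64-bit data path per DIMM (ECC bits excluded), and a 6,400 MT/s baseline for today's high-volume parts; the baseline is an assumption, not a figure from the announcement, and the function names are illustrative.

```python
# Rough DDR5 RDIMM bandwidth arithmetic (theoretical peak, not sustained).
# Assumptions: 64 data bits per DIMM, 6,400 MT/s as the comparison baseline.

DATA_BUS_BITS = 64      # data width of one DDR5 DIMM, ECC bits excluded
BASELINE_MT_S = 6400    # assumed speed of current high-volume modules
NEW_MT_S = 9200         # speed assumed for the new 256GB modules


def peak_gb_per_s(mega_transfers_per_s: float, bus_bits: int = DATA_BUS_BITS) -> float:
    """Theoretical peak bandwidth of one module, in GB/s."""
    bytes_per_transfer = bus_bits / 8
    return mega_transfers_per_s * 1e6 * bytes_per_transfer / 1e9


def uplift(new_mt_s: float, baseline_mt_s: float) -> float:
    """Fractional speed increase of new over baseline (0.25 means 25 percent)."""
    return new_mt_s / baseline_mt_s - 1.0


if __name__ == "__main__":
    print(f"peak per module: {peak_gb_per_s(NEW_MT_S):.1f} GB/s")
    print(f"uplift vs baseline: {uplift(NEW_MT_S, BASELINE_MT_S):.1%}")
```

Under these assumptions a single module peaks at about 73.6 GB/s, and the step from 6,400 to 9,200 MT/s is roughly a 44 percent increase, consistent with the "more than 40 percent" claim.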

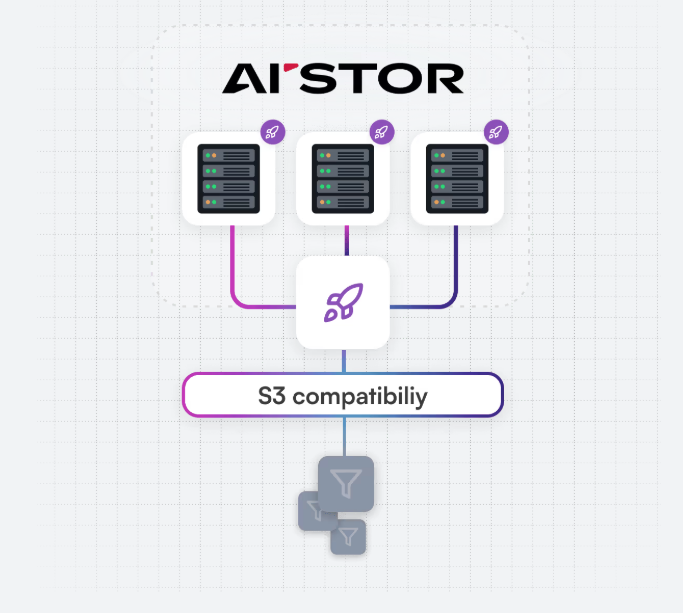

MemKV: A Petabyte-Scale Context Store for Inference

Beyond hardware, software-defined memory tiers are emerging to tackle inference-specific bottlenecks. MinIO’s MemKV is a shared context memory store designed for AI inference at petabyte scale. In current architectures, GPU-adjacent memories like HBM and DRAM frequently run out of space, forcing models to recompute context across multi-step tasks. MinIO describes this as a “recompute tax” that compounds in hyperscale environments, wasting compute and energy while depressing GPU utilization. MemKV provides a persistent, shared memory layer with microsecond retrieval times, allowing clusters of GPUs to reuse context instead of regenerating it. In internal deployments with 128 GPUs and 128K-token context windows, this approach reportedly raised GPU utilization from around 50 percent to over 90 percent while also improving time-to-first-token. By bridging the gap between fast but small memory and slow but large storage, MemKV directly targets the AI inference bottleneck created by context retention at scale.
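The idea of reusing context rather than recomputing it can be illustrated with a toy cache. The sketch below is purely hypothetical: `ContextStore`, `get_or_compute`, and `fake_prefill` are invented for illustration and do not reflect MemKV's actual API or design. A real system would share the store across nodes and hold model state, not a list of floats.

```python
import hashlib
from typing import Callable, Dict, List


class ContextStore:
    """Toy in-process stand-in for a shared context tier (hypothetical)."""

    def __init__(self) -> None:
        self._entries: Dict[str, List[float]] = {}
        self.hits = 0
        self.misses = 0

    @staticmethod
    def key_for(tokens: List[int]) -> str:
        """Derive a stable key from the token sequence."""
        raw = ",".join(map(str, tokens)).encode()
        return hashlib.sha256(raw).hexdigest()

    def get_or_compute(
        self, tokens: List[int], compute: Callable[[List[int]], List[float]]
    ) -> List[float]:
        """Return stored context state, computing and storing it on a miss."""
        key = self.key_for(tokens)
        if key in self._entries:
            self.hits += 1
            return self._entries[key]
        self.misses += 1  # this is the "recompute tax" being paid
        state = compute(tokens)
        self._entries[key] = state
        return state


def fake_prefill(tokens: List[int]) -> List[float]:
    """Placeholder for the expensive pass that builds context state."""
    return [t * 0.5 for t in tokens]


if __name__ == "__main__":
    store = ContextStore()
    prompt = list(range(8))
    store.get_or_compute(prompt, fake_prefill)  # first request: computed
    store.get_or_compute(prompt, fake_prefill)  # second request: reused
    print(store.hits, store.misses)             # 1 1
```

Every miss stands for GPU time spent rebuilding state that already existed somewhere; a larger, shared store turns more of those misses into hits, which is the mechanism behind the utilization gains reported above.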

Converging Paths to Memory Efficiency Scaling for AI

Taken together, CXL-based memory appliances, high-capacity DDR5 RDIMMs, and specialized inference memory stores like MemKV converge on the same fundamental problem: memory limitations. CXL disaggregation turns memory into a shareable, fabric-attached resource, allowing capacity to be dynamically allocated across compute nodes. Micron’s 256GB DDR5 RDIMMs push node-level limits, improving both density and power efficiency in AI-focused servers. Meanwhile, MemKV operates at the software level, restructuring how AI systems maintain and reuse context across large GPU clusters. Each approach works at a different layer of the stack, yet all aim to improve AI memory infrastructure by expanding usable capacity, boosting bandwidth, and managing power. As AI workloads grow in scale and complexity, scaling memory efficiency, rather than simply adding more GPUs, appears increasingly central to unlocking the next generation of AI deployment.