AI Tab Organization in Safari: From Manual Groups to Self-Managing Browsing

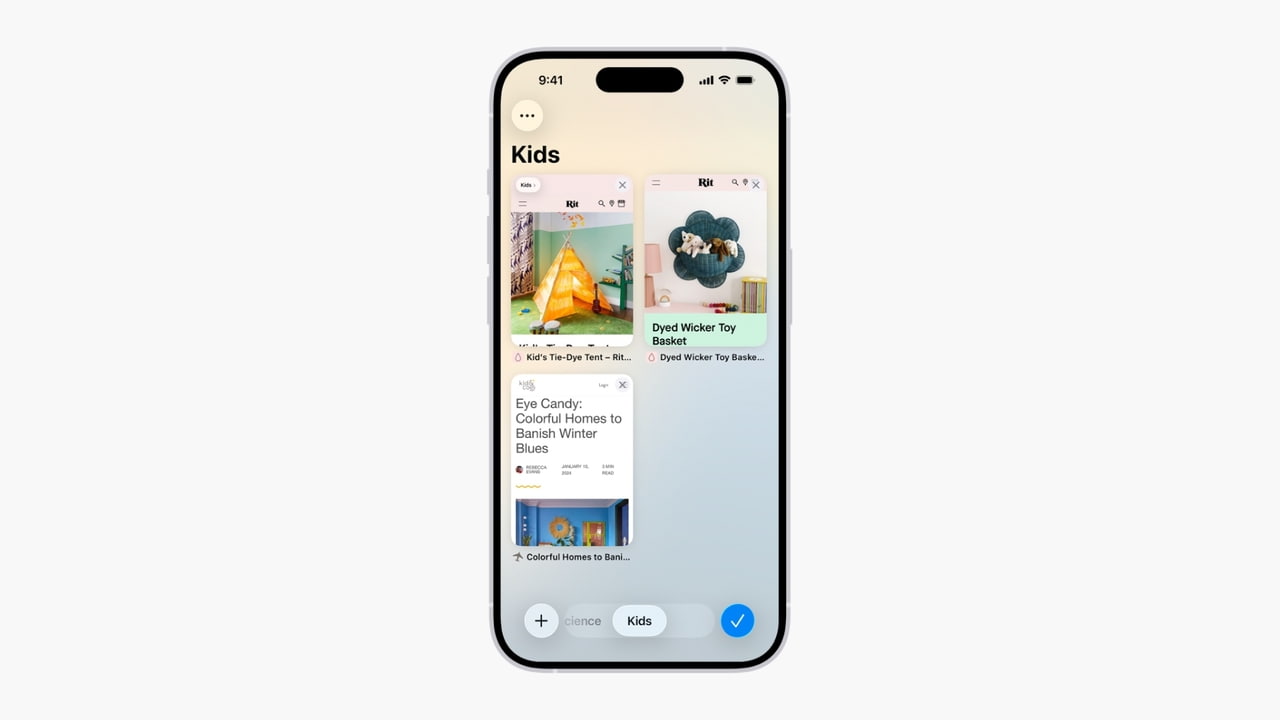

Safari’s upcoming AI tab organization hints at how deeply Apple plans to embed intelligence into routine browsing. Today’s Tab Groups require users to manually separate work, travel, or personal sessions. Building on them, internal builds of iOS 27, iPadOS 27, and macOS 27 include an “Organize Tabs” option. When enabled, Safari automatically sorts open tabs into topic-based collections, using machine learning to interpret page content and user behavior in real time. Instead of dragging individual tabs into groups, users can let the system continuously cluster related research, shopping, or entertainment pages. Although Apple is not yet explicitly labeling this as part of Apple Intelligence, the feature reflects a strategic move toward ambient AI that quietly reduces friction. It also brings Safari in line with competing browsers that already offer smart grouping, while integrating more tightly with Apple’s broader interface and sync ecosystem.

Siri’s Chatbot-Style Redesign and the Rise of the Standalone Assistant

In iOS 27, Siri shifts from a transient voice layer into a persistent, chatbot-style assistant that lives across the system. The redesign centers on conversational interaction: users will see a transparent results card and can swipe down to enter a text-style thread that resembles a messaging conversation. Within this view, Siri can surface in-line mini app cards for weather, calendar events, or notes, turning responses into interactive dashboards rather than static answers. Apple is also preparing a standalone Siri app, which organizes past exchanges into tall rounded cards and provides a dedicated search bar for revisiting older queries. A clean “Ask Siri” prompt field supports text, voice, document uploads, and images, signaling a more multimodal future. Together, these changes recast Siri as an always-on agent that remembers context, manages history, and feels more like a full-fledged productivity tool than a simple voice shortcut.

Dynamic Island as an AI Hub for Search, Notifications, and Quick Actions

Dynamic Island, originally introduced as a visual status area, is evolving into a central hub for iOS 27 AI features. When users invoke Siri with a wake word or the power button, a large pill-shaped animation expands at the top of the display, anchoring the assistant to the Dynamic Island. Swiping down from the top reveals a reimagined system search labeled “Search or Ask,” housed directly inside this space. From here, users can type or speak queries, then choose whether to route them through Siri or third-party AI services such as ChatGPT or Gemini. Results appear as a transparent card that can be pulled into full chatbot mode for richer dialogue. This design positions Dynamic Island as the default surface for AI-powered notifications, summaries, and quick actions, reducing the need to jump between apps while keeping the assistant visually subtle yet constantly available.

Apple’s AI Strategy: System-Level Intelligence Over Standalone Apps

Taken together, AI tab organization in Safari and the Siri redesign sketch a clear strategic direction for Apple. Rather than pushing users toward separate AI apps, the company is threading intelligence into the OS layers people already touch dozens of times a day: browser tabs, search, notifications, and voice interactions. The new Siri experience blends open web search with detailed answers, bulleted summaries, and large image results, while remaining tightly bound to personal data and app actions. At the same time, Apple is developing a framework that allows third-party AI assistants, such as Google Gemini or Anthropic’s Claude, to plug into system features. This dual approach, native intelligence plus curated external options, lets Apple maintain control over the interface while giving users flexibility in how they access generative AI. iOS 27, iPadOS 27, and macOS 27 are poised to make everyday interactions quietly, contextually smart.