The Character Drift Problem in Modern AI Art

Most text-to-image models are optimized to nail one beautiful image at a time, not to remember who they just drew. Each prompt is treated as a clean slate, so when you ask for a recurring hero like Captain Vex in different scenes, the system reinterprets the description instead of recalling a specific face. This leads to character drift: hair color subtly changes, jawlines reshape, scars disappear, and iconic jackets swap palettes halfway through a story. For one-off posters or experimental concepts, that inconsistency is tolerable. But for comics, children’s picture books, storyboards, or branded spokescharacters, it becomes a deal-breaker because readers instantly spot continuity errors. Many creators resort to manual inpainting and compositing just to keep a protagonist’s face stable, which shows that general-purpose AI tools treat character identity as disposable style noise rather than as a durable asset.

Why Consistent Character Creation Matters for Storytelling

AI character consistency is not a minor aesthetic nitpick; it underpins how audiences recognize and emotionally track a story. In comics and webtoons, a slightly different face can make it feel like a new cast member has appeared in every panel. In children’s books, kids latch onto tiny details—like a specific eye shape or a familiar jacket—and notice when those shift from page to page. Branded content adds another layer: marketing teams need a recurring mascot or spokesperson whose face stays locked across social posts, ads, and landing pages. Without reliable tools, creators burn time patching frames instead of refining narratives or layouts. A robust cartoon character generator or portrait engine must therefore do more than follow prompts; it has to preserve identity traits—eye geometry, brow arch, hair parting, distinctive marks—through wardrobe changes, new environments, and evolving story moments.

How General Tools Handle Consistency—and Where They Break

Mainstream generators excel at single portraits but falter when asked to maintain the same character across iterations. Systems like Midjourney introduced reference workflows, where you upload one or more images and treat them as anchors so the model can reuse that visual DNA in new scenes. This improves consistent character creation for painterly and semi-realistic styles, making it attractive for stylized comics and graphic novels. However, as you push toward flat 2D cartoon styles, chibi, or modern animation looks, the lock weakens. Proportions creep, facial features soften toward dataset averages, and recognizable markings vanish. Other platforms blend character reference tools with model fine-tuning, offering pre-built consistent character models or letting users train their own. These pipelines deliver solid but not flawless stability, and drift tends to resurface as a project stretches beyond a few dozen images, a reminder that generic diffusion systems were not designed around identity permanence.

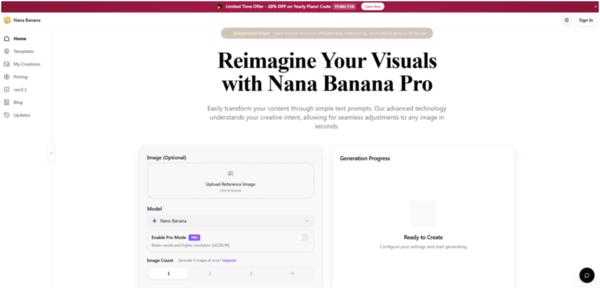

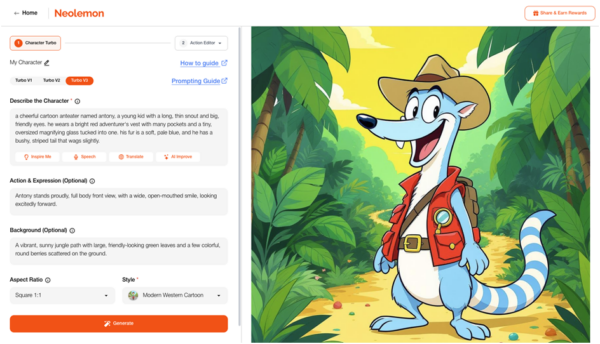

Dedicated Platforms and Character Databases Step In

A new wave of dedicated platforms is built around one goal: treating character identity as a first-class asset instead of a side effect of prompting. Rather than relying solely on ad hoc reference images, these tools construct structured character databases that store facial geometry, texture details, and style cues as reusable profiles. Generation engines that draw on these profiles can then render the same person in a bookstore, at a foggy train platform, or inside a café while preserving subtle traits like an asymmetrical eyebrow, a specific cupid’s bow, or a persistent beauty mark. Crucially, they attempt to keep hair parting, skin texture, and other high-frequency details intact as outfits, lighting, and environments shift. This database-driven approach turns recurring protagonists and brand mascots into stable entities that can be recalled on demand, reducing the need for manual cleanup and compositing in long-form projects.
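As a rough illustration of the database idea, the Python sketch below stores a character as a reusable profile and recalls it for different scenes. Every name in it (`CharacterProfile`, `CharacterDatabase`, `scene_request`) is hypothetical and does not correspond to any particular platform's API. A real system would presumably store learned embeddings rather than text labels.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class CharacterProfile:
    """Hypothetical identity record; frozen so it cannot be edited after creation."""
    name: str
    facial_geometry: tuple[str, ...]   # e.g. "asymmetrical eyebrow"
    texture_details: tuple[str, ...]   # e.g. "beauty mark on left cheek"
    style_cues: tuple[str, ...]        # e.g. "semi-realistic"


class CharacterDatabase:
    """Illustrative in-memory store keyed by character name."""

    def __init__(self) -> None:
        self._profiles: dict[str, CharacterProfile] = {}

    def register(self, profile: CharacterProfile) -> None:
        # Refuse silent overwrites so an existing identity is never replaced by accident.
        if profile.name in self._profiles:
            raise ValueError(f"profile already exists: {profile.name}")
        self._profiles[profile.name] = profile

    def recall(self, name: str) -> CharacterProfile:
        return self._profiles[name]

    def scene_request(self, name: str, setting: str) -> dict[str, object]:
        # The stored identity is reused as-is; only the setting varies per request.
        return {"identity": self.recall(name), "setting": setting}
```

In this sketch, requesting Captain Vex in a bookstore and then on a foggy train platform returns the same stored profile both times, and only the `setting` value differs between the two requests.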

From Parameter Locking to Style-Safe Identity Transfer

Emerging solutions combine two key techniques to combat the character drift problem: parameter locking and style-safe identity transfer. Parameter locking means the system fixes certain identity-related dimensions—eye shape, nose bridge, lip curvature, brow arch, and head proportions—while allowing clothing, pose, and background variables to change. This is often paired with reference-guided pipelines where multiple photos under varied lighting help the model disentangle what belongs to the face versus the scene. Style-safe identity transfer adds another layer, letting creators switch from realistic renders to line art, watercolor, or flat cartoon while preserving core facial geometry. For comic artists and children’s book illustrators, this makes it possible to move a protagonist through different artistic treatments—covers, interiors, marketing assets—without collapsing into a generic face. Together, these methods turn a once-fragile workflow into a repeatable pipeline for consistent character creation across complex, serialized visual projects.
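To make parameter locking concrete, here is a minimal Python sketch that assumes a generator's inputs can be expressed as named parameters. The `RenderParams` fields, the `LOCKED_FIELDS` set, and the `vary` helper are all hypothetical names chosen for illustration. Actual tools likely enforce the lock inside the model rather than on a settings object.

```python
from __future__ import annotations

from dataclasses import dataclass, replace

# Identity-related dimensions named in the text; these stay fixed across renders.
LOCKED_FIELDS = frozenset(
    {"eye_shape", "nose_bridge", "lip_curvature", "brow_arch", "head_proportions"}
)


@dataclass(frozen=True)
class RenderParams:
    """Hypothetical parameter set: five locked identity fields plus free scene fields."""
    eye_shape: str
    nose_bridge: str
    lip_curvature: str
    brow_arch: str
    head_proportions: str
    clothing: str
    pose: str
    background: str
    style: str = "realistic"


def vary(params: RenderParams, **changes: str) -> RenderParams:
    """Return a copy with scene or style changes applied, rejecting identity changes."""
    blocked = LOCKED_FIELDS.intersection(changes)
    if blocked:
        raise ValueError(f"locked identity parameters cannot change: {sorted(blocked)}")
    return replace(params, **changes)
```

Calling `vary(base, background="café", style="watercolor")` yields a new parameter set with the identity fields untouched, which mirrors the style-safe transfer described above. Calling `vary(base, eye_shape="round")` raises a `ValueError` instead.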