AI’s New Infrastructure Dilemma: Power, Control, and Independence

AI cloud infrastructure has become the main bottleneck for fast-growing model providers. To keep up with surging demand, companies need access to enormous compute capacity, yet they also face pressure to avoid over-dependence on any single hyperscale provider. At the same time, customers are increasingly vocal about data sovereignty, insisting that sensitive workloads stay under stricter regional and contractual controls. This mix of technical and political constraints is pushing AI vendors to seek alternative sources of compute capacity that still satisfy compliance and procurement requirements. The result is a new kind of arms race: not just for the most powerful models, but for flexible, multi-provider infrastructure footprints that can scale globally without locking customers or providers into one cloud. The recent moves by Anthropic and DeepL show how infrastructure choices are now strategic levers that shape product limits, customer trust, and competitive positioning.

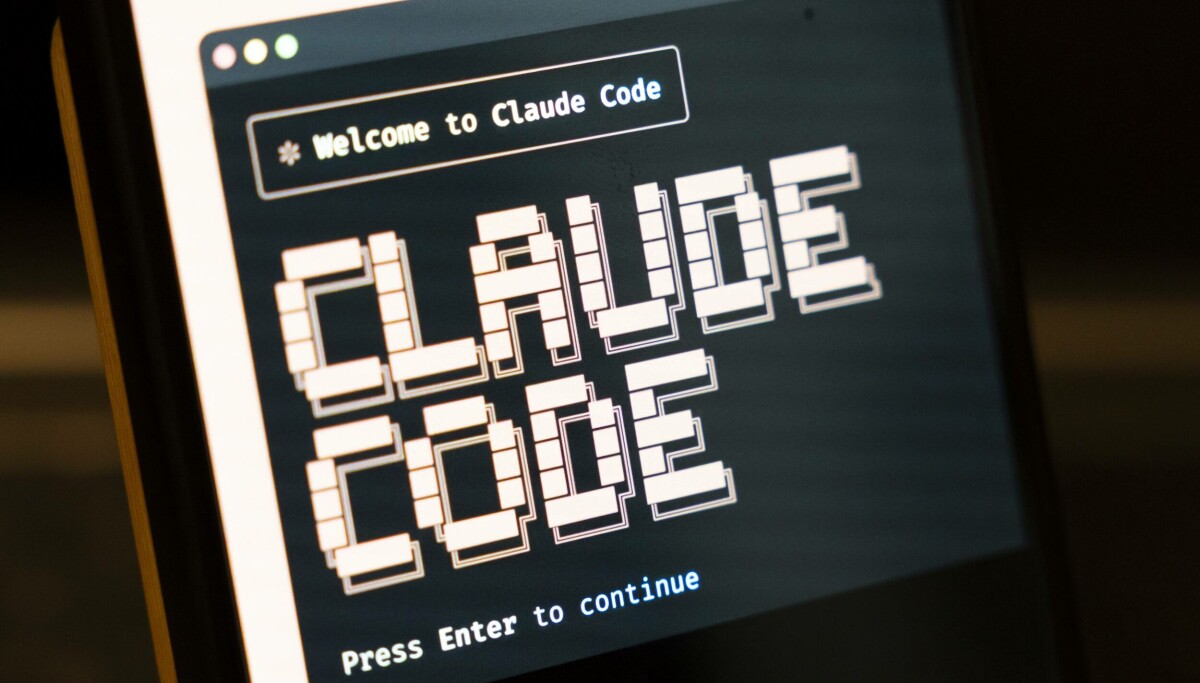

Anthropic Taps SpaceX to Relax Claude Usage Limits

Anthropic’s partnership with SpaceX illustrates how infrastructure deals are increasingly tied directly to product experience. By securing access to SpaceX’s Colossus 1 data center, Anthropic says it can substantially increase its compute capacity and immediately raise Claude usage limits for paying customers. The company has doubled Claude Code’s five-hour rate limits for Pro, Max, Team, and seat-based enterprise plans, raised API rate limits for Claude Opus, and ended the peak-hours limit reduction on Claude Code for Pro and Max accounts. SpaceX describes Colossus 1 as hosting over 220,000 Nvidia GPUs, including dense deployments of H100, H200, and GB200 accelerators. Anthropic already buys capacity from Amazon and Google, but this new arrangement lets it route more inference traffic through a separate provider, easing capacity constraints that had frustrated developers. The company has even expressed interest in future orbital AI compute, signaling that diversification away from traditional data centers is now part of its long-term strategy.

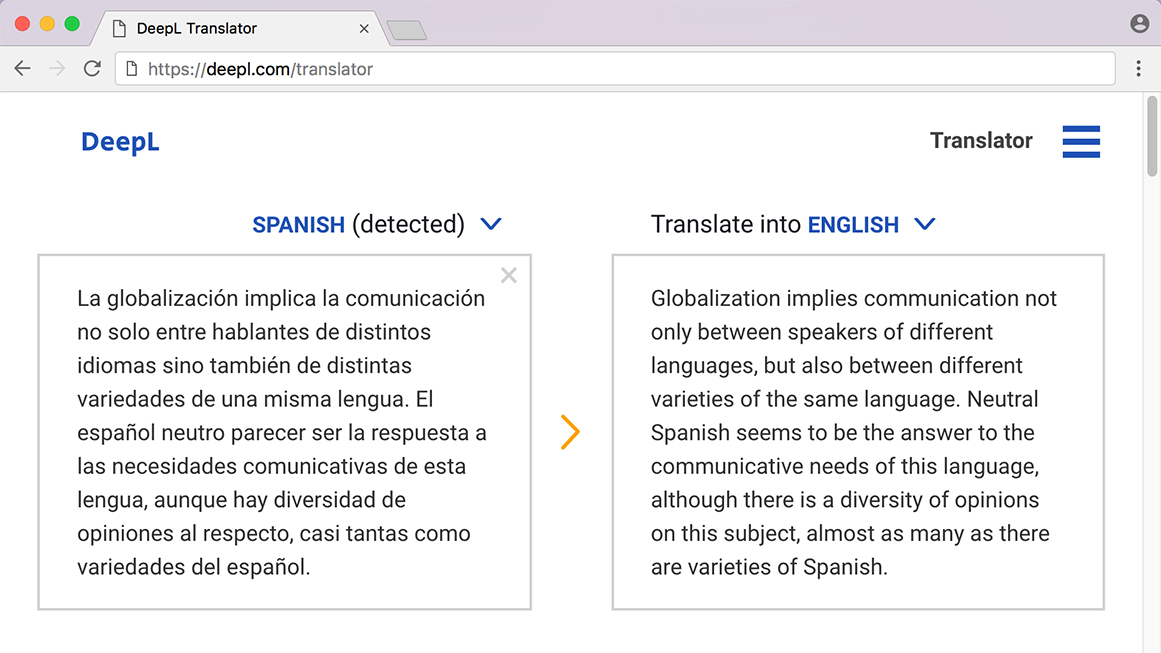

DeepL’s AWS Expansion and the Cloud Sovereignty Trade-Off

DeepL’s decision to add AWS as a sub-processor highlights a different but related tension: how to expand globally without undermining data-control expectations. On April 23, the company moved away from a strict Europe-only processing default and added AWS as part of a broader infrastructure expansion intended to improve global scale and lower latency. The move lets existing AWS customers deploy DeepL’s Language AI instantly through their existing billing, identity, and audit frameworks, shortening procurement cycles and simplifying rollout for large enterprises. Critics, however, argue that relying on a major U.S. cloud provider risks weakening the perceived independence of regional AI translation leaders. Buyers must now weigh DeepL’s translation quality, security assurances, and performance benefits against concerns that sensitive text could leave preferred jurisdictions. DeepL stresses that paid customer content remains protected and is not used for model training without consent, but the AWS partnership has turned a technical routing choice into a symbolic test of cloud sovereignty in professional translation.

Balancing Compute Hunger with Data Sovereignty and Vendor Choice

Both Anthropic and DeepL show how AI firms are trying to reconcile insatiable compute demand with fears of cloud lock-in and data exposure. For Anthropic, tapping SpaceX’s Colossus 1 provides a powerful new inference backbone that can support higher Claude usage limits for paying subscribers while reducing reliance on any one hyperscale partner. For DeepL, layering AWS into its stack offers lower latency and faster procurement for multinational customers, but also raises questions about how much dependence on foreign infrastructure buyers will tolerate. These moves signal a broader shift toward diversified AI cloud infrastructure, where providers line up multiple sources of compute capacity rather than bet everything on a single cloud. Customers, meanwhile, are learning to scrutinize not just model accuracy and price, but also where data travels, which vendors process it, and how easily they can switch providers if policies or regulations change. Infrastructure strategy, once a back-office concern, has become central to AI trust and competitiveness.