From Chatbots to Crystal Structures: What Makes MatterChat Different

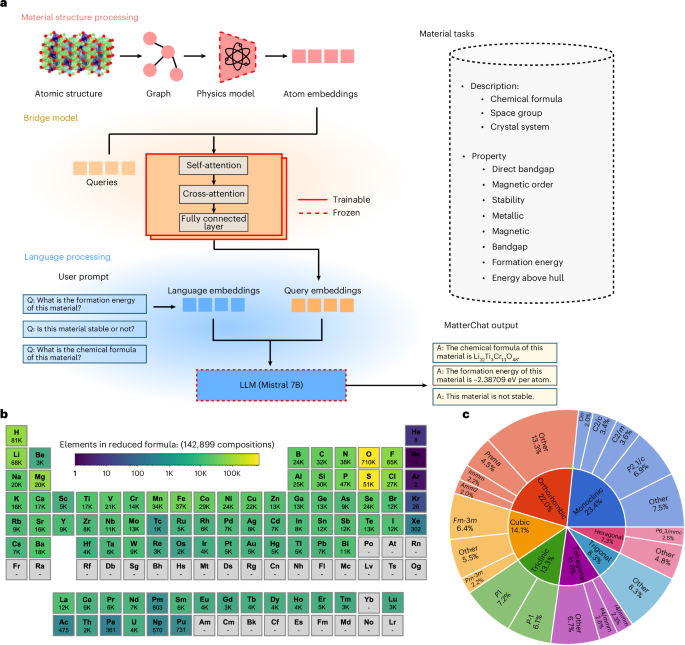

Most people meet large language models (LLMs) as chatbots that juggle text and images. MatterChat is built for something more demanding: solving problems in materials science. Instead of only reading papers, this multimodal LLM ingests crystal structures, graphs and other structured representations of matter alongside scientific text. That means it can “see” how atoms are arranged in a solid, not just read a description of the material. Drawing on advances such as crystal graph neural networks and atomistic foundation models, MatterChat acts as a specialised materials science LLM that connects structural information to properties and performance. The goal is not small talk, but helping researchers rapidly screen new compounds, understand quantum phenomena and plan experiments. In effect, MatterChat turns the LLM paradigm into an interactive scientific assistant that understands both the language and the geometry of materials.

What ‘Multimodal’ Means in Scientific AI

In consumer applications, a multimodal model usually means one that can process text, images, and sometimes audio. In scientific multimodal AI, the word covers a richer set of inputs. For materials, this includes atomic coordinates, periodic crystal lattices, graphs of bonds and symmetries, and numerical simulation outputs, all paired with technical language from journal articles and lab notes. MatterChat sits on top of this ecosystem, integrating structural encoders inspired by crystal graph networks and equivariant graph neural networks with a language model backbone tuned to scientific text. The materials science LLM can switch between symbolic reasoning, like explaining a synthesis route, and geometric reasoning, like inferring how a change in composition distorts a lattice. This blend lets it answer questions that ordinary chat-oriented LLMs struggle with, because those models rarely see the underlying physics encoded in structures and equations.
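To make the idea of a "graph of bonds" over a periodic lattice concrete, here is a minimal sketch of how a crystal can be turned into a graph: atoms become nodes, and any pair of atoms closer than a cutoff distance, including copies in neighbouring unit cells, is joined by an edge. This illustrates the general crystal-graph idea only, not MatterChat's actual encoder. The function name `build_crystal_graph`, the 3.0 Å cutoff and the rock-salt example cell (lattice constant 5.64 Å) are assumptions chosen for the example.

```python
import itertools
import math


def build_crystal_graph(lattice, frac_coords, cutoff):
    """Return directed edges (i, j, image, distance) for atom pairs within `cutoff`.

    lattice     : three lattice vectors as rows, in angstroms
    frac_coords : fractional coordinates of each atom in the unit cell
    cutoff      : maximum bond length in angstroms (assumed smaller than the
                  cell edges, so only the 27 nearest periodic images are scanned)
    """
    def to_cartesian(frac):
        return [sum(frac[k] * lattice[k][axis] for k in range(3)) for axis in range(3)]

    images = list(itertools.product((-1, 0, 1), repeat=3))
    edges = []
    for i, fi in enumerate(frac_coords):
        ri = to_cartesian(fi)
        for j, fj in enumerate(frac_coords):
            for image in images:
                if i == j and image == (0, 0, 0):
                    continue  # skip the atom paired with itself
                # Shift atom j into the neighbouring cell given by `image`.
                rj = to_cartesian([fj[k] + image[k] for k in range(3)])
                distance = math.dist(ri, rj)
                if distance <= cutoff:
                    edges.append((i, j, image, distance))
    return edges


# Hypothetical example: conventional rock-salt cell with 4 Na and 4 Cl atoms.
a = 5.64
lattice = [[a, 0.0, 0.0], [0.0, a, 0.0], [0.0, 0.0, a]]
species = ["Na"] * 4 + ["Cl"] * 4
frac_coords = [
    (0.0, 0.0, 0.0), (0.5, 0.5, 0.0), (0.5, 0.0, 0.5), (0.0, 0.5, 0.5),  # Na
    (0.5, 0.0, 0.0), (0.0, 0.5, 0.0), (0.0, 0.0, 0.5), (0.5, 0.5, 0.5),  # Cl
]

edges = build_crystal_graph(lattice, frac_coords, cutoff=3.0)
neighbour_counts = [sum(1 for e in edges if e[0] == i) for i in range(len(species))]
print(neighbour_counts)
```

In this toy cell every atom ends up with six neighbours of the opposite species, which matches the octahedral coordination of the rock-salt structure. A structural encoder would then attach features such as element type and bond length to these nodes and edges before passing them to a graph neural network.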

High-Precision Property Prediction as a Discovery Engine

A key promise of the MatterChat AI framework is precise, data-efficient property prediction. Traditional approaches rely on density functional theory and ab initio molecular dynamics, which are accurate but computationally expensive, limiting how many candidate materials can be tested. Recent graph-based and atomistic models have shown that learned representations can approach quantum-chemistry accuracy for many properties. MatterChat connects this capability to natural language interaction, turning a property prediction model into a conversational engine for screening materials. A researcher can ask about bandgaps for semiconductors, ionic conductivity for solid-state batteries, or stability under high pressure, and receive targeted predictions grounded in structural inputs. This speed-up matters for discovering new materials for batteries, catalysts, superconductors and other technologies central to sustainable energy and advanced electronics, where the search space of possible compounds is far too large for brute-force simulation or trial-and-error experimentation.

Why Interpretability and Human-in-the-Loop Reasoning Matter

In high-stakes science, a black-box answer is rarely enough. MatterChat is part of a growing movement to design LLM-based tools that expose their reasoning, not just their conclusions. Building on work on chain-of-thought reasoning and structured information extraction, the framework can surface intermediate steps: which structural motifs it considers important, how prior studies support a trend, or why one candidate material is favoured over another. This makes it easier for human experts to spot artefacts, connect predictions to known physics, and decide what to validate in the lab. Interpretable reasoning also addresses ethical concerns about over-reliance on automated systems in research. Rather than replacing scientists, a scientific multimodal AI should function as an amplifier of human judgment, making complex datasets more navigable while leaving final decisions and creative hypotheses firmly in researchers’ hands.

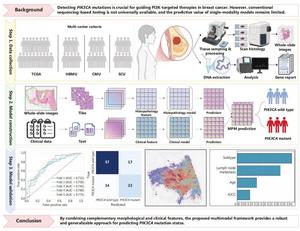

Beyond Materials: Implications for Malaysia’s Research and Industry

MatterChat illustrates a broader trend: domain-specific multimodal LLMs tuned to scientific and engineering tasks. Similar strategies are emerging in healthcare, where multimodal frameworks integrate medical images with clinical data to predict genetic mutations in cancers, and in genomics, catalysis and semiconductor design. For Malaysia, these tools could be highly relevant. Local universities and research institutes working on battery materials, green hydrogen catalysts or next-generation semiconductors could deploy materials-focused multimodal LLMs to shorten experimental cycles and target the most promising compounds. Coupled with models for pathology and genomics, the same principles could support precision medicine initiatives. As the country invests in green technology and advanced manufacturing, the ability to combine structural data, domain knowledge and AI-driven reasoning may become a competitive advantage, helping Malaysian teams move from incremental improvements to genuinely novel materials and processes.