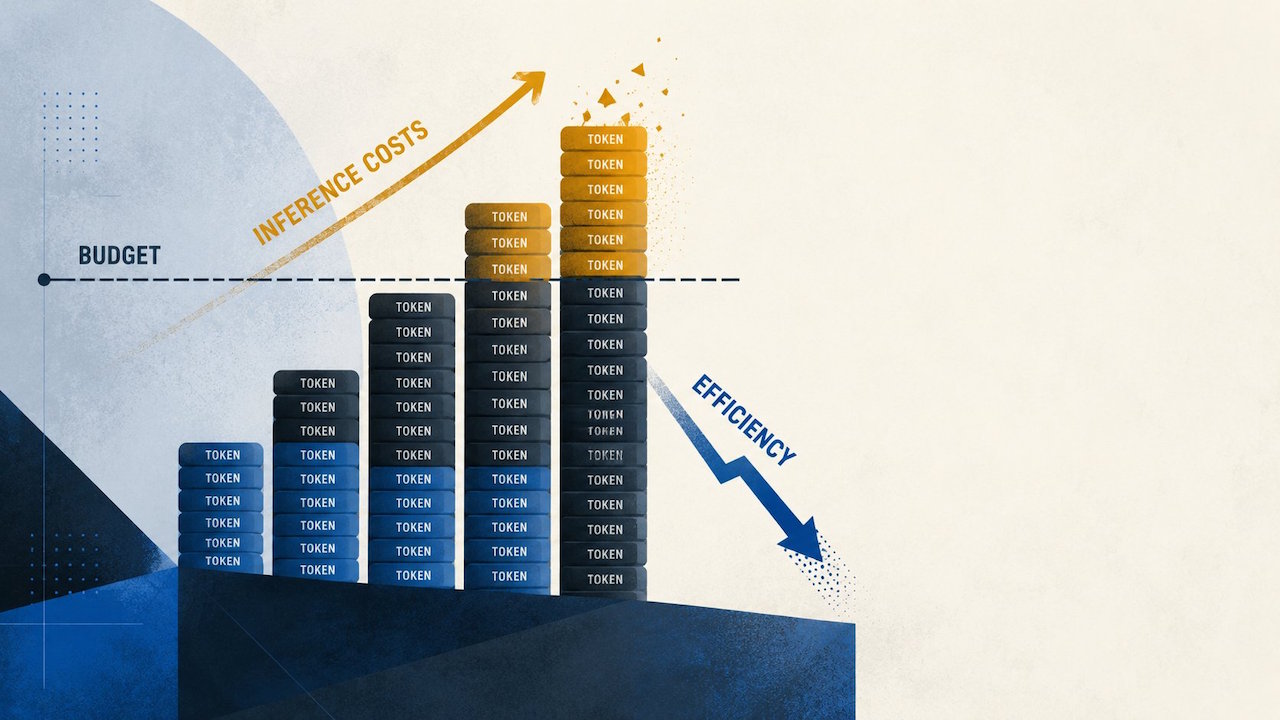

OpenAI’s Efficiency Claim vs. GPT-5.5 Cost Reality

OpenAI positions GPT-5.5 as more token-efficient than GPT-5.4, with similar per-token latency and shorter responses that are expected to offset its higher list prices. OpenRouter's April 2026 usage-log analysis tells a different story for production AI costs. After real customers migrated from GPT-5.4 to GPT-5.5, effective spending rose by 49 to 92 percent across live workloads, even though the new price schedule had already been factored into cost models. This gap illustrates why real-world AI model pricing rarely matches neat benchmark narratives. Production workloads bring messy prompt variability, retry loops, and evolving user behavior, all of which turn seemingly marginal changes into significant budget shifts. For teams analyzing GPT-5.5 costs, the core lesson is clear: rely on production traces and billing outcomes, not just vendor efficiency slides, before treating any new flagship model as a straightforward upgrade.

How Completion Length Swings Drive Higher Production AI Costs

The biggest surprise in OpenRouter's GPT-5.5 data is not the list prices but how completion lengths shift across prompt-size bands. For prompts between 2,000 and 10,000 tokens, GPT-5.5 produced completions that were 52 percent longer than GPT-5.4's, while very long prompts above 10,000 tokens saw outputs that were 19 to 34 percent shorter. That asymmetry matters because many enterprise deployments spend most of their time in the short and mid-range prompt bands, not at the extreme context lengths highlighted in launch materials. Retrieval assistants, coding copilots, workflow agents, and support bots repeatedly cycle through short prompts, tool calls, and follow-up answers. In that environment, longer mid-range completions quietly dominate the bill. On OpenRouter, average cost per million tokens nearly doubled for prompts under 2,000 tokens and climbed in every other band, which shows that completion behavior is now a central lever in real-world AI model pricing.
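To see why completion length is such a strong lever, it can help to compute what share of a single request's cost comes from output tokens. The sketch below is illustrative only: it uses the GPT-5.5 short-context list prices quoted later in this article, while the 4,000-token prompt, the 800-token baseline completion, and the function name `output_share` are assumptions chosen for the example, not figures from OpenRouter's data.

```python
def output_share(prompt_tokens: int, completion_tokens: int,
                 input_price: float, output_price: float) -> float:
    """Fraction of one request's list-price cost that comes from output tokens.

    Prices are in USD per million tokens; the per-million factor cancels out.
    """
    input_cost = prompt_tokens * input_price
    output_cost = completion_tokens * output_price
    return output_cost / (input_cost + output_cost)


# GPT-5.5 short-context list prices from this article (USD per million tokens).
INPUT_PRICE, OUTPUT_PRICE = 5.00, 30.00

# Hypothetical mid-range request: these token counts are assumptions.
prompt_tokens = 4_000
baseline_completion = 800
longer_completion = round(baseline_completion * 1.52)  # 52 percent longer

base_share = output_share(prompt_tokens, baseline_completion, INPUT_PRICE, OUTPUT_PRICE)
long_share = output_share(prompt_tokens, longer_completion, INPUT_PRICE, OUTPUT_PRICE)

print(f"baseline completion: output is {base_share:.1%} of request cost")
print(f"52% longer completion: output is {long_share:.1%} of request cost")
```

Under these assumed token counts, output already accounts for more than half of the request's list-price cost, and the longer completion pushes that share to roughly two thirds. Output tokens are priced at six times input tokens in both list-price schedules quoted in this article, so the same shares apply to GPT-5.4 for identical token counts.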

Why Cost-per-Token Metrics Fail as a Budget Proxy

On paper, GPT-5.5's list pricing looks like a predictable step up from GPT-5.4. Short-context workloads previously paid USD 2.50 (approx. RM11.50) per million input tokens and USD 15 (approx. RM69.00) per million output tokens, while GPT-5.5 is set at USD 5 (approx. RM23.00) and USD 30 (approx. RM138.00) respectively. Both rates double, yet a controlled estimate suggested only about 19 percent higher API cost. OpenRouter's April analysis shows average cost per million tokens in real workloads rising far more sharply: from USD 4.89 (approx. RM22.50) to USD 9.37 (approx. RM43.00) for prompts under 2,000 tokens, an increase of roughly 92 percent, and from USD 0.74 (approx. RM3.40) to USD 1.10 (approx. RM5.00) in the 50,000–128,000 token band, an increase of roughly 49 percent. The gap between list pricing and live billing shows why finance approvals based on rate cards can mislead. True model upgrade ROI depends on how output expansion, retries, and usage mix reshape the effective token bill after launch.
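The percentage changes implied by these figures are easy to check. The short sketch below recomputes them from the USD amounts quoted in this section; the helper name `pct_increase` and the variable names are assumptions made for illustration.

```python
def pct_increase(before: float, after: float) -> float:
    """Percentage change from `before` to `after`."""
    return (after - before) / before * 100.0


# All figures are USD per million tokens, as quoted in this section.
list_input = pct_increase(2.50, 5.00)     # list price, input tokens
list_output = pct_increase(15.00, 30.00)  # list price, output tokens
short_band = pct_increase(4.89, 9.37)     # effective cost, prompts under 2,000 tokens
long_band = pct_increase(0.74, 1.10)      # effective cost, 50,000-128,000 token band

for label, value in [
    ("list input price", list_input),
    ("list output price", list_output),
    ("effective cost, <2,000-token prompts", short_band),
    ("effective cost, 50,000-128,000 band", long_band),
]:
    print(f"{label}: +{value:.1f}%")
```

The two effective-cost increases come out at about 91.6 and 48.6 percent, which lines up with the 49 to 92 percent range reported for live workloads.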

Recalculating Model Upgrade ROI for Enterprise AI Budgets

For platform leaders, the GPT-5.5 numbers force a rethink of model upgrade ROI and enterprise AI budget planning. A newer model with better quality is not automatically a win if it pushes your workload into more expensive operating bands. Short prompts with repeated tool calls, retrieval reformulations, and follow-up questions can turn modest completion drift into a significant monthly overrun. Routing strategies therefore become financial strategies: you might reserve GPT-5.5 for long-context or premium-tier jobs while keeping GPT-5.4 or other models for everyday traffic. Rival options such as Claude Opus 4.7 complicate the decision further, since tokenization and response length interact with pricing in non-obvious ways. Before committing to any upgrade, treat the model as a line item in operating expenditure, not just a technical improvement, and run narrow, workload-specific trials that mirror your expected production mix.
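A routing rule of the kind described above can be sketched in a few lines. This is a minimal illustration, not a recommended policy: the 10,000-token threshold is borrowed from the prompt band where OpenRouter saw shorter GPT-5.5 completions, and the model label strings, the `premium_tier` flag, and the function name `choose_model` are all hypothetical.

```python
# Threshold taken from the band where GPT-5.5 completions were shorter;
# treating it as a routing cutoff is an assumption made for this sketch.
LONG_CONTEXT_THRESHOLD = 10_000  # prompt tokens

# Hypothetical labels, not confirmed API model identifiers.
FLAGSHIP_MODEL = "gpt-5.5"
EVERYDAY_MODEL = "gpt-5.4"


def choose_model(prompt_tokens: int, premium_tier: bool = False) -> str:
    """Send long-context or premium-tier requests to the flagship model.

    Everything else stays on the cheaper everyday model.
    """
    if premium_tier or prompt_tokens > LONG_CONTEXT_THRESHOLD:
        return FLAGSHIP_MODEL
    return EVERYDAY_MODEL


print(choose_model(800))                      # short everyday prompt
print(choose_model(25_000))                   # long-context prompt
print(choose_model(800, premium_tier=True))   # premium-tier request
```

In practice the threshold and tier rules would come out of your own trial data, since the break-even point depends on your completion lengths and traffic mix.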

Practical Steps: Benchmark Your Own Usage Before Upgrading

Teams evaluating GPT-5.5 or any frontier model should move beyond synthetic benchmarks and run targeted, production-style tests. Start by sampling real prompt logs from your assistants, copilots, and agents, then replay them against GPT-5.4 and GPT-5.5 under the same traffic shape. Track prompt size distribution, median completion length per band, and total tokens per conversation, not just isolated calls. Compare effective cost per million tokens across models and examine where retries, tool errors, or long answer chains inflate spending. This data-driven view reveals your true production AI costs and clarifies whether GPT-5.5's quality gain justifies a spend increase on the order of 49–92 percent in your context. Only after this exercise should you lock in routing rules, user-tier entitlements, and budget forecasts. While real-world AI model pricing keeps shifting, your own telemetry is the most trustworthy guide.
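The per-band comparison described above can be sketched as a small aggregation over replayed calls. The example assumes each log record is a dict with `model`, `prompt_tokens`, `completion_tokens`, and `cost_usd` keys; that record shape, the band edges and their inclusivity, the function names, and the sample rows are all hypothetical and would need to be adapted to your own billing export.

```python
from collections import defaultdict
from statistics import median

# Prompt-size bands modeled on the ones discussed in this article.
# Each entry is (lower bound inclusive, upper bound exclusive, label).
BANDS = [(0, 2_000, "<2k"), (2_000, 10_000, "2k-10k"), (10_000, None, ">=10k")]


def band_for(prompt_tokens: int) -> str:
    """Return the label of the band that contains this prompt size."""
    for low, high, label in BANDS:
        if prompt_tokens >= low and (high is None or prompt_tokens < high):
            return label
    raise ValueError(f"negative prompt size: {prompt_tokens}")


def summarize(records):
    """Group calls by (model, band) and compute per-group usage statistics."""
    groups = defaultdict(list)
    for record in records:
        groups[(record["model"], band_for(record["prompt_tokens"]))].append(record)

    summary = {}
    for key, rows in groups.items():
        total_tokens = sum(r["prompt_tokens"] + r["completion_tokens"] for r in rows)
        total_cost = sum(r["cost_usd"] for r in rows)
        summary[key] = {
            "calls": len(rows),
            "median_completion": median(r["completion_tokens"] for r in rows),
            "cost_per_million": total_cost / total_tokens * 1_000_000,
        }
    return summary


# Made-up sample rows, only to show the shape of the input and output.
sample = [
    {"model": "gpt-5.4", "prompt_tokens": 1_000, "completion_tokens": 200, "cost_usd": 0.006},
    {"model": "gpt-5.4", "prompt_tokens": 1_500, "completion_tokens": 300, "cost_usd": 0.009},
    {"model": "gpt-5.5", "prompt_tokens": 1_000, "completion_tokens": 250, "cost_usd": 0.0125},
]

summary = summarize(sample)
for (model, band), stats in sorted(summary.items()):
    print(model, band, stats)
```

Using billed cost from your provider's records, not a cost recomputed from list prices, keeps retries, caching, and discounts in the picture. Extending the grouping key with a conversation identifier would give the per-conversation token totals mentioned above.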