Mythos Hype Meets cURL Reality

When Anthropic promoted its Mythos model as too potent for public release, expectations in the security world shot up. For cURL creator Daniel Stenberg, those expectations quickly collided with reality. Through Anthropic’s Project Glasswing, Mythos was run against the cURL codebase and initially reported five “confirmed security vulnerabilities.” After several hours of review by the cURL security team, only one of the five held up as a security issue: a low-severity flaw destined for a future CVE. Three were false positives that merely restated documented API limitations, and the last was a simple non-security bug. Stenberg concluded that Mythos did not uncover issues at a higher rate or with greater sophistication than the static analyzers, fuzzers, and earlier AI bug-hunting tools already used on cURL. He described the surrounding narrative as “primarily marketing,” arguing that the system’s actual vulnerability detection performance fell short of its dramatic framing.
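The triage outcome above can be restated as simple rates. The following is a minimal sketch that only tallies the five outcomes described in this section; the category labels and variable names are illustrative and are not cURL's own terminology.

```python
from collections import Counter

# Triage outcomes for the five Mythos reports, as described in the text above.
# The label strings are illustrative names, not official categories.
triage = [
    "low_severity_vulnerability",
    "false_positive",
    "false_positive",
    "false_positive",
    "non_security_bug",
]

counts = Counter(triage)
total = len(triage)

# Share of reports that were genuine security issues.
security_precision = counts["low_severity_vulnerability"] / total

# Share of reports that pointed at a real defect of any kind.
real_bug_rate = (
    counts["low_severity_vulnerability"] + counts["non_security_bug"]
) / total

print(f"security findings: {security_precision:.0%}")     # 20%
print(f"real bugs of any kind: {real_bug_rate:.0%}")      # 40%
```

With only five reports, these percentages describe a single run and say little about how the model would perform on other codebases.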

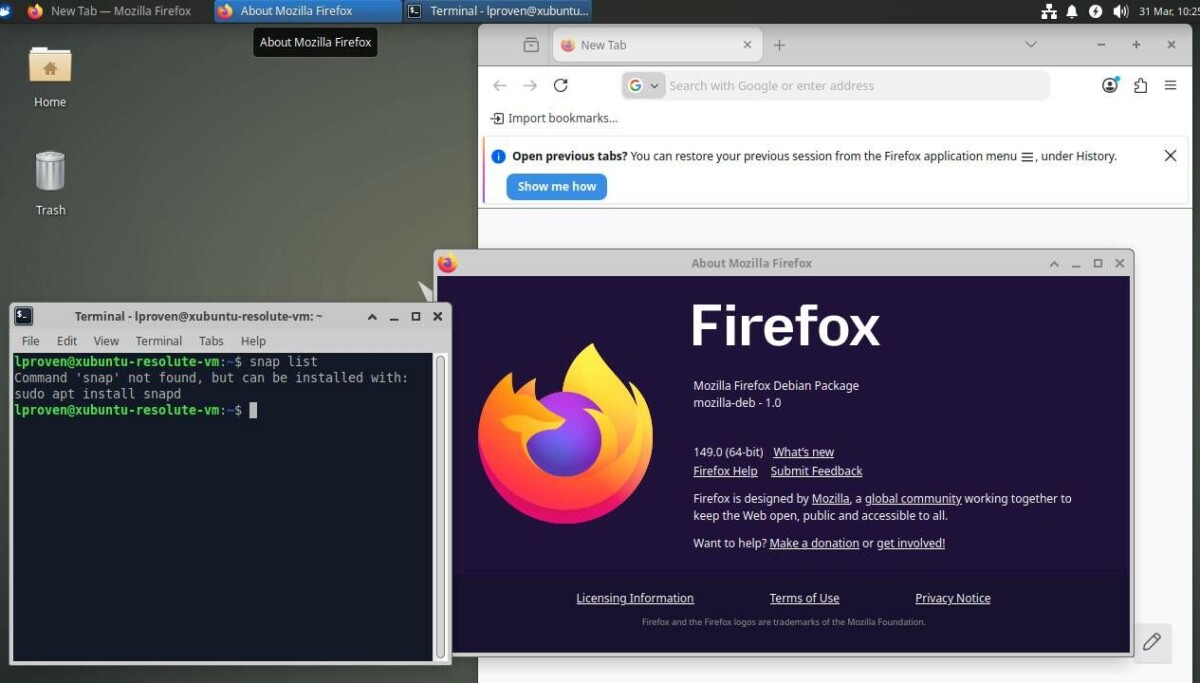

Mozilla’s Firefox Tests: Signal, Noise, and Middleware

Mozilla’s Firefox team paints a more optimistic picture for AI bug-hunting tools, at least at first glance. In April, Firefox shipped fixes for 423 security bugs, a huge jump from 76 in March and far above its historical monthly average. Anthropic’s Mythos Preview model was credited with helping identify 271 issues in Firefox 150, supplemented by Anthropic’s Opus 4.6. Yet Mozilla’s engineers highlight that the real story may lie in the “agentic harness,” the middleware that steers the models, rather than in the Mythos model itself. Refinements in how the AI analyses were orchestrated significantly improved the ratio of useful findings to noise, turning early “slop” into more actionable reports. To demonstrate the practical upside of AI security audit workflows, Mozilla also published detailed examples, including a high-severity, 20-year-old heap use-after-free bug and several sandbox escapes that had resisted traditional fuzzing.

Is AI Security Audit Really Beating Traditional Methods?

Taken together, the cURL and Firefox results show that Mythos is far from a clear-cut revolution in software vulnerability detection. On a heavily instrumented and long-hardened project like cURL, Mythos yielded only one low-impact vulnerability and one non-security bug alongside three false positives, roughly on par with prior tools. In Firefox, the volume and severity of bugs found look impressive, but it remains difficult to tease apart how much credit goes to the Mythos model versus better orchestration, prompts, and supporting infrastructure. Security practitioners watching Project Glasswing remain skeptical of claims that AI bug-hunting tools can simply replace seasoned auditors, fuzzers, and static analysis. Instead, early evidence suggests a more incremental role: AI systems can broaden coverage, highlight overlooked code paths, and validate hardening strategies, but they still require expert review and tight operational controls to avoid floods of false positives and misplaced confidence.

Adversa AI’s Warning: When AI Tools Become Their Own Attack Surface

Beyond raw detection capability, Mythos and related tooling raise another concern: what happens when AI systems themselves introduce new security risks? Adversa AI’s TrustFall proof‑of‑concept targets Claude Code and other AI coding assistants that rely on Model Context Protocol (MCP) servers. By planting configuration files such as .mcp.json and .claude/settings.json in a cloned repository, an attacker can silently enable untrusted MCP servers. Once a developer clicks a generic “Yes, I trust this folder” prompt, a Node.js MCP server can spawn with full user privileges, enabling one‑click remote code execution without any explicit tool call. Adversa’s researchers note this is at least the third Claude Code CVE tied to project‑scoped settings, arguing that Anthropic’s framing of the issue as a user‑trust problem underplays a systemic design risk. Their message is blunt: AI‑driven security tooling must expose clearer warnings and boundaries, or it risks becoming a new, high‑value attack surface.
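One defensive habit that follows from this is to look for project-scoped configuration files in a freshly cloned repository before opening it in an AI coding assistant. The sketch below is illustrative only: it checks for the two file names mentioned above and lists their top-level JSON keys so a human can review them. It assumes nothing about the files' schema, the function names are hypothetical, and it is not a complete defense or a tool from Adversa AI or Anthropic.

```python
import json
from pathlib import Path

# File names taken from the text above; the list is illustrative, not exhaustive.
PROJECT_SCOPED_CONFIGS = (".mcp.json", ".claude/settings.json")


def find_project_configs(repo_root):
    """Return the project-scoped config files that exist under repo_root."""
    root = Path(repo_root)
    return [root / rel for rel in PROJECT_SCOPED_CONFIGS if (root / rel).is_file()]


def summarize(path):
    """Describe a config file by its top-level JSON keys, without running anything."""
    try:
        data = json.loads(path.read_text(encoding="utf-8"))
    except (OSError, ValueError) as exc:
        # ValueError covers malformed JSON and undecodable bytes.
        return f"{path}: unreadable ({exc.__class__.__name__})"
    keys = sorted(data) if isinstance(data, dict) else []
    return f"{path}: top-level keys {keys}"


def check_repo(repo_root):
    """Return one summary line per project-scoped config file found."""
    return [summarize(path) for path in find_project_configs(repo_root)]


# Example: inspect the current directory and print anything worth reviewing.
for line in check_repo("."):
    print(line)
```

An empty result means only that these two files are absent. Any file that does turn up should be read by a person before the folder is marked as trusted.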

Marketing Stunt or Early Glimpse of the Future?

Mythos sits at an awkward intersection of promise and promotion. On one hand, Anthropic’s decision to gate access behind Project Glasswing and talk up the model as “too dangerous” created expectations of a transformative vulnerability scanner that cURL’s experience does not support. On the other hand, Mozilla’s Firefox results show that, with careful middleware design and human oversight, AI security audit pipelines can meaningfully accelerate bug discovery and shine light into corners that traditional methods struggle to reach. The broader security community is likely to treat Mythos less as a magic bullet and more as another tool in an expanding arsenal. The lesson from cURL, Firefox, and Adversa AI alike is that real breakthroughs will come not from secret models alone, but from transparent workflows, honest measurement, and taking seriously the new risks created when AI is put in the security decision loop.