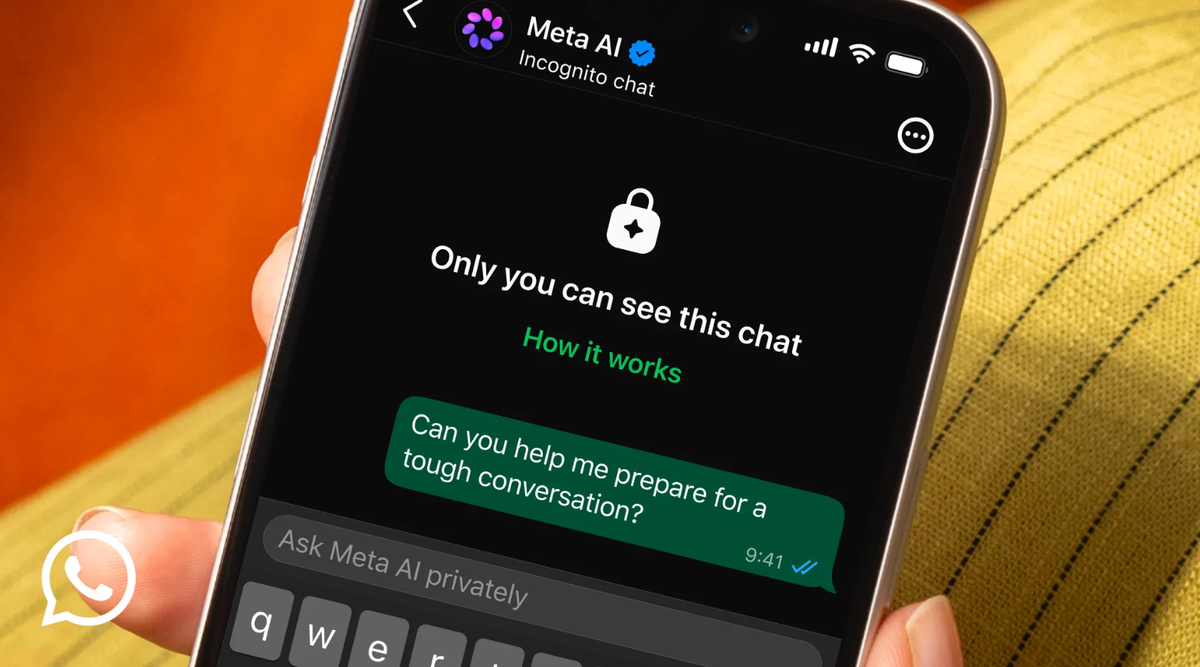

What Is WhatsApp Incognito AI and How Does It Work?

Meta is rolling out incognito AI chats on WhatsApp, a new mode for private AI conversations that behave more like secure, temporary rooms than standard chat threads. When users enable Incognito Chat with Meta AI, their messages are processed inside what the company calls a “secure environment” that, according to Meta, not even its own teams can access. The session does not create a lasting log. Conversations are not saved by default, and once users close the app or end the session, these disappearing AI chats are wiped rather than archived. Meta says the AI will not remember previous sessions, so every conversation starts fresh with no history to draw on. At launch, Incognito Chat supports only text interactions, with no ability to upload or generate images. It also inherits WhatsApp’s existing age limits, so users under 13 cannot access the feature.

Private AI Conversations for a More Sensitive Digital Life

The new incognito mode targets a clear shift in how people use AI. Meta acknowledges that users now bring deeply personal topics into AI chats, asking about finances, health issues, work dilemmas, relationships, and major life decisions. These are exactly the kinds of conversations that feel too intimate to be stored on corporate servers or reused to train future models. By positioning Incognito Chat as a separate, highly private AI interaction layer, Meta is trying to reassure users that they can share sensitive details without inviting surveillance. Messages in this mode are not only shielded from human review but also excluded from training data, according to the company’s description of its Private Processing technology. In practice, that means users can lean on Meta AI for guidance or emotional support while keeping their questions and context confined to an ephemeral, locked-down session.

Why Meta Is Betting on AI Privacy Features Now

Incognito AI on WhatsApp lands at a moment when trust is becoming the battleground for AI adoption. Generative models thrive on data, yet the more personal people’s prompts become, the more uncomfortable they feel about opaque data handling. AI privacy features are emerging across the industry. Google’s Gemini and OpenAI’s ChatGPT already let users limit or disable chat history and training use, but they often still keep short-term records for safety and system purposes. Meta is trying to go further by promising no stored logs at all for Incognito Chat. Company leaders frame this as an extension of the privacy expectations built around WhatsApp’s end-to-end encryption, now applied to an AI “third participant” inside the conversation. If Meta can convincingly prove that these disappearing AI chats truly remain inaccessible, it could turn privacy from a defensive talking point into a competitive advantage.

Balancing Safety, Anonymity, and the Future of AI Assistants

This stronger privacy posture raises tough questions about safety oversight. Other AI platforms sometimes review flagged conversations about self-harm or violence, relying on stored data to intervene or refine their safeguards. With WhatsApp’s Incognito Chat, Meta says conversations are not logged, so there is no record to audit after the fact. The system can still refuse harmful prompts, block certain behaviors, or point users to supportive resources in real time, but it cannot draw on past conversations for moderation or improvement. That trade-off shows how AI assistants are changing expectations inside messaging apps: they are no longer just tools but quasi-confidants. For users who worry about corporate monitoring, Incognito AI offers a way to explore difficult topics without leaving a trail. The bigger test is whether this model of ultra-private, disappearing AI chats can scale while keeping both privacy and safety intact.