Val Kilmer’s Digital Return and the Emotion of a Synthetic Performance

In the trailer for As Deep as the Grave, audiences watch a paradox: Val Kilmer is back on screen after his death in 2025, yet only as an AI avatar. His face and voice have been meticulously reconstructed from archives and approved material so he can complete the role of Father Fintan in a film he began while alive. The result is both haunting and reverent. Viewers see a familiar presence that anchors a story about Navajo history and archaeological mystery, yet they also know the performance is assembled by code rather than captured on set. This moment crystallises the emotional and ethical stakes of AI avatar technology: can a digital human clone truly honour an actor’s legacy, and where is the line between tribute and exploitation when an algorithm is speaking through a beloved face?

From CEO Digital Twins to Citi Sky: Avatars Enter Serious Business

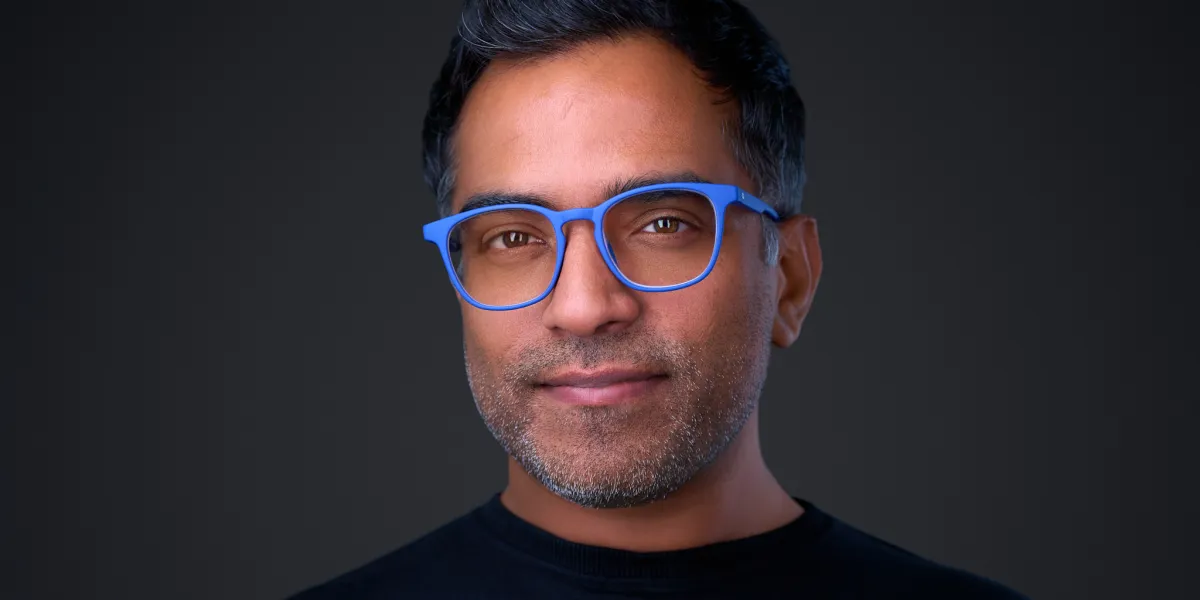

While Hollywood experiments with posthumous stars, corporations are quietly normalising AI avatars as co-workers. Video platform Kaltura has put its own CEO, Ron Yekutiel, into production as a conversational CEO digital twin. Built on the company’s Agentic Avatar technology, this two-way avatar delivers strategic briefings, customer messages, and updates in his voice, likeness, and style, scaling executive communication without endless studio time. In finance, Citi Wealth’s new Citi Sky uses Google Cloud and Google DeepMind’s real-time avatar technology to act as an always-on member of the wealth team. Clients can ask Citi Sky about market insights and certificate of deposit maturity events in natural, conversational exchanges, available at launch in English and Spanish. Together, these systems show digital human clones moving from novelty demos to embedded infrastructure in corporate and financial life, where trust, compliance, and accountability matter as much as technical innovation.

Talking Avatar Generators and the Rise of the Solo Synthetic Presenter

Beyond big institutions, AI avatar tools are turning solo creators into their own virtual studios. Modern AI avatar generators let users type a script and instantly produce videos with realistic digital presenters that speak, emote, and lip-sync in multiple languages. Platforms highlighted by industry reviewers now emphasise AI twin creation, allowing individuals to build a reusable on-screen persona that can front YouTube channels, courses, or marketing campaigns without new shoots. Talking avatar generators combine text-to-speech, facial animation, and automated editing so that non-experts can produce consistent, branded video at scale. Instead of hiring actors, setting up lighting, or mastering post-production, a single person can deploy a fleet of AI avatars to deliver content across social media, training, and customer support. This is AI avatar technology not as spectacle, but as everyday creative infrastructure for people who previously lacked the budget or skills for professional video.

James Cameron’s Digital Humans Made Real—and His Warnings

James Cameron’s worlds have long revolved around synthetic bodies and unstable identity, from Terminator’s infiltrating machines to Avatar’s remotely piloted Na’vi bodies. Today’s AI avatars echo those ideas in quieter but tangible ways. Val Kilmer’s AI-driven performance feels like a softer Skynet, repurposing a human likeness beyond a natural lifespan. Corporate systems such as CEO digital twins and Citi Sky resemble Cameron’s networked intelligences: entities that speak with human cadence but are ultimately software. Cameron has voiced practical concerns in interviews about AI and deepfakes undermining trust and actor autonomy, and current avatar tech brings those worries into focus. When a likeness can be reconstructed from existing footage and voice samples, whose consent governs future performances, and for how long? Acting careers, residuals, and reputations could be shaped by contracts written for a pre-avatar era, raising urgent questions about rights, regulation, and what counts as a “real” performance.

The Near Future: AI Concierges, Personal Doubles, and Virtual Crowds

If current trajectories hold, most people will not first encounter AI avatars through blockbuster resurrections, but through mundane interactions. Wealth clients may chat with a lifelike avatar such as Citi Sky to understand portfolio moves, while employees see a CEO digital twin pop up with tailored updates instead of long town halls. Consumer-grade talking avatar generators will make it normal for freelancers, educators, and small businesses to front brands with synthetic presenters that never tire, age, or miss a shoot. Personal digital doubles could handle routine video replies, onboarding, or language-localised versions of one’s content. In entertainment, studios can populate scenes with AI-driven virtual extras and minor roles, freeing budgets but also reshaping work for human performers. Rather than a single Skynet moment, AI avatar technology is likely to seep into everyday life as a swarm of polite, hyper-available digital humans that gradually blur where our presence ends and our proxies begin.