From Chat Windows to Live, Voice-First AI

OpenAI is reframing how developers think about AI interaction by launching three new real-time audio models through its Realtime API. Instead of treating AI as a text chat widget, these voice models are built to operate inside live conversations, turning speech into an active control layer for applications and workflows. GPT-Realtime-2, GPT-Realtime-Translate, and GPT-Realtime-Whisper respectively handle live reasoning, real-time translation, and streaming transcription, all with low latency. Users can speak naturally while software listens, reasons, and responds as things happen, not after the fact. Businesses can embed voice into customer support, sales, education, and internal tools so that AI assistants no longer sit on the sidelines. Instead, the assistants join the workflow in progress, handling instructions, clarifications, and interruptions in real time. The launch marks a shift from static chatbots to responsive, voice-first, reasoning-enabled AI agents.

GPT-Realtime-2: GPT-5-Class Reasoning for Spoken Conversations

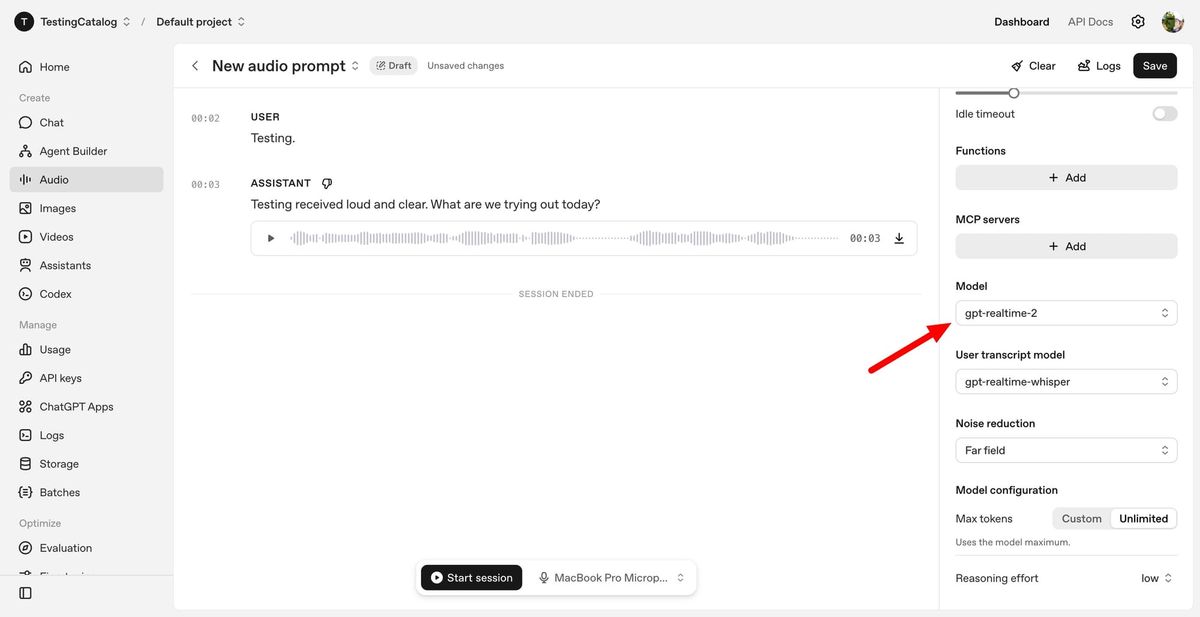

GPT-Realtime-2 is the centerpiece of this launch, described as OpenAI’s first voice model with GPT-5-class reasoning capabilities. Designed for live conversations, it can follow complex requests, track context over time, and manage interruptions without collapsing into a rigid question–answer pattern. The model features a significantly expanded 128K-token context window, up from 32K, allowing much longer and more coherent dialogues. Developers can tune reasoning effort from minimal to xhigh, trading latency for deeper analysis in demanding scenarios like multi-step troubleshooting or voice-driven workflows. Crucially, GPT-Realtime-2 supports parallel tool calls, enabling the assistant to say things like “let me check that” while performing background actions. It also handles corrections more naturally and recovers gracefully when tasks fail, narrating issues instead of going silent. These improvements turn voice agents from brittle IVR-like bots into more resilient, workflow-aware assistants.
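The "let me check that" behavior described above can be pictured as a small concurrency pattern. The following is a minimal sketch using only Python's standard `asyncio` library, not the Realtime API itself: the tool functions `look_up_order` and `check_refund_policy`, their return values, and the spoken phrases are all hypothetical stand-ins. It shows a filler phrase being emitted first, two tool calls running in parallel, and a failure being narrated rather than silently dropped.

```python
import asyncio

# Hypothetical backend tools; names, delays, and payloads are illustrative only.
async def look_up_order(order_id: str) -> dict:
    await asyncio.sleep(0.05)  # simulate network latency
    return {"order_id": order_id, "status": "shipped"}

async def check_refund_policy(region: str) -> dict:
    await asyncio.sleep(0.05)
    if region == "unknown":
        raise ValueError("no policy on file for region")
    return {"region": region, "refund_days": 30}

async def handle_turn(order_id: str, region: str) -> list[str]:
    """Return the phrases the assistant would speak, in order."""
    # The filler phrase is queued before any tool result is available.
    spoken = ["Let me check that."]
    # Run both tools concurrently; return_exceptions=True means one
    # failing tool does not discard the other tool's result.
    order, policy = await asyncio.gather(
        look_up_order(order_id),
        check_refund_policy(region),
        return_exceptions=True,
    )
    # Narrate each outcome, including failures, instead of going silent.
    if isinstance(order, Exception):
        spoken.append("I couldn't look up that order.")
    else:
        spoken.append(f"Order {order['order_id']} is {order['status']}.")
    if isinstance(policy, Exception):
        spoken.append("I couldn't find the refund policy for your region.")
    else:
        spoken.append(f"Refunds are accepted within {policy['refund_days']} days.")
    return spoken

if __name__ == "__main__":
    for phrase in asyncio.run(handle_turn("A123", "EU")):
        print(phrase)
```

In a real voice agent the phrases would be streamed as audio rather than collected in a list, but the ordering and error-handling logic would look much the same.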

Realtime Translation and Live Transcription as Core Capabilities

Alongside GPT-Realtime-2, OpenAI is shipping two specialized models that move voice AI deeper into practical business scenarios. GPT-Realtime-Translate is a real-time translation model that accepts speech in more than 70 input languages and produces output in 13, while keeping pace with fast speakers and shifting topics. It is tailored for use cases like multilingual customer support, cross-border sales calls, live events, and media localization. GPT-Realtime-Whisper brings continuous, streaming speech-to-text to the Realtime API, transcribing conversations as they unfold. This enables live transcription for meeting notes, captions, and voice-controlled workflows where text is needed immediately. Together, these models allow developers to chain speech input, reasoning, translation, and transcription in a single pipeline. Voice becomes not just an interface, but a real-time data stream that applications can interpret, log, and act upon while the conversation is still underway.
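To make the idea of chaining stages over a live stream concrete, here is a small sketch built from Python generators. It does not call any OpenAI model: `transcribe` and `translate` are hypothetical placeholders (the "audio chunks" are already strings, and translation is a toy phrase table), and `run_pipeline` and `Segment` are names invented for this example. The point is only the shape of the pipeline, where each segment is logged and acted on as soon as it arrives rather than after the conversation ends.

```python
from dataclasses import dataclass
from typing import Callable, Iterable, Iterator

@dataclass
class Segment:
    text: str      # text for one stretch of speech
    language: str  # language code of `text`

def transcribe(chunks: Iterable[str]) -> Iterator[Segment]:
    # Placeholder for streaming speech-to-text: each "audio chunk"
    # is already a Spanish string in this sketch.
    for chunk in chunks:
        yield Segment(text=chunk, language="es")

# Toy phrase table standing in for a translation model.
PHRASES = {"hola": "hello", "gracias": "thank you"}

def translate(segments: Iterable[Segment]) -> Iterator[Segment]:
    # Unknown phrases pass through unchanged in this toy version.
    for seg in segments:
        yield Segment(text=PHRASES.get(seg.text, seg.text), language="en")

def run_pipeline(
    chunks: Iterable[str],
    on_segment: Callable[[Segment], None],
) -> list[Segment]:
    """Process chunks lazily, logging and acting on each segment as it arrives."""
    log: list[Segment] = []
    for seg in translate(transcribe(chunks)):
        log.append(seg)   # keep a running transcript
        on_segment(seg)   # act immediately, e.g. update captions
    return log

if __name__ == "__main__":
    run_pipeline(["hola", "gracias"], lambda seg: print(seg.language, seg.text))
```

Because every stage is a generator, nothing waits for the full input: the first translated segment reaches `on_segment` before the second chunk is even read.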

Voice Becomes a Working Interface for Business Workflows

These models are explicitly aimed at making voice a working interface rather than a novelty. OpenAI highlights emerging patterns such as voice-to-action, where users speak tasks that the system executes in the background, and systems-to-voice, where software proactively speaks to users based on live data. Imagine a property search assistant that filters listings and books tours through conversation alone, or a travel app that informs users of delays, recommends routes, and confirms changes in real time. The voice-to-voice pattern extends this to multilingual scenarios, with GPT-Realtime-Translate bridging language gaps during live calls. Because the models are exposed through an API, developers can embed them into mobile apps, in-car systems, and enterprise dashboards. This positions voice AI not as a separate support channel, but as an integrated layer of live assistance across business workflows.

A Shift Toward Voice-First, Reasoning-Enabled Assistants

Taken together, OpenAI’s new real-time audio models represent a strategic shift from chat-based AI toward voice-first assistants that can reason, translate, and transcribe continuously. GPT-Realtime-2 brings GPT-5-class reasoning into spoken exchanges, with configurable depth so teams can prioritize speed or analytical power. GPT-Realtime-Translate and GPT-Realtime-Whisper extend that intelligence to multilingual communication and live transcription, turning conversations into structured data streams. For developers, this means the Realtime API is no longer just about sounding natural; it is about orchestrating tools, managing context, and keeping conversations productive even when they become messy and non-linear. For businesses, it opens the door to AI that participates in workflows as they happen, from support calls to operations dashboards, rather than responding after the fact. The result is an emerging generation of live business assistants that listen, think, and act in real time.