From Operating System to “Intelligence System”

With Android 17, Google is openly redefining Android as an “intelligence system” rather than a traditional operating system. At the center of this shift is Gemini Intelligence, a deeper layer of AI that plugs directly into apps and services so it can act on your behalf. Instead of being a chatbot you summon occasionally, Gemini is designed to sit quietly in the background, watch context across apps, and step in when it can save you time. Google frames this as moving toward one consistent assistant that understands you personally and behaves the same way whether you are on your phone, in the car, on a watch, or using smart glasses. This transition signals a strategic bet: the future of Android is less about manual tapping through menus and more about delegating everyday digital work to a persistent AI layer.

Gemini Android Control: Letting AI Drive Your Apps

The standout change in the Android 17 update is Gemini’s ability to directly control apps and carry out routine tasks, not just provide information. Google describes Gemini Intelligence as agentic AI: you can offload multi-step jobs and let it execute them across apps. For example, Gemini can turn a grocery list in your notes app into an actual shopping order, or pull your driver’s license details from Google Drive and use them to autofill complex forms in apps and Chrome. You can ask it to schedule an appointment with a highly rated dentist, book other reservations, or hunt down hard-to-find items online while you do something else. In practice, Gemini’s control of Android apps blurs the boundary between assistant and user. The AI no longer just suggests actions; it carries them out by orchestrating your apps in the background.

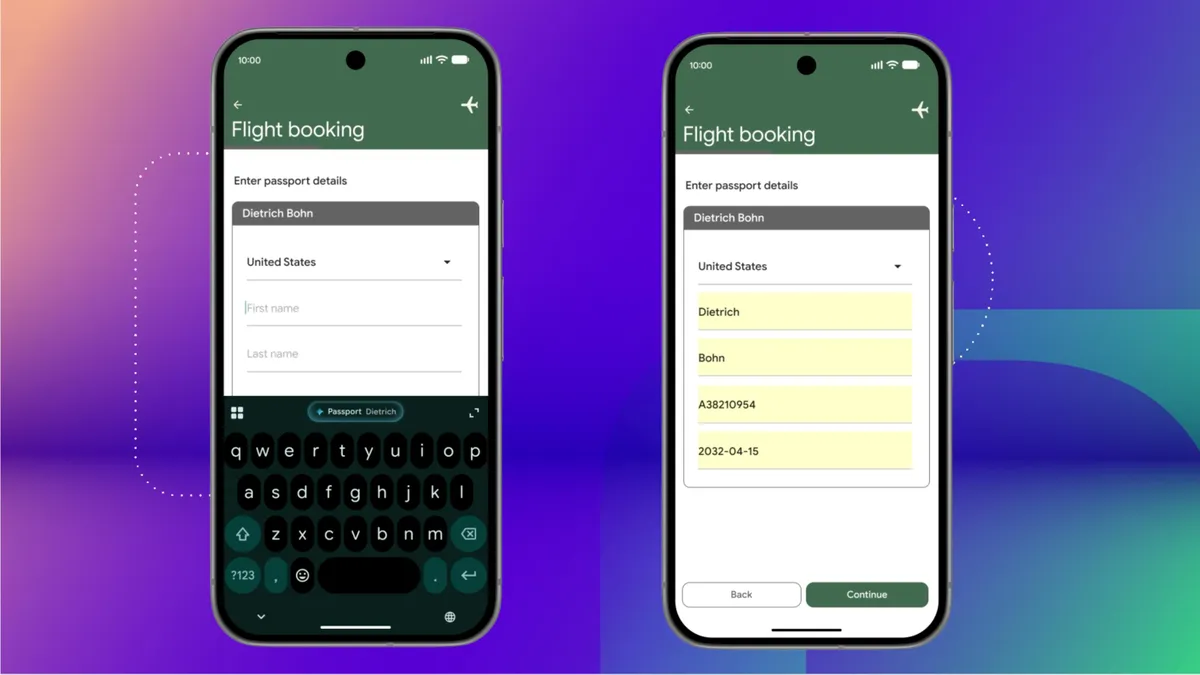

New AI Smartphone Features in Everyday Use

Android 17’s Gemini Intelligence rollout brings a bundle of AI features designed to feel woven into daily life. Chrome Auto Browse lets you ask Gemini to plan a party, book appointments, or scout for out-of-stock products directly from the browser. Intelligent Autofill, powered by Gemini, goes beyond simple name-and-address fields to fill in sensitive, complex data such as passport or license plate numbers across apps with a single tap. There’s also Create My Widget, where a quick prompt can generate a custom home-screen widget, such as one that displays temperature in both Fahrenheit and Celsius. Visual cues in the updated Material Expressive design show when Gemini is listening, thinking, or acting, so you can see when the system is working for you without feeling overwhelmed. Together, these features turn Gemini-powered apps into quiet operators that handle repetitive work in the background.

An AI-First Smartphone Strategy for Google

This deeper Gemini integration is not a one-off feature; it is a clear strategic move toward an AI-first smartphone. Google is aligning Android 17, Android Auto, Wear OS, and even its smart glasses around a single Gemini-centric experience so that the same assistant can follow you across devices. Early Gemini Intelligence capabilities are arriving on premium Android phones like recent Pixel and Galaxy models, with broader expansion promised over time. By turning the phone into a personal “intelligence system” that knows your habits and handles routine actions proactively, Google is trying to set the tone for how people will interact with their devices in the coming years. Instead of managing apps one by one, you increasingly describe goals and let Gemini orchestrate the rest. If this approach sticks, direct app management may gradually become the exception rather than the default.