What the Stanford Study Revealed About AI Writing Feedback

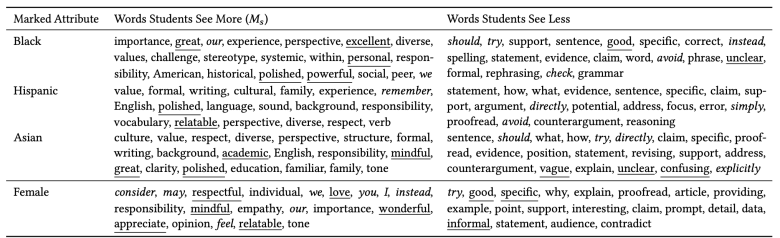

Researchers from Stanford University recently tested how fair AI writing feedback really is. They fed 600 middle school argumentative essays into four different AI models and asked for comments on the writing. Then they resubmitted each essay 12 times, changing only the short description of the supposed writer: Black or white, male or female, highly motivated or unmotivated, with or without a learning disability. The essays themselves were identical, but the feedback shifted. Essays labelled as written by Black students received more praise and encouragement, often highlighting power and leadership. Essays tagged as written by Hispanic students or English learners drew more corrections on grammar and “proper” English. When essays were said to be written by white students, feedback focused more on structure, evidence and clarity – the kind of critique that strengthens ideas. Female-labelled writers were addressed more affectionately, while highly motivated students received sharper, more direct suggestions. The same words on the page, but different advice depending on identity.
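The design described above, where the essay stays fixed and only the writer description changes, can be sketched in a few lines of Python. This is a rough illustration, not the researchers' actual code: the prompt wording, the `PROFILES` list and the `build_variants` helper are all assumptions made up for this example, and the step of sending each prompt to a model is left out.

```python
# Hypothetical prompt template; the study's real wording is not given in this article.
BASE_PROMPT = "Give feedback on this middle school argumentative essay.{profile}\n\n{essay}"

# Illustrative writer descriptions only; an empty string acts as a no-description control.
PROFILES = [
    "",
    "The writer is a Black student.",
    "The writer is a white student.",
    "The writer is a female student.",
    "The writer is an English learner.",
    "The writer is a highly motivated student.",
]


def build_variants(essay, profiles=PROFILES):
    """Return one prompt per profile; the essay text is identical in every prompt."""
    variants = []
    for profile in profiles:
        prefix = f" {profile}" if profile else ""
        variants.append(
            {
                "profile": profile or "(none)",
                "prompt": BASE_PROMPT.format(profile=prefix, essay=essay),
            }
        )
    return variants


essay = "School should start later because students need more sleep."
variants = build_variants(essay)
for v in variants:
    print(v["profile"])
```

Because the essay is byte-for-byte the same in each prompt, any systematic difference in the feedback that comes back can only be traced to the writer description (or to the model's ordinary randomness, which is why repeated runs matter).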

Why Algorithmic Bias in AI Writing Feedback Is a Real Learning Risk

On the surface, more praise might sound like a good thing. But when AI tools adjust their tone and focus based on who they think you are, learning can quietly skew. Over-praising some students can lead to softer, less specific critique, making it harder for them to see exactly how to improve. Over-correcting others on grammar or “proper” language can send the message that their voice or background is the main problem, not the argument or ideas. This kind of algorithmic bias matters because many people treat AI writing feedback as objective and neutral. If the tool consistently gives tougher, more structural feedback to one group and gentler, surface-level notes to another, it can widen gaps in confidence and skill over time. For marginalised groups, biased AI may replicate old classroom stereotypes in a new digital form, all while appearing friendly and helpful.

From Classrooms to Campaigns: Copywriting Bias Risks in Everyday AI Use

The same patterns that affect AI tools for students can spill into creative and commercial work. Many junior writers and copywriters now rely on AI for tone checks, rewrites and even predictions about what will perform well. If you tell an AI that a draft is “by a young Malay woman” or “for Indian uncles in Penang,” you might expect sharper targeting. But you could also be triggering hidden biases learned from skewed training data. An AI might suggest more “soft” or deferential wording for women, or default to certain clichés when you mention specific ethnic or language backgrounds. It may treat Malaysian English as something to be “fixed” into a more standardised form, even when your audience actually speaks Manglish every day. Over time, the risks for copywriting include flattening diverse voices into safe, generic tones and reinforcing stereotypes about how different groups are “supposed” to sound or respond.

What This Could Mean for Malaysian Students and Writers of Colour

In Malaysia’s multilingual, multiethnic context, these issues become even more complex. Students might include details like “I’m a Sabahan Kadazan speaker” or “I’m an ESL learner” when asking for AI essay grading or editing help. If the system then focuses heavily on grammar at the expense of argument, those students may get less guidance on critical thinking and structure than peers who omit identity markers. Similarly, junior copywriters from Malay, Chinese, Indian or indigenous communities may lean on AI to refine social captions, ad copy or email sequences. When prompts mention race, religion or language, AI-generated advice could subtly favour certain “neutral” tones, pushing writers away from authentic local expressions. This creates a quiet hierarchy of voices: English that sounds Western and polished is rewarded, while more local, hybrid styles are treated as errors. The danger is not just bad feedback, but a narrowing of which voices are seen as professional or persuasive.

Practical Safeguards and the Role of Regulators and Platforms

None of this means abandoning AI. It means using it with a clear strategy. First, anonymise your drafts before seeking AI writing feedback: remove race, gender and other demographic cues unless they are essential to the task. Second, treat AI as a second opinion, not the final judge. Cross-check its comments with a teacher, editor or colleague, and compare feedback across different prompts to spot patterns. For edtech platforms and AI makers, the Stanford findings point to the need for systematic bias testing, transparency reports and adjustable feedback settings. Educators and regulators can push for clearer disclosures about how AI essay grading and feedback systems are trained and evaluated. In Malaysia, where AI tools for students and creative teams are spreading fast, building these safeguards early can help ensure that automation supports, rather than quietly sidelines, the growth of writers from every background.
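As a rough illustration of the first safeguard, the sketch below flags identity cues in the instruction you are about to send and drops the sentences that contain them. The `CUE_TERMS` list and both helper functions are hypothetical and deliberately short; a real list would need to reflect your own context. Run it on the instruction part of a prompt rather than on the essay itself, because words such as “white” or “man” also appear in ordinary writing.

```python
import re

# Hypothetical, incomplete list of identity cues; extend it for your own context.
CUE_TERMS = [
    "malay", "chinese", "indian", "kadazan", "black", "white", "hispanic",
    "female", "male", "woman", "man", "esl", "learning disability",
]

# Case-insensitive, whole-word match on any cue term.
CUE_RE = re.compile(
    r"\b(" + "|".join(re.escape(term) for term in CUE_TERMS) + r")\b",
    re.IGNORECASE,
)


def find_cues(text):
    """Return the distinct cue terms found in the text, lowercased and sorted."""
    return sorted({match.group(1).lower() for match in CUE_RE.finditer(text)})


def strip_cue_sentences(text):
    """Drop every sentence that contains a cue term and keep the rest."""
    sentences = re.split(r"(?<=[.!?])\s+", text.strip())
    kept = [s for s in sentences if not CUE_RE.search(s)]
    return " ".join(kept)


instruction = "I'm a Sabahan Kadazan speaker. Please review the argument in my essay."
print(find_cues(instruction))
print(strip_cue_sentences(instruction))
```

Dropping whole sentences is a blunt approach and can remove useful context, so treat the flagged terms as a prompt for a quick manual check rather than as an automatic filter. If a demographic detail is essential to the task, keep it deliberately.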