From Developer Tool to No‑Code VR Development Platform

Meta’s Immersive Web SDK (IWSDK) began as a WebXR framework designed to simplify common VR development tasks such as physics, hand tracking, movement, grab interactions, and spatial UI. The latest update pushes it beyond a traditional developer library and toward a no‑code VR development platform. By embedding AI coding assistants directly into the workflow, IWSDK lets creators describe what they want in natural language while the system handles implementation details. Instead of wrestling with low‑level engineering, users can concentrate on interaction design, storytelling, and visual style. Because IWSDK runs on the open web, projects can be tested directly in a browser and shared via a simple URL. This combination of WebXR delivery and AI‑driven automation positions the toolkit as a bridge between professional developers and non‑technical creators who previously found VR tooling too complex to approach.

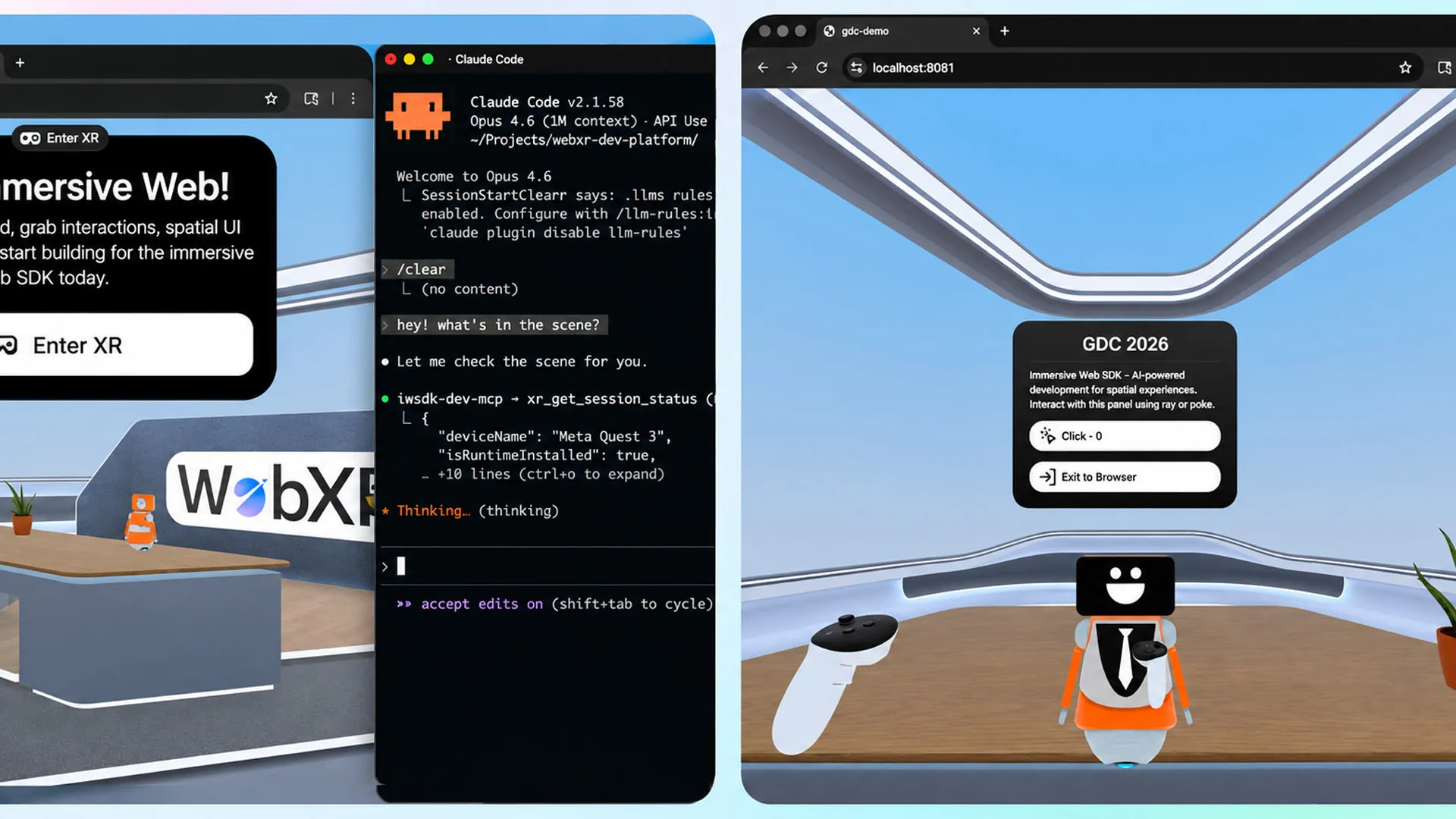

How Agentic AI Workflows Power AI‑Driven VR Creation

The core of Meta’s update is an “agentic workflow” that turns AI assistants into active collaborators rather than one‑off code generators. Tools such as Claude Code, Cursor, GitHub Copilot, and Codex are integrated so the AI can iteratively write, test, and validate code inside the IWSDK environment. Meta describes this as a closed‑loop system: the AI proposes changes, runs them, checks for errors, then refines the implementation until the experience behaves as intended. For VR authors, this moves AI‑driven VR creation from simple boilerplate generation to end‑to‑end project support. In Meta’s own demonstration, the team rebuilt the ‘Project Flowerbed’ gardening experience using its existing art assets. An application that previously comprised tens of thousands of lines of code was recreated in about 15 hours via the new workflow, which underscores how much engineering overhead the agentic process can offload.
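
The closed loop described above (propose, run, check, refine) can be sketched in a few lines of TypeScript. This is only an illustration of the control flow, not IWSDK's actual implementation: `runClosedLoop`, `CheckResult`, `LoopResult`, and the toy `propose` and `check` functions are all hypothetical names invented for this example, and a real setup would call an AI assistant and run the scene in a browser instead.

```typescript
// Hypothetical types for illustration only; none of these names come from IWSDK.
interface CheckResult {
  ok: boolean;
  errors: string[];
}

interface LoopResult {
  code: string;
  iterations: number;
  succeeded: boolean;
}

// propose: stands in for the AI assistant writing or revising code.
// check: stands in for running the experience and collecting errors.
function runClosedLoop(
  goal: string,
  propose: (goal: string, previousCode: string, errors: string[]) => string,
  check: (code: string) => CheckResult,
  maxIterations: number = 5,
): LoopResult {
  let code = "";
  let errors: string[] = [];
  for (let i = 1; i <= maxIterations; i++) {
    code = propose(goal, code, errors); // propose a change
    const result = check(code);         // run it and check for errors
    if (result.ok) {
      return { code, iterations: i, succeeded: true };
    }
    errors = result.errors;             // feed the errors into the next attempt
  }
  // Give up after a bounded number of attempts instead of looping forever.
  return { code, iterations: maxIterations, succeeded: false };
}

// Toy stand-ins: the "assistant" forgets a grab handler on its first attempt,
// and the "checker" reports that omission as an error.
const toyPropose = (goal: string, previous: string, errors: string[]): string =>
  errors.indexOf("missing grab handler") !== -1
    ? previous + "\naddGrabHandler();"
    : "// goal: " + goal + "\ncreateFlower();";

const toyCheck = (code: string): CheckResult =>
  code.indexOf("addGrabHandler") !== -1
    ? { ok: true, errors: [] }
    : { ok: false, errors: ["missing grab handler"] };

const outcome = runClosedLoop("a flower the user can pick up", toyPropose, toyCheck);
console.log(outcome.succeeded, outcome.iterations); // prints "true 2"
```

The iteration cap is a design choice of this sketch: it keeps a loop that never converges from running indefinitely, and the returned `succeeded` flag lets the caller decide whether a human needs to step in.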

Lowering Barriers for Designers, Artists, and New Creators

By embedding AI directly into a WebXR framework, Meta is targeting creators who may have strong concepts but limited programming expertise. IWSDK’s abstractions for interaction, physics, and spatial UI reduce the need to understand engine internals, while the agentic AI workflow translates natural‑language instructions into functioning VR scenes. Designers can iterate on layouts, artists can experiment with spatial storytelling, and educators can prototype interactive lessons without mastering JavaScript or WebXR APIs. The toolkit’s web‑first nature further reduces friction: experiences run in standard browsers, and Meta notes that over one million monthly users already access WebXR content on Quest, giving creators an immediate audience. As AI fills in technical gaps, the creative emphasis shifts toward narrative, pacing, and user comfort. By opening no‑code VR development to these groups, the toolkit could significantly expand who feels confident building immersive experiences.

Open‑Source Strategy and the Future of the Immersive Web

Meta’s decision to keep IWSDK open‑source under an MIT license aligns with its broader push to grow the immersive web ecosystem. An open codebase invites contributions from independent developers, toolmakers, and researchers who can extend the framework with new interaction patterns or AI capabilities. Because projects are delivered via WebXR, creators are not locked into a single app store or platform, and distribution via simple URLs makes experimentation low‑risk. For Meta, nurturing an ecosystem of AI‑assisted WebXR experiences strengthens the value of its hardware while encouraging cross‑device compatibility. For the wider community, it offers a shared foundation for building and sharing reusable components, templates, and AI prompts. As more non‑technical creators adopt IWSDK, it may become a de facto standard for browser‑based VR, blending open technology, AI assistance, and creator‑friendly workflows into a more accessible future for immersive content.