AI Memory Infrastructure Hits the Limits of Traditional Architectures

AI workloads, particularly large language models and agentic systems, are pushing memory systems harder than any previous wave of computing. Training and inference both rely on enormous working sets that must be kept as close to compute as possible, yet conventional server designs still treat memory as a fixed, socket-bound resource. DRAM shortages have amplified this tension, exposing how difficult it is to keep adding capacity through conventional DIMMs alone. At the same time, GPU-centric AI clusters are revealing a structural tradeoff: high-speed memory tiers like HBM and DRAM deliver nanosecond-scale latency but remain tightly capacity-constrained and expensive, while traditional storage offers scale at latencies unsuited to real-time inference. The result is a pronounced bottleneck in AI memory infrastructure, where recomputing or reloading context becomes a hidden tax on performance, power and total infrastructure footprint.

Compute Express Link and Memory Godboxes Make Memory a Pooled Resource

Compute Express Link (CXL) is emerging as a cornerstone of disaggregated, memory-centric data center designs. Initially introduced as a cache-coherent interface that rides on the PCIe physical layer, CXL now enables servers to tap into external “memory godboxes” that pool DRAM and expose it as a fungible resource across systems. Early CXL 1.x implementations made it possible to add expansion memory devices that appear to the operating system as additional NUMA nodes. With CXL 2.0, switching support allows this memory to be dynamically pooled and reallocated between servers, while CXL 3.0 goes further by enabling fabric-scale topologies and shared memory regions that multiple machines can access concurrently. Bandwidth scales with the underlying PCIe generation: at PCIe 6.0 signaling rates, a x16 link carries roughly 128 GB/s in each direction before protocol overhead, so a handful of links reaches hundreds of gigabytes per second, though with added latency comparable to a NUMA hop. Together, these capabilities underpin data center memory scaling without requiring every node to be overprovisioned with local DRAM.
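
As a rough illustration of how link bandwidth follows from the PCIe generation, the sketch below computes raw per-direction bandwidth from the per-lane signaling rate. The rates used (32 GT/s for PCIe 5.0, 64 GT/s for PCIe 6.0) are the published PCIe figures; the helper name `raw_link_gbytes_per_s` is purely illustrative, and the result ignores encoding, FLIT and protocol overhead, so achievable CXL throughput will be somewhat lower.

```python
# Raw per-lane signaling rates in GT/s (one bit per transfer per lane).
PCIE_GTS_PER_LANE = {"PCIe 5.0": 32, "PCIe 6.0": 64}


def raw_link_gbytes_per_s(generation: str, lanes: int = 16) -> float:
    """Raw one-direction bandwidth in GB/s, before encoding and protocol overhead."""
    # bits per second across all lanes, divided by 8 to get bytes
    return PCIE_GTS_PER_LANE[generation] * lanes / 8


if __name__ == "__main__":
    for gen in PCIE_GTS_PER_LANE:
        one_way = raw_link_gbytes_per_s(gen)
        print(f"{gen} x16: {one_way:.0f} GB/s per direction, "
              f"{2 * one_way:.0f} GB/s bidirectional")
```

Under these assumptions a single x16 link is on the order of a hundred gigabytes per second, so aggregate figures in the hundreds of gigabytes per second imply either counting both directions or combining several links.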

High-Density DDR5 RDIMM Technology Boosts Capacity and Power Efficiency

While CXL tackles disaggregation, advances in DDR5 RDIMM technology are attacking the problem at the module level. Micron’s 256GB DDR5 RDIMMs, built on its 1-gamma (1γ) DRAM process and advanced 3D stacking with through-silicon vias, are designed specifically for AI data centers that demand both capacity and efficiency. These modules deliver transfer rates of up to 9,200 megatransfers per second (MT/s), over 40 percent higher than current high-volume memory hardware. Just as importantly, replacing two 128GB RDIMMs with a single 256GB module can cut operating power by more than 40 percent, easing thermal and energy constraints in dense AI servers. That combination of bandwidth, capacity and power savings allows architects to push more CPU cores and AI agents per node without breaching power envelopes. Co-validation with current and next-generation server platforms is intended to accelerate adoption, turning high-capacity DDR5 RDIMMs into a foundational layer of modern AI memory infrastructure.
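
The speed claim can be sanity-checked with simple arithmetic. The sketch below makes two assumptions that are not stated in the article: a 6,400 MT/s baseline for today's high-volume modules, and a 64-bit data path per module (two 32-bit DDR5 subchannels, ECC bits excluded). The function names are illustrative only.

```python
BUS_BYTES = 8  # assumed 64 data bits per module (two 32-bit DDR5 subchannels)


def peak_gbytes_per_s(mega_transfers_per_s: int) -> float:
    """Theoretical peak module bandwidth in GB/s: transfers/s times bytes per transfer."""
    return mega_transfers_per_s * BUS_BYTES / 1000


def percent_uplift(new: float, baseline: float) -> float:
    """Relative increase of `new` over `baseline`, in percent."""
    return (new - baseline) / baseline * 100


NEW_MTS = 9200       # rate quoted for the 256GB RDIMM
BASELINE_MTS = 6400  # assumption: a common high-volume DDR5 speed grade

if __name__ == "__main__":
    print(f"Peak per-module bandwidth: {peak_gbytes_per_s(NEW_MTS):.1f} GB/s")
    print(f"Uplift over baseline: {percent_uplift(NEW_MTS, BASELINE_MTS):.2f}%")
```

With that assumed baseline the uplift works out to 43.75 percent, which is consistent with the "over 40 percent" figure; a different baseline would change the result.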

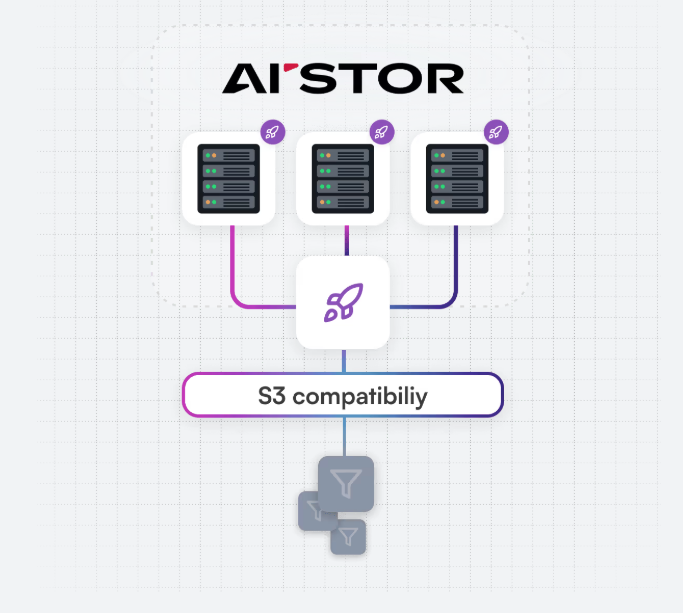

MemKV: A Petabyte-Scale Memory Store for AI Inference Context

Beyond training-time capacity, inference introduces its own memory challenges, especially as AI agents evolve toward multi-step reasoning and long-context interactions. MinIO’s MemKV targets this gap by providing a shared, persistent context memory store designed for petabyte-scale AI inference memory. In today’s architectures, limited HBM and DRAM capacity near GPUs often forces the system to discard context between inference cycles, incurring a recompute tax as GPUs regenerate past state. MemKV addresses this by offering microsecond-level retrieval of context at massive scale, effectively extending the memory tier without falling back to high-latency storage. In internal benchmarks on a 128-GPU deployment with 128K-token context windows, MinIO reports GPU utilization jumping from about 50 percent to over 90 percent, translating into substantial compute savings. Running on NVIDIA BlueField-4 STX and integrated with NVIDIA’s networking stack, MemKV enables entire GPU clusters to share context data efficiently across inference workloads.
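
To see why long contexts overflow GPU-local memory, and what the reported utilization change means, here is a minimal sketch. It assumes the stored context is a transformer key-value cache, which the article does not state explicitly, and it uses a hypothetical model shape (80 layers, 8 KV heads, head dimension 128, 16-bit values) chosen only for illustration. The utilization figures are the ones MinIO reports; everything else is an assumption.

```python
def kv_cache_bytes(tokens: int, layers: int, kv_heads: int,
                   head_dim: int, bytes_per_value: int = 2) -> int:
    """Size of a key-value cache: keys and values (factor of 2) for every
    layer, KV head and token."""
    return 2 * layers * kv_heads * head_dim * bytes_per_value * tokens


def throughput_gain(util_before: float, util_after: float) -> float:
    """Ratio of useful GPU work at two utilization levels on the same hardware."""
    return util_after / util_before


if __name__ == "__main__":
    tokens = 128 * 1024  # one 128K-token context window
    # Hypothetical model shape, not taken from the article.
    size = kv_cache_bytes(tokens, layers=80, kv_heads=8, head_dim=128)
    print(f"KV cache for one context: {size / 2**30:.0f} GiB")

    gain = throughput_gain(0.50, 0.90)  # utilization figures reported by MinIO
    print(f"Useful work per GPU: {gain:.1f}x")
```

Under these assumptions a single 128K-token context occupies about 40 GiB, so only a few concurrent contexts fit alongside model weights in HBM, and moving from roughly 50 to 90 percent utilization corresponds to about 1.8 times the useful work from the same GPUs.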

From Storage-Centric to Memory-Centric AI Infrastructure Design

Taken together, CXL-based memory fabrics, high-density DDR5 RDIMMs and specialized inference stores like MemKV signal a structural pivot in data center design. Instead of treating storage as the primary scaling vector and memory as a fixed, socket-local afterthought, AI infrastructure is becoming explicitly memory-centric. CXL memory godboxes dissolve the rigid link between servers and their DRAM, letting operators pool and share capacity across racks. Advanced DDR5 RDIMM technology increases per-node density and power efficiency, making it feasible to host larger models and more concurrent agents. MemKV extends the memory tier into a shared context layer tuned for long-running, multi-step AI tasks, closing the gap between low-latency memory and high-capacity storage. This new stack is not merely an optimization; it is a prerequisite for sustaining AI growth, enabling data center memory scaling that can keep up with ever-larger models and more demanding inference patterns.