OpenAI Splits Its Real-Time Voice API into Three Specialized Models

OpenAI has expanded its real-time voice API with three dedicated GPT-Realtime models, signaling a shift from generic chatbots to task-focused voice infrastructure. Instead of a single, monolithic model, developers now get a split stack: GPT-Realtime-2 for reasoning, GPT-Realtime-Translate for live translation, and GPT-Realtime-Whisper for streaming transcription. All three are exposed through the Realtime API and are designed to respond while people are still speaking, keeping latency low enough for natural conversation. This modular design matters for teams building live assistants, call flows, and tool-using agents, where not every step needs the same level of intelligence or cost. By separating reasoning, translation, and transcription, OpenAI aims to move orchestration complexity—such as context handling, interruptions, and tool calls—back into the model layer, giving developers more direct control over which workloads get deeper processing and which prioritize speed.

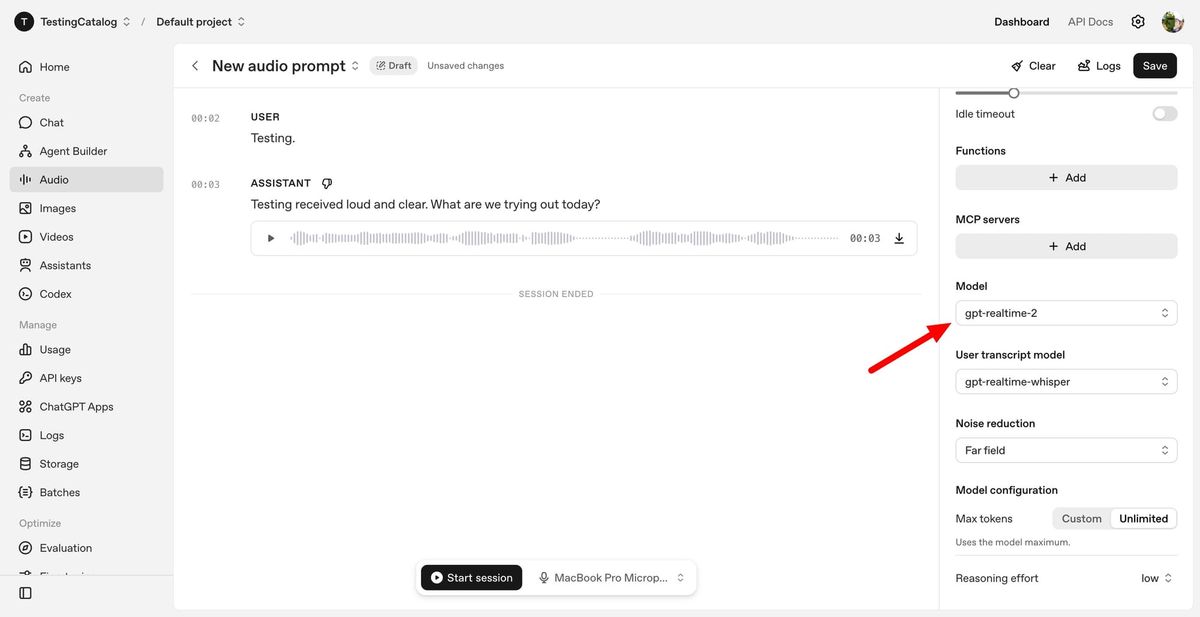

GPT-Realtime-2 Brings GPT-5-Class Reasoning to Live Voice Agents

GPT-Realtime-2 is the reasoning engine in OpenAI’s new audio lineup, built for live voice interactions that behave more like human conversations than scripted IVR trees. OpenAI describes it as using GPT-5-class reasoning, with a context window expanded from 32K to 128K tokens so it can track longer, more complex exchanges. The model can handle interruptions, follow-up questions, and topic shifts while continuing to manage tools in the background. Features like short spoken preambles—“let me check that”—help mask background processing, and parallel tool calls allow the system to perform multiple actions simultaneously. Developers can tune reasoning effort from minimal to xhigh, trading latency for depth when tasks become more demanding. Internal benchmarks show GPT-Realtime-2 outperforming GPT-Realtime-1.5 on audio intelligence and instruction-following tests, aiming to reduce brittle behavior where voice agents previously froze, lost context, or failed silently mid-task.

GPT-Realtime-Translate Targets Live Multilingual Conversations

GPT-Realtime-Translate focuses on live translation AI for voice, converting speech from more than 70 input languages into 13 output languages while keeping pace with the speaker. Designed for customer support, cross-border sales, education, events, and media localization, it aims to preserve meaning and context even when people talk quickly, change topics suddenly, or use regional pronunciations and domain-specific jargon. The model can provide translated speech and, where needed, real-time transcriptions, enabling experiences like bilingual customer-service calls or multilingual webinars without manual interpreters. Because translation is separated from deep reasoning, developers can pair GPT-Realtime-Translate with GPT-Realtime-2 only when advanced logic is required, keeping most translations fast and cost-efficient. Early adopters in telecom and media are testing it for multilingual voice interactions, highlighting how real-time voice APIs are becoming a core layer for global-facing products rather than an add-on convenience.

GPT-Realtime-Whisper Delivers Low-Latency Streaming Transcription

GPT-Realtime-Whisper extends OpenAI’s Whisper lineage into a streaming voice transcription API optimized for live workflows. Instead of waiting for recordings to finish, developers can capture text as participants speak, enabling live captions, real-time meeting notes, or voice-driven interfaces where text must update continuously. OpenAI positions the model for use cases like operations dashboards, creator tools, and productivity apps that rely on continuous speech recognition. Improvements over earlier Whisper-based offerings include lower latency and better handling of conversational dynamics such as overlapping speakers and shifting topics. In practice, GPT-Realtime-Whisper can sit alongside GPT-Realtime-2 and GPT-Realtime-Translate: one layer turns speech into text, another reasons over it, and a third converts it into another language when needed. This separation lets teams decide where to invest higher reasoning levels and where a lightweight, fast transcription pipeline is sufficient for their voice applications.

Why a Modular GPT-Realtime Stack Matters for Developers and Businesses

The GPT-Realtime models are designed as building blocks rather than a one-size-fits-all assistant, reflecting how voice AI is moving from novelty to operational infrastructure. Businesses are exploring three patterns—voice-to-action, systems-to-voice, and voice-to-voice—where spoken commands trigger workflows, software systems speak back, and two parties converse through an AI intermediary. By separating reasoning, translation, and transcription, OpenAI lets teams dial up model depth only where it pays off, such as complex troubleshooting or tool orchestration, while keeping more routine tasks fast and inexpensive. GPT-Realtime-2 can carry conversation state through interruptions and tool hops, reducing reliance on fragile session logic outside the model. Meanwhile, GPT-Realtime-Translate and GPT-Realtime-Whisper handle multilingual and transcription workloads as dedicated services. Together, they turn the real-time voice API into an extensible layer developers can embed across apps, call centers, and devices, opening room for richer voice-native experiences.