Real-World GPT-5.5 Pricing Undercuts Efficiency Narrative

OpenRouter’s April 2026 analysis of live API traffic shows that GPT-5.5 pricing behaves very differently in production than headline claims suggest. After customers migrated from GPT-5.4, effective costs for GPT-5.5 rose between 49 and 92 percent across prompt-size bands, even though OpenAI positioned the model as more token-efficient. The gap stems from how efficiency actually plays out once prompt and completion patterns stabilize at scale. OpenAI doubled its short-context list prices, from USD 2.50 (approx. RM11.50) per million input tokens and USD 15 (approx. RM69.00) per million output tokens for GPT-5.4 to USD 5 (approx. RM23.00) and USD 30 (approx. RM138.00) respectively for GPT-5.5, with an even higher GPT-5.5 Pro tier. At an unchanged token count, a request therefore costs twice as much, and any saving has to come from shorter completions. OpenRouter’s usage logs indicate that, for many enterprise workloads, completion behavior rather than nominal rates ultimately decides the bill.
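The list-price arithmetic can be sketched in a few lines of Python. This is a minimal illustration rather than OpenRouter's methodology: the prices are the short-context rates quoted above, the dictionary keys are plain labels (not confirmed API model identifiers), and the token counts are hypothetical.

```python
# List prices in USD per million tokens, as quoted in the article
# (short-context tier). The keys are labels, not confirmed API model IDs.
LIST_PRICES = {
    "gpt-5.4": {"input": 2.50, "output": 15.00},
    "gpt-5.5": {"input": 5.00, "output": 30.00},
}


def request_cost(model, input_tokens, output_tokens):
    """Return the list-price cost in USD of a single request."""
    price = LIST_PRICES[model]
    return (
        input_tokens * price["input"] + output_tokens * price["output"]
    ) / 1_000_000


# Hypothetical request: 1,500 prompt tokens and 400 completion tokens.
old_cost = request_cost("gpt-5.4", 1_500, 400)
same_tokens_cost = request_cost("gpt-5.5", 1_500, 400)
# Hypothetical completion that is about 7 percent longer on GPT-5.5.
longer_cost = request_cost("gpt-5.5", 1_500, 428)

print(f"GPT-5.4:                    ${old_cost:.5f}")
print(f"GPT-5.5, same tokens:       ${same_tokens_cost:.5f}")
print(f"GPT-5.5, longer completion: ${longer_cost:.5f}")
```

With identical token counts the GPT-5.5 request costs twice as much, so any increase in completion length pushes the effective multiplier above two.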

How GPT-5.5 Output Patterns Drive Higher AI Model Costs

The OpenRouter study shows that GPT-5.5’s token efficiency is highly context-dependent. For very long prompts above 10,000 tokens, GPT-5.5 produced 19 to 34 percent fewer completion tokens than GPT-5.4, which can offset higher unit prices in niche long-context workloads. However, in the crucial 2,000–10,000 token band, median completions jumped 52 percent, and even prompts under 2,000 tokens saw a 7 percent increase. This shift matters because most production workloads (retrieval assistants, coding copilots, workflow agents, and support bots) run mostly on short and mid-range prompts, not at the extreme context limits showcased in benchmarks. OpenRouter reports that average cost per million tokens nearly doubled for prompts under 2,000 tokens, rising from USD 4.89 (approx. RM22.50) to USD 9.37 (approx. RM43.00), while the cost for larger windows of 50,000–128,000 tokens still climbed from USD 0.74 (approx. RM3.40) to USD 1.10 (approx. RM5.00).
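The two ends of the 49 to 92 percent range follow directly from the per-band figures quoted above. A minimal check, using only the reported averages (the band labels are just dictionary keys chosen for this sketch):

```python
def pct_change(before, after):
    """Percentage change from `before` to `after`."""
    return (after - before) / before * 100


# Average cost per million tokens (USD) as reported for two prompt bands:
# (GPT-5.4 figure, GPT-5.5 figure).
reported = {
    "under 2,000 tokens": (4.89, 9.37),
    "50,000-128,000 tokens": (0.74, 1.10),
}

for band, (gpt_5_4_cost, gpt_5_5_cost) in reported.items():
    change = pct_change(gpt_5_4_cost, gpt_5_5_cost)
    print(f"{band}: {change:.1f}% increase")
```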

Production Workload Efficiency vs. Marketing Benchmarks

OpenAI maintains that GPT-5.5 is more token-efficient than GPT-5.4 and delivers similar per-token latency in real-world serving. Technically, both claims can be true while still masking higher enterprise costs. Controlled benchmarks typically isolate long-context tasks and single-turn prompts, minimizing retries, tool calls, and user reformulations. Actual production workloads are messier: short prompts are invoked repeatedly, tools are called and retried, and users ask follow-up questions. Each additional turn generates new output tokens, amplifying any tendency toward longer completions in the 2,000–10,000 token range. OpenRouter’s April data indicates that shorter answers at the longest contexts are not enough to neutralize the extra cost where most traffic resides. For buyers, this exposes a critical gap between benchmarked efficiency and the effective cost per interaction once systems go live.

Enterprise Budgeting: From List Prices to Token-Behavior Reality

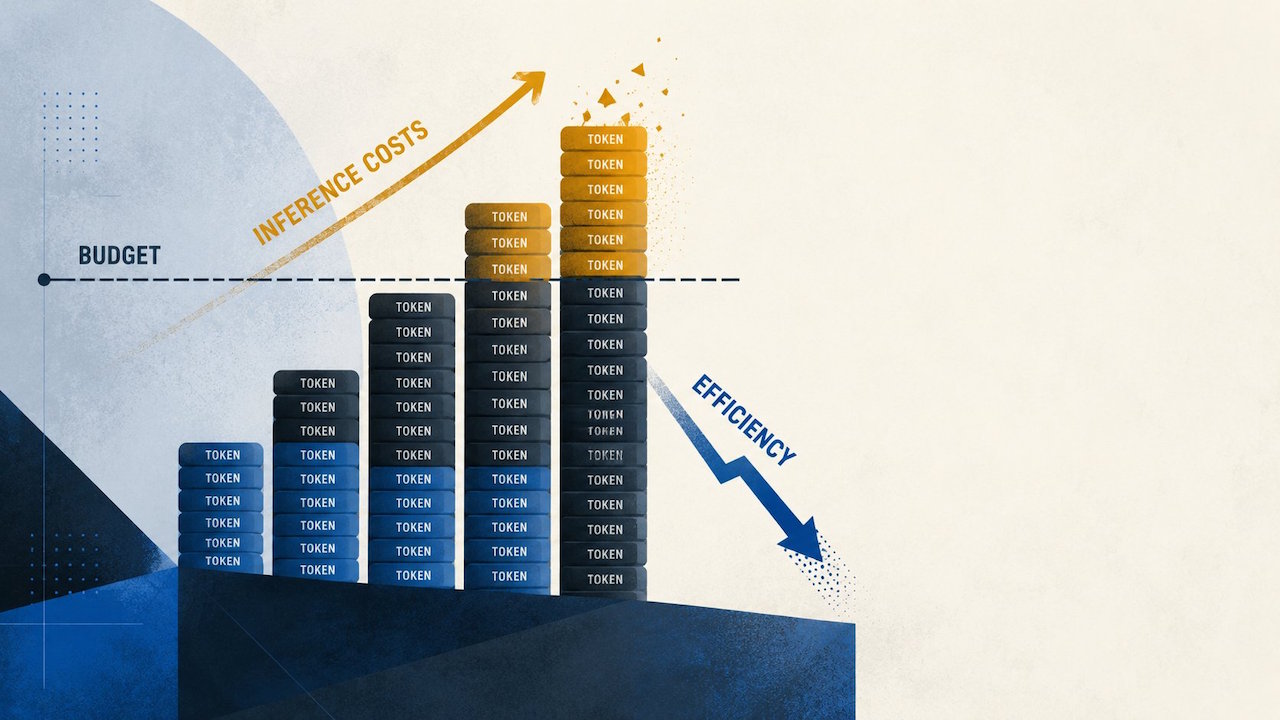

The discrepancy between list pricing and observed GPT-5.5 costs forces enterprises to rethink how they approve and manage AI spending. Finance teams may greenlight GPT-5.5 based on OpenAI’s rate card, assuming advertised efficiency gains will translate into savings. But platform engineers and product owners inherit the ongoing token bill, which is governed by prompt mix, completion drift, and user behavior. OpenRouter’s data shows that for prompts under 2,000 tokens, the average cost per million tokens rose 92 percent, and the 2,000–10,000 token band saw a 69 percent increase. Routing strategies therefore become budget levers: organizations may restrict GPT-5.5 to long-context jobs, premium user tiers, or narrow use cases where output compression genuinely dominates. Without production traces and controlled canary rollouts, adopting GPT-5.5 can quietly lock teams into a structurally higher monthly AI spend.
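A routing policy of this kind can be very small. The sketch below is a hypothetical illustration, not a recommendation from the OpenRouter analysis: the 10,000-token threshold is borrowed from the band where GPT-5.5 was reported to produce shorter completions, and the model labels and the `premium_user` flag are assumptions made for the example.

```python
# Hypothetical threshold: above it, the study reports that GPT-5.5
# produced fewer completion tokens than GPT-5.4.
LONG_CONTEXT_THRESHOLD = 10_000

# Labels used for illustration only, not confirmed API model IDs.
DEFAULT_MODEL = "gpt-5.4"
PREMIUM_MODEL = "gpt-5.5"


def choose_model(prompt_tokens, premium_user=False):
    """Pick a model label from the prompt size and the user's tier."""
    if premium_user:
        # Premium tiers absorb the higher per-request cost by design.
        return PREMIUM_MODEL
    if prompt_tokens > LONG_CONTEXT_THRESHOLD:
        # Long-context jobs, where output compression may offset the price.
        return PREMIUM_MODEL
    return DEFAULT_MODEL


print(choose_model(1_200))                     # short prompt, standard tier
print(choose_model(40_000))                    # long-context job
print(choose_model(1_200, premium_user=True))  # premium tier
```

In practice the threshold would be tuned against an organization's own production traces rather than taken from a published band boundary.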

Implications for Model Selection and Pricing Transparency

The OpenRouter telemetry also reframes the broader competitive landscape. A separate comparison cited in the analysis notes that GPT-5.5’s base output pricing sits above Anthropic’s Claude Opus 4.7, while input pricing remains roughly comparable. Anthropic, for its part, warns that its newer tokenizer can consume more tokens depending on content, underscoring that list rates alone rarely predict real AI model costs. For enterprises, the GPT-5.5 experience highlights a need for deeper pricing transparency around tokenization, response length distributions, and expected completion ratios across prompt bands. Procurement and architecture teams should treat model selection as an empirical exercise: collect production traces, simulate user behavior, and evaluate effective cost per resolved task, not per token. Until vendors expose clearer guidance on how their models behave across realistic workloads, organizations will have to build their own observability layers to keep enterprise AI expenses under control.
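Effective cost per resolved task can be estimated from call-level traces. The following is a minimal sketch under stated assumptions: the `Call` record, its field names, and the sample traces are hypothetical, and the prices are the GPT-5.5 list rates quoted earlier in the article. Every call counts toward the bill, including retries and calls for tasks that were never resolved.

```python
from dataclasses import dataclass


@dataclass
class Call:
    """One API call taken from a production trace (hypothetical schema)."""
    task_id: str
    input_tokens: int
    output_tokens: int


def cost_per_resolved_task(calls, resolved_task_ids, input_price, output_price):
    """Total spend in USD divided by the number of resolved tasks.

    Prices are in USD per million tokens.
    """
    if not resolved_task_ids:
        raise ValueError("no resolved tasks to divide the spend by")
    total_usd = sum(
        call.input_tokens * input_price + call.output_tokens * output_price
        for call in calls
    ) / 1_000_000
    return total_usd / len(resolved_task_ids)


# Hypothetical trace: task "a" needed a retry, task "b" was never resolved.
calls = [
    Call("a", 1_000, 200),
    Call("a", 1_200, 300),
    Call("b", 800, 100),
]
cost = cost_per_resolved_task(
    calls, {"a"}, input_price=5.00, output_price=30.00
)
print(f"USD {cost:.4f} per resolved task")
```

Dividing by resolved tasks rather than by tokens makes retries and abandoned conversations visible in the metric, which a per-token rate hides.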