Gemini Omni Surfaces as a Unified AI Video Editing Model

Gemini Omni is emerging as Google’s next step toward unified AI video editing, quietly appearing in a revised Gemini interface ahead of its formal debut. A new model card describes the experience as “Create with Gemini Omni,” promising the ability to remix videos, edit directly in chat, and apply templates within a single surface. Early access appears limited and possibly accidental, with usage limits and fast‑burning credits hinting at a metered access model. Initial reactions suggest that Omni’s raw video generation quality lags behind leading rivals, particularly in cinematic fidelity. Those same reactions point to editing as its real strength: removing watermarks, swapping objects in clips, and rewriting scenes based on natural language instructions. This mirrors Google’s earlier approach with its Nano Banana image model, which prioritised powerful edits over headline‑grabbing generation quality as Google built out a cohesive multimodal Gemini stack.

From Image Playbook to Video: Why Editing Comes First

The early Gemini Omni features indicate that Google is deliberately optimising for AI video editing rather than chasing the most photorealistic video generation from day one. By investing in robust edit‑in‑chat capabilities (replacing elements, adjusting scenes, and applying structured templates), Google is betting that creators value control and iteration more than purely novel clips. This strategy echoes the trajectory of Nano Banana, which launched as a native Gemini image model with average generation quality but standout editing performance before maturing into a more advanced system. Hints of tiered Omni variants, likely Flash and Pro, also suggest that Google will offer different balances of performance and cost for different creator needs. For working editors and social video teams, the real appeal is not abstract benchmarks but shaving minutes or hours off repetitive cuts, clean‑ups, and revisions that previously required manual keyframe work inside traditional timelines.

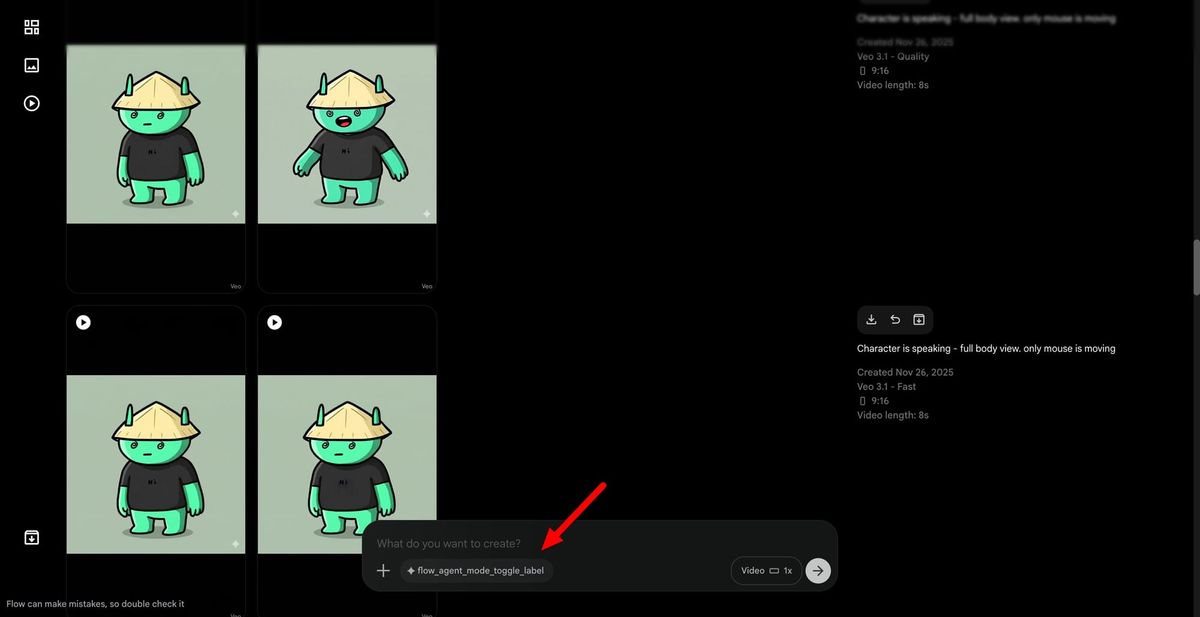

Flow’s Agent Mode Turns Chat Into a Video Production Console

Alongside Gemini Omni, Google is preparing a new Agent Mode for Flow, its video tool built on the Veo model family, pushing automated video production deeper into the workflow. Hidden references in recent builds describe a toggle on the prompt bar that lets creators decide when to hand control to an AI video assistant. Once enabled, this agent can plan scenes, discuss in‑progress changes, trigger generation workflows, and manage both project‑level and app‑level creative tools directly from a chat interface. Instead of manually bouncing between panels to storyboard, queue clips, and swap reference assets, filmmakers could describe ideas conversationally and watch the agent assemble and revise the project. This pattern mirrors a broader trend across Google’s creative products, such as Stitch, where an embedded agent coordinates multi‑step tasks, positioning conversation as the central interface for complex, iterative media work.

How AI Video Assistants Could Reshape Creator Workflows

Taken together, Gemini Omni and Flow’s Agent Mode reveal Google’s vision of AI video assistants as orchestration layers, not just effect engines. Omni brings multimodal intelligence into the edit itself—interpreting prompts, manipulating scenes, and unifying tools—while Flow’s agent coordinates the higher‑level process of planning and progressing entire projects. For creators, this could mean less time spent on mechanical editing and more on refining narratives, aesthetics, and brand voice. Routine tasks such as rough cuts, asset swaps, and small visual fixes could be delegated to automated video production pipelines guided by simple chat instructions. At the same time, the presence of usage limits and credit‑based access suggests that creators will have to balance AI convenience with resource management. As these systems mature, the real competitive edge may go to those who learn to “direct the director,” using natural language to steer AI without surrendering creative control.