AI Agent Hype Meets Near-Zero Enterprise Adoption

AI agents promise autonomous workflows and task absorption, but enterprise AI deployment remains nascent. At the AI Agent Conference, investors such as Jai Das argued that enterprise AI adoption sits at “zero or maybe at one” on a ten-point scale, even as the event itself grew to around 3,000 attendees. Startups and frameworks pitch agentic platforms that automate sales follow-ups or marketing workflows, while conference organizers describe a “next wave” of technology that removes tasks rather than adding more. Yet production AI agent adoption is limited to narrow domains like customer support, where T-Mobile’s agents handle hundreds of thousands of conversations daily after a year-long build. The contrast is stark: rapid prototyping and glossy demos versus the painstaking work needed to make AI agent production safe, reliable, and auditable across diverse enterprise environments.

Imperfect Data Is a Bottleneck, Not a Blocker

Enterprises often delay AI agent production because their data estates are messy, fragmented, and incomplete. Joe Rose of JBS Dev challenges the assumption that data quality must be pristine before meaningful generative or agentic workloads can launch. Modern large language models exhibit surprising robustness, extracting value from half-written prompts and heterogeneous records. In one medical billing project, gen AI stitched together PDFs, images, and inconsistent fields—sometimes the doctor’s name, sometimes the procedure—then used agentic workflows to compare patient records with insurance contracts. The outcome was not flawless and still required a human in the loop, but it showed that imperfect data can support real business workflows. The lesson for data quality machine learning initiatives is pragmatic: data issues are a critical bottleneck to manage with guardrails, validation, and escalation paths, not an absolute blocker that justifies endless transformation programmes before deploying any AI.

Security, Simulation, and Human Oversight in Production Agents

As AI agents inch toward enterprise AI deployment, security and testing have become central concerns. Datadog’s chief scientist Ameet Talwalkar warns that agent-generated “vibe-coded” software cannot simply be trusted in production; reviewing and validating this code is now harder than building traditional systems. Datadog is responding by extending observability tools to model real-world systems and predict issues before they occur. Framework vendors echo this shift. CrewAI’s founder notes that customer demand pushed them from pure agent-building toward enterprise-grade security and governance features. Simulation is another emerging pillar: ArklexAI’s ArkSim product generates synthetic user interactions to stress-test non-deterministic agents, particularly customer-facing chatbots. Leaders acknowledge that one can spin up an agent in minutes, but predicting its behavior with thousands of users requires simulated environments plus human oversight. This triad—security controls, simulation testing, and humans in the loop—is becoming the minimum bar for credible AI agent production in enterprises.

The AI Last Mile: From Model Capability to Cost Sustainability

Even when models and agents work technically, many organisations stall at the “AI last mile”: making systems economically viable and operationally sustainable. JBS Dev’s Joe Rose highlights how expectations shaped by traditional software—“we build it, it works, we forget about it”—clash with probabilistic AI systems that require ongoing monitoring, retraining, and human intervention. Tooling can now compensate for poor data quality, but every layer of guardrails, human review, and evaluation adds operational cost. Enterprises must therefore design workflows where humans focus on edge cases and high-risk decisions, while agents reliably handle routine tasks. Without this balance, AI cost sustainability suffers, eroding the ROI narrative behind enterprise AI deployment. The path forward involves carefully scoped use cases, explicit tolerances for error, and metrics that track not just accuracy but end-to-end unit economics of AI-assisted processes, from infrastructure spend to human review time.

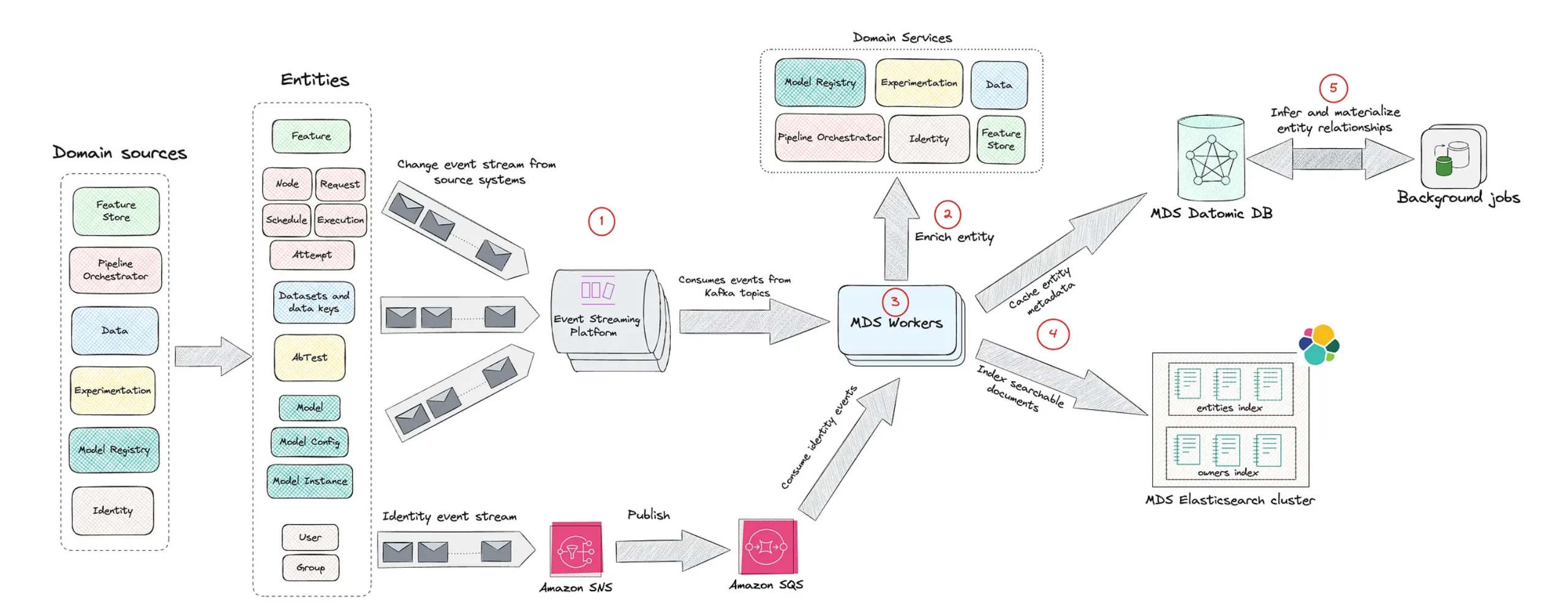

Netflix’s Model Lifecycle Graph and the Future of ML Governance

As enterprises scale machine learning, the challenge shifts from building individual models to managing sprawling ecosystems of datasets, features, workflows, and services. Netflix’s Model Lifecycle Graph exemplifies emerging ML model lifecycle management strategies. Instead of treating pipelines as linear, Netflix models ML assets as a graph of nodes and relationships, capturing dependencies between datasets, feature sets, models, evaluations, and production systems. This metadata-centric approach improves discoverability—teams can find and reuse existing assets—and clarifies lineage, so engineers see how an upstream data change cascades to downstream models and applications. For AI agents, which often orchestrate multiple models and data sources, such graphs are vital. They underpin governance, impact analysis, and incident response, enabling enterprises to trace outcomes back through complex chains of ML components. Netflix’s architecture signals where AI agent production must head: toward rich, interconnected metadata layers that make complex AI environments observable, governable, and auditable at scale.