From AI Hype to AI Slop: Why Reliability Is Now the Main Feature

AI coding agents are no longer experimental sidekicks; they are increasingly woven into day-to-day software work. That shift is exposing a hard problem: agents can generate code faster than teams can safely review it. When vague prompts become vague specifications, agents fill the gaps with undocumented assumptions, creating what critics now call “AI slop”: code that compiles but hides contradictions, missing requirements, or subtle misbehaviors. After early incidents and public scrutiny around agent reliability, major platforms are shifting their focus from raw capability to structured control. Instead of simply making agents write more code, AWS, GitHub, OpenAI, and Anthropic are building scaffolding around them: formal requirement checks, spec-driven development workflows, automated orchestrators, and open toolkits for AI alignment testing. Together, these tools are reframing the conversation from “Can agents code?” to “Can we systematically verify what they are doing before it reaches production?”

AWS Kiro Turns Requirements into Math Problems, Not Just Prompts

Amazon Web Services is going after AI slop at its source: flawed or ambiguous requirements. Its Kiro AI coding tool now includes a Requirements Analysis feature that runs before any agent writes a line of code. The system uses a large language model to translate natural-language requirements into formal logic, then feeds that logic into an SMT (satisfiability modulo theories) solver, an automated reasoning engine that can mathematically prove whether requirements contradict each other or leave exploitable gaps. By catching inconsistencies up front, Kiro targets some of the hardest and most expensive bugs to fix: those baked into the specification, not the implementation. AWS’s applied scientists warn that every vague prompt leads to a vague spec and hidden agent decisions. Requirements Analysis aims to expose those decisions, turning fuzzy intent into checkable structure and tying AI coding agents directly into a more rigorous code quality verification pipeline.
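To make the idea of requirements that contradict each other concrete, here is a minimal sketch in Python. It is not Kiro's implementation and it does not use an SMT solver: it hand-encodes three hypothetical requirements as boolean constraints and brute-forces every assignment, which is only workable for a handful of variables. The requirement texts, variable names, and helper functions are all assumptions made for illustration.

```python
from itertools import combinations, product

# Hypothetical state variables a requirements document might talk about.
VARIABLES = ["logs_requests", "logs_contain_pii"]

# Hypothetical requirements, hand-translated into boolean constraints.
# Each constraint takes an assignment dict and returns True if it holds.
REQUIREMENTS = {
    "R1: every request is logged":
        lambda s: s["logs_requests"],
    "R2: logs never contain personal data":
        lambda s: not s["logs_contain_pii"],
    "R3: logged requests include the user's email":
        lambda s: (not s["logs_requests"]) or s["logs_contain_pii"],
}


def find_model(requirements, variables):
    """Return one assignment that satisfies every requirement, or None."""
    for values in product([False, True], repeat=len(variables)):
        state = dict(zip(variables, values))
        if all(check(state) for check in requirements.values()):
            return state
    return None


def minimal_conflicts(requirements, variables):
    """Return the smallest groups of requirements that cannot all hold."""
    names = list(requirements)
    for size in range(1, len(names) + 1):
        conflicts = [
            group
            for group in combinations(names, size)
            if find_model({n: requirements[n] for n in group}, variables) is None
        ]
        if conflicts:
            return conflicts
    return []


if __name__ == "__main__":
    if find_model(REQUIREMENTS, VARIABLES) is None:
        for group in minimal_conflicts(REQUIREMENTS, VARIABLES):
            print("These requirements cannot all be true:")
            for name in group:
                print("  ", name)
```

Any two of these requirements can be satisfied together; only the full set of three is contradictory, which is the kind of conflict that is easy to miss when reading requirements one at a time. A real solver reaches the same verdict by logical reasoning rather than enumeration, so it scales to integers, strings, and far larger requirement sets.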

GitHub’s Spec-Kit Makes Agents Follow the Spec, Not Improvise

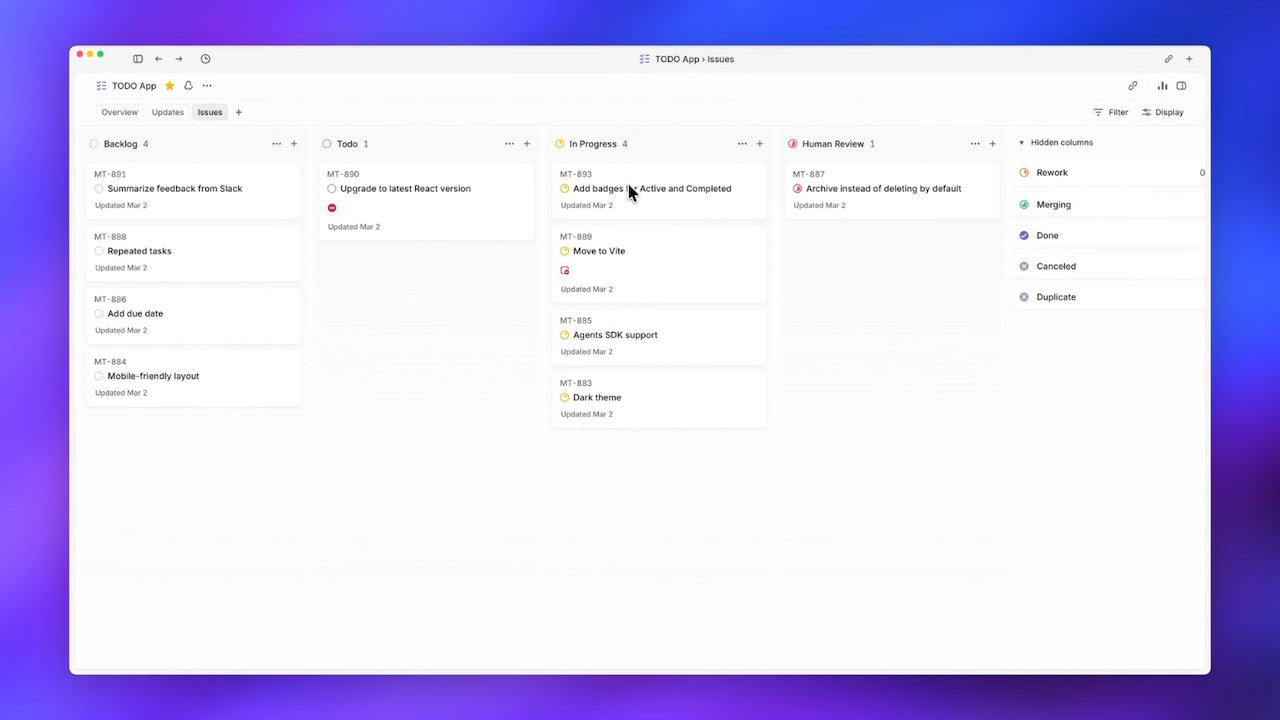

GitHub is tackling AI agent reliability with Spec-Kit, an open-source toolkit designed for spec-driven development. Rather than asking an AI coding agent to handle a feature from a single, monolithic request, Spec-Kit structures work into a pipeline: Specify, Plan, Tasks, and Implement. A feature idea first becomes a written spec, then a detailed plan, then a list of discrete tasks before any implementation steps are generated. This workflow pushes teams to externalize intent and assumptions, giving both humans and agents a shared source of truth. With more than 90,000 stars and over 8,000 forks before its full open-source release, Spec-Kit is already attracting teams willing to trade raw speed for predictable behavior. The challenge now is operational: managing installation details, Python dependencies, and extension drift so that spec-driven development becomes a practical standard for AI-assisted projects rather than an aspirational pattern.
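The stage ordering described above can be pictured as a simple gate: no stage may run until every earlier stage has produced an artifact. The Python sketch below illustrates that idea only. It is not Spec-Kit's API or command set, and the `FeatureWorkflow` class, its methods, and the sample artifacts are hypothetical.

```python
from dataclasses import dataclass, field

# Stage names taken from the pipeline described in the article.
STAGES = ("specify", "plan", "tasks", "implement")


@dataclass
class FeatureWorkflow:
    """Hypothetical tracker that refuses to skip ahead in the pipeline."""

    name: str
    artifacts: dict = field(default_factory=dict)

    def next_stage(self):
        """Return the first stage without an artifact, or None when done."""
        for stage in STAGES:
            if stage not in self.artifacts:
                return stage
        return None

    def record(self, stage, artifact):
        """Store the artifact for `stage` if it is the next stage in order."""
        if stage not in STAGES:
            raise ValueError(f"unknown stage: {stage!r}")
        expected = self.next_stage()
        if stage != expected:
            raise RuntimeError(
                f"cannot run {stage!r}; next required stage is {expected!r}"
            )
        if not artifact.strip():
            raise ValueError(f"stage {stage!r} produced an empty artifact")
        self.artifacts[stage] = artifact


if __name__ == "__main__":
    wf = FeatureWorkflow("password-reset")
    try:
        wf.record("implement", "def reset(): ...")  # skipping ahead is rejected
    except RuntimeError as err:
        print("blocked:", err)
    wf.record("specify", "Users can reset a forgotten password by email.")
    wf.record("plan", "Add a token table, a mail job, and a reset endpoint.")
    wf.record("tasks", "1. migration 2. mailer 3. endpoint 4. tests")
    wf.record("implement", "def reset(): ...")
    print("remaining stage:", wf.next_stage())
```

The design choice worth noting is that the written artifacts, not the agent's memory of the conversation, decide what is allowed to happen next. That is what gives humans and agents a shared source of truth to review.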

Anthropic’s Petri 3.0 and OpenAI’s Symphony Bring Production Discipline

While AWS and GitHub focus on how agents start coding, Anthropic and OpenAI are tightening how they are evaluated and orchestrated in real environments. Anthropic’s Petri 3.0, now stewarded by Meridian Labs, separates the auditor and target models so evaluators can be tuned independently of the systems under test. The update adds Dish and Bloom-based behavior checks, making AI alignment testing more modular and production-aware for deployed models. OpenAI’s Symphony tackles a different bottleneck: human attention. Released as an open-source specification with an Elixir reference implementation, Symphony lets Codex agents pull tickets directly from Linear, treating the ticket system as a state machine. Each ticket gets its own agent, and crashed agents are automatically respawned. The setup reportedly produced a sixfold increase in merged pull requests over three weeks. Together, Petri and Symphony show how structured evaluation and orchestration can scale AI agents without surrendering oversight.
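The orchestration pattern described for Symphony (a ticket as a state machine, one agent per ticket, crashed agents respawned) can be sketched in a few lines. The Python below is an illustration under stated assumptions, not Symphony's specification or its Elixir reference: the state names, the `run_ticket` supervisor, the `max_crashes` limit, and the escalation to a human are all hypothetical, and the agent is simulated.

```python
from enum import Enum


class State(Enum):
    # Hypothetical ticket states; real trackers define their own.
    TODO = "todo"
    IN_PROGRESS = "in_progress"
    IN_REVIEW = "in_review"
    DONE = "done"
    NEEDS_HUMAN = "needs_human"


# Allowed transitions. States missing from this map are terminal.
TRANSITIONS = {
    State.TODO: {State.IN_PROGRESS},
    State.IN_PROGRESS: {State.IN_REVIEW},
    State.IN_REVIEW: {State.DONE, State.IN_PROGRESS},
}


class AgentCrash(Exception):
    """Raised by a (simulated) agent that died mid-task."""


def run_ticket(ticket_id, spawn_agent, max_crashes=3):
    """Drive one ticket to a terminal state, respawning its agent on crash."""
    state = State.TODO
    history = [state]
    crashes = 0
    agent = spawn_agent(ticket_id)  # one dedicated agent per ticket
    while state in TRANSITIONS:
        try:
            proposed = agent(state)
        except AgentCrash:
            crashes += 1
            if crashes >= max_crashes:
                state = State.NEEDS_HUMAN  # assumed escalation path
                history.append(state)
                break
            agent = spawn_agent(ticket_id)  # respawn and retry the same state
            continue
        if proposed not in TRANSITIONS[state]:
            raise ValueError(f"illegal transition {state} -> {proposed}")
        state = proposed
        history.append(state)
    return history, crashes


def make_flaky_spawner(crash_first_n):
    """Build a spawner whose agents crash on the first N calls overall."""
    remaining = {"crashes": crash_first_n}
    happy_path = {
        State.TODO: State.IN_PROGRESS,
        State.IN_PROGRESS: State.IN_REVIEW,
        State.IN_REVIEW: State.DONE,
    }

    def spawn(ticket_id):
        def agent(state):
            if remaining["crashes"] > 0:
                remaining["crashes"] -= 1
                raise AgentCrash(ticket_id)
            return happy_path[state]

        return agent

    return spawn


if __name__ == "__main__":
    history, crashes = run_ticket("T-1", make_flaky_spawner(2))
    print([s.value for s in history], "crashes:", crashes)
```

Because progress is recorded as the ticket's state rather than inside the agent, a respawned agent can resume from the last recorded state instead of starting over. The crash limit keeps a persistently failing ticket from looping indefinitely and hands it to a person.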

Toward a Verified Future for AI Coding Agents

Taken together, these efforts point to an industry-wide shift: preventing AI slop through up-front structure and continuous verification, not after-the-fact firefighting. AWS is hardening the very notion of requirements with formal methods. GitHub is normalizing spec-driven development workflows so AI coding agents operate within explicit plans and task lists. Anthropic’s Petri 3.0 turns AI alignment testing into a modular, open toolbox for assessing real deployments, while Meridian Labs is tasked with broadening its trust and usability. OpenAI’s Symphony shows that coordination and automation can multiply throughput, but only when paired with clear workflows and guardrails. For engineering leaders, the emerging message is that AI agent reliability won’t come from a single smarter model. It will come from an ecosystem of verification, orchestration, and alignment tools that make agents legible, testable, and accountable before their code touches production.