Why Use an ESP32-S3 for an AI Voice Assistant?

The ESP32-S3 is a capable yet affordable microcontroller that can handle on-device AI tasks, which makes it a good fit for a local voice assistant. With its dual-core processor, generous memory (boards such as the FireBeetle 2 ESP32-S3 N16R8 pair 16 MB of flash with 8 MB of PSRAM), and support for high-speed peripherals, it can manage audio input, AI inference, and display rendering without relying on the cloud. This means your assistant can respond with low latency and improved privacy, because no audio needs to be sent to external servers. In projects like the Xiaozhi-based assistant, the ESP32-S3 runs a compact local language model, with firmware built using Espressif’s ESP-IDF toolchain. The result is a self-contained voice assistant that listens through a MEMS microphone, processes your commands locally, and speaks back through an I2S amplifier and speaker while showing status on a small color display.

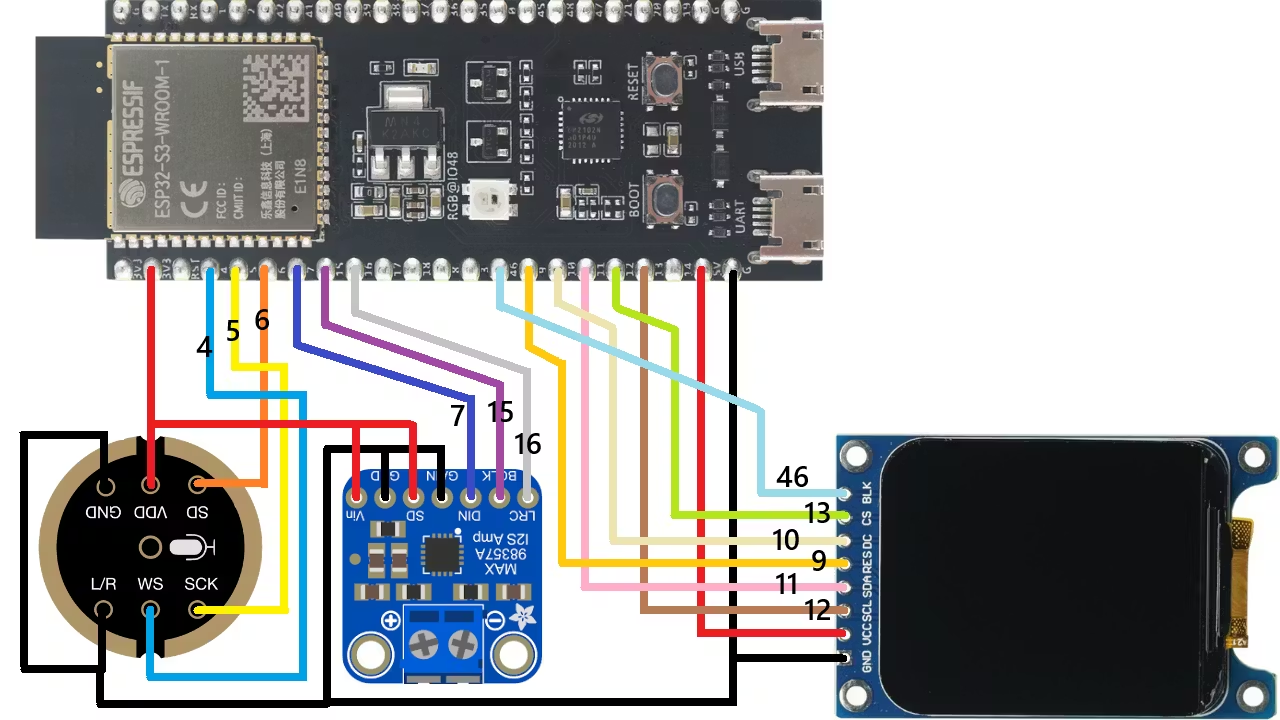

Hardware Setup: Microphone, Amplifier, and ST7789 Display

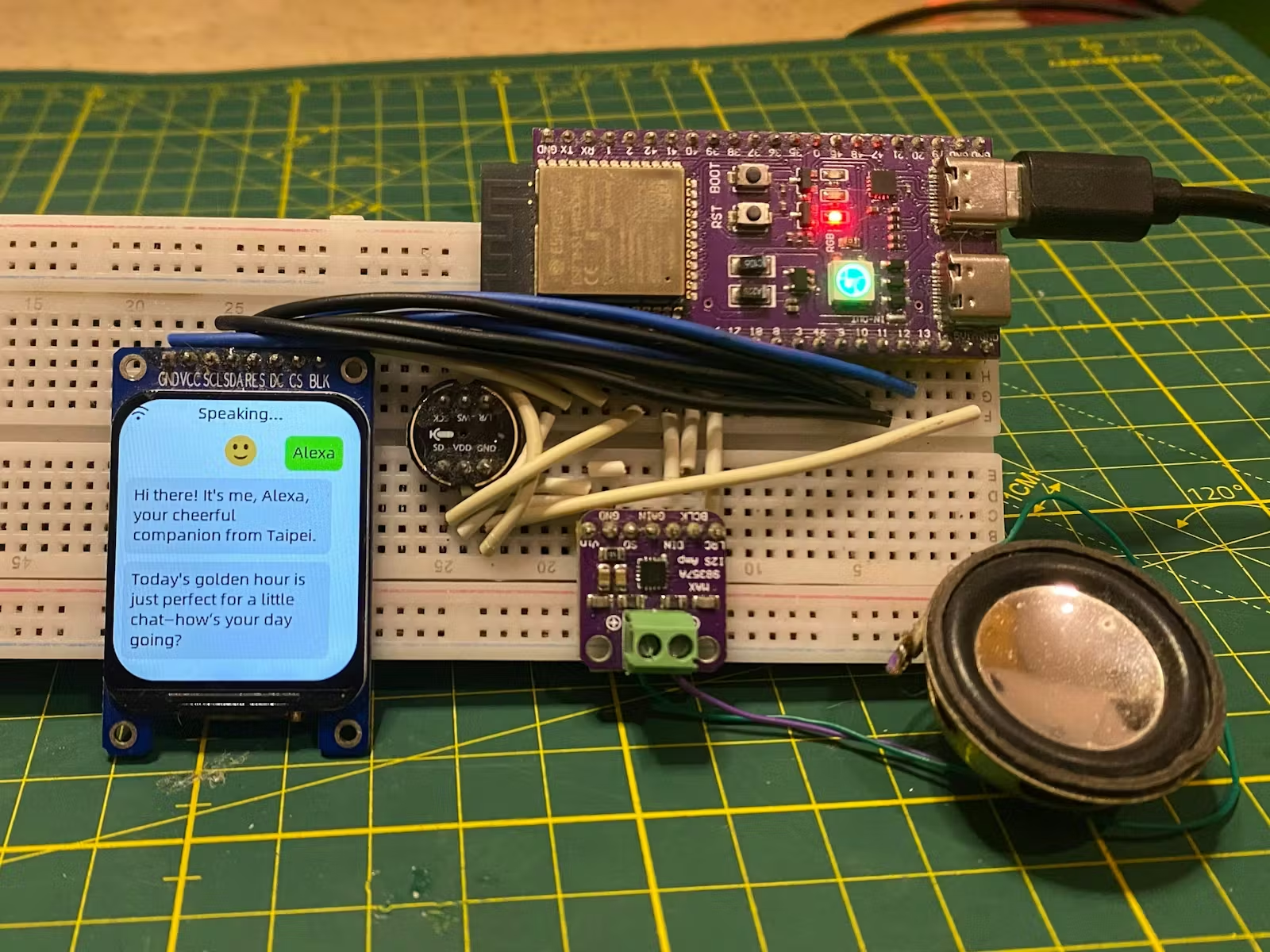

To build a functional ESP32 voice assistant, you need three core hardware blocks: audio in, audio out, and visual feedback. For audio input, an INMP441 MEMS microphone connects to the ESP32-S3 via I2S and delivers digital audio directly to the microcontroller, so no external ADC is needed. For audio output, a MAX98357A I2S amplifier drives a small speaker, turning the assistant’s responses into audible speech. Visual feedback comes from an ST7789 TFT display, which connects over SPI and can show real-time chatbot messages, listening indicators, and system status. Following the wiring schematic from the Xiaozhi project, you place everything on a breadboard with jumper wires, keeping the I2S and SPI lines short and tidy. This combination of components gives your assistant the same multimodal feel as a smart speaker with a screen: you can see what it heard, what it’s thinking, and how it replied.
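The audio-input path described above can be illustrated with a small, host-testable C sketch. It assumes the I2S peripheral is configured for 32-bit slots and that each slot carries the INMP441's 24-bit sample left-justified in the upper bits, which is how the microphone's datasheet describes its output. The function names are hypothetical and are not taken from the Xiaozhi source.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>

/* Reduce one raw 32-bit I2S slot to a 16-bit PCM sample.
 * Assumption: the 24-bit microphone sample sits in the upper bits of the
 * slot, so keeping the top 16 bits preserves its most significant part. */
int16_t i2s_slot_to_pcm16(int32_t slot)
{
    /* Right-shifting a negative value is implementation-defined in C;
     * GCC and Clang (used by ESP-IDF) perform an arithmetic shift. */
    return (int16_t)(slot >> 16);
}

/* Convert a block of raw slots into 16-bit PCM.
 * Returns the number of samples written to pcm. */
size_t convert_i2s_block(const int32_t *slots, int16_t *pcm, size_t count)
{
    assert(slots != NULL && pcm != NULL);
    for (size_t i = 0; i < count; i++) {
        pcm[i] = i2s_slot_to_pcm16(slots[i]);
    }
    return count;
}
```

On the device, a function like `convert_i2s_block` would run on each buffer read from the I2S driver before the audio is handed to the speech pipeline. Some projects shift by fewer bits to add gain; that is a tuning choice this sketch leaves out.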

Installing ESP-IDF and the Xiaozhi Framework

With the hardware assembled, the next step is preparing the software environment. Install Visual Studio Code and add Espressif’s ESP-IDF extension, then use it to set up ESP-IDF itself (version 5.5.4 or lower, as used in the Xiaozhi ESP32-S3 tutorial). ESP-IDF gives you full control over the ESP32-S3, from configuring I2S and SPI drivers to flashing firmware. The Xiaozhi framework provides the AI layer: it bundles a compact local language model and helper code to implement wake-word detection, speech-to-text, and chatbot-style text responses. By starting from the project’s source code, you avoid having to design an AI inference pipeline from scratch. Instead, you configure board pins, Wi-Fi (if needed), and display settings, then compile and flash. Once deployed, the ESP32-S3 runs the assistant with Arduino-like simplicity while still benefiting from the richer APIs and debugging tools of ESP-IDF.
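Before flashing, it can help to sanity-check the board pin configuration. The sketch below is a minimal, host-testable example of that idea. The GPIO numbers and signal names are hypothetical placeholders, not the Xiaozhi schematic. The reserved range reflects Espressif's guidance that GPIO26 to GPIO37 are used by flash and octal PSRAM on parts such as the N16R8; confirm this against your own board's documentation.

```c
#include <assert.h>
#include <stdbool.h>
#include <stddef.h>

/* One signal-to-GPIO assignment. */
typedef struct {
    const char *signal;
    int gpio;
} pin_assignment_t;

/* Hypothetical example wiring for illustration only. */
static const pin_assignment_t EXAMPLE_PINS[] = {
    {"MIC_SCK", 5},   {"MIC_WS", 4},    {"MIC_SD", 6},    /* INMP441 (I2S in)    */
    {"AMP_BCLK", 15}, {"AMP_LRC", 16},  {"AMP_DIN", 7},   /* MAX98357A (I2S out) */
    {"LCD_SCLK", 12}, {"LCD_MOSI", 11}, {"LCD_CS", 10},   /* ST7789 (SPI)        */
    {"LCD_DC", 9},    {"LCD_RST", 8},
};
#define EXAMPLE_PIN_COUNT (sizeof EXAMPLE_PINS / sizeof EXAMPLE_PINS[0])

/* The ESP32-S3 exposes GPIO0-21 and GPIO26-48. */
bool gpio_exists(int gpio)
{
    return (gpio >= 0 && gpio <= 21) || (gpio >= 26 && gpio <= 48);
}

/* Assumption: GPIO26-37 are taken by flash and octal PSRAM on this module. */
bool gpio_is_reserved(int gpio)
{
    return gpio >= 26 && gpio <= 37;
}

/* A map is valid if every pin exists, is not reserved, and is used only once. */
bool pin_map_is_valid(const pin_assignment_t *pins, size_t count)
{
    assert(pins != NULL);
    for (size_t i = 0; i < count; i++) {
        if (!gpio_exists(pins[i].gpio) || gpio_is_reserved(pins[i].gpio)) {
            return false;
        }
        for (size_t j = 0; j < i; j++) {
            if (pins[j].gpio == pins[i].gpio) {
                return false;
            }
        }
    }
    return true;
}
```

A check like this catches the two most common breadboard mistakes, assigning one GPIO to two signals and picking a pin the module already uses internally, before they show up as silent audio or a blank display.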

Voice and Display Interaction: Beyond Buttons and LEDs

Traditional microcontroller projects rely heavily on push buttons, simple menus, and blinking LEDs. A local AI voice assistant changes that. With Xiaozhi running on the ESP32-S3, you control your device using natural language instead of rigid button presses. Say a command, and the assistant interprets your intent, then triggers the appropriate function, similar to how the OpenClaw bridge exposes functions like toggle_led and get_status on an Arduino board. The difference is that the voice assistant can map everyday language to these actions. Meanwhile, the ST7789 display gives you constant visual feedback: prompts when the device is listening, transcribed text, chatbot replies, or system status. This combination of speech and visuals produces a more intuitive interface, especially for complex tasks where a handful of buttons can no longer cover every user intent.
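The final step, going from a recognized intent to a device function, can be sketched as a small lookup table in C. This is an illustration under assumptions, not the Xiaozhi or OpenClaw implementation: it assumes the language model has already reduced the spoken request to a command name, and everything except the names `toggle_led` and `get_status` (taken from the text) is hypothetical.

```c
#include <assert.h>
#include <stdbool.h>
#include <stddef.h>
#include <stdio.h>
#include <string.h>

/* Simulated LED state; on hardware this would drive a GPIO instead. */
static bool led_on = false;

/* Each handler writes a short reply for the display or speech output
 * and returns 0 on success. */
typedef int (*command_handler_t)(char *reply, size_t reply_len);

int toggle_led(char *reply, size_t reply_len)
{
    led_on = !led_on;
    snprintf(reply, reply_len, "LED is now %s", led_on ? "on" : "off");
    return 0;
}

int get_status(char *reply, size_t reply_len)
{
    snprintf(reply, reply_len, "LED is %s", led_on ? "on" : "off");
    return 0;
}

typedef struct {
    const char *name;
    command_handler_t handler;
} command_entry_t;

/* Table of functions exposed to the assistant. */
static const command_entry_t COMMANDS[] = {
    {"toggle_led", toggle_led},
    {"get_status", get_status},
};

/* Look up a command by name and run it. Returns the handler's result,
 * or -1 (with an explanatory reply) if the name is not registered. */
int dispatch_command(const char *name, char *reply, size_t reply_len)
{
    assert(name != NULL && reply != NULL && reply_len > 0);
    for (size_t i = 0; i < sizeof COMMANDS / sizeof COMMANDS[0]; i++) {
        if (strcmp(COMMANDS[i].name, name) == 0) {
            return COMMANDS[i].handler(reply, reply_len);
        }
    }
    snprintf(reply, reply_len, "Unknown command: %s", name);
    return -1;
}
```

Adding a feature then means adding one handler and one table row, while the mapping from everyday phrasing ("turn the light on", "is the lamp lit?") to a command name stays with the language model.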

Local Processing, Privacy, and Next Steps

Because the Xiaozhi framework runs directly on the ESP32-S3, your assistant can operate with minimal or no cloud access. Local processing means responses are faster and more consistent, even without a reliable internet connection, and your spoken data stays on the device. You can start with basic chatbot functions, then expand into home automation, sensor dashboards, or robot control by exposing your own functions, similar to how OpenClaw bridges LED control and status queries. Each new feature can be invoked through natural language instead of through more buttons or deeper menus. As you refine your ESP32 voice assistant, you can experiment with different prompt styles, interface layouts on the ST7789 display, and integration with other microcontrollers. Over time, this approach turns a simple ESP32-S3 board into a flexible, privacy-friendly smart companion tailored to your specific projects.