From Vibe Coding to Structured AI Development

Early AI code generation tools encouraged so-called “vibe coding”: a single, sprawling prompt followed by a flood of autogenerated code. That approach delivered impressive demos but made it difficult to control quality, avoid hallucinations, or plug output into normal engineering processes. A new wave of spec-driven workflows is trying to fix that. Instead of improvisation, tools like GitHub’s Spec-Kit and OpenAI’s Symphony orchestrator insist on structure: plans, tasks, and review states that can be audited long before changes reach production. The goal is not just faster coding, but predictable, reviewable work that fits with existing issue trackers and CI pipelines. By requiring AI agents to work through specifications and ticket states, these systems are turning automated pull requests and code review automation from experiments into operational practices that teams can scale and whose productivity gains they can measure.

GitHub Spec-Kit: Plans, Tasks, and Checkpoints Before Code

GitHub’s Spec-Kit, now open-sourced, embodies the spec-driven approach to AI code generation. Instead of jumping straight from a feature idea to implementation, the toolkit walks teams through Specify, Plan, Tasks, and Implement phases. A Specify CLI plus templates and helper scripts turn high-level intent into detailed specs, technical plans, and granular task lists. Six core slash commands cover constitution, spec writing, planning, task breakdown, issue conversion, and implementation, while optional clarify, analyze, and checklist steps act as review gates. Each phase produces concrete artifacts that humans can inspect, revise, or reject before agents write code. With over 90,000 GitHub stars and thousands of forks, Spec-Kit signals strong interest in workflows where AI assistance remains compatible with familiar engineering checkpoints, creating traceable handoffs between people and agents instead of opaque, one-shot prompts.
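The gating idea can be illustrated with a minimal Python sketch. It is not Spec-Kit's code or API: the `Workflow` class, its `submit` method, and the `approve` callback are hypothetical names, and only the four phase names come from the description above. The sketch assumes a phase may run only after every earlier phase has produced an approved artifact.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, Optional

# Phase order taken from the article; everything else here is illustrative.
PHASES = ["specify", "plan", "tasks", "implement"]


@dataclass
class Workflow:
    """Hypothetical tracker for approved artifacts, keyed by phase name."""
    approved: Dict[str, str] = field(default_factory=dict)

    def next_phase(self) -> Optional[str]:
        """Return the first phase without an approved artifact, or None."""
        for phase in PHASES:
            if phase not in self.approved:
                return phase
        return None

    def submit(self, phase: str, artifact: str,
               approve: Callable[[str], bool]) -> bool:
        """Record an artifact only if it is this phase's turn and a reviewer approves."""
        expected = self.next_phase()
        if phase != expected:
            raise ValueError(f"cannot run {phase!r}; next phase is {expected!r}")
        if not approve(artifact):
            return False  # rejected: the workflow stays on the same phase
        self.approved[phase] = artifact
        return True
```

In this sketch, asking for `implement` before a spec has been approved raises an error, and a rejected artifact leaves the workflow on the same phase, which is the human checkpoint the paragraph describes.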

OpenAI Symphony: Agents That Run Until Pull Requests Are Merged

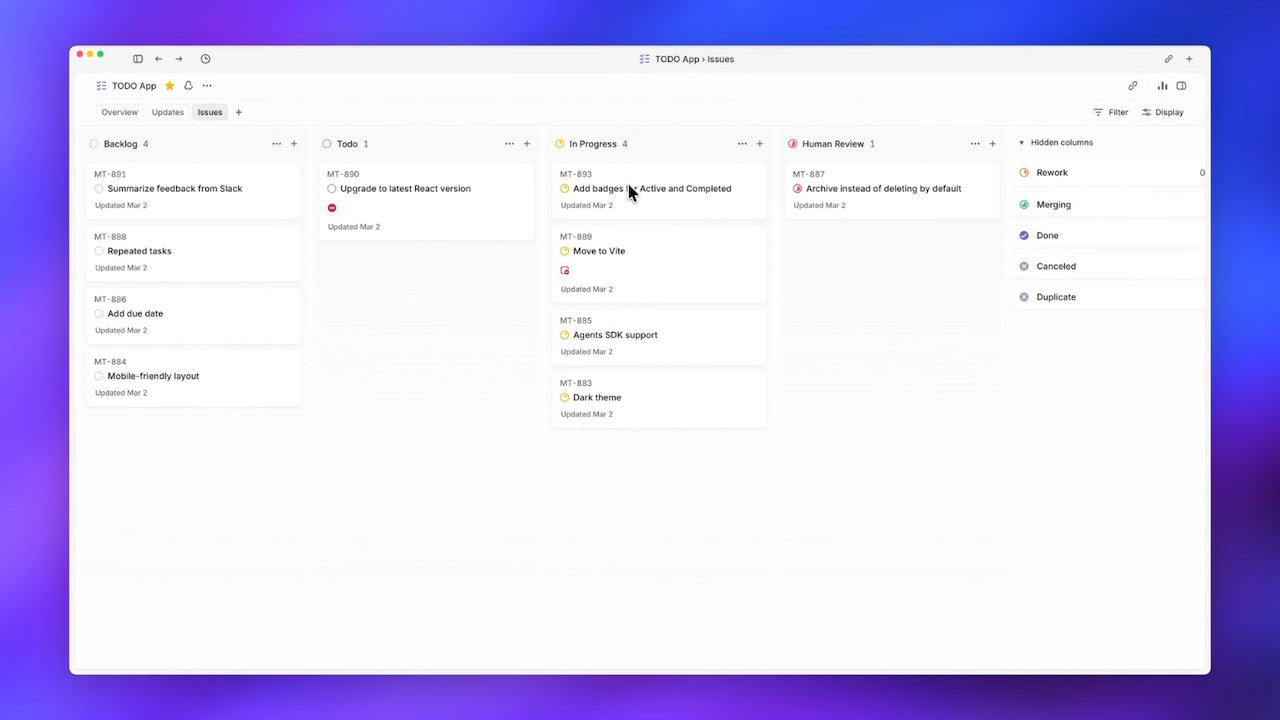

OpenAI’s Symphony tackles a different bottleneck: human supervision of many concurrent coding agents. Engineers found it difficult to manage more than a handful of Codex sessions before context switching erased the productivity benefits. Symphony reframes the problem as orchestration. Under its spec, each ticket in Linear receives its own Codex agent and a dedicated workspace that persists until the work is done. Linear effectively becomes a state machine (Todo, In Progress, Review, Merging), while Symphony respawns agents that crash or stall. The agents generate dependency trees of tasks, execute them in parallel where possible, and even create follow-up tickets when they uncover new issues. During the first three weeks of internal use, teams reported a sixfold increase in merged pull requests, which suggests that automated pull requests and structured dispatch can raise throughput without requiring humans to babysit every step.
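The ticket-as-state-machine idea, together with respawning a crashed agent, can be sketched in a few lines of Python. This is a minimal illustration, not Symphony's implementation: the transition table, the `Done` terminal state, the `Review` to `In Progress` rework edge, and the `supervise` helper are all assumptions made for the example. Only the four state names come from the text.

```python
from enum import Enum
from typing import Callable, Tuple


class State(Enum):
    TODO = "Todo"
    IN_PROGRESS = "In Progress"
    REVIEW = "Review"
    MERGING = "Merging"
    DONE = "Done"  # assumed terminal state, not named in the article


# Assumed transition table; the rework edge from Review is a guess.
ALLOWED = {
    State.TODO: {State.IN_PROGRESS},
    State.IN_PROGRESS: {State.REVIEW},
    State.REVIEW: {State.IN_PROGRESS, State.MERGING},
    State.MERGING: {State.DONE},
    State.DONE: set(),
}


def advance(current: State, target: State) -> State:
    """Return target if the transition is allowed, otherwise raise."""
    if target not in ALLOWED[current]:
        raise ValueError(f"illegal transition {current.value} -> {target.value}")
    return target


def supervise(agent_step: Callable[[State], State],
              state: State = State.TODO,
              max_respawns: int = 3) -> Tuple[State, int]:
    """Drive one ticket to DONE, retrying the same state after a crash.

    agent_step is a hypothetical callable that does the work for the
    current state and returns the state it wants to move to. A
    RuntimeError stands in for an agent crash or stall.
    """
    respawns = 0
    while state is not State.DONE:
        try:
            state = advance(state, agent_step(state))
        except RuntimeError:
            respawns += 1
            if respawns > max_respawns:
                raise  # give up and surface the failure to a human
    return state, respawns
```

Keeping the ticket state outside the agent is the design choice that matters here: a replacement agent can resume from the recorded state rather than from its predecessor's memory.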

Reducing Hallucinations and Improving Code Quality with Spec-Driven Workflows

Both Spec-Kit and Symphony shift AI development from ad hoc prompting to processes that resemble rigorous engineering practice. Spec-Kit reduces hallucinations by forcing agents through specification, planning, and task breakdown before any implementation begins. Missing details can be surfaced using clarify and analyze commands, while checklists formalize quality expectations. Symphony, meanwhile, constrains agents inside the lifecycle of a ticket tracker, tying their actions to explicit states and dependency graphs instead of free-form exploration. These structures make it easier to catch misaligned assumptions early, review intermediate artifacts, and align automated pull requests with coding standards and architectural guidelines. The result is not just more code, but code that is traceable back to explicit specs and ticket histories, making code review automation safer and more acceptable to teams that need production-ready, auditable AI contributions.
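To make the dependency-graph constraint concrete, the sketch below groups tasks into batches that could run in parallel, using `graphlib.TopologicalSorter` from Python's standard library (Python 3.9 or later). The task names and the `parallel_batches` helper are hypothetical. Neither tool is claimed to schedule work this way.

```python
from graphlib import TopologicalSorter
from typing import Dict, List, Set


def parallel_batches(deps: Dict[str, Set[str]]) -> List[List[str]]:
    """Group tasks into batches whose members have no unmet prerequisites.

    deps maps each task to the set of tasks it depends on. Tasks within
    one batch are independent of each other, so they could be handed to
    separate agents at the same time.
    """
    sorter = TopologicalSorter(deps)
    sorter.prepare()  # raises graphlib.CycleError if the graph has a cycle
    batches = []
    while sorter.is_active():
        ready = sorted(sorter.get_ready())  # sorted only for stable output
        batches.append(ready)
        sorter.done(*ready)  # mark the batch finished to unlock dependents
    return batches


# Hypothetical task graph for one feature.
EXAMPLE_DEPS = {
    "schema": set(),
    "api": {"schema"},
    "ui": {"schema"},
    "e2e-tests": {"api", "ui"},
}
```

For `EXAMPLE_DEPS`, `parallel_batches` returns `[['schema'], ['api', 'ui'], ['e2e-tests']]`. A cyclic graph is rejected before any work is dispatched, which shows how an explicit graph exposes a misaligned assumption early.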

Toward Production-Ready, Auditable AI Code Generation

Spec-driven workflows mark a turning point in how teams think about AI code generation. Rather than treating AI as an autonomous pair programmer that improvises solutions, Spec-Kit and Symphony treat it as an engine that operates inside well-defined processes. Spec documents, task DAGs, and ticket states become the interfaces between humans and agents, enabling review checkpoints before and after code is written. This makes AI output easier to trace, test, and reason about, especially when agents are dispatching and merging pull requests at scale. Adoption signals, such as Spec-Kit’s large open-source following and Symphony’s reported jump in merged pull requests, suggest that teams are ready to trade a bit of upfront planning overhead for predictable outcomes. As more tooling follows this pattern, AI-assisted development is likely to look less like a series of one-off prompt experiments and more like an integrated, auditable part of everyday software lifecycle management.