When the King of Overpromising Says “Be Careful”

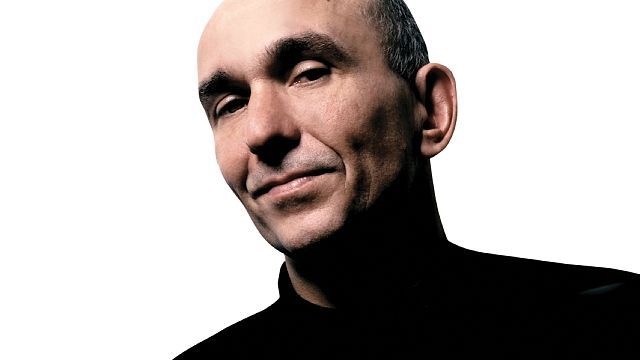

Peter Molyneux is famous for sweeping, often unrealistic promises about his games, from grandiose Fable features to the high‑profile misfires of Godus and Curiosity. That history makes his recent stance on AI in game development surprisingly restrained. Speaking in a BBC interview, he argued that AI is “not of a high enough quality for us to really use in games right now” and warned that studios must be “very, very careful” and build safeguards so they “can’t abuse this power.” Coming from a designer who once embraced blockchain hype, that caution is significant. It signals that even tech‑enthusiastic veterans see real risks in current AI tools: unreliable output, ethical grey areas, and the temptation for publishers to use AI to cut corners rather than improve games. For PC gamers, it is an early red flag that the next big revolution might also be the next big disappointment.

How AI Is Sneaking Into Game Dev Pipelines

AI in game development is already moving beyond flashy tech demos. Studios are experimenting with AI‑generated quests, automated dialogue passes, procedural game content, and reinforcement‑learning bots for testing balance and difficulty. The same kind of AI that lets Sony’s Ace robot arm track a table tennis ball and learn expert‑level play through reinforcement learning can also hammer on game systems thousands of times faster than humans, hunting for exploits or tuning enemy behaviour. On paper, this promises faster iteration, richer worlds, and fewer bugs. In practice, it raises hard questions. If quest designers are replaced by templates and text generators, will open‑world games start to feel interchangeable? If QA shifts to bots, will subtle, human‑facing issues slip through? And as AI tools get better at mimicking studio “house styles,” there’s a real risk that creativity flattens into algorithmically safe sameness, while human jobs quietly evaporate.

Upsides for PC Players: Reactivity, Patches, Personalisation

There are real potential benefits if AI is used thoughtfully. AI‑assisted systems could make NPCs more reactive, adjusting tactics or dialogue to your playstyle in ways that go beyond scripted behaviour trees. Procedural game content might become more interesting, generating side quests that respond to past choices instead of randomised fetch missions. AI‑driven tools can also speed up content pipelines: balance tweaks, bug fixes, and new cosmetics could arrive more quickly, which is attractive in live‑service PC games that rely on constant updates. Personalisation is another frontier, from dynamic difficulty that adapts to your skill level to accessibility features that tailor UI, subtitles, and controls. However, each upside has a shadow. Over‑personalised systems can feel manipulative, nudging players toward specific builds or grind loops. Faster content can also mean more filler. Without strong human direction, AI risks amplifying everything that is already shallow about modern game design.

The Dark Side: Opaque Systems, Data Hunger, and Monetisation

PC players are already wary of always‑online requirements, aggressive data collection, and invisible algorithms shaping matchmaking and difficulty. AI in game development could deepen that distrust. Systems that adapt to your behaviour need data, and a lot of it: how you move, what you buy, when you quit. The same predictive models that learn to return a table tennis serve at inhuman speed can also learn exactly when you are most vulnerable to nudges toward microtransactions or grind‑skipping boosts. Difficulty and drop rates could become opaque levers tuned for engagement metrics rather than fun. Because AI systems are often “black boxes,” even developers may not fully understand why they make certain decisions. For PC gamers used to tinkering, modding, and reading ini files, this shift toward inscrutable, server‑side AI logic feels like a step away from control and toward a casino where the odds are set by machine‑learning models.

Indies, AAA, and What Players Can Actually Do

Indie PC studios and big publishers are likely to treat AI very differently. Smaller teams might use AI as scaffolding: prototyping AI‑generated quests, background art, or test bots, then manually curating and rewriting to keep a strong authorial voice. That could enable stranger, more experimental projects without ballooning budgets. Major publishers, by contrast, may focus on scale and predictability: procedural game content tuned to proven engagement patterns, automated writing pipelines, and AI‑driven live‑ops that keep players spending. For PC gamers, the practical question is how to spot when AI is affecting your experience. Generic, repetitive dialogue, wildly inconsistent tone, or oddly tuned difficulty curves can all be hints. Read patch notes, developer blogs, and interviews; many teams now openly discuss their AI tools. Most importantly, use community channels and user reviews to reward studios that use AI transparently and responsibly, and push back loudly when it is used as a shortcut that hurts the games you love.