Frontier AI Models Redefine Vulnerability Discovery

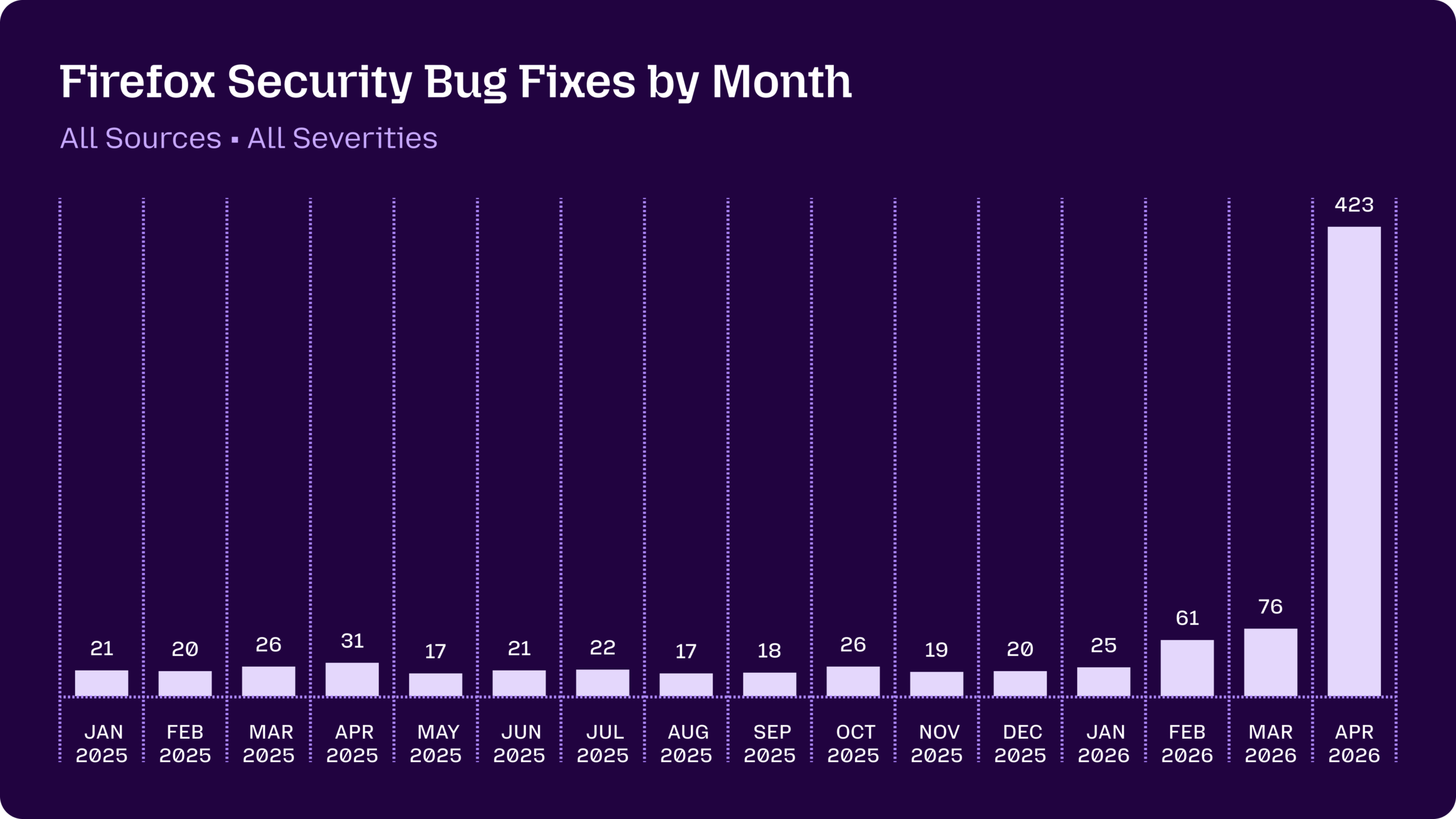

The security capabilities of frontier AI models are reshaping how organizations hunt for software flaws. Mozilla’s experience with Claude Opus 4.6 and Claude Mythos Preview illustrates the shift. Against a typical baseline of monthly Firefox security fixes numbering in the teens and twenties, Mythos surfaced hundreds of additional issues when deployed through Anthropic’s Project Glasswing. Most were lower-severity bugs, defense-in-depth improvements and vulnerabilities in long-dormant code paths that human teams were unlikely to audit. This new form of AI vulnerability discovery lets defenders compress years of manual review into weeks, hardening foundational systems such as browsers, operating systems, cloud platforms and security appliances. At the same time, it raises strategic questions: who gets early access to these models, and which layers of the technology stack are hardened first? As access becomes selective, security advantages increasingly track who can wield these tools effectively.

Asymmetric Security: Who Holds the Frontier AI Advantage?

Restricted access to frontier AI models like Claude Mythos creates an asymmetric landscape for AI cybersecurity defense. Programs such as Project Glasswing and other trusted-access initiatives prioritize critical infrastructure providers: cloud platforms, operating system maintainers, chipmakers, browser vendors and endpoint-security firms. Early access lets these providers rapidly shrink shared attack surfaces and clear vulnerability backlogs that previously lingered as “low priority.” But selective model access also indicates where gaps are likely to persist: R&D environments built on less prioritized tools, niche vendors or legacy integrations may lag in hardening even as attackers evolve. Meanwhile, the same classes of models, if weaponized, could automate chained exploits across research systems, electronic lab notebooks (ELNs), laboratory information management systems (LIMS) and connected instruments by combining multiple medium-severity weaknesses. The result is a new security divide: organizations plugged into frontier AI pipelines enjoy a defensive head start, while others face increasingly sophisticated, AI-powered attack paths.

Dev Teams Under Siege: Account Takeover as the New Front Line

While frontier AI strengthens core infrastructure, development teams face a surge in access control threats. Email has become a primary battlefield: analysis of billions of messages shows that one in three is malicious or unwanted spam, and phishing now accounts for nearly half of all malicious email activity. Attackers increasingly combine phishing-as-a-service kits with generative AI to craft convincing, localized messages that mimic vendors, partners or internal stakeholders. The result is a wave of identity-based deception aimed at developers’ inboxes. For many organizations, a dev team account takeover is no longer a rare event: 34% of companies report at least one such incident every month. Once attackers compromise a developer’s email, they can pivot to reset repository credentials, reach production environments or tamper with code. In this environment, technical skill alone is insufficient; the identity and trust boundaries around developer accounts have become critical security controls.
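The pivot from a compromised inbox to developer systems often runs through credential resets, so one practical first step is an exposure audit. The minimal Python sketch below assumes a hypothetical inventory of developer accounts: the `DevAccount` fields, the factor labels and the `takeover_exposure` helper are illustrative, not any identity provider's or code host's API. It flags accounts that an emailed reset alone could recover, or that lack a phishing-resistant factor such as a FIDO2/WebAuthn key.

```python
from dataclasses import dataclass, field

# Factor types commonly treated as phishing-resistant (assumed labels).
PHISHING_RESISTANT = {"webauthn", "fido2", "piv"}


@dataclass
class DevAccount:
    """Hypothetical inventory record for one developer account."""
    user: str
    system: str                 # e.g. "repo", "ci", "cloud-console"
    email_only_recovery: bool   # can an emailed reset link alone regain access?
    mfa_methods: list = field(default_factory=list)


def takeover_exposure(accounts):
    """Return (user, system, reasons) for accounts a mailbox compromise could reach."""
    findings = []
    for acct in accounts:
        reasons = []
        if acct.email_only_recovery:
            # A reset through the compromised inbox sidesteps other factors.
            reasons.append("email-only recovery")
        if not PHISHING_RESISTANT.intersection(acct.mfa_methods):
            # OTP, SMS or push factors can be relayed by adversary-in-the-middle kits.
            reasons.append("no phishing-resistant factor")
        if reasons:
            findings.append((acct.user, acct.system, reasons))
    return findings


if __name__ == "__main__":
    inventory = [
        DevAccount("alice", "repo", False, ["webauthn"]),
        DevAccount("bob", "repo", True, ["totp"]),
        DevAccount("carol", "ci", False, ["sms"]),
    ]
    for finding in takeover_exposure(inventory):
        print(finding)
```

In this toy inventory, only alice's account (hardware-key MFA, no email-only reset) passes; bob and carol would be prioritized for remediation.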

Balancing AI-Powered Defense with Access Control Risk

Organizations that adopt frontier AI models for security research must also treat model access as a high-value target. The same credentials used to run large-scale AI vulnerability discovery over source code, cloud infrastructure or research data could be weaponized if attackers seize them via dev team account takeover. In practice, this means integrating AI cybersecurity defense tools with stronger identity safeguards: phishing-resistant authentication, strict least-privilege scoping for AI tooling, and robust monitoring of anomalous access patterns. Email and collaboration platforms, still the primary entry points for identity compromise, need protections tuned for AI-generated phishing, not just traditional file-based malware. Security leaders should assume adversaries will also harness AI to automate reconnaissance, craft credible social engineering, and chain medium-severity flaws into high-impact breaches. The strategic challenge is to capture the defensive upside of frontier models without creating a single, poorly defended gateway into the heart of R&D systems.
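One way to make least-privilege scoping and anomalous-access monitoring concrete is a baseline check on the credentials that drive AI tooling. The Python sketch below is a simplified illustration under stated assumptions: the `AccessEvent` schema, the `ALLOWED_SCOPES` policy, the scope strings and the credential name are all hypothetical, and the "baseline" is simply the set of source networks and hours each credential has used before.

```python
from dataclasses import dataclass

# Hypothetical least-privilege policy: scopes each AI-tooling credential may use.
ALLOWED_SCOPES = {"vuln-scanner": {"repo:read"}}


@dataclass(frozen=True)
class AccessEvent:
    credential: str   # hypothetical ID of an API key or token used by AI tooling
    source_net: str   # e.g. a /24 prefix or ASN label
    scope: str        # e.g. "repo:read", "repo:write"
    hour: int         # hour of day (UTC), 0-23


def build_baseline(history):
    """Collect the source networks and hours each credential has used before."""
    nets, hours = {}, {}
    for ev in history:
        nets.setdefault(ev.credential, set()).add(ev.source_net)
        hours.setdefault(ev.credential, set()).add(ev.hour)
    return nets, hours


def flag(event, baseline, allowed=ALLOWED_SCOPES):
    """Return the reasons an access event looks anomalous (empty list if none)."""
    nets, hours = baseline
    reasons = []
    if event.scope not in allowed.get(event.credential, set()):
        reasons.append("scope outside least-privilege policy")
    if event.source_net not in nets.get(event.credential, set()):
        reasons.append("new source network")
    if event.hour not in hours.get(event.credential, set()):
        reasons.append("unusual hour")
    return reasons


if __name__ == "__main__":
    history = [AccessEvent("vuln-scanner", "10.0.1.0/24", "repo:read", h) for h in (9, 10, 11)]
    baseline = build_baseline(history)
    print(flag(AccessEvent("vuln-scanner", "10.0.1.0/24", "repo:read", 10), baseline))
    # prints []
    print(flag(AccessEvent("vuln-scanner", "203.0.113.0/24", "repo:write", 3), baseline))
    # prints ['scope outside least-privilege policy', 'new source network', 'unusual hour']
```

Exact-match hours and networks are deliberately crude. A production detector would more likely use tolerances, rolling windows or statistical scoring, and route alerts into existing identity monitoring rather than acting on a single rule.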

R&D Security Strategies for an AI-Accelerated Threat Landscape

R&D-heavy organizations must evolve their security strategies around both AI-enhanced defense and AI-driven attack sophistication. On the defensive side, frontier models can re-rank vulnerability backlogs across ELNs, LIMS, connected instruments and vendor portals, uncovering the kinds of misconfigurations that previously lingered as “medium” risks. Case studies like the Cencora breach show how such gaps can cascade, exposing sensitive research and patient data via chained weaknesses in vendor ecosystems. On the threat side, defenders should assume adversaries will deploy similar AI agents to scan external attack surfaces, identify overlooked integrations and construct exploit chains that bypass traditional prioritization schemes. Modern R&D security must therefore combine continuous AI vulnerability discovery with rigorous access segmentation, supply chain scrutiny and developer-focused email security. The objective is not just to patch faster but to break chains of seemingly minor flaws before AI-empowered attackers can stitch them into major incidents.
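Breaking chains is easier when a backlog is ranked by chain membership rather than by standalone severity alone. The sketch below shows one possible approach, not a method taken from the Cencora case or from any specific tool: each finding is modeled as a hypothetical edge from the foothold it requires to the foothold it grants, and a finding is promoted if it lies on some path from an external entry point to a sensitive asset. The `Finding` fields, node names and scores are illustrative assumptions.

```python
from collections import defaultdict, deque
from dataclasses import dataclass


@dataclass(frozen=True)
class Finding:
    fid: str
    src: str      # foothold an attacker needs before exploiting (hypothetical node)
    dst: str      # foothold gained after exploiting (hypothetical node)
    cvss: float   # standalone severity score


def _reach(starts, adj):
    """Breadth-first search: every node reachable from `starts` via `adj`."""
    seen, queue = set(starts), deque(starts)
    while queue:
        node = queue.popleft()
        for nxt in adj[node]:
            if nxt not in seen:
                seen.add(nxt)
                queue.append(nxt)
    return seen


def rerank(findings, entry, targets):
    """Rank findings on any entry-to-target chain first, then by descending score."""
    fwd, rev = defaultdict(list), defaultdict(list)
    for f in findings:
        fwd[f.src].append(f.dst)
        rev[f.dst].append(f.src)
    from_entry = _reach([entry], fwd)        # nodes an attacker can reach
    to_target = _reach(list(targets), rev)   # nodes that lead to a sensitive asset

    def on_chain(f):
        # Edge src->dst lies on an entry-to-target path iff both halves connect.
        return f.src in from_entry and f.dst in to_target

    return sorted(findings, key=lambda f: (not on_chain(f), -f.cvss))


if __name__ == "__main__":
    backlog = [
        Finding("F1", "internet", "vendor-portal", 5.3),
        Finding("F2", "vendor-portal", "lims", 6.1),
        Finding("F3", "lims", "patient-data", 5.9),
        Finding("F4", "internal", "wiki", 8.2),
    ]
    print([f.fid for f in rerank(backlog, "internet", {"patient-data"})])
    # prints ['F2', 'F3', 'F1', 'F4']
```

In this toy backlog, three medium findings that together connect the internet to patient data outrank an isolated 8.2 finding that no modeled path reaches. Using one forward and one backward search keeps the check linear in the number of findings; the hard part in practice is building an accurate foothold graph, which this sketch simply assumes.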