Daybreak Pushes AI Vulnerability Detection Earlier in the Pipeline

OpenAI’s new Daybreak initiative is an aggressive move to embed AI vulnerability detection directly into software development rather than treating security as a late-stage gate. Built around GPT-5.5 and a specialized Codex Security agent, Daybreak is designed to scan repositories, prioritize high-impact issues, and automatically generate and test patches inside controlled workflows. The platform emphasizes scoped access, monitoring, and review gates so that automated bug hunting does not become automated breakage. OpenAI frames the shift as a response to shrinking disclosure windows and AI-accelerated exploit development, in which a published patch can be turned into a working exploit within minutes. By offering secure code review, vulnerability triage, malware analysis, and patch validation in a single agentic stack, Daybreak aims to make enterprise security tooling a proactive, continuous defense rather than a checkbox ticked at release time.

Mythos: Between Marketing Hype and Real-World Bug-Finding

Anthropic’s Claude Mythos Preview model has become a lightning rod in the debate over automated bug hunting. cURL creator Daniel Stenberg was invited to participate in Anthropic’s Project Glasswing, only to receive a third‑party scan report rather than direct model access. Mythos flagged five supposed “confirmed security vulnerabilities” in cURL; after hours of human review, Stenberg’s team narrowed that to one low‑severity security issue, plus a handful of non‑security bugs. The remaining findings were either false positives or already‑documented limitations. Stenberg concluded that Mythos, in this case, did not outperform traditional tools and that much of the excitement felt “primarily marketing.” The episode highlights a key risk with AI vulnerability detection: impressive demos can mask noisy outputs that still demand heavy expert triage, limiting the net gain for overworked security teams.

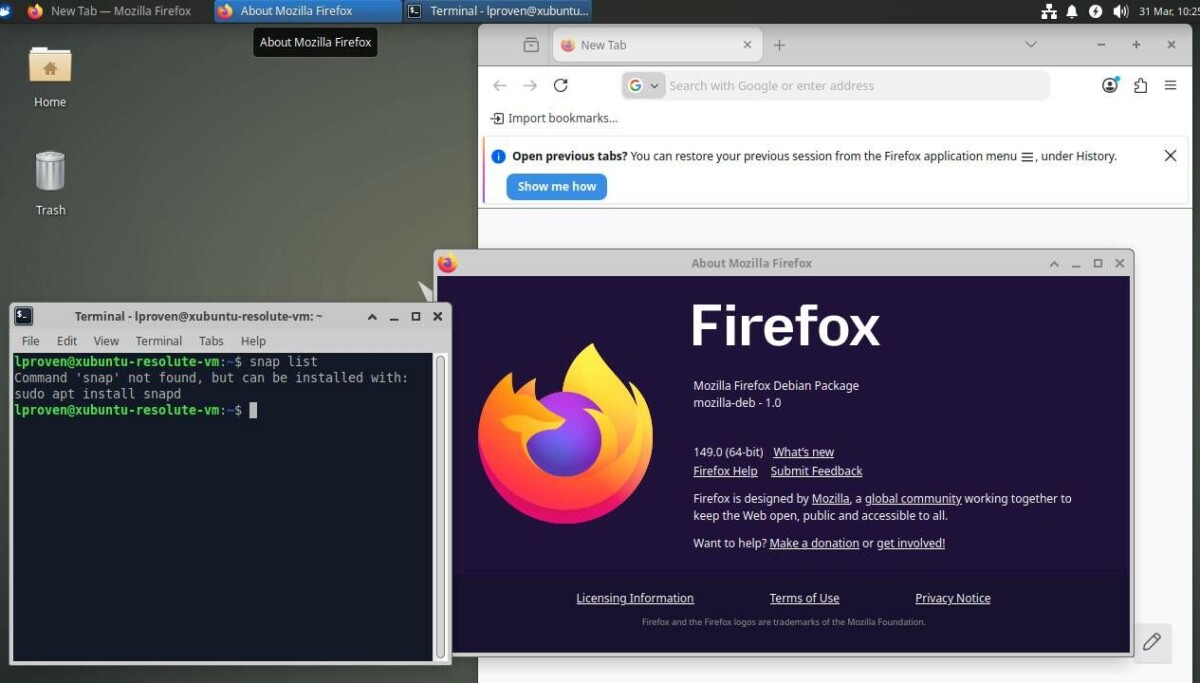

Firefox’s Experience: When AI Bug Hunting Actually Scales

Mozilla’s Firefox team reports a much more dramatic payoff from AI-assisted security testing. After integrating Anthropic’s Mythos Preview and its sibling model Opus 4.6 into their process, Mozilla says it fixed 423 Firefox security bugs in April, up from 76 the previous month and far above its historical monthly average. Mythos was credited with finding 271 vulnerabilities in Firefox 150, including sandbox escapes and a 20-year-old heap use-after-free that traditional fuzzing missed. Firefox engineers attribute this success not only to better models but also to an “agentic harness” that structures how the AI scans, reasons, and reports, which improves the signal-to-noise ratio. By steering the models carefully and disclosing detailed bug reports selectively, Mozilla illustrates how AI can materially strengthen defenses against zero-day exploits, provided the surrounding workflow is engineered as thoughtfully as the model itself.

Faster, but Not Always Smarter: The Signal-to-Noise Dilemma

These contrasting results from cURL and Firefox reveal the central tension in AI vulnerability detection. Systems like Daybreak and Mythos can slash analysis time and surface subtle issues, yet they can also amplify noise: false positives, misclassified flaws, and weaknesses that are already documented. Security researcher Himanshu Anand notes that AI can now turn patch diffs into exploits in minutes, compressing response timelines and pressuring teams to automate more of their defenses. But automation without precision risks burying critical vulnerabilities under AI-generated clutter. The emerging consensus is that AI is not a replacement for seasoned security engineers; it is a force multiplier whose value depends on tuning, validation, and context. Enterprise security tools must therefore prioritize explainability, confidence scores, and tight integration with existing review processes to keep human experts firmly in the decision loop.
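The role of confidence scores and known-issue filtering in that loop can be sketched in a few lines of Python. This is a minimal illustration, not how Daybreak or Mythos report findings: the `Finding` fields, the `triage` and `precision` helpers, and the 0.7 threshold are all hypothetical names and values chosen for the example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Finding:
    finding_id: str
    title: str
    confidence: float                 # assumed: model-reported score in [0, 1]
    already_documented: bool = False  # assumed: matched against a known-issues list


def triage(findings, min_confidence=0.7):
    """Split findings into a human-review queue and a deprioritized list."""
    review_queue, deprioritized = [], []
    for finding in findings:
        if finding.already_documented or finding.confidence < min_confidence:
            deprioritized.append(finding)
        else:
            review_queue.append(finding)
    # Highest-confidence findings reach human reviewers first.
    review_queue.sort(key=lambda f: f.confidence, reverse=True)
    return review_queue, deprioritized


def precision(confirmed, reported):
    """Fraction of reported findings that human reviewers confirmed."""
    return confirmed / reported if reported else 0.0
```

Applied to the cURL episode described earlier, where five reported vulnerabilities were narrowed to one security issue, `precision(1, 5)` gives 0.2. Tracking that number over time is one way to tell whether a tool is saving reviewer hours or consuming them.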

What Enterprise Security Teams Should Do Next

For enterprises, the question is no longer whether to adopt AI-assisted vulnerability hunting, but how to do so without drowning in noise. Daybreak’s focus on repository-level patch testing and Mythos’s mixed track record suggest a few practical priorities. First, treat AI systems as junior analysts: fast and tireless, but always subject to human validation for high-impact changes. Second, invest in orchestration layers (the “agentic harness” concept) that constrain models, enforce scoped access, and standardize reports. Third, measure success not by the volume of bugs filed, but by meaningful reductions in exploitable defects and in exposure to zero-day exploits. Finally, ensure that incident-response teams, developers, and AppSec engineers agree on triage criteria and escalation paths. Done well, AI can shift security left in the lifecycle and extend coverage; done poorly, it becomes just another noisy dashboard.
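The first two priorities, human validation for high-impact changes and scoped access, amount to a small policy check in front of any AI-proposed patch. The sketch below is one hypothetical way to express such a gate; `ProposedPatch`, `gate`, the severity scale, and the `src/` prefix are assumptions made for illustration and do not describe any vendor's actual workflow.

```python
from dataclasses import dataclass
from enum import IntEnum


class Severity(IntEnum):
    LOW = 1
    MEDIUM = 2
    HIGH = 3
    CRITICAL = 4


@dataclass(frozen=True)
class ProposedPatch:
    patch_id: str
    severity: Severity     # severity of the issue the patch claims to fix
    tests_passed: bool     # result of the project's own test suite
    touched_paths: tuple   # repository-relative paths the patch modifies


def gate(patch, allowed_prefixes=("src/",), max_unattended=Severity.LOW):
    """Return 'reject', 'human_review', or 'fast_track' for an AI-proposed patch."""
    # Scoped access: refuse anything that edits files outside the allowed tree.
    if not all(path.startswith(allowed_prefixes) for path in patch.touched_paths):
        return "reject"
    # A patch that breaks the test suite never reaches a reviewer.
    if not patch.tests_passed:
        return "reject"
    # Anything above the unattended threshold needs a human decision.
    if patch.severity > max_unattended:
        return "human_review"
    return "fast_track"
```

In this sketch a critical fix that passes tests still lands in `human_review`, while a patch that touches CI configuration outside `src/` is rejected outright regardless of severity. Where to set `max_unattended` is a policy choice each team would make for itself.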