AI-Powered Scams Turn Crypto Dreams Into Financial Ruin

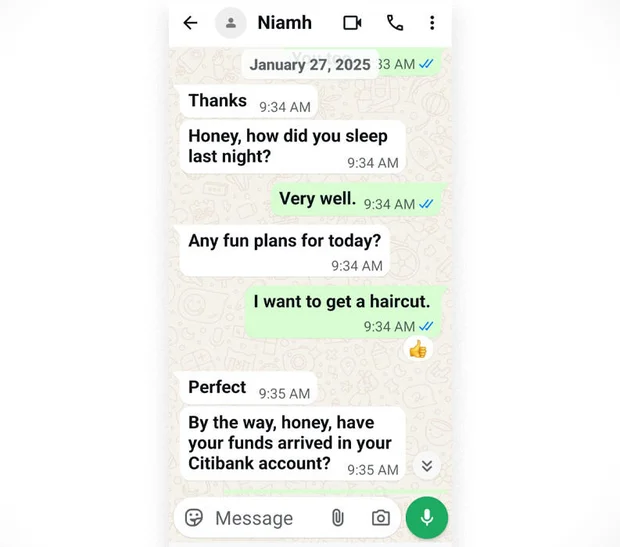

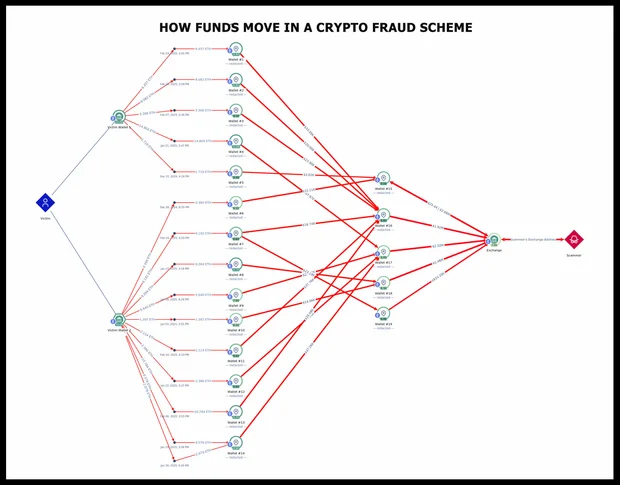

The human cost of AI-powered scams is no longer abstract. In one widely reported case, 73-year-old Kyle Holder saw her entire life savings disappear after a scammer used WhatsApp to lure her into a fake crypto investment scheme. Over roughly three months, she moved USD 300,000 (approx. RM1.38 million) from retirement and savings accounts into wallets controlled by criminals, joining thousands of victims in a cyber theft wave the FBI estimates at USD 20 billion (approx. RM92 billion) in 2025, with more than half routed through cryptocurrency. The fraudster, calling herself “Niamh,” mixed emotional intimacy with relentless pressure about whether funds had arrived, creating the illusion of friendship while directing money flows. IRS investigators now warn that AI crypto fraud operations can industrialise this kind of social engineering, combining psychological profiling, automated messaging and cross-border crypto rails to make stolen funds extraordinarily hard to trace or recover.

Voice Cloning, Deepfakes and Hyper-Personalised Chat Supercharge Social Engineering

What makes today’s scams different is how convincingly AI can mimic real people and relationships. Romance-style frauds and investment approaches that once relied on clumsy scripts are now polished by large language models, which generate fluent, personalised chat based on a victim’s social media trail. Deepfake video and voice cloning make it far easier to pose as family members, bank staff or investment advisers, reducing the chances that individuals – or even front-line bank employees – spot red flags in time. Screenshots of Holder’s chats show how emotional check-ins like “Honey, how did you sleep last night?” are carefully blended with questions about incoming funds, a pattern AI systems can replicate thousands of times a day. For Malaysian consumers and banks, this raises serious AI security risks: identity verification based only on voice calls, photos or casual text exchanges is increasingly unreliable, demanding stronger multi-factor checks and more sceptical customer behaviour online.

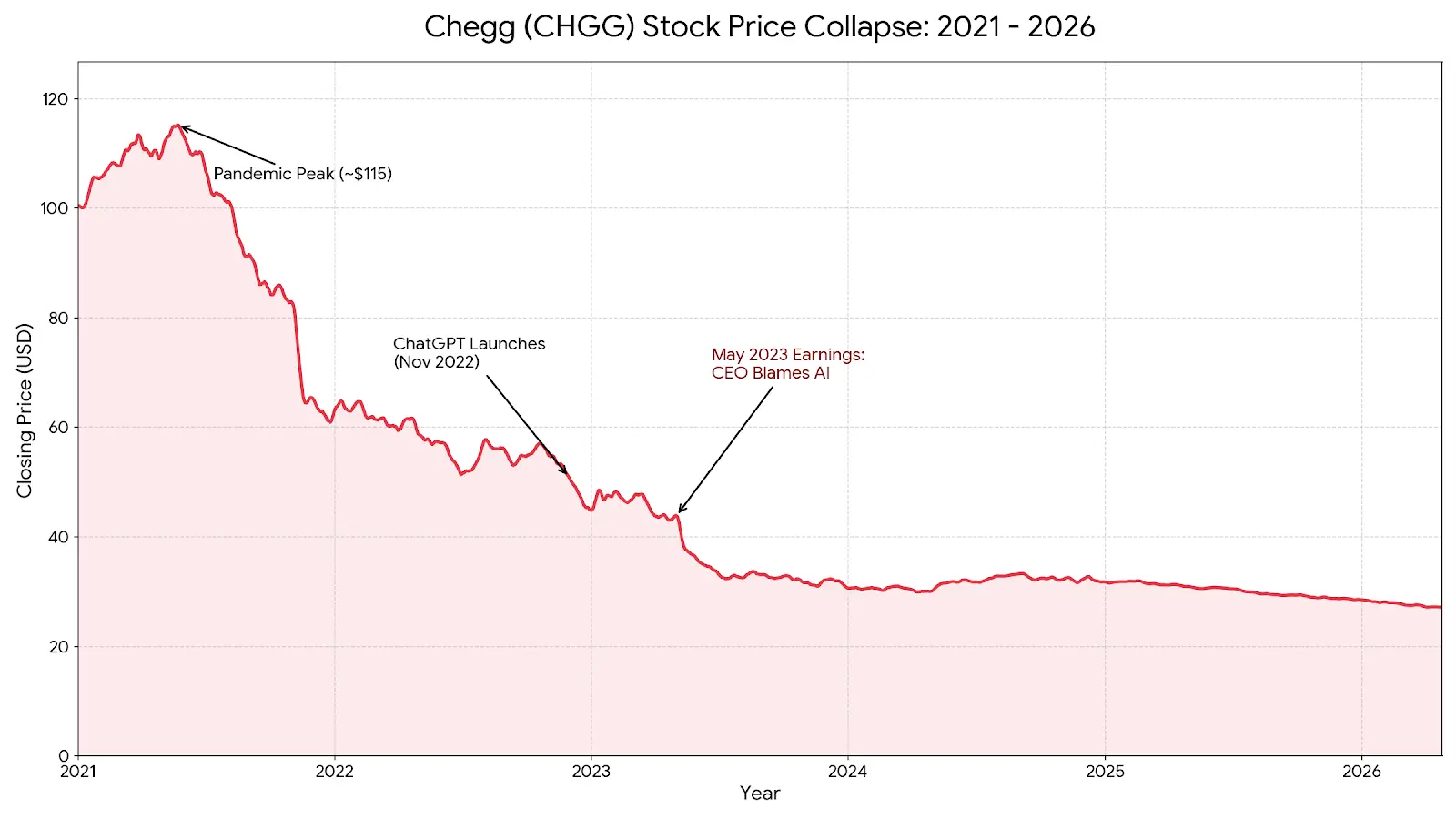

When AI Disrupts Business Models Overnight: Lessons from Chegg’s Collapse

AI is not only empowering scammers; it is also destabilising companies that fail to adapt. Chegg, once valued at USD 14.5 billion (approx. RM66.7 billion) as the dominant digital textbook and homework-help platform, saw its fortunes reverse almost overnight. On a May 2023 earnings call, then-CEO Dan Rosensweig admitted that the free chatbot ChatGPT was hurting new customer growth. Investors panicked, erasing nearly USD 1 billion (approx. RM4.6 billion) in market value in a single day. Students quickly realised that a subscription costing USD 19.95 (approx. RM92) per month for step‑by‑step answers made little sense when ChatGPT could solve similar problems instantly and interactively. Chegg’s attempted pivot to “CheggMate,” an AI tutor built on GPT‑4, failed to convince users who saw it as a paid wrapper around the same technology. For Malaysian and regional firms, the message is clear: ignoring AI or simply banning tools is not a strategy; proactive reinvention is.

AI Vulnerability Discovery: A New Arms Race in Cybersecurity and DeFi

Security researchers say AI is flipping traditional cost dynamics in vulnerability discovery. Automated systems like Anthropic’s Claude Mythos Preview helped Mozilla’s Firefox team find and fix 271 vulnerabilities in one release, after an earlier run surfaced 22 security-sensitive issues. This kind of AI vulnerability discovery can make it cheaper for defenders to harden code, but the same techniques are available to attackers probing for weaknesses. Anthropic’s specialised Mythos model goes further, stress-testing how different systems interact rather than just scanning individual smart contracts. Crypto risk experts now warn that the biggest threats lie in infrastructure: key management, signing services, bridges, oracles and web providers. A recent breach at infrastructure firm Vercel, traced to a third-party AI tool connected through Google Workspace, forced crypto projects to rotate API keys. For Malaysian banks, fintechs and Web3 startups, this underscores that AI security risks extend deep into supply chains, not just headline‑grabbing exploits.

From Washington to Kuala Lumpur: Regulating AI Without Halting Innovation

Political concern about AI regulation is rising alongside fraud and systemic risk. In the US, Senator Bernie Sanders has cited renowned AI scientists Yoshua Bengio and Geoffrey Hinton, warning that unchecked AI could one day surpass human intelligence and operate beyond human control. He notes that despite a 2023 letter signed by over 1,000 experts urging a pause in AI development, Congress has yet to enact strong guardrails. For Malaysia and the wider region, the challenge is similar: banning tools like chatbots or code assistants is unrealistic when they also power productivity and security. Instead, regulators and businesses need AI-aware compliance: clear disclosures when AI is used, stricter onboarding for crypto platforms, tighter controls on third-party AI integrations and mandatory incident reporting. Consumers should assume unsolicited investment pitches, romance outreach and “urgent” calls can be AI-assisted, verify through independent channels, and treat any request to move funds into crypto as a major red flag.