A New Gemini AI Model Aims Squarely at ChatGPT

Google is preparing a new Gemini AI model that is expected to debut at its I/O conference, with performance reportedly targeting OpenAI’s GPT-5.5 class while still trailing Anthropic’s Mythos frontier model. That positioning makes the release less about bragging rights and more about practical parity with ChatGPT. The timing is deliberate: Google is putting Gemini updates on stage directly in front of a developer audience that now treats AI as core infrastructure rather than a novelty. The company’s challenge is not to convince people that Gemini is powerful on paper, but to show that it is powerful in the ways that matter in daily workflows: speed, stability, and reduced friction. If the new model can reliably deliver those qualities, it could meaningfully narrow the perceived gap in performance and usefulness with ChatGPT for both everyday users and technical teams.

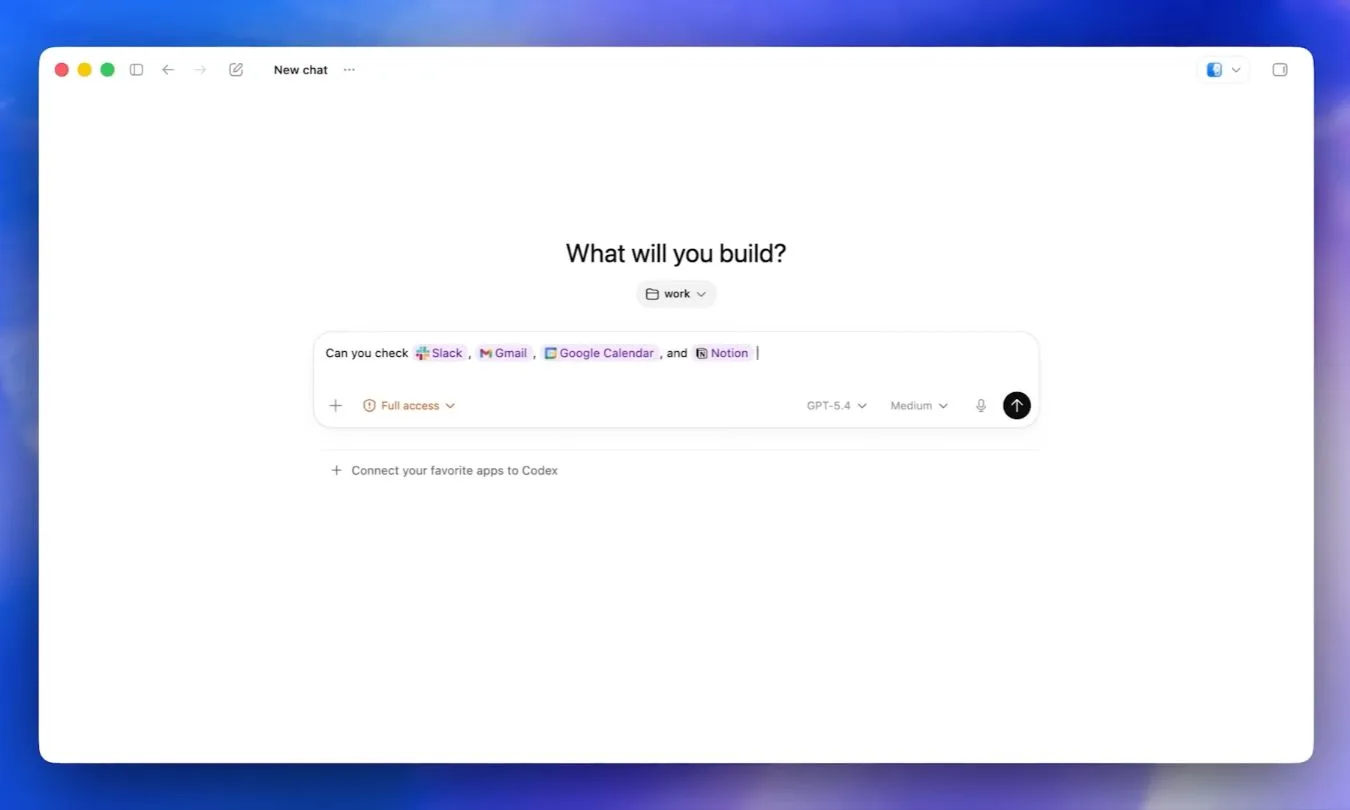

Developer Habits Will Decide Whether Gemini Sticks

For Google, the real battleground is not benchmarks but developer behavior. Most engineers already have established AI routines and mental shortcuts, often centered on ChatGPT or Claude. To dislodge those habits, Gemini must become the tool developers reach for first when they are on deadline or debugging gnarly code, not just the model they try during a keynote demo. That means faster responses, fewer hallucinations, and code that integrates cleanly into existing stacks without inflating the cleanup bill. The focus on agentic coding in Google’s I/O agenda underscores the point: coding is where developers can tell immediately whether a model genuinely helps or simply adds another tab to juggle. If Gemini can show clear advantages inside real projects (refactors, tests, integrations), developers may gradually rebuild their workflows around Google AI development tools. Without that, even a strong model risks fading into the background of an already crowded AI landscape.

Agentic AI Capabilities as the Next Enterprise Frontier

Agentic AI capabilities are emerging as the next differentiator for enterprise AI, and Google is clearly betting on that frontier. At its Cloud Next event, the company introduced the Gemini Enterprise Agent Platform, designed to help organizations build, scale, and govern AI agents with orchestration, identity, observability, and security built in. On paper, that gives Google more than a collection of flashy demos; it offers a managed stack for complex, multi-step workflows. But the true test for any agent platform is how it behaves under messy real-world conditions: ambiguous instructions, bad inputs, evolving goals, and minimal human babysitting. Enterprises will judge Gemini-based agents on whether they can reliably handle these scenarios without constant intervention. If Google can demonstrate agents that survive real work, not just curated stage tasks, it could carve out a strong position in the agentic AI market alongside, and potentially ahead of, other ChatGPT competitors.

Can Google Shift the Default from ChatGPT to Gemini?

Beyond raw capability, Google’s toughest challenge is psychological: changing what feels automatic. For many users, opening ChatGPT has become a reflex for coding help, research, and experimentation with agentic workflows. Gemini is still playing catch-up as a reflex of that kind. To change this, the new model and related tools must make Gemini feel unavoidable: faster for code, more robust for research, and more trustworthy for agentic tasks that run in the background. Google’s strategy appears more refined than it was a year ago: instead of scattered announcements, it now pairs Gemini model improvements with a broader ecosystem, from developer tooling to enterprise agent platforms. If this alignment translates into tangible time savings and fewer headaches for users, Gemini could finally interrupt entrenched habits and earn a default spot in daily AI routines, closing not only the performance gap with ChatGPT but also the adoption gap.