Why “Faster” Isn’t Enough: The AI Measurement Gap

Many engineering leaders hear that AI coding tools shorten development cycles, yet struggle to prove those gains are real or durable. Industry numbers vary wildly: controlled trials report large task-level speedups, forecasts predict sizable future productivity gains, and surveys show only a minority of teams experiencing high benefits today. These figures describe different things (isolated tasks, future projections, and current perceptions), so none of them alone can validate the ROI of AI coding tools for your organization. At the same time, new research links AI adoption to both higher throughput and lower delivery stability, while other studies suggest AI can even slow experienced developers in certain scenarios. This mix of optimism and caution leaves finance and engineering leaders budgeting against anecdotes instead of evidence. To move beyond guesswork, teams need a structured way to connect AI-driven cycle time improvements to measurable engineering outcomes and, ultimately, business value.

Use DORA Metrics as Your AI ROI Backbone

DORA metrics give you a proven backbone for measuring developer productivity in the AI era. Rather than counting how many lines of code an AI assistant writes, you track how AI affects deployment frequency, lead time for changes, change failure rate, and time to restore service. Recent DORA research frames AI as an amplifier: it strengthens already-good systems and worsens existing bottlenecks. DORA's ROI guidance links AI adoption to seven capabilities (such as a strong internal platform, disciplined version control, and AI-accessible data) that feed into better DORA metrics, improved developer and user experience, and eventually financial outcomes. The message is clear: the ROI of AI coding tools depends on the surrounding organizational system. You measure success not by raw code volume but by whether AI clears bottlenecks, shortens development cycle time, and maintains or improves delivery stability over time.

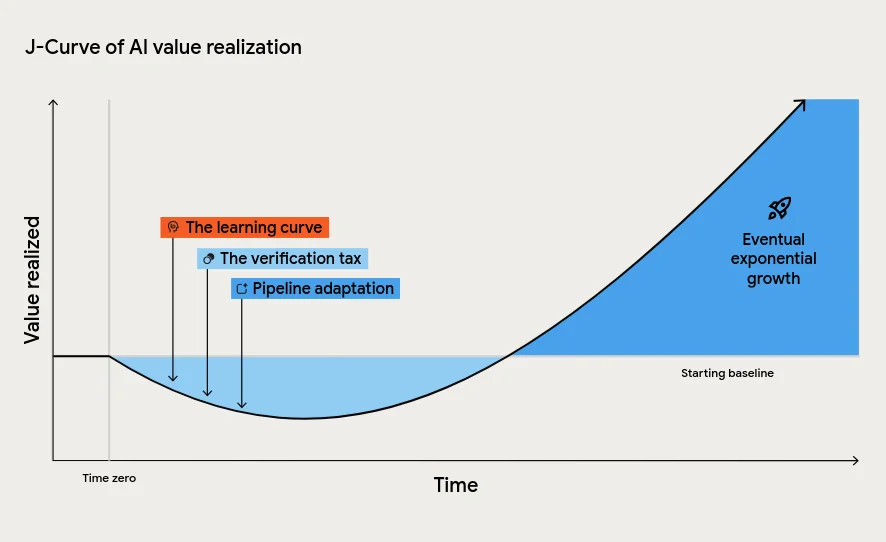
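To make the four metrics named above concrete, here is a minimal sketch of how they could be computed from a list of deployment records. The `Deployment` record shape, its field names, and the sample data are assumptions made for illustration; they are not part of DORA's guidance, and a real pipeline would pull these timestamps from your CI/CD and incident tooling.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from statistics import median
from typing import Optional


@dataclass
class Deployment:
    # Hypothetical record shape; adapt it to what your CI/CD system exports.
    committed_at: datetime                  # first commit of the change
    deployed_at: datetime                   # when the change reached production
    failed: bool = False                    # did it cause a production failure?
    restored_at: Optional[datetime] = None  # when service was restored, if it failed


def dora_metrics(deployments: list[Deployment], period_days: int) -> dict:
    """Compute the four DORA metrics over a fixed observation window."""
    if not deployments or period_days <= 0:
        raise ValueError("need at least one deployment and a positive window")
    lead_times = [d.deployed_at - d.committed_at for d in deployments]
    failures = [d for d in deployments if d.failed]
    restores = [d.restored_at - d.deployed_at for d in failures if d.restored_at]
    return {
        "deploys_per_day": len(deployments) / period_days,
        "median_lead_time_hours": median(lt.total_seconds() for lt in lead_times) / 3600,
        "change_failure_rate": len(failures) / len(deployments),
        # None when no failed deployment has a recorded restore time.
        "median_restore_hours": (
            median(r.total_seconds() for r in restores) / 3600 if restores else None
        ),
    }


# Made-up sample data, for illustration only.
t0 = datetime(2024, 1, 1)
sample = [
    Deployment(t0, t0 + timedelta(hours=10)),
    Deployment(t0, t0 + timedelta(hours=20), failed=True,
               restored_at=t0 + timedelta(hours=22)),
    Deployment(t0, t0 + timedelta(hours=30)),
    Deployment(t0, t0 + timedelta(hours=40)),
]
metrics = dora_metrics(sample, period_days=2)
print(metrics)
```

Computing the same dictionary for a pre-AI window and a post-rollout window gives you a like-for-like comparison that does not depend on code volume.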

Account for the J-Curve and the “Verification Tax”

Even with a solid framework, you should expect a J-curve of value realization when rolling out AI coding tools. At first, productivity may dip as developers learn new workflows and teams adapt their review practices to AI-generated code. This verification tax, the extra effort spent checking suggestions, tests, and documentation, can temporarily offset any speed gains. Downstream processes like testing, approvals, and incident management may also struggle to keep up with higher code throughput, creating an instability tax where change failure rates rise even as individual effectiveness improves. Rather than interpreting this dip as failure, treat it as the tuition cost of transformation. Track DORA metrics through this period: look for shorter lead times and higher deployment frequency, but also monitor change failure rate and recovery time. Your goal is to emerge from the dip with faster, more reliable delivery, not just more code merged.
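One way to track the dip is to label each post-rollout period against the pre-AI baseline. The sketch below is a simple heuristic, not an established method: the function name, the dictionary keys, the labels, and both thresholds are assumptions chosen for illustration and should be tuned to your own data.

```python
def classify_period(
    baseline: dict,
    current: dict,
    lead_time_tolerance: float = 0.05,  # relative change treated as noise (assumed)
    cfr_tolerance: float = 0.02,        # absolute change treated as noise (assumed)
) -> str:
    """Label one period relative to the pre-AI baseline.

    Both dicts are expected to hold 'lead_time_hours' and
    'change_failure_rate' (a fraction between 0 and 1).
    """
    lead_change = (
        current["lead_time_hours"] - baseline["lead_time_hours"]
    ) / baseline["lead_time_hours"]
    cfr_change = current["change_failure_rate"] - baseline["change_failure_rate"]

    faster = lead_change < -lead_time_tolerance
    slower = lead_change > lead_time_tolerance
    less_stable = cfr_change > cfr_tolerance

    if slower:
        return "dip"                      # consistent with a verification tax
    if faster and less_stable:
        return "faster but less stable"   # consistent with an instability tax
    if faster:
        return "faster and stable"        # the outcome you want after the dip
    return "flat"


# Made-up baseline, for illustration only.
baseline = {"lead_time_hours": 40.0, "change_failure_rate": 0.10}
print(classify_period(baseline, {"lead_time_hours": 44.0, "change_failure_rate": 0.10}))
```

Running this per month or per quarter produces a sequence of labels; a J-curve would show up as a run of "dip" periods followed by "faster and stable" ones.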

Measure Foundations First: AI Amplifies Your Current System

Before declaring success or failure on AI coding tools, evaluate your engineering foundations. DORA’s research shows AI magnifies existing strengths and weaknesses. Teams with clear workflows, robust CI/CD, automated testing, and high-quality internal platforms are positioned to turn AI suggestions into real throughput improvements. In less mature environments, AI risks creating localized pockets of productivity that disappear into downstream chaos: more partially tested changes, more manual reviews, and more operational noise. Practical steps include mapping your value stream, documenting handoffs, and checking whether AI has access to relevant internal code and documentation. Use these insights alongside DORA metrics to see where AI reduces friction versus where it amplifies dysfunction. The question isn’t “Does AI help?” but “Where in our system does AI remove bottlenecks, and where do we need foundational work before it can pay off?”

Go Beyond Perception with Commit-Level and Financial Analysis

To separate real productivity gains from perceived improvements, combine developer sentiment with hard data from your codebase and financial model. Commit-level metrics like Engineering Throughput Value (ETV) illustrate how each team’s output changes against its own pre-AI baseline, rather than against generic benchmarks. This helps validate whether claimed gains from AI tools show up as sustained throughput improvements without compromising quality. On the financial side, follow a structured ROI model: tie improvements in DORA metrics to non-financial outcomes such as better developer experience and user satisfaction, then estimate downstream cost savings and revenue impact. Treat these calculations as high-uncertainty, scenario-based estimates intended to inform decisions, not precise predictions. When commit patterns, DORA metrics, stability indicators, and financial signals all move in a consistent direction, you can credibly state that the ROI of your AI coding tools is real, not just marketing.
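The scenario-based estimate described above can be sketched as a small calculation. This is a deliberately simplified model under stated assumptions: it counts only developer time saved, and the function name, parameters, and every input figure are hypothetical placeholders rather than benchmarks. A negative value for hours saved models a scenario where the verification tax outweighs the gains.

```python
def roi_scenarios(
    hours_saved_per_dev_per_week: dict[str, float],
    developers: int,
    loaded_hourly_cost: float,
    annual_tool_cost: float,
    working_weeks: int = 46,  # assumed working weeks per year
) -> dict[str, float]:
    """Return ROI per scenario as (benefit - cost) / cost.

    Only time savings are modeled; quality, stability, and revenue
    effects would need their own terms.
    """
    if annual_tool_cost <= 0:
        raise ValueError("annual_tool_cost must be positive")
    results = {}
    for name, hours in hours_saved_per_dev_per_week.items():
        benefit = hours * developers * working_weeks * loaded_hourly_cost
        results[name] = (benefit - annual_tool_cost) / annual_tool_cost
    return results


# Placeholder inputs, for illustration only; substitute your own measurements.
scenario_roi = roi_scenarios(
    {"pessimistic": -0.5, "expected": 1.0, "optimistic": 3.0},
    developers=50,
    loaded_hourly_cost=100.0,
    annual_tool_cost=60_000.0,
)
for name, roi in scenario_roi.items():
    print(f"{name}: {roi:.2f}")
```

Reporting the full range instead of a single figure keeps the uncertainty visible to finance and engineering leaders alike.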