Velocity Over Verification: How AI Short-Circuits Code Review Practices

AI tooling has made it dramatically easier to spin up new features, APIs, and internal tools in minutes. But beneath the hype about unprecedented velocity lies a quieter, more troubling trend: developers shipping unaudited AI-generated code simply to keep up with workload demands. In interviews, programmers describe teams “vibe-coding” their way to production, relying on AI agents to implement sweeping changes that are too large for anyone to fully track. Traditional code review practices (line-by-line audits, peer walkthroughs, and careful design discussions) are often skipped or reduced to cursory approvals because the volume of AI output is overwhelming. Senior engineers remain on the hook for high-risk changes, yet they must now review larger, more complex diffs produced by tools they did not operate. The result is a dangerous illusion of safety: speed is celebrated while subtle bugs, security flaws, and duplicated logic quietly accumulate in the codebase.

Developer Skill Erosion in the Age of AI-Generated Code

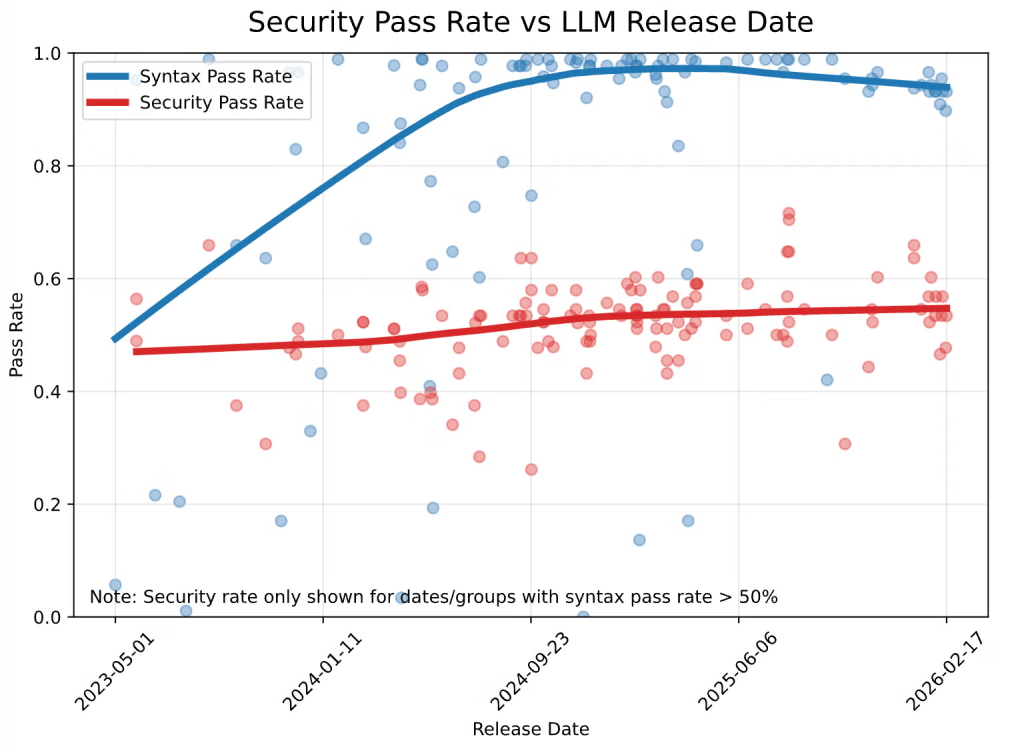

AI assistance was supposed to free developers from drudgery so they could focus on higher-value work. Instead, many engineers report a slow erosion of core skills. Some describe forgetting how to use frameworks they once mastered, likening the experience to forgetting phone numbers after smartphones became ubiquitous. For junior developers, AI-generated code can raise the floor by catching obvious mistakes and offering quick examples. But heavy reliance comes at a cost: the mental muscles built through debugging, architectural reasoning, and problem decomposition get less exercise. Over time, these developers struggle to progress to more senior levels because their thinking is mediated by prompts rather than shaped by their own design decisions. The cognitive load also shifts, from reasoning about systems to coaxing an AI into generating acceptable output, which leads to burnout and a shallow understanding of the resulting software. Because the quality of AI-generated code varies, this skill atrophy leaves teams less able to judge what is safe to ship.

Hidden Technical Debt and the Future Maintenance Shock

The productivity story around AI-generated code emphasizes rapid delivery but often omits the cleanup bill that arrives later. Quality debt accumulates quietly: duplicated patterns, inconsistent abstractions, and subtle logic flaws spread across the codebase as AI tools optimize for local correctness, not long-term coherence. Many changes are large-scale and cross-cutting, making it hard for any single engineer to fully understand their impact. When these systems inevitably require refactoring or adaptation, teams discover that the people who “shipped” the original features never deeply understood how they worked. This creates fragile systems that are costly to modify and difficult to debug, especially during incidents when time is critical. The result is a future maintenance shock, where software maintenance costs surge, eroding the early gains in velocity. Without deliberate technical debt management—explicit cleanup cycles, stricter review gates for AI-generated code, and architectural oversight—organizations effectively trade short-term speed for long-term instability.
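One way to picture a "stricter review gate for AI-generated code" is as a small policy function. The sketch below is illustrative only: the `ChangeRequest` fields, the `ai_generated` flag (assumed to be declared by the author), and the thresholds are all hypothetical and not taken from any particular review tool.

```python
from dataclasses import dataclass


@dataclass
class ChangeRequest:
    """Hypothetical summary of a proposed change; not tied to any real review tool."""
    title: str
    lines_changed: int
    ai_generated: bool             # assumed to be self-declared by the author
    touches_high_risk_paths: bool  # e.g. auth or billing code, as defined by the team


# Illustrative thresholds only; a real team would tune these.
MAX_AI_DIFF_LINES = 400
BASE_APPROVALS = 1


def review_requirements(change: ChangeRequest) -> dict:
    """Return the review gate for a change: approvals needed and whether to split it."""
    approvals = BASE_APPROVALS
    must_split = False

    if change.ai_generated:
        # AI-generated changes get one extra human approval.
        approvals += 1
        # Oversized AI diffs are sent back to be split into reviewable pieces.
        if change.lines_changed > MAX_AI_DIFF_LINES:
            must_split = True

    if change.touches_high_risk_paths:
        # High-risk areas always get an additional reviewer.
        approvals += 1

    return {"approvals": approvals, "must_split": must_split}
```

Under these assumed rules, a 1,200-line AI-generated change that touches high-risk paths would need three approvals and would be sent back for splitting, while a small hand-written change would pass with one. The design choice being illustrated is that the gate scales with both provenance and diff size, so reviewers are not asked to rubber-stamp changes too large to understand.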

Uneven Debt Across Engineering Orgs, Indie Developers, and Ecosystems

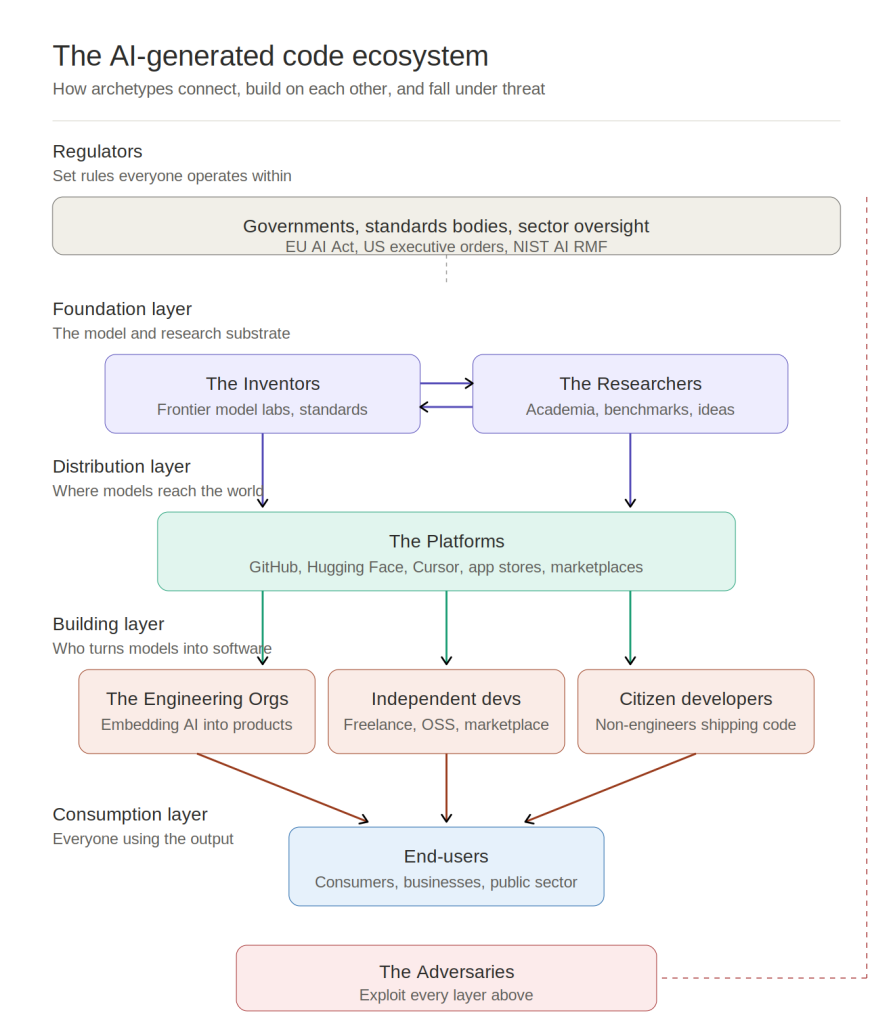

The hidden costs of AI-generated code are not evenly distributed. Engineering organizations with established processes gain the most short-term benefit, using AI to accelerate feature work and internal automation. Yet they also incur the largest cleanup costs, as senior engineers must review high-risk changes and handle incidents in increasingly opaque systems. Independent developers benefit from democratized development and can ship products solo at a pace once reserved for teams, but they often lack formal safeguards, so technical debt management remains an afterthought until something breaks. Citizen developers can now generate working code and applications without traditional training, contributing to broader ecosystems that must later integrate, secure, and maintain these artifacts. Across all three groups, the quality problem compounds: code is produced at scale by people with varying levels of expertise and limited contextual understanding. The result is a patchwork of maintainability, where some components are robust while others become brittle liabilities over time.

From Productivity Mirage to Sustainable AI Adoption

Organizations embracing AI-assisted development often see an initial productivity spike: faster shipping, leaner teams, and rapid prototyping. But as systems age, delayed cleanup costs begin to surface, offsetting those early gains. Incidents last longer because no one clearly owns or understands the AI-generated modules; refactors stall when teams fear breaking opaque logic; and promotion paths blur as AI blunts opportunities for engineers to demonstrate deep technical leadership. To avoid this productivity mirage, teams need deliberate guardrails. These include redefining code review practices to explicitly flag AI-generated code, budgeting time for refactoring and documentation, and investing in training that keeps human reasoning at the center of design decisions. AI should augment, not replace, the craft of software engineering. Treating AI output as “first draft code” rather than production-ready material, and aligning incentives around maintainability rather than velocity alone, is critical to preventing today’s convenience from becoming tomorrow’s technical debt crisis.