Why RISC-V Matters for Edge AI Inference

RISC-V processors are becoming a compelling foundation for edge AI inference, especially where local LLM deployment and tight power budgets matter. Unlike proprietary instruction sets, the RISC-V architecture is open and vendor-neutral, so chip designers and system builders can add custom extensions tailored to AI workloads. That flexibility suits AI accelerator boards designed to run transformer models, multimodal pipelines, or robotics stacks without relying on the cloud. When inference happens locally, response time is limited by hardware speed rather than network conditions, and sensitive data never has to leave the device. This combination of openness, tunability, and privacy is driving a growing ecosystem of open source hardware, software stacks, and tooling. From compact single-board computers to modular laptops, RISC-V platforms can now host models in the tens of billions of parameters, pushing generative AI and classic ML closer to where data is actually produced.

Inside Sipeed’s K3: Compact RISC-V Board for Local LLM Deployment

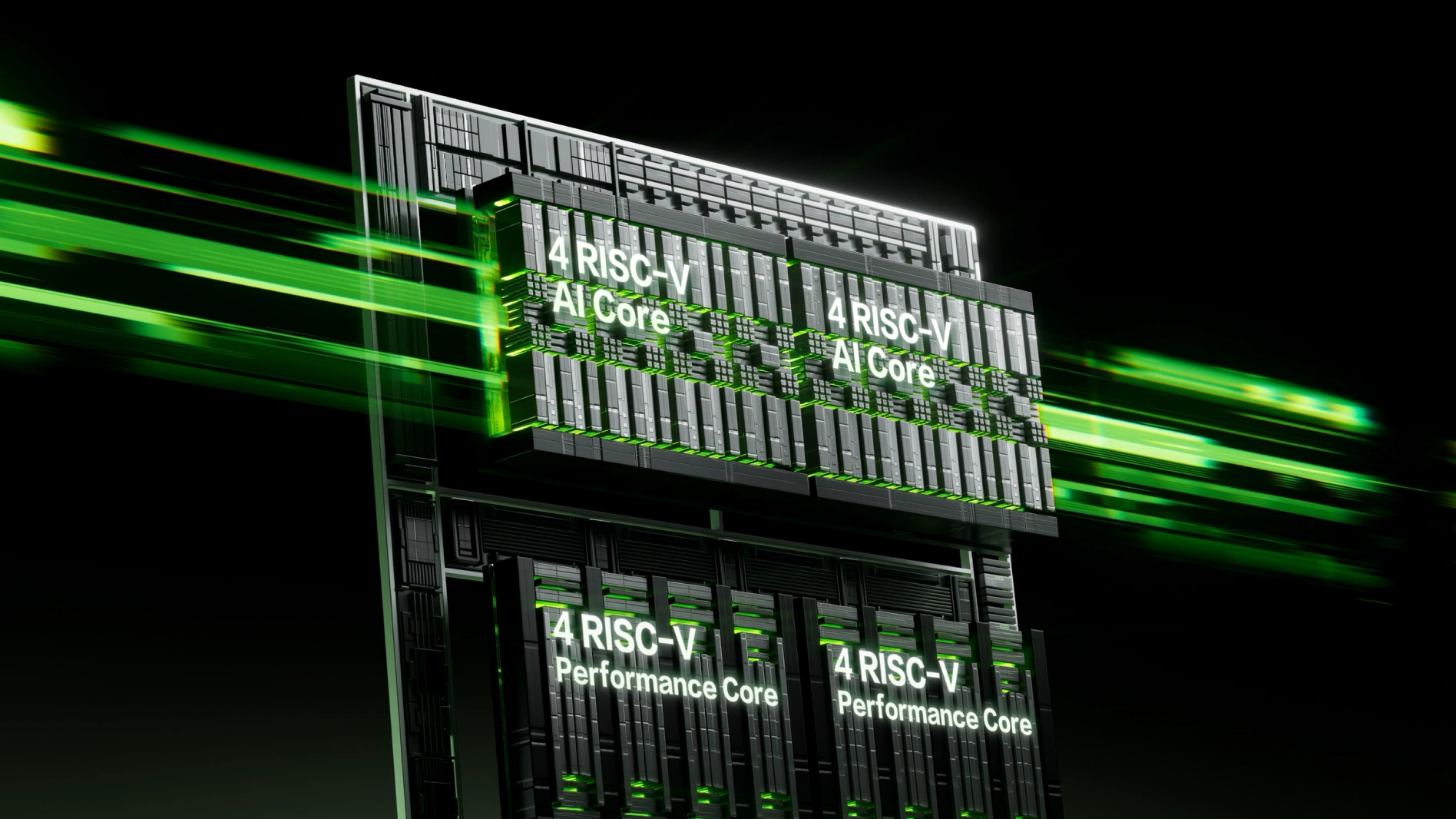

Sipeed’s K3 series single-board computers show how far RISC-V edge AI has come. Built around SpacemiT’s Key Stone K3 SoC, each board combines 8 high-performance X100 cores with 8 A100 AI cores, delivering up to 130k DMIPS of general compute and a 60 TOPS NPU for acceleration. With up to 32 GB of LPDDR5 running at 6400 MT/s for 51 GB/s of bandwidth, the K3 can keep large models resident in memory for local LLM deployment. Sipeed reports around 15 tokens per second on Qwen-3.5 35B and rates the board for models of roughly 30B parameters, all without cloud assistance. The Pico-ITX design offers modern I/O including 10GbE, USB-C with USB-PD and DisplayPort alt-mode, and PCIe Gen3 for NVMe storage. Hardware compatibility with Jetson Orin Nano carrier boards makes it easier to migrate existing edge AI projects from other architectures to a RISC-V processor.
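The memory figures above can be sanity-checked with some back-of-the-envelope arithmetic. The sketch below is not from Sipeed: the 64-bit bus width is an assumption chosen because it reproduces the quoted 51 GB/s, the function names are illustrative, and the footprint estimate ignores KV cache and runtime overhead.

```python
def peak_bandwidth_gb_s(transfers_mt_s: float, bus_width_bits: int) -> float:
    """Theoretical peak DRAM bandwidth in GB/s (1 GB = 1e9 bytes)."""
    bytes_per_transfer = bus_width_bits / 8
    return transfers_mt_s * 1e6 * bytes_per_transfer / 1e9


def weight_footprint_gb(params_billion: float, bits_per_weight: int) -> float:
    """Approximate weight storage in GB; ignores KV cache and runtime overhead."""
    return params_billion * 1e9 * bits_per_weight / 8 / 1e9


def decode_ceiling_tok_s(bandwidth_gb_s: float, gb_read_per_token: float) -> float:
    """Upper bound on decode speed when each token streams this much data from DRAM."""
    return bandwidth_gb_s / gb_read_per_token


if __name__ == "__main__":
    ram_gb = 32                              # largest configuration named in the article
    bw = peak_bandwidth_gb_s(6400, 64)       # ASSUMPTION: 64-bit LPDDR5 bus
    print(f"peak bandwidth: {bw:.1f} GB/s")

    for bits in (16, 8, 4):
        size = weight_footprint_gb(35, bits)  # 35B parameters, as in the quoted model
        verdict = "fits" if size < ram_gb else "does not fit"
        ceiling = decode_ceiling_tok_s(bw, size)
        print(f"{bits:>2}-bit weights: {size:5.1f} GB ({verdict} in {ram_gb} GB), "
              f"dense decode ceiling about {ceiling:.1f} tok/s")
```

Under these assumptions, only the 4-bit variant of a 35B model fits in 32 GB, and a dense model that reads all of its weights for every token would be capped well below 15 tokens per second by memory bandwidth alone. The reported figure is therefore plausible only if far less data is read per token, for example with a sparsely activated model; the source does not say which is the case.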

Framework Laptop 13 Meets RISC-V: SpacemiT K3 in a Modular Form

RISC-V is not limited to developer boards; it is also entering modular laptops. The DC-ROMA RISC-V Mainboard III brings a 2.5 GHz SpacemiT K3 octa-core RISC-V processor to the Framework Laptop 13, offering up to 60 TOPS of AI performance in a portable form factor. As with other Framework mainboards, it can serve either as the heart of a laptop or as a standalone desktop once a display, keyboard, mouse, and power supply are attached. Configurations start at USD 699 (approx. RM3,270) for a standard model with 16 GB of RAM and no SSD, with options up to 32 GB of memory. Storage and connectivity are handled via an M.2 2280 slot for PCIe NVMe or SATA SSDs, an M.2 2230 wireless slot, a microSD reader, and multiple USB-C ports with support for DisplayPort 1.4 and 65 W USB-PD. The board ships with an Ubuntu 26.04 variant aimed at RISC-V developers, underscoring its role as an open, hackable AI PC platform.

Local AI Inference: Latency, Privacy, and Reliability Advantages

Both the Sipeed K3 SBC and the SpacemiT K3-powered Framework mainboard are designed to run AI models locally, which brings clear benefits over cloud-only approaches. Edge AI inference removes the network round trip from the response path, since prompts and sensor data are processed on-device instead of traveling to remote servers. This is critical for real-time applications such as robotics, industrial monitoring, or interactive assistants built on large language models. Local processing also improves privacy, because raw data and context stay on the device; only optional summaries or anonymized results need to be transmitted. Reliability improves as well, because systems remain usable under poor connectivity or strict bandwidth limits. RISC-V processors with integrated NPUs and generous memory make it feasible to deploy sizeable LLMs and other deep learning models directly at the edge, turning what were thin clients into fully capable AI nodes.
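The "summaries only" pattern described above can be illustrated with a small sketch. It is not taken from either product's software: the `summarize` function, the field names, and the sample readings are all hypothetical. It shows raw sensor values being reduced on-device to a few aggregate numbers, which are the only thing that would need to be transmitted.

```python
from statistics import mean


def summarize(readings: list[float], threshold: float) -> dict:
    """Reduce raw readings to aggregates; the raw values never leave this function's caller."""
    return {
        "count": len(readings),
        "mean": mean(readings),
        "max": max(readings),
        "alerts": sum(1 for r in readings if r > threshold),  # readings above threshold
    }


if __name__ == "__main__":
    # Hypothetical on-device temperature samples (degrees Celsius).
    raw = [20.0, 22.0, 30.0]
    payload = summarize(raw, threshold=25.0)
    # Only `payload` would be sent upstream; `raw` stays on the device.
    print(payload)
```

The same shape applies to LLM workloads: the prompt and its context are processed locally, and at most a derived result is shared.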

Open Source Hardware Ecosystem and Custom AI Workloads

The rise of RISC‑V‑based AI accelerator boards is tightly linked to the growth of an open source hardware ecosystem. Platforms like the Sipeed K3 and the DC‑ROMA RISC‑V Mainboard III embrace open standards at multiple levels: an open instruction set, mainstream Linux distributions such as Ubuntu‑based systems, and containerization support via Docker or similar tools. This openness lets developers profile, tweak, and extend their stacks across firmware, kernel, and user‑space frameworks to squeeze more performance out of AI workloads. For example, developers can experiment with quantized formats such as INT4 or FP8 on the K3’s 60 TOPS NPU, or tailor scheduling between CPU cores and the accelerator for mixed workloads that combine LLMs with vision or control logic. As more projects standardize on RISC‑V processor platforms, expect a richer library of optimized kernels, inference engines, and reference designs that further lower the barrier to deploying advanced AI at the edge.
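To make the INT4 idea concrete, here is a minimal sketch of symmetric per-tensor quantization in plain Python. It is a generic textbook scheme, not the K3 NPU's actual format or toolchain, and the function names and sample weights are illustrative assumptions.

```python
def quantize_int4(weights: list[float]) -> tuple[list[int], float]:
    """Map floats to signed 4-bit integers in [-8, 7] using one shared scale."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 7 if max_abs > 0 else 1.0   # largest magnitude maps to +/-7
    q = [max(-8, min(7, round(w / scale))) for w in weights]
    return q, scale


def dequantize(q: list[int], scale: float) -> list[float]:
    """Recover approximate floats from the 4-bit integers."""
    return [v * scale for v in q]


if __name__ == "__main__":
    weights = [0.70, -0.35, 0.10, 0.0, -0.70]     # hypothetical weight values
    q, scale = quantize_int4(weights)
    restored = dequantize(q, scale)
    max_err = max(abs(a - b) for a, b in zip(weights, restored))
    print("quantized:", q)
    print("scale:", scale)
    print("max abs error:", max_err)
```

Each weight then occupies 4 bits instead of 16 or 32, at the cost of a rounding error of at most half the scale per value. Production toolchains typically use per-channel or per-group scales to reduce that error.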