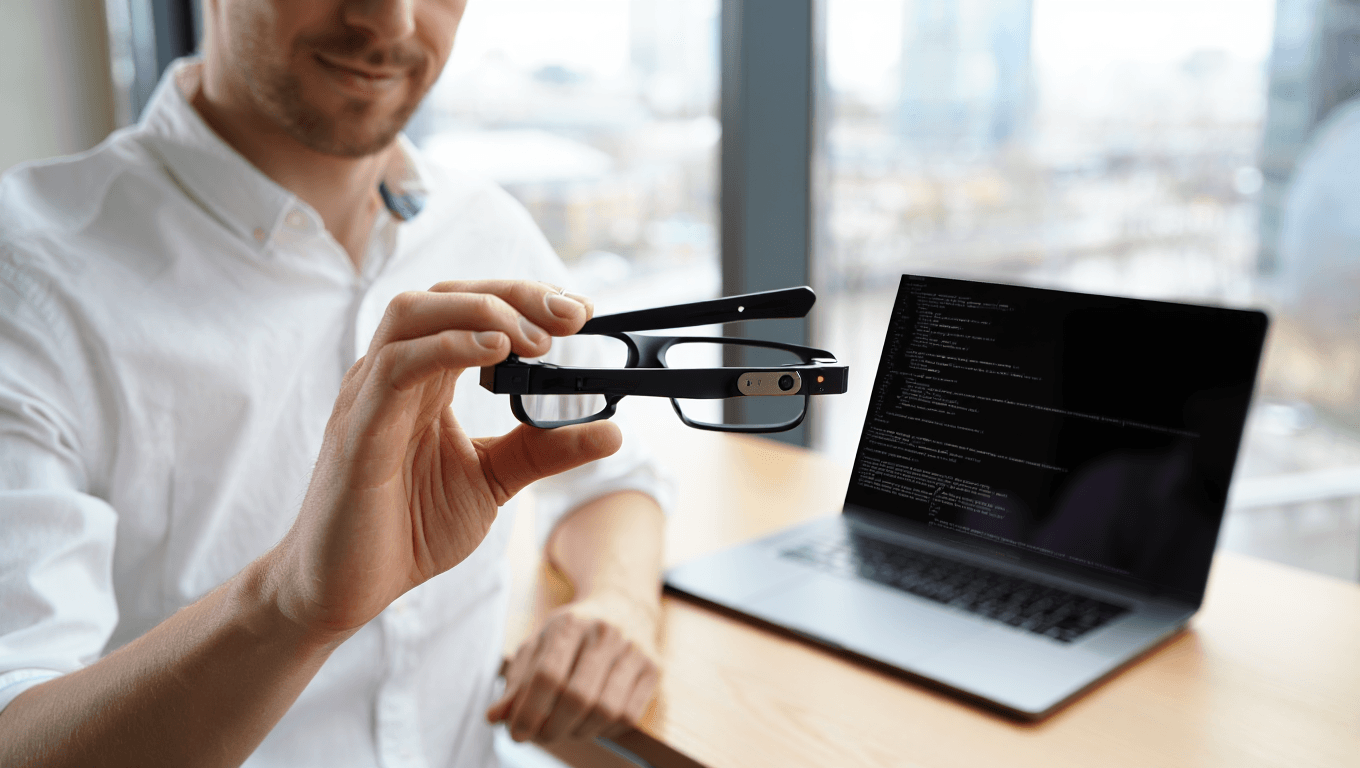

Why Google’s May Launch Window Matters for Smart Glasses

Google is circling May 2026 as the moment its consumer smart glasses finally step out of the lab and onto people’s faces. Recent Project Aura demos, highlighted by outlets like CNET and TechCrunch, show lightweight, camera‑equipped AR glasses that look built for everyday wear rather than niche developer experiments. The timing matters because hardware that can last most of the day is arriving just as the software is nearly ready to plug into your existing digital life. By moving beyond bulky headsets into glasses‑like designs, Google is signalling that AR is about to become a mainstream wearable AI technology rather than a novelty. The 2026 release window also compresses decision time for early adopters, developers, and even advertisers, who must quickly decide whether to build, buy, or hold off as Google pushes its next screen directly onto your face.

Android XR: The Missing Link Between Your Phone and Your Face

The real strategic play behind Google smart glasses is Android XR, the extended‑reality platform designed to connect glasses, phones, and AI services. Developers now have early Android XR roadmaps, meaning apps built for today’s Android ecosystem can be adapted for heads‑up overlays, spatial interfaces, and hand tracking without starting from scratch. Rumoured Gemini integration adds another layer, turning the glasses into a live channel for conversational AI. Instead of treating AR as a separate world, Android XR aims to make your glasses an extension of your smartphone, handling navigation, notifications, search, and collaboration through the same accounts, settings, and app libraries you already use. This tighter ecosystem integration reduces friction for both users and developers, promising faster app support and more consistent experiences across devices. In practical terms, your phone may become the compute hub while the glasses become the ambient, always‑ready display.

How Google Plans to Challenge Today’s AR and VR Heavyweights

Google’s timing positions it to challenge existing AR and VR competitors with a different angle: familiar Android at eye level. While current headsets often feel like isolated platforms, Google’s prototypes lean into everyday wear, with lighter frames, waveguide displays whose field of view approaches 60°, and claims of day‑long use. Snap and other rivals are also racing to refine AI‑powered glasses, but Google’s advantage lies in an ecosystem that already reaches billions of Android phones. By tying AR glasses to Android XR and Gemini, Google could offer developers a single stack for phones, tablets, headsets, and glasses. That eases the classic chicken‑and‑egg problem: if devices launch in 2026 with immediate access to navigation, messaging, and productivity apps, users get utility on day one, not years later. Competitors will need to respond quickly with their own integrations or risk becoming islands in a landscape of tightly connected wearable AI technology.

A New Interface for AI: From Phone Assistants to Ambient Overlays

Smart glasses may quietly redefine how you interact with AI assistants and digital information. Instead of pulling a phone from your pocket, you could glance at subtle overlays for directions, meeting reminders, or translations. With the rumoured Gemini integration, Google smart glasses could become a persistent conversational partner: listening through microphones, interpreting your surroundings through cameras and sensors, and responding with visual prompts anchored in the real world. Everyday tasks such as AR search, micro‑meetings, or remote collaboration could shift from discrete phone sessions to continuous, lightweight interactions. That raises new questions: how much always‑on sensing is acceptable, what recording etiquette should apply in public spaces, and how will users manage fatigue from persistent overlays? If 2026 is truly the inflection point for AR glasses, many users will be deciding whether to embrace this ambient AI layer now or wait for a second generation that tightens privacy and comfort standards.

What Early Adopters Should Watch Between Now and Launch

For early adopters, the months before Google’s smart glasses launch are about more than spec sheets. Engineers are still wrestling with battery life, heat, and optical safety, while regulators scrutinise camera use and biometric data. The open question is whether companies should ship lighter, faster hardware quickly or slow down for stricter certifications and clearer rules around always‑on sensing. On the user side, key metrics to watch include field of view, comfort over full‑day wear, and how well Android XR apps actually perform in real‑world conditions. Expect preorders and product announcements to cluster around major developer events, as vendors try to lock in developers and ecosystem partners early. Ultimately, your choice in 2026 may come down to whether you’re ready to trade some phone time for a persistent digital layer, knowing that your decision will help shape how future wearable AI technology balances convenience, privacy, and social norms.