From Mobile OS to Intelligence System

Google is reimagining Android as an “intelligence system” with the launch of Gemini Intelligence, a deep AI layer designed to sit on top of the operating system and orchestrate everyday tasks. Instead of treating Gemini as a standalone chatbot, Google is wiring it directly into Android so it can read on-screen context, understand what users are doing, and coordinate actions across apps, services, and devices. Gemini Intelligence debuts this summer on the latest Samsung Galaxy and Google Pixel phones before expanding to other Android-powered hardware, including watches, cars, XR glasses, and laptops. The AI-first pivot positions Android as a platform where proactive automation is a default expectation rather than an add-on feature, and it sets up a new phase of competition in mobile platforms as Apple prepares its own AI upgrades.

Proactive AI Automation: Beyond Chatbots to Task-Orchestrating Agents

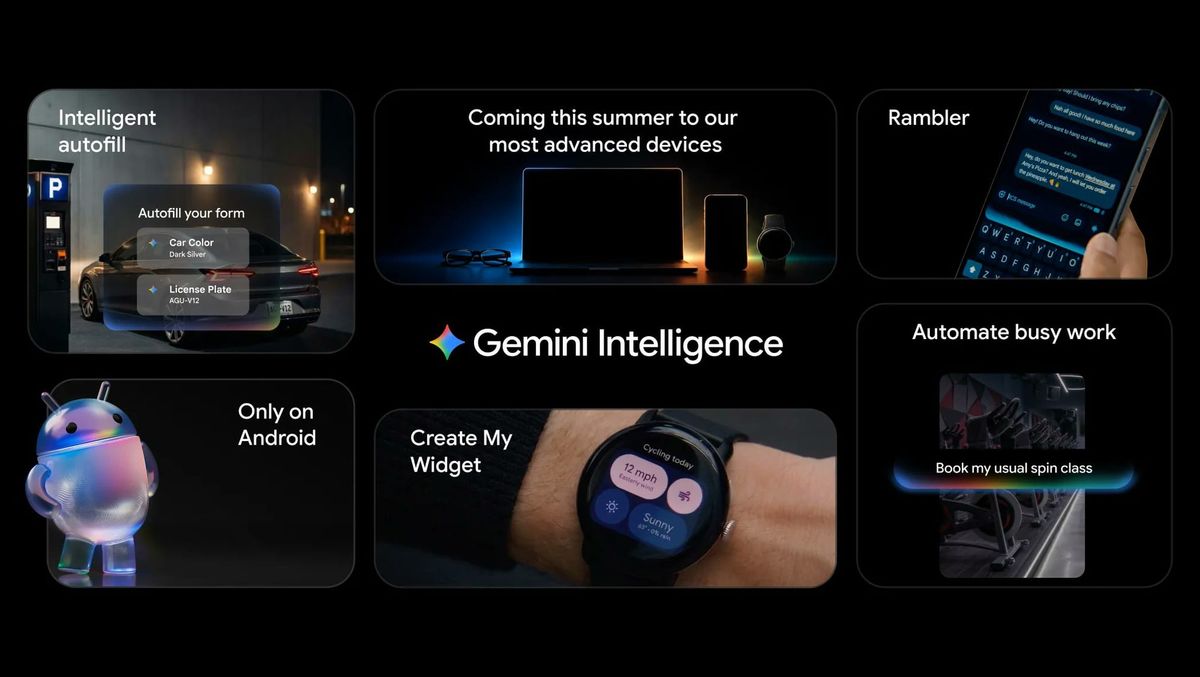

Gemini Intelligence marks a shift from reactive Q&A assistants to predictive, multi-step task management. Users can long-press the power button and ask Gemini to assemble a grocery cart from a notes app, re-order a favorite meal, book a ride, or plan a tour from a photo of a brochure. Gemini can also handle more complex workflows, such as booking classes, filling in online forms, and browsing sites to complete tasks with minimal manual tapping. Progress is visible via live notifications, and final actions still require user confirmation, keeping control in human hands. In Chrome for Android, Gemini 3.1 can summarize pages, answer questions, auto-browse to reserve parking or update orders, and even generate visuals, with some advanced features initially reserved for AI Pro and AI Ultra subscribers. Together, these features turn Gemini from an assistant that answers questions into an agent that carries out tasks on the user’s behalf.

Deep Google AI Integration Across Apps, Cars, and Devices

Google’s AI integration extends well beyond phones. Gemini Intelligence is being threaded through Android Auto, laptops, XR devices, and future “Googlebook” systems so that the same assistant can coordinate tasks wherever users are. In the car, Gemini-enhanced Android Auto will assist with on-the-road tasks such as managing messages or handling reservations, while keeping interactions voice-first and minimally distracting. On-device features like Create My Widget let users describe a custom widget, such as a rain-only weather tile or a meal-prep dashboard, and have Gemini build it automatically for Android or Wear OS. Gemini Intelligence also powers Rambler in Gboard, which cleans up speech-to-text by stripping filler words and pauses while preserving conversational flow, even across multiple languages. These integrations signal Google’s intent to make Gemini the connective tissue of the Android ecosystem, turning disparate apps and devices into a coordinated, AI-managed environment.

Personal Memory, Autofill Upgrades, and Privacy-by-Design

Under the hood, Gemini Intelligence builds on Google’s Personal Intelligence work, which, after explicit opt-in, links Gemini with services like Gmail, Photos, YouTube, and Search. This lets Gemini Intelligence remember context, such as emails, reservations, or past purchases, and use that knowledge to automate future tasks. That context is being fused with Android’s Autofill so the system can complete more complex forms using relevant information from connected apps, while the Gemini link remains optional in settings. Google emphasizes privacy controls: users can enable automation per app, all purchases need confirmation, and a revamped Privacy Dashboard shows which AI assistants were active and which apps they interacted with in the past 24 hours. Security measures such as Private Compute Core, Private AI Compute, protected KVM, and defenses against prompt injection are intended to ensure these new agentic capabilities do not compromise user data.
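The three user-facing controls described above (per-app opt-in, confirmation for purchases, and a 24-hour activity view) can be pictured as a simple policy check. The sketch below is purely illustrative: `AutomationPolicy`, `may_act`, `record`, and `recent_activity` are hypothetical names invented for this example and do not correspond to any real Android or Gemini API.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from datetime import datetime, timedelta


@dataclass
class AutomationPolicy:
    """Hypothetical model of the controls in the article; not a real Android API."""

    # Apps for which the user has switched automation on.
    enabled_apps: set[str] = field(default_factory=set)
    # Log of (timestamp, assistant, app) interactions.
    activity_log: list[tuple[datetime, str, str]] = field(default_factory=list)

    def may_act(self, app: str, is_purchase: bool, user_confirmed: bool) -> bool:
        """Allow an action only for opted-in apps; purchases also need confirmation."""
        if app not in self.enabled_apps:
            return False
        if is_purchase and not user_confirmed:
            return False
        return True

    def record(self, assistant: str, app: str, when: datetime) -> None:
        """Remember that an assistant interacted with an app at a given time."""
        self.activity_log.append((when, assistant, app))

    def recent_activity(self, now: datetime) -> list[tuple[str, str]]:
        """Return (assistant, app) pairs seen in the 24 hours before `now`."""
        cutoff = now - timedelta(hours=24)
        return [(a, app) for when, a, app in self.activity_log if cutoff <= when <= now]
```

In this toy model, an assistant could browse an opted-in grocery app freely, but a checkout step would be refused until the user confirms it, and only interactions from the last day would appear in the dashboard view.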

Getting Ahead of Apple in the Mobile AI Race

Gemini Intelligence arrives just as Apple prepares major updates to its own AI system, and Google is clearly racing to set the narrative first. By pushing Gemini into flagship Galaxy and Pixel devices ahead of rival announcements, Google is signaling that Android is becoming the most deeply AI-integrated mobile platform. Gemini’s ability to act across Gmail, Chrome, Android apps, and even cars underscores a strategy focused on end-to-end proactive AI automation rather than isolated chatbots. The rollout will start on newer premium Android devices, such as upcoming Galaxy S26 and Pixel 10 models, before expanding across more hardware categories over time. While regional availability and hardware constraints will shape how quickly features spread, Google’s early, system-level integration of Gemini gives it a visible lead in demonstrating what AI-first smartphones, cars, and laptops can look like in everyday use.