DeepSeek’s New Model Pushes Cost-Efficient Reasoning to the Forefront

DeepSeek’s new model, DeepSeek-V4, crystallises how far low-cost AI models have come in a short time. The Hangzhou startup first drew global attention when its R1 reasoning model delivered capabilities comparable to leading US systems at a fraction of the cost. V4 raises the bar again: the system offers an ultra-long context window of one million words, allowing it to ingest and reason over far larger bodies of text than most mainstream models. DeepSeek claims “drastically reduced compute and memory costs” alongside leadership in agent capabilities, world knowledge, and reasoning performance across both domestic and open-source segments. Two variants, V4-Pro and the more efficient V4-Flash, signal a strategy that spans high-end reasoning and mass-market deployment. Analysts describe the launch as an inflection point, arguing that by making long-context reasoning cheaper and faster, DeepSeek is expanding advanced AI beyond elite labs into everyday commercial applications.

Why Cheap, Long-Context Reasoning Models Matter Now

The timing of DeepSeek’s new model is strategic. As AI deployments scale, cloud providers and enterprises are grappling with soaring compute bills, particularly for models handling long context windows and complex agentic workflows. The reduced compute and memory requirements that DeepSeek claims for V4 directly target this bottleneck. By delivering one-million-word context support with lower infrastructure demands, DeepSeek positions itself as a pragmatic alternative in a market where cost per token and serving efficiency are increasingly decisive. This runs parallel to moves by hyperscalers rolling out specialised chips optimised for pre-training and high-concurrency reasoning, underscoring a broader shift: the next phase of AI competition turns on efficient reasoning, not just raw model size. If V4’s open-source preview delivers real-world performance gains, it could pressure incumbents to reprice long-context offerings and accelerate the spread of agentic AI into sectors that rely on large document corpora, such as legal, customer service, and industrial operations.

AI-Powered EVs and the Drive for Homegrown Intelligence

While DeepSeek is attacking cloud costs, the auto industry is turning vehicles into rolling AI platforms. China’s national “AI Plus” blueprint aims to embed AI systems across the economy, and cars are emerging as a flagship use case. Automakers are racing to build AI-powered EVs that rely on domestic chips and software, turning next-generation vehicles into self-reasoning machines rather than merely connected devices. Xpeng’s updated driving stack already allows drivers to issue natural-language commands like “park near the entrance to the shopping center,” with the vehicle using cameras to navigate even without detailed maps or coordinates. Consumer electronics players entering the EV space are also releasing updated AI models for their in-car systems. Executives argue there is no longer a clear line between a tech company and a car company, as AI-driven development cycles make vehicles faster to design, update, and differentiate in a fiercely competitive market.

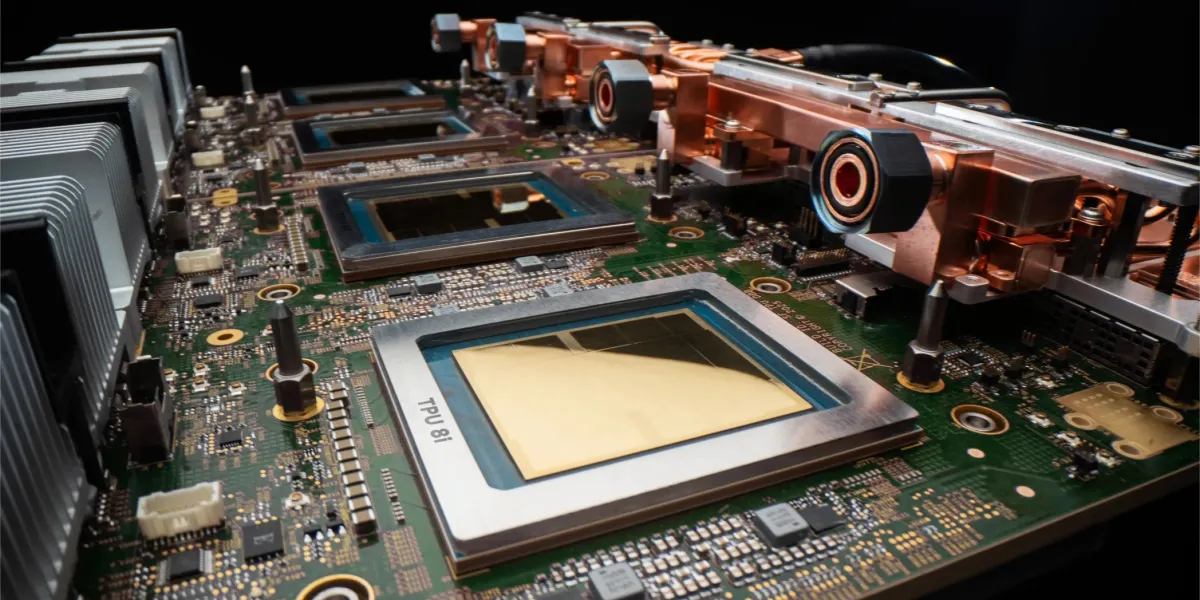

Chinese AI Chips and the Push for a Sovereign Stack

Behind both DeepSeek’s new model and AI-heavy EV strategies lies a concerted effort to reduce dependence on foreign compute. Policymakers have explicitly targeted high-end semiconductors as a vulnerability and are backing domestic GPU and accelerator vendors. Companies such as Muxi have built fully self-developed general-purpose GPU architectures and achieved mass production, including GPUs whose design, manufacturing, and packaging are all completed within the local supply chain. Executives there argue that the bottleneck is no longer single-point chip technology but ecosystem collaboration across the entire stack: hardware, frameworks, compilers, and applications. DeepSeek’s V4, with its emphasis on efficient reasoning, is a natural fit for such domestic hardware. In automotive, the ambition is similar: self-driving stacks, in-cabin assistants, and over-the-air AI services all running on Chinese AI chips and software. Taken together, these efforts aim to form an end-to-end, sovereign AI infrastructure spanning data centers and vehicles.

Implications for Global Rivals—and the Challenges Ahead

For Western automakers and cloud providers, these developments hint at the emergence of parallel AI ecosystems. If players like DeepSeek can routinely ship low-cost AI models with long context and strong reasoning, price pressure on premium cloud AI services is likely to intensify. In autos, domestically tuned self-reasoning driving systems, natural-language control, and tight integration with local digital services could become differentiating features that are hard for foreign brands to replicate quickly. At the same time, major hurdles remain. Export controls and restrictions on advanced chips still shape hardware roadmaps. Domestic GPU leaders warn that gaps in software ecosystems and industrial-chain coordination remain a core bottleneck. Trust and transparency will also be crucial: global customers scrutinising AI safety, data governance, and IP protection may hesitate before committing to unfamiliar platforms. The race is no longer just about model benchmarks; it is about building credible, cost-efficient, and globally trusted AI stacks end to end.