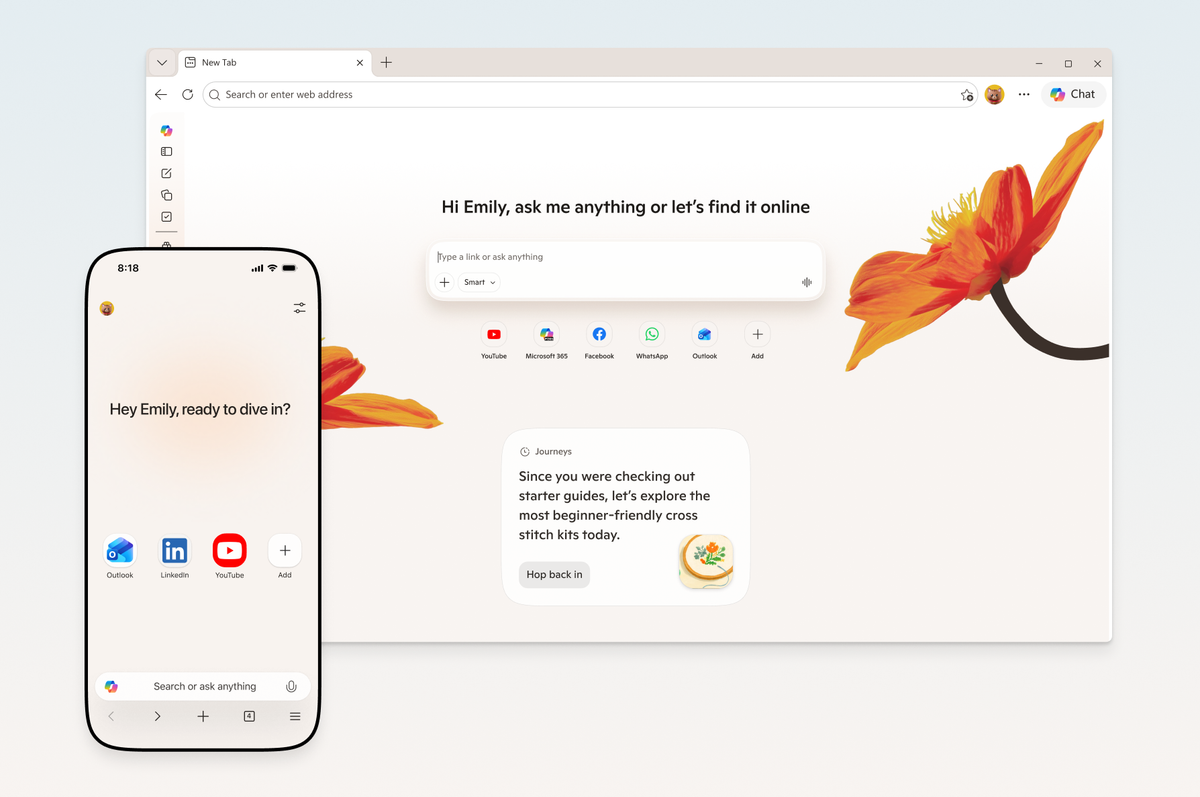

From Copilot Mode Experiment to Default Edge Experience

Microsoft is dismantling the wall between its experimental Copilot Mode and the mainstream Microsoft Edge Copilot experience. Instead of living in a separate mode, AI browser features are now woven directly into the standard Edge interface on both desktop and mobile. Users click or tap the Copilot button to reach tools like multi-tab reasoning, summaries, and comparisons, with no need to switch contexts or toggle special settings. The shift signals Microsoft's confidence that Copilot is ready for everyday browsing, not just for early adopters. Edge is being positioned as a full AI workspace spanning Windows, Mac, iOS, and Android, rather than a browser with a bolt-on chatbot. By making Edge AI integration the default, Microsoft is broadening access to its AI assistant and lowering friction for people who want help while they search, read, and work across devices.

Desktop-Only No More: Multi-Tab Reasoning, Journeys, and Memory Go Mobile

The most meaningful change is that formerly desktop-exclusive tools are coming to mobile. Copilot can now reason across multiple open tabs on phones, comparing information or summarizing key points, which is useful on the go for decisions involving bookings, product research, or news analysis. Journeys, which clusters browsing history into topic-based cards with summaries and suggested next steps, is also arriving on Edge mobile, helping users resume research they started earlier. Long-term memory lets Copilot draw on past conversations and browsing (with user permission) to give more contextual answers over time. Together, these tools bring the full research loop of discovering, comparing, and revisiting into a single mobile AI workspace. They close the gap between desktop and handheld productivity, turning Edge into a cross-device hub for ongoing projects rather than a series of isolated browsing sessions.

Vision, Voice, and Screen Sharing: A More Natural Mobile Copilot

Microsoft's Edge AI integration is also reshaping how people interact with the web through its Vision and Voice features. On both desktop and mobile, users can now share what's on their screen directly with Copilot, letting it see pages, images, or documents in context. On mobile, this is paired with real-time voice interaction, so users can talk to Copilot as they browse instead of typing prompts. That makes tasks such as asking follow-up questions about a page, clarifying confusing sections, or quickly summarizing dense content far more fluid, especially when multitasking. The experience is closer to an on-device assistant than a traditional search box. By embedding these multimodal capabilities directly into Edge, Microsoft is turning the browser into a conversational companion that understands what you see and say, not just what you type.

Study, Writing, and Audio Tools: Turning Edge into a Learning Workspace

On desktop, Microsoft is layering Copilot-driven study and creation tools directly into the browsing experience. Study and Learn mode can transform any webpage into guided sessions with quizzes, flashcards, and interactive prompts, so users can ask Copilot to “quiz me on this topic” instead of passively reading. A writing assistant embedded where users type offers drafting, rewriting, and tone adjustment, functioning as a context-aware upgrade to traditional spell check. Edge can also generate AI-produced podcasts from open tabs, condensing research or articles into audio—ideal for learning during commutes or downtime. These AI browser features emphasize Edge as a space for active learning and content creation, not just consumption. While availability and limits vary by feature and market, the overarching design is clear: Copilot turns the browser into a personalized tutor, editor, and narrator layered on top of everyday web pages.

What This Means for Browser-Based Productivity

By embedding Copilot directly into Edge across desktop and mobile, Microsoft is redefining what a browser is for. Instead of being a passive window onto websites, Edge becomes a cross-device productivity environment where AI helps manage research, writing, learning, and planning. Users can start a project on a laptop, continue it on a phone with Journeys and multi-tab reasoning, and get audio summaries for later listening—without ever leaving the browser. This reduces the friction of jumping between apps or tools when seeking AI assistance. It also positions Microsoft Edge Copilot as a central layer of assistance wherever users browse, not just in traditional desktop scenarios. As mobile AI tools mature and more everyday tasks move to phones, this strategy tightens the link between browsing and productivity, turning Edge into the primary “canvas” for consumer Copilot work.