From Signed-In Sessions to Supervised Automation

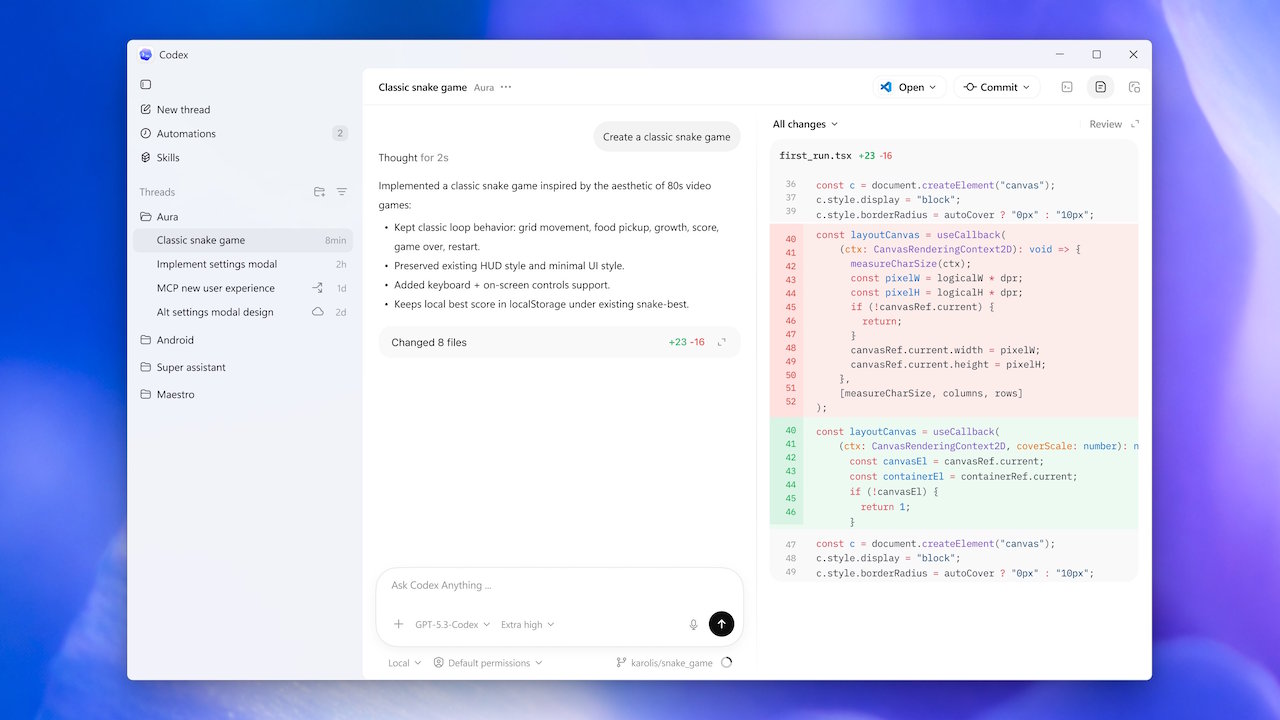

The Codex Chrome extension is designed to operate inside real, signed-in browser sessions, giving Codex access to the same authenticated web workflows that people rely on every day. Instead of depending solely on APIs, Codex can now navigate Gmail, Salesforce, LinkedIn, and internal dashboards directly in Chrome, where live account state and session context matter most. Users invoke it from within Codex, typically with a prompt that explicitly asks for Chrome when a task requires a logged-in website. Crucially, OpenAI avoids turning this into unrestricted browser automation. Codex runs in task-specific tab groups that keep its work separate from the user’s main window, so it can test web apps, review dashboards, and complete multi-step workflows without taking over the user’s active browsing. The result feels like a supervised assistant working alongside the user, rather than an opaque process that silently manipulates their entire session.
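To make the tab-group separation concrete, here is a minimal sketch of the ownership rule it implies: the agent may act only on tabs inside the group created for its task, and never on the tab the user is currently viewing. Everything here is hypothetical (`Tab`, `TaskTabGroup`, `canAgentActOn`, and `agentTabs` are illustrative names, not Codex internals); a real extension would read tab and group state from Chrome’s `chrome.tabs` and `chrome.tabGroups` APIs rather than from an in-memory array.

```typescript
// Hypothetical model of task-scoped tab groups; not the Codex or Chrome API.
interface Tab {
  id: number;
  groupId: number | null; // null when the tab belongs to no group
  active: boolean; // true for the tab the user is currently viewing
}

interface TaskTabGroup {
  groupId: number;
  taskName: string;
}

// The agent may act only on tabs inside its own task group,
// and never on the user's active tab.
function canAgentActOn(tab: Tab, group: TaskTabGroup): boolean {
  return tab.groupId === group.groupId && !tab.active;
}

// Returns the tabs the agent is allowed to drive for this task.
function agentTabs(tabs: Tab[], group: TaskTabGroup): Tab[] {
  return tabs.filter((t) => canAgentActOn(t, group));
}

const group: TaskTabGroup = { groupId: 7, taskName: "review-dashboard" };
const tabs: Tab[] = [
  { id: 1, groupId: null, active: true }, // user's foreground tab
  { id: 2, groupId: 7, active: false }, // agent tab for this task
  { id: 3, groupId: 7, active: false }, // agent tab for this task
  { id: 4, groupId: 3, active: false }, // a group the user created
];

console.log(agentTabs(tabs, group).map((t) => t.id)); // → [2, 3]
```

Keeping the check as a pure function of tab state makes it cheap to evaluate before every agent action, which is what "never takes over the active tab" requires in practice.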

AI Browser Security Through Tab Groups and Approval Gates

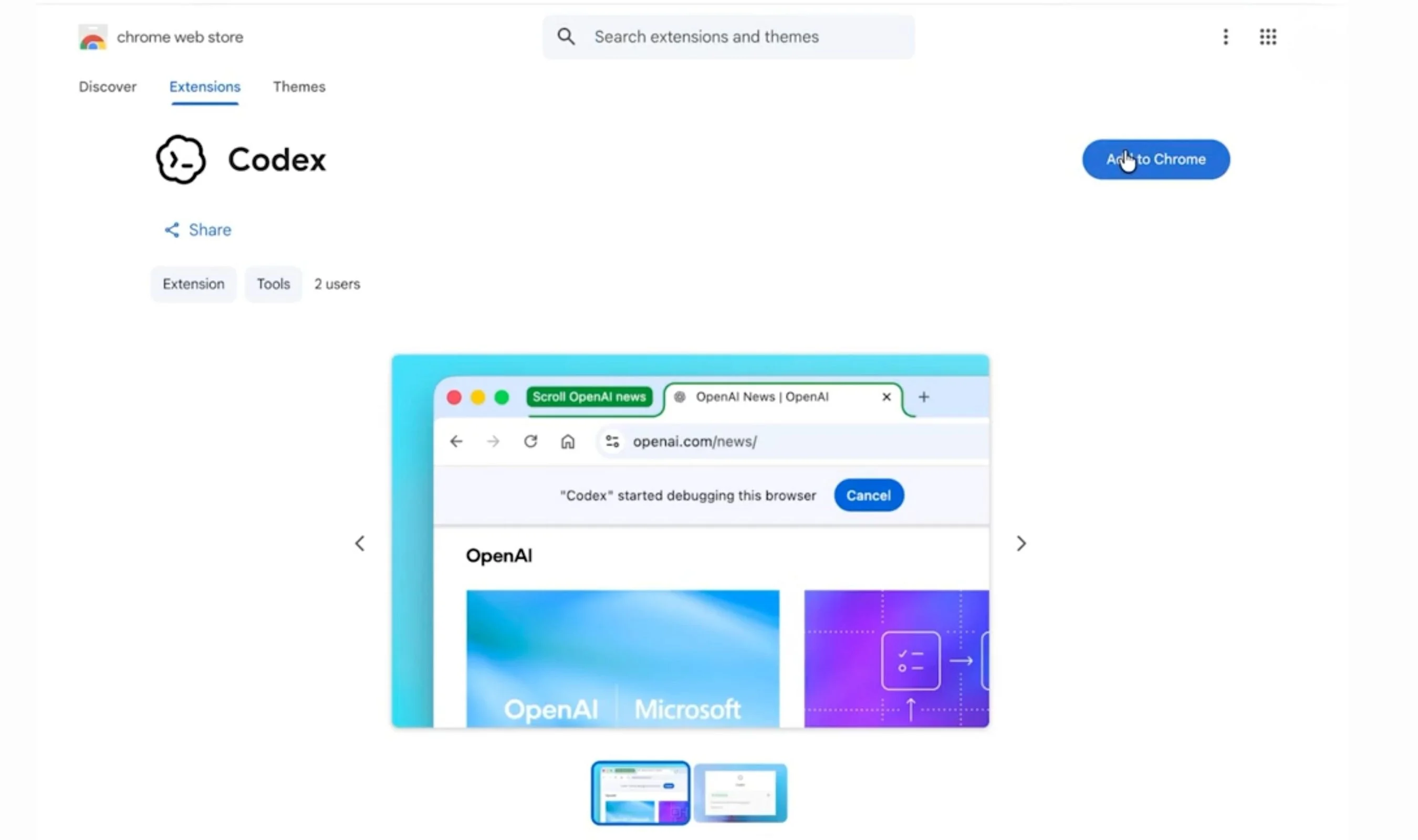

OpenAI’s browser security model for the Codex Chrome extension centers on deliberate constraints. Codex receives its own tab groups and even its own browser instance, which keeps background automation isolated from the user’s foreground work. The extension never takes over the active tab; it runs web development and operational tasks in parallel, using Chrome DevTools, gathering context across open tabs, and organizing results in its own workspace. Permissions are tightly scoped. Users install the extension from the Codex Plugins menu, then manage allowlists and blocklists in Computer Use settings. Each new website requires explicit approval, and browser history access is granted per request, with no permanent always-allow option. Per-host prompts and sensitive-action approvals add further checkpoints before Codex interacts with logged-in sites, reducing the risk that the agent strays beyond what the user intended or performs high-impact actions without clear consent.
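The approval rules above can be sketched as a small decision function. This is an assumption-laden model, not OpenAI’s implementation: the names `checkAction`, `hostMatches`, and `OneShotGrant`, the subdomain-matching rule, and the choice to let the blocklist override the allowlist are all illustrative. What it does capture from the text is that unknown hosts always prompt, sensitive actions prompt even on approved hosts, and history access is granted one request at a time.

```typescript
// Hypothetical permission gate; names and precedence rules are assumptions.
type Decision = "allow" | "deny" | "ask";

interface SitePolicy {
  allowlist: string[]; // hosts the user has approved
  blocklist: string[]; // hosts the user has blocked
}

// True if host equals pattern or is a subdomain of it
// (assumed matching rule: "mail.example.com" matches "example.com").
function hostMatches(host: string, pattern: string): boolean {
  const suffix = "." + pattern;
  return host === pattern || host.slice(-suffix.length) === suffix;
}

// Decides whether the agent may act on a host, and whether to prompt first.
function checkAction(host: string, sensitive: boolean, policy: SitePolicy): Decision {
  // Assumed precedence: the blocklist wins over the allowlist.
  if (policy.blocklist.some((p) => hostMatches(host, p))) return "deny";
  // A host the user has never approved always triggers an approval prompt.
  if (!policy.allowlist.some((p) => hostMatches(host, p))) return "ask";
  // Even on an approved host, sensitive actions need explicit confirmation.
  return sensitive ? "ask" : "allow";
}

// History access has no persistent "always allow": each approval covers one read.
class OneShotGrant {
  private used = false;

  consume(): boolean {
    if (this.used) return false;
    this.used = true;
    return true;
  }
}

const policy: SitePolicy = { allowlist: ["example.com"], blocklist: ["bank.example.com"] };
console.log(checkAction("app.example.com", false, policy)); // → allow
console.log(checkAction("bank.example.com", false, policy)); // → deny
console.log(checkAction("other.org", false, policy)); // → ask
console.log(checkAction("app.example.com", true, policy)); // → ask

const historyGrant = new OneShotGrant();
console.log(historyGrant.consume(), historyGrant.consume()); // → true false
```

Returning `"ask"` rather than a plain boolean keeps the prompt as a first-class outcome, so a caller cannot mistake a host that still needs approval for one that has already been allowed.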

Windows Sandbox Design: Offline Users, Firewall Checks, and DPAPI

Behind the scenes, Codex’s Windows sandbox architecture reinforces the safety of local and browser-based workflows. OpenAI’s redesign introduces separate offline and online sandbox users, keeping tasks offline by default unless the user explicitly allows broader network access. This layered model matters more as Codex moves beyond purely cloud-based execution into local developer environments. Before any child process runs, the sandbox routes actions through DPAPI-backed credential handling, firewall checks, and controlled command-runner handoffs. These safeguards limit how Codex can use local file and network access while still letting it read codebases and write within the active workspace, and while still supporting familiar surfaces such as the CLI, IDE extensions, and the desktop app. By combining strict execution layers with explicit user opt-ins for connectivity, OpenAI gives enterprises a governance framework that keeps local automation powerful but bounded, even when Codex is orchestrating complex authenticated web tasks through Chrome.
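A rough model of the launch sequence helps show how these layers stack. The sketch below is hypothetical and deliberately platform-neutral: it picks the offline sandbox user unless the request carries an explicit network opt-in, confines writes to the active workspace, and refuses the command-runner handoff if firewall rules for the chosen user are not in place. It does not touch real Windows accounts or firewall APIs, and it omits DPAPI (in practice, Windows functions such as `CryptProtectData`) entirely; `planLaunch`, `TaskRequest`, and `firewallReady` are illustrative names.

```typescript
// Hypothetical launch-plan model; it calls no Windows APIs and omits DPAPI.
type SandboxUser = "offline" | "online";

interface TaskRequest {
  command: string;
  writePaths: string[]; // paths the command intends to write, assumed canonical
  networkOptIn: boolean; // true only after the user explicitly allows network access
}

interface LaunchPlan {
  sandboxUser: SandboxUser;
  command: string;
}

// Case-insensitive, separator-agnostic prefix check for Windows-style paths.
// Assumes inputs are already canonicalized (no "..", no symlinks).
function isInsideWorkspace(path: string, workspace: string): boolean {
  const norm = (s: string) => s.replace(/\\/g, "/").replace(/\/+$/, "").toLowerCase();
  const p = norm(path);
  const w = norm(workspace);
  return p === w || p.slice(0, w.length + 1) === w + "/";
}

// Builds a launch plan, or throws before any child process would be started.
function planLaunch(
  req: TaskRequest,
  workspace: string,
  firewallReady: (user: SandboxUser) => boolean
): LaunchPlan {
  // Offline by default: the online user is chosen only on explicit opt-in.
  const sandboxUser: SandboxUser = req.networkOptIn ? "online" : "offline";

  // Writes are confined to the active workspace.
  for (const target of req.writePaths) {
    if (!isInsideWorkspace(target, workspace)) {
      throw new Error(`write outside workspace: ${target}`);
    }
  }

  // Refuse the command-runner handoff if firewall rules for this user are missing.
  if (!firewallReady(sandboxUser)) {
    throw new Error(`firewall rules not in place for ${sandboxUser} user`);
  }
  return { sandboxUser, command: req.command };
}

const plan = planLaunch(
  { command: "npm test", writePaths: ["C:\\work\\repo\\out\\report.txt"], networkOptIn: false },
  "C:\\work\\repo",
  () => true
);
console.log(plan.sandboxUser); // → offline
```

Throwing before a plan is returned mirrors the ordering described above: every check completes before any child process would start, so a failed check leaves nothing half-launched.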

Balancing Autonomous Agents with User Control in Authenticated Workflows

The Codex Chrome extension’s security model reflects a careful balance between autonomous AI capabilities and user control. On one side, Codex can navigate authenticated workflows across Gmail, Salesforce, internal tools, and custom dashboards, inspecting logs, filling forms, and testing web apps without constant human micromanagement. On the other, every layer of the experience keeps users in charge: they must install the plugin, approve Chrome’s prompts, and confirm the extension’s connection before any task runs. New hosts trigger fresh approvals, and a broken browser session sends users into explicit troubleshooting rather than failing silently. Meanwhile, sandbox controls on Windows constrain local behavior through offline sandbox users, DPAPI-backed credential handling, and firewall enforcement. Together, these browser- and OS-level safeguards let Codex act as a capable agent in authenticated web tasks while preserving a clear boundary: the AI works within user-defined lanes, under transparent rules, and with visible, revocable permissions.
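The user-facing gates in this paragraph form an ordered checklist, and the "no silent failure" property follows from always reporting the first unmet gate. The sketch below is a hypothetical model of that idea; `ExtensionState`, `preflight`, and the messages are illustrative, not the extension’s actual states or wording.

```typescript
// Hypothetical preflight model; field names and messages are illustrative.
interface ExtensionState {
  pluginInstalled: boolean; // installed from the Codex Plugins menu
  chromePromptsApproved: boolean; // Chrome's own permission prompts accepted
  connectionConfirmed: boolean; // user confirmed the extension's connection
  sessionHealthy: boolean; // the browser session is reachable
}

interface PreflightResult {
  ready: boolean;
  blocker: string; // empty when ready; otherwise the step the user must fix
}

// Checks each gate in order and stops at the first one that fails,
// so a broken setup surfaces as an explicit message instead of a silent no-op.
function preflight(s: ExtensionState): PreflightResult {
  const gates: Array<[boolean, string]> = [
    [s.pluginInstalled, "Install the Chrome extension from the Codex Plugins menu."],
    [s.chromePromptsApproved, "Approve Chrome's permission prompts."],
    [s.connectionConfirmed, "Confirm the extension's connection to Codex."],
    [s.sessionHealthy, "The browser session is broken; reconnect and retry."],
  ];
  for (const [passed, message] of gates) {
    if (!passed) return { ready: false, blocker: message };
  }
  return { ready: true, blocker: "" };
}

const result = preflight({
  pluginInstalled: true,
  chromePromptsApproved: true,
  connectionConfirmed: true,
  sessionHealthy: false,
});
console.log(result.ready, result.blocker);
// → false The browser session is broken; reconnect and retry.
```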