Firefox’s AI-Assisted Bug Surge

Mozilla’s security team says April was a breakthrough month for AI vulnerability detection in Firefox. The browser maker fixed 423 security bugs, compared with just 76 in March and a monthly average of 21.5 last year. Anthropic’s Mythos Preview model is credited with finding 271 of those issues in Firefox 150, including high-severity flaws like a 20-year-old heap use-after-free reachable via the XSLTProcessor DOM API. Many of the newly uncovered vulnerabilities are sandbox escapes, which are notoriously hard to reach with traditional fuzzing alone, suggesting that AI analysis can broaden security bug detection coverage. Mozilla engineers also report that AI models tried and failed to get past earlier hardening against prototype pollution, indirectly validating past defensive work. Yet they emphasize that results improved not only because of better models but also because of a refined “agentic harness” that filters AI output and boosts the signal-to-noise ratio in security reports.

Is Mythos Really Special—or Just Well Packaged?

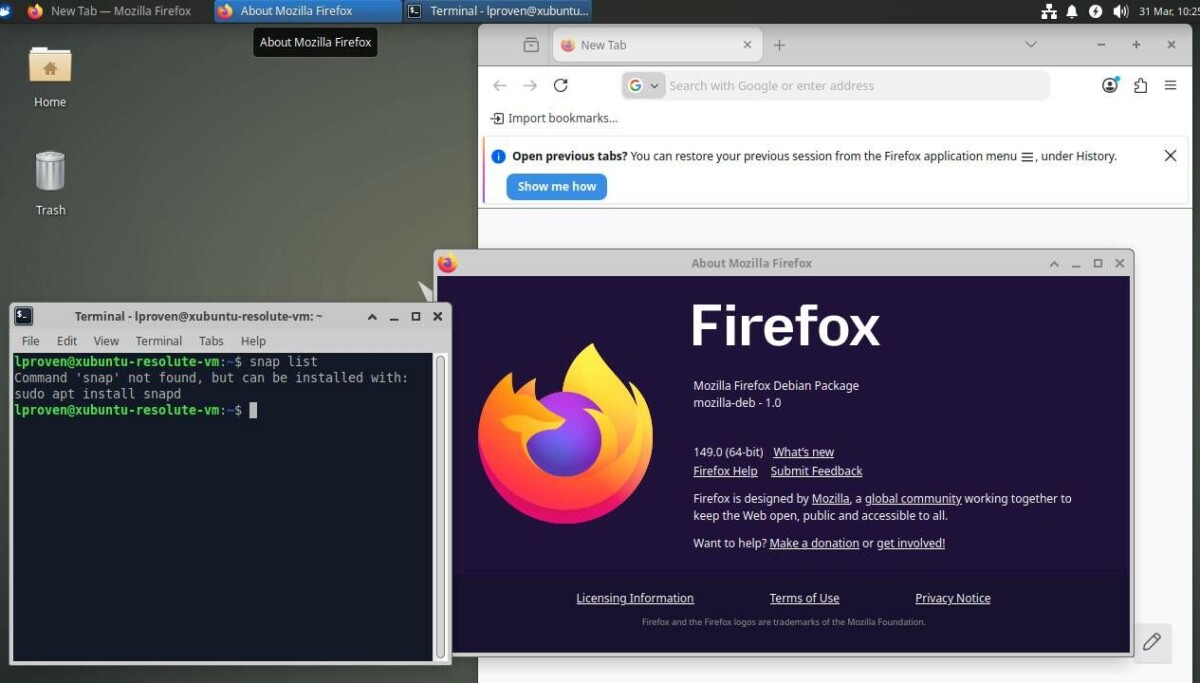

Despite Mozilla’s enthusiasm, security experts are questioning whether Mythos is uniquely capable or simply one capable model among many. Consultant Davi Ottenheimer argues that claims like “Mythos found 271 bugs” lack context because there is no head-to-head comparison with other AI security tools or conventional methods on the same codebase. In his own experiment, he plugged Anthropic’s cheaper Sonnet 4.6 and Haiku 4.5 models into a harness called Wirken with an auditing skill named Lyrik. That setup surfaced eight findings in two minutes, two of which matched Mythos-discovered bugs, at a reported cost of about USD 0.75 (approx. RM3.50). Other practitioners report productive bug hunting with off-the-shelf models like Opus 4.6, which also undercuts Mythos on price. For skeptics, the absence of transparent benchmarks makes it impossible to tell whether Mythos itself is the breakthrough or just the most hyped participant in a broader AI vulnerability detection trend.

The Quiet Star: Middleware and Agentic Harnesses

Both Mozilla’s account and independent experiments hint that architecture may be more transformative than any single model. Mozilla credits its “agentic harness” for steering Mythos and Opus 4.6 toward useful findings while suppressing noisy or low-value reports. Ottenheimer makes a similar point by showing that a carefully designed harness like Wirken, combined with a targeted auditing skill, can turn general-purpose models into efficient security bug detection engines. The pattern suggests that orchestration (task decomposition, tool integration, and automated triage) is doing much of the real-world heavy lifting. Good orchestration reduces manual review fatigue and lets security teams iterate quickly over large codebases. If harness design is the real differentiator, the competitive edge may come less from privileged access to a supposedly extraordinary model and more from disciplined engineering around workflows, prompts, logging, and verification pipelines that make AI security tools dependable instead of chaotic.
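Neither Mozilla’s harness nor Wirken is described in enough detail here to reproduce, so the following is only a minimal sketch of what the “automated triage” step might look like. The Finding fields, the triage function, and the 0.5 threshold are all hypothetical names and values chosen for illustration; they are not taken from either tool.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Finding:
    """One model-generated report. Every field here is a hypothetical assumption."""
    file: str
    bug_class: str
    reproduced: bool   # did an automated check (e.g. a crashing test case) confirm it?
    confidence: float  # model's self-reported score in [0, 1]


def triage(findings, min_confidence=0.5):
    """Drop unconfirmed or low-confidence reports and collapse duplicates."""
    seen = set()
    kept = []
    for f in findings:
        if not f.reproduced or f.confidence < min_confidence:
            continue  # noise: nothing confirmed it, or the model was unsure
        key = (f.file, f.bug_class)
        if key in seen:
            continue  # duplicate of a report that was already kept
        seen.add(key)
        kept.append(f)
    return kept
```

The point of the sketch is that the filter sits outside the model: only reports that pass an independent check and are not duplicates reach a human reviewer, which is one plausible way a harness could raise the signal-to-noise ratio regardless of which model produced the reports.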

When AI Security Tools Become the New Attack Surface

While AI helps find browser bugs, AI tooling can introduce fresh risks when its own security model is weak. Adversa AI’s TrustFall proof-of-concept shows how AI-powered developer tools like Claude Code can be turned into a one-click remote code execution vector through the Model Context Protocol (MCP). By planting configuration files such as .mcp.json and .claude/settings.json in a cloned repository, attackers can silently enable project-scoped settings that spawn an unsandboxed Node.js MCP server once a user clicks a generic “Yes, I trust this folder” prompt. Anthropic views that click as moving the issue outside its threat model, but Adversa counters that this is not informed consent, especially after a more explicit warning dialog was removed in a later CLI version. For teams adopting AI security tools, this proof-of-concept underlines a key lesson: UX decisions, default trust scopes, and pipeline integration can turn convenience prompts into critical security liabilities.
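One low-cost precaution is to check a freshly cloned repository for these files before opening it in an AI tool. The sketch below is an illustration only: the two file names come from the TrustFall description above, while the function name is hypothetical and the check covers only the repository root, not nested directories or other configuration locations a tool might read.

```python
from pathlib import Path

# File names taken from the TrustFall description; the list is not exhaustive.
SUSPECT_FILES = (".mcp.json", ".claude/settings.json")


def find_project_scoped_configs(repo_root):
    """Return the suspect config files that exist directly under repo_root."""
    root = Path(repo_root)
    return [name for name in SUSPECT_FILES if (root / name).is_file()]


if __name__ == "__main__":
    hits = find_project_scoped_configs(".")
    if hits:
        print("Review before trusting this folder:", ", ".join(hits))
    else:
        print("No project-scoped MCP or Claude settings files found at the root.")
```

A hit does not mean the repository is malicious, since legitimate projects also ship these files. It only signals that the contents deserve a read before clicking through a trust prompt.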

Adoption Hinges on Evidence—and Clear Warnings

The emerging consensus is that AI vulnerability detection can accelerate Firefox bug fixes and similar efforts, but measurable evidence and transparent UX will determine whether it scales safely. Security leaders want comparative benchmarks that show how Mythos, Opus, and other models perform under identical conditions, not marketing narratives framed as measurements. At the same time, demonstrations like TrustFall illustrate that user behavior and warning clarity are central to AI security tool adoption. Overly vague prompts such as “trust this folder” hide complex implications, from enabling MCP servers to expanding project-scoped permissions, and they blur the line between user error and design failure. For AI to become a trusted layer in security workflows, vendors will need to pair strong models and robust agentic harnesses with explicit, actionable warnings. They will also need to demonstrate, not merely claim, that their systems deliver better security than the status quo.