A Student’s LongMemEval Breakthrough: 90.4% Without a Single Vector

A first-year university student has just posted a standout result on the LongMemEval-S benchmark: 90.4% using structured storage, no embeddings, and roughly half the token consumption of typical retrieval augmented generation pipelines. Their system achieves around 98% retrieval accuracy across 500 questions over chat histories exceeding 115,000 tokens, a regime where many vector-database approaches start to crumble. Instead of relying on cosine similarity over embeddings, the design uses a three-stage pipeline—retrieve, process, store—where the outer stages maintain structured maps of topics, facts, and ledgers. The middle stage only processes the relevant slice of memory for each query, averaging about 15,000 tokens split between cached system context, dynamic content, and a short tail. This AI memory benchmark result matters because it shows that careful write-time organisation can outperform fuzzy similarity search in demanding, long-horizon conversational settings.

RAG Without Vectors: Reasoning Over Structured Storage Instead of Similarity Search

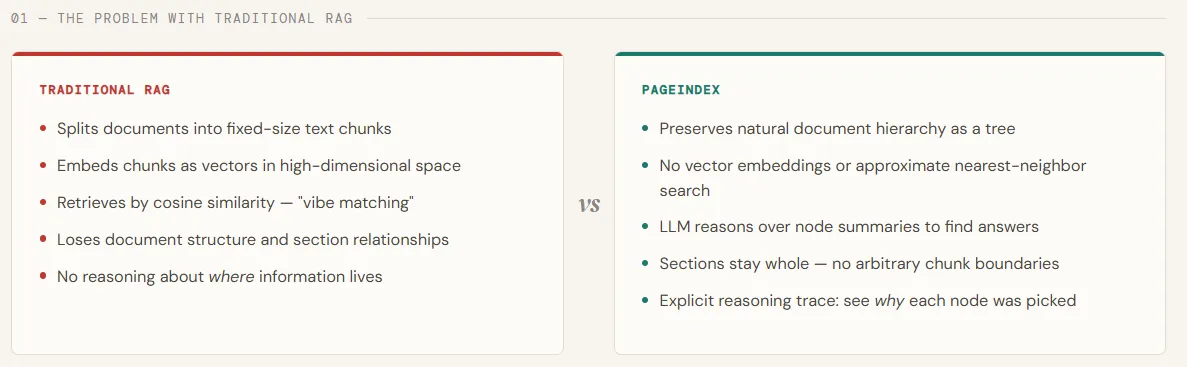

RAG without vectors flips the usual retrieval augmented generation playbook. Traditional pipelines embed every document chunk, then use vector similarity as a proxy for relevance. But similarity does not always equal the best answer, especially when questions depend on temporal order, contradictions, or scattered facts across sessions. Structured storage RAG takes a different path: it stores knowledge in explicit structures—maps of entities, timelines, and topics—so that retrieval becomes a logical lookup rather than a fuzzy nearest-neighbour search. The student’s system shows that when data is organised correctly at write-time, retrieval can collapse into a single hop, avoiding the overhead of scanning an entire long context. This approach treats memory more like a database with schemas and indexes than a search engine. For developers, it suggests that the real performance gains may come not from better embeddings, but from better data modelling and explicit reasoning over structure.

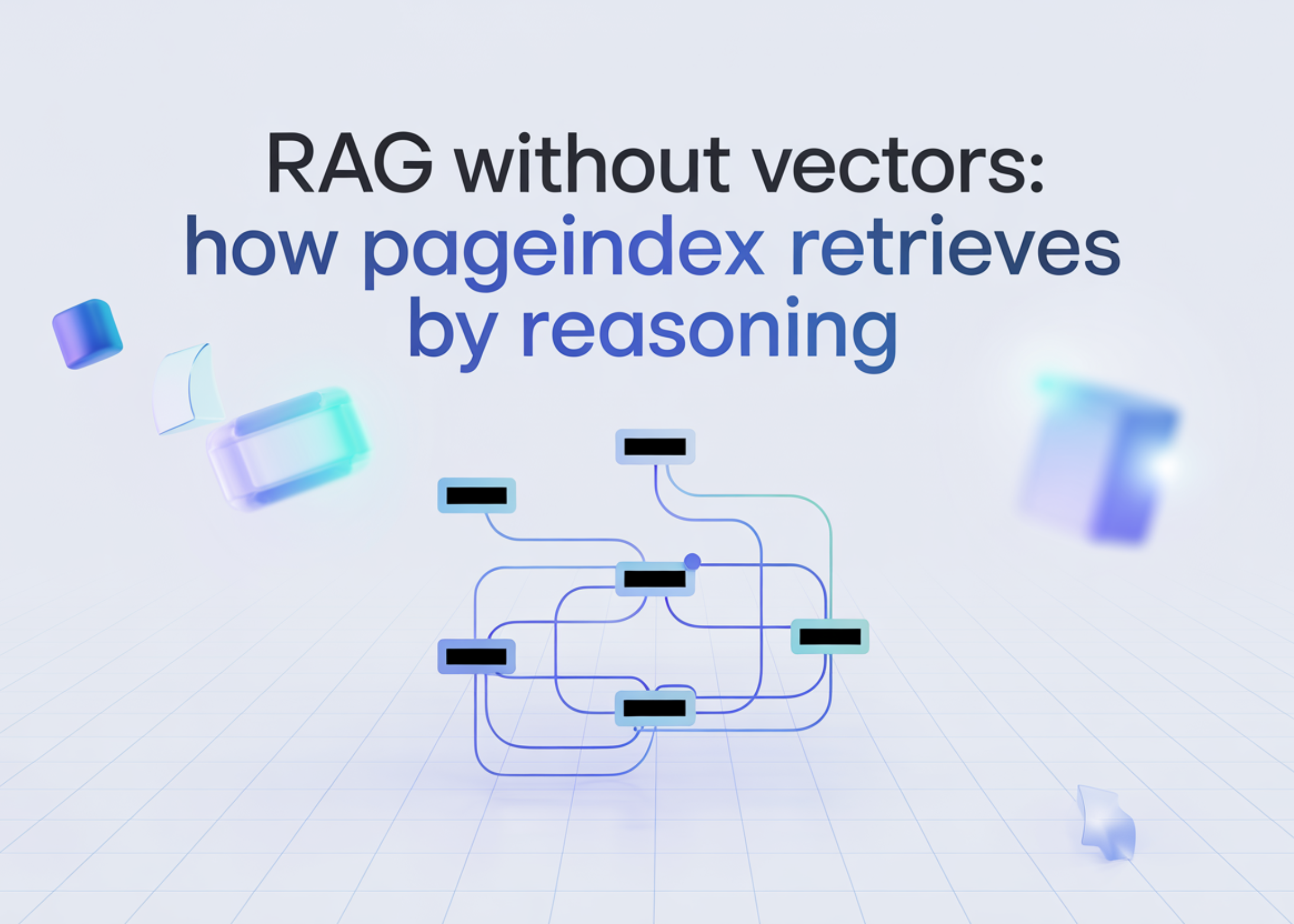

Inside PageIndex: Tree-Based Reasoning as a Vector Database Alternative

PageIndex demonstrates another practical path to RAG without vectors by rethinking how documents are indexed and searched. Instead of chunking PDFs and embedding each piece into a vector space, PageIndex builds a hierarchical, table-of-contents-style tree where every node stores a title, a summary, and the full section text. When a query arrives, an LLM such as GPT-5.4 reasons over node summaries to decide which sections likely contain the answer before loading any full text. This vectorless, reasoning-driven retrieval is closer to how humans navigate complex reports: scan headings, drill into promising sections, then connect ideas across chapters. The process is more interpretable and traceable than opaque similarity scores, and PageIndex’s strong performance on demanding benchmarks like FinanceBench underscores that advantage. For long, structured documents—financial reports, research papers, legal contracts—this kind of hierarchical reasoning offers a compelling vector database alternative focused on precision and structure-aware search.

Why Structured RAG Matters for Malaysian Teams and SME Chatbots

For Malaysian developers and SMEs building internal chatbots, structured storage RAG could be a practical game changer. Many corporate use cases—HR policies, SOPs, compliance manuals, or multi-year project notes—are highly structured and slow-changing. Instead of paying the complexity cost of maintaining large vector indexes, teams can organise documents into explicit hierarchies and maps, then let an LLM reason over that structure. Potential benefits include smaller indexes, lower infrastructure overhead, and more interpretable retrieval paths that compliance or management teams can audit. Crucially, performance is less tied to the quirks of a particular embedding model. However, there are trade-offs: transforming messy PDFs and legacy documents into clean structures takes upfront work, and complex reasoning over hierarchies may add latency. Hybrid setups—combining vectors for broad recall with structured signals for precise reasoning—may offer a pragmatic path for organisations testing a vector database alternative in production.

Practical Takeaways: From Vector-First to Structure-Aware Retrieval Strategies

Teams already invested in vector databases do not need to rip out existing infrastructure, but these results are a clear signal to diversify. Start by identifying high-value, high-structure domains—policy libraries, contracts, or technical documentation—and experiment with structured indexing. You can emulate PageIndex by building a tree of sections and summaries and prompting an LLM to choose which nodes to read, or mimic the LongMemEval-style memory system by maintaining explicit maps of topics and facts updated at write-time. Compare token usage, retrieval accuracy, and error types against your current similarity-based pipeline. Watch especially for improvements on multi-session, time-sensitive questions where vector search often degrades. Over time, a hybrid retrieval augmented generation strategy—vectors for broad discovery, structure for precise reasoning—will likely become the norm. The emerging lesson for builders is simple: treat AI memory as a data modelling problem, not just an embedding problem.