A Booming Market Built on Invisible Cameras

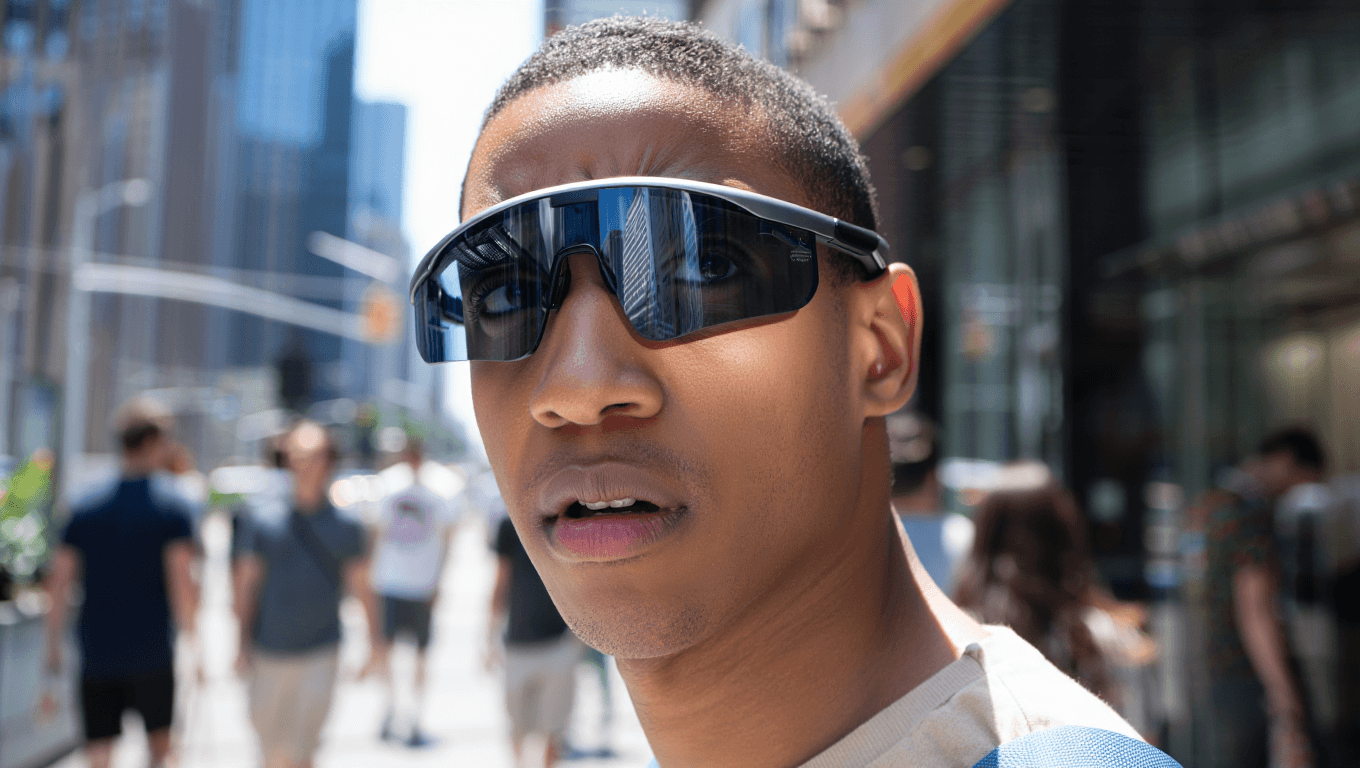

Smart glasses are moving from novelty to mass-market gadget, led overwhelmingly by Meta’s Ray-Ban line. Meta has shipped more than seven million pairs in partnership with EssilorLuxottica, giving the device over 80% of the global AI eyewear market, according to Counterpoint Research. Executives describe the product as one of the fastest-growing consumer electronics categories in history, a rare momentum story for a company better known for its metaverse bet. The appeal is clear: the glasses embed an almost invisible camera, open‑ear speakers and a subtle recording light into familiar Wayfarer-style frames. Wearers can snap photos, film video, take calls and trigger Meta’s AI assistant with a tap on the temple. The catch is that bystanders usually cannot tell when they are being filmed; the indicator light is easy to miss in daylight, and the eyewear looks indistinguishable from a standard fashion accessory.

Covert Recording Incidents and Lawsuits Raise the Stakes

That ambiguity is now driving a wave of discomfort and legal friction. Women have reported being approached in shops, on beaches and in the street by people wearing smart glasses who deliver scripted pick‑up lines or intrusive questions while filming their reactions, later uploading the clips for views. Many victims discover the recordings only after they go viral, often alongside online harassment, and find that legal options are limited where photography in public spaces is broadly lawful. Alongside these incidents, lawsuits have emerged targeting how footage from the glasses is handled. Content moderators in Kenya who review clips for AI training say they were exposed to graphic material, including sexual activity and people using the lavatory. In separate suits, device owners claim they were unaware that such videos had been captured or that their recordings could be shared for human review, exposing gaps between terms of service and user understanding.

Facial Recognition Risks Turn Smart Glasses into Walking Identifiers

Privacy concerns are poised to escalate as smart glasses move beyond simple video capture toward real‑time identification. Meta is reportedly preparing to integrate facial recognition into a future version of its Ray‑Ban line, which would allow wearers not only to record passers‑by but also to identify them on the spot. That shift raises acute risks: bystanders could be logged, tagged and profiled without consent, blurring the line between casual recording and pervasive biometric surveillance. Regulators and privacy lawyers warn that norms built for visible cameras and fixed CCTV systems are ill‑suited to inconspicuous eyewear that travels everywhere people go. Existing rules on filming in courtrooms, hospitals, changing rooms, museums and cinemas hinge on being able to spot a camera; a lens hidden in everyday frames, paired with algorithmic recognition, makes those boundaries far harder to police in practice.

2026 Sales Surge Outpaces Policy and Workplace Rules

The commercial trajectory in 2026 is intensifying the policy crunch. A recent BBC investigation highlighted that consumer smart‑glass models are selling strongly just as privacy worries spike, pushing governments, regulators and venues into urgent conversations. Analysts and industry observers now frame this as a collision between wider availability and immature norms: convenience without clear guardrails risks becoming “surveillance by accident.” Manufacturers including Meta, Snap and Samsung are shifting from prototype demos to real retail deployments this year, meaning gyms, offices, schools and public transport systems will see far more heads‑up displays in daily use. As a result, employers and venue operators are scrambling to decide whether to ban smart glasses in sensitive areas, require visible notices, or develop new disclosure rules for recording. Sales are climbing faster than regulatory frameworks can adapt, leaving individuals and institutions to improvise protections in real time.

From Early Backlash to Long-Term Trust Test

The current backlash echoes earlier controversies around first‑generation wearable cameras, but this time the stakes are higher. Major technology companies beyond Meta, such as Apple, Snap and Google, are investing heavily in new consumer smart‑glass designs and platforms, betting that tens of millions of people could be wearing AI-enabled eyewear within a few years. For investors, that suggests a powerful new hardware category, akin to the rise of the smartwatch. For civil liberties groups and privacy practitioners, it looks like the front edge of normalized ambient surveillance. Meta has argued that it discloses human review of footage and maintains teams to combat misuse, insisting that individual users bear responsibility for ethical behavior. Yet as covert recording lawsuits proliferate and facial recognition looms, the bigger question is whether clear, enforceable rules can emerge quickly enough to preserve public trust while the technology races ahead.