From Unlimited AI Coding to Locked Sign-Ups and Stricter Caps

GitHub Copilot has shifted from a seemingly limitless AI coding subscription to a product under stress. GitHub has paused new sign-ups for Copilot Pro, Pro+, and Student plans, leaving Copilot Free as the only individual tier still open to newcomers. Existing users can keep their current plan or move between paid plans, but they now face tightened usage limits and reshuffled model access. Opus models have been removed entirely from Pro, while Anthropic’s Claude Opus 4.7 is restricted to Pro+ and older Opus versions are being phased out. GitHub has introduced two overlapping usage caps: a per-session limit to protect infrastructure during bursts of activity, and a weekly token ceiling that, once hit, downgrades users to auto model selection until the window resets. GitHub itself describes these changes as “disruptive” and frames them as necessary to keep the service reliable as AI usage surges.
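To make the interaction of the two caps concrete, here is a minimal sketch of how a per-session limit and a weekly ceiling could overlap. It is not GitHub's implementation: the cap sizes, the `Usage` class, the function names, and the `"auto"` label are all hypothetical, and because the text does not say what happens when the session limit is reached, the sketch simply assumes the request is refused until a new session starts.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical cap sizes; the real values are not stated in the text.
SESSION_TOKEN_LIMIT = 500_000
WEEKLY_TOKEN_CEILING = 5_000_000


@dataclass
class Usage:
    session_tokens: int = 0  # cleared when a new session starts
    weekly_tokens: int = 0   # cleared when the weekly window resets


def record_tokens(usage: Usage, tokens: int) -> None:
    """Count the same tokens against both caps."""
    usage.session_tokens += tokens
    usage.weekly_tokens += tokens


def start_new_session(usage: Usage) -> None:
    """A new session clears the session counter but not the weekly one."""
    usage.session_tokens = 0


def model_for_next_request(usage: Usage, requested_model: str) -> Optional[str]:
    """Return the model to serve, or None if the session cap is exhausted."""
    if usage.session_tokens >= SESSION_TOKEN_LIMIT:
        # Assumption: the request is refused until a new session begins.
        return None
    if usage.weekly_tokens >= WEEKLY_TOKEN_CEILING:
        # Described in the text: fall back to auto model selection.
        return "auto"
    return requested_model
```

The point of the sketch is that the caps are independent: a user can be well under the weekly ceiling and still hit the session limit during a burst, or start a fresh session and still be on auto selection because the weekly window has not reset.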

Agentic AI Workflows: How Coding Agents Blew Up the Cost Model

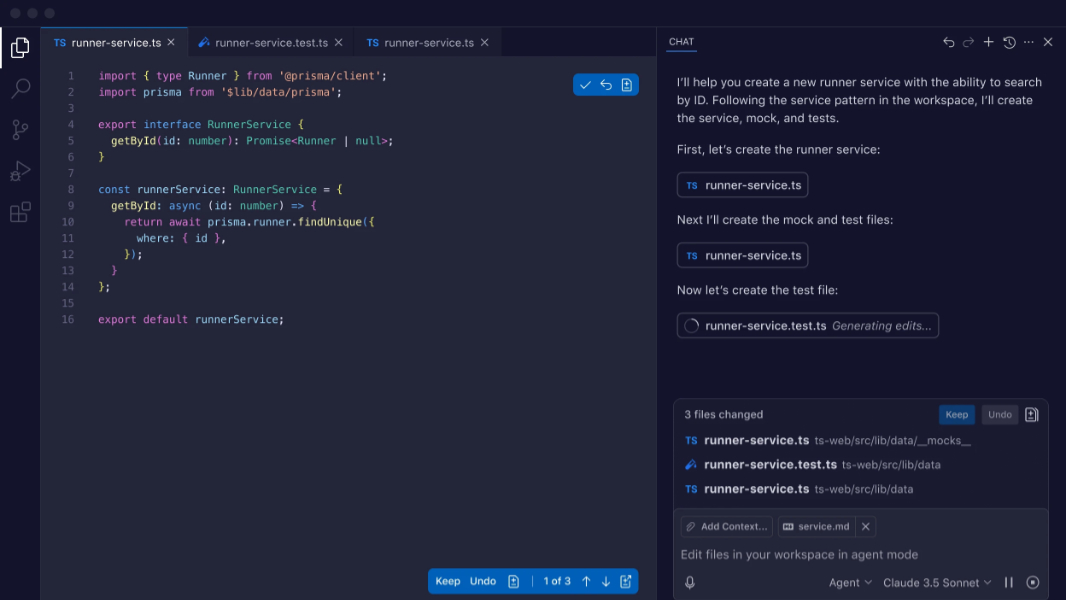

Copilot’s economics were originally tuned for short, stateless code completion: quick suggestions as developers typed. That model assumed modest, sporadic compute use, and agentic AI workflows have broken that assumption. Developers now run long-lived, parallelised sessions in which autonomous agents and subagents debug, refactor, and implement features over extended periods. GitHub’s VP of product Joe Binder notes that these agentic workflows “regularly consume far more resources than the original plan structure was built to support,” and it is now “common for a handful of requests to incur costs that exceed the plan price.” Weekly operating costs for Copilot have nearly doubled since early this year, and more customers are hitting the usage limits designed to keep the system stable. The result is a fundamental mismatch: users expect an unlimited AI coding subscription, while GitHub pays for every token and compute cycle in a far more demanding environment.

Inside Copilot’s Move to Token-Based Billing

GitHub is now preparing a shift from request-based quotas to token-based billing, aligning what users pay with the actual compute they consume. Today, Copilot Pro offers a fixed number of monthly “premium requests” and Pro+ a larger allocation, but each request can involve wildly different token volumes. Internal documents and reports indicate that from June, Copilot will assign users AI token budgets instead of request counts, charging based on the tokens used for inputs and outputs. One reported pricing example for a GPT-based model quotes USD 2.50 (approx. RM11.50) per million input tokens and USD 15 (approx. RM69) per million output tokens, a sixfold gap that shows how heavily generated output weighs on cost. Enterprise customers will receive pooled AI credits that teams can share. Importantly, Copilot is expected to remain a subscription product, with the flat fee effectively buying a specific token allowance rather than unmetered access to premium models and agentic workflows.
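The arithmetic behind those rates can be sketched in a few lines. The two per-million prices below are the ones quoted above; everything else (the function names, the token counts for each request shape, and the USD 20 allowance) is a hypothetical illustration, not a published Copilot figure.

```python
from decimal import Decimal

# Rates quoted in the text for one GPT-based model (USD per million tokens).
INPUT_USD_PER_MILLION = Decimal("2.50")
OUTPUT_USD_PER_MILLION = Decimal("15")
MILLION = Decimal(1_000_000)


def request_cost_usd(input_tokens: int, output_tokens: int) -> Decimal:
    """Cost of one request when input and output tokens are priced separately."""
    input_cost = Decimal(input_tokens) / MILLION * INPUT_USD_PER_MILLION
    output_cost = Decimal(output_tokens) / MILLION * OUTPUT_USD_PER_MILLION
    return input_cost + output_cost


def requests_covered(allowance_usd: Decimal, input_tokens: int, output_tokens: int) -> int:
    """How many identical requests fit inside a hypothetical USD allowance."""
    return int(allowance_usd // request_cost_usd(input_tokens, output_tokens))


if __name__ == "__main__":
    # Hypothetical request shapes, chosen only to contrast the two workloads.
    completion = request_cost_usd(input_tokens=2_000, output_tokens=200)
    agent_run = request_cost_usd(input_tokens=400_000, output_tokens=60_000)
    print(f"short completion: USD {completion:.4f}")
    print(f"agentic run:      USD {agent_run:.2f}")
    print("agentic runs per USD 20:", requests_covered(Decimal("20"), 400_000, 60_000))
```

Under these assumed shapes, a short completion costs a fraction of a cent while a single agentic run costs close to two dollars, so a flat allowance that covers thousands of completions covers only about ten such runs.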

How Copilot’s Limits Compare to Claude Code and Other Rivals

GitHub Copilot’s new constraints sit within a broader shift across AI coding assistants. The flat-rate AI coding subscription model, often clustered around USD 20–30 (approx. RM92–138) per month for individual tiers, is showing strain even for major providers. Anthropic, a leading player with Claude Code, has already moved enterprise customers to token-based billing and has tested restrictions on lower-priced plans. Like GitHub, it cites agentic AI workflows and long-running agents as the source of unexpected compute demand. Other products, including Claude-powered tools and agentic-first editors, are experimenting with usage caps, tiered access to heavier models, and pooled credits for teams. Copilot’s tighter usage limits, removal of Opus from cheaper plans, and upcoming token billing are therefore less an outlier than a signal: heavy agentic workloads are increasingly treated as a premium, metered resource rather than a perk bundled into low, flat monthly fees.

What Developers and Teams Should Do Next

For individual developers and engineering teams, Copilot’s pricing changes mean AI coding assistance must be treated as a real infrastructure cost, not a free lunch. The first adaptation is visibility: pay attention to the new session and weekly token limits surfaced in VS Code and the Copilot CLI, and compare them against the usage your typical workflows generate. Reserve agentic AI workflows (long refactors, multi-step agents, automated code generation) for the problems where they create clear leverage. Mix tools where possible: use lighter models or Copilot Free for simple code completion, and switch to Pro+ or alternative assistants only when you truly need heavy reasoning. Teams should explore pooled or enterprise-style credits, which can smooth out spikes in individual usage. Finally, consider supplementing with local or open-source coding models for repetitive tasks, and include AI usage in sprint planning and budgets the same way you track cloud compute and storage.
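As one way to track AI usage like any other metered resource, the sketch below totals token usage per developer against a shared pool and flags when the team nears its budget. It does not call any Copilot API: the event format, the `summarize_pool` name, the budget size, and the 80% warning threshold are all assumptions made for illustration.

```python
from collections import defaultdict
from typing import Dict, Iterable, Tuple


def summarize_pool(
    events: Iterable[Tuple[str, int]],
    pool_budget_tokens: int,
    warn_fraction: float = 0.8,
) -> Dict[str, object]:
    """Summarize (developer, tokens) usage events against a shared token pool."""
    per_developer: Dict[str, int] = defaultdict(int)
    for developer, tokens in events:
        per_developer[developer] += tokens

    used = sum(per_developer.values())
    return {
        "used": used,
        "remaining": max(pool_budget_tokens - used, 0),
        # True once usage reaches the warning share of the pool.
        "over_warning": used >= warn_fraction * pool_budget_tokens,
        # Heaviest users first, to show where the pool is going.
        "top_users": sorted(per_developer.items(), key=lambda kv: kv[1], reverse=True),
    }


if __name__ == "__main__":
    # Hypothetical usage log for one sprint.
    log = [("ana", 300_000), ("ben", 120_000), ("ana", 450_000)]
    print(summarize_pool(log, pool_budget_tokens=1_000_000))
```

A summary like this can be reviewed alongside cloud spend at sprint planning, so that heavy agentic runs are a deliberate budget decision rather than a surprise at the end of the week.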