A New Generation of AI GPU Clouds Aims for Clean Compute

The AI boom has exposed a chasm between sky-high model ambitions and the hardware needed to run them. Enterprise teams are scrambling for access to GPUs as demand for AI chips pushes key suppliers like Nvidia to fresh highs and fuels sector-wide rallies in semiconductor stocks. At the same time, the energy footprint of AI data centers is under growing scrutiny, forcing companies to weigh performance against environmental impact. Into this gap steps a new crop of AI GPU cloud providers promising not just capacity, but clean AI compute and clearer economics. Their pitch: faster access to specialized infrastructure, transparent pricing, and architectures designed from the ground up around sustainability and AI workloads rather than retrofitted from generic cloud services. Together with shifts in AI accelerator production and global chip markets, these offerings signal a more fragmented, but potentially more resilient, AI infrastructure landscape.

Verda’s Renewable-Powered GPU Cloud Bets on Full-Stack Control

Helsinki-based Verda has emerged as a prominent challenger with an AI GPU cloud built on vertical integration and renewable energy. Formerly known as DataCrunch, the company has raised USD 117 million (approx. RM540 million) to scale its infrastructure, which it designs and operates end to end, including servers, sustainable data centers, networking, and AI developer tooling. Instead of renting hardware from third parties, Verda runs its own facilities that rely on renewable energy and natural cooling, lowering operating costs and shrinking its carbon footprint. As one of a small number of Nvidia Preferred Partners, it gets priority access to scarce GPUs, a critical advantage while AI chip demand remains elevated. Verda positions itself between hyperscalers—where capacity can be slow to secure and pricing opaque—and bare‑metal rentals that offer little support. By coupling clean AI compute with European data sovereignty and hands-on AI lab services, it is targeting enterprises that want speed, sustainability, and control.

SpaceX’s In-House GPU Plans Highlight the Push for Vertical Integration

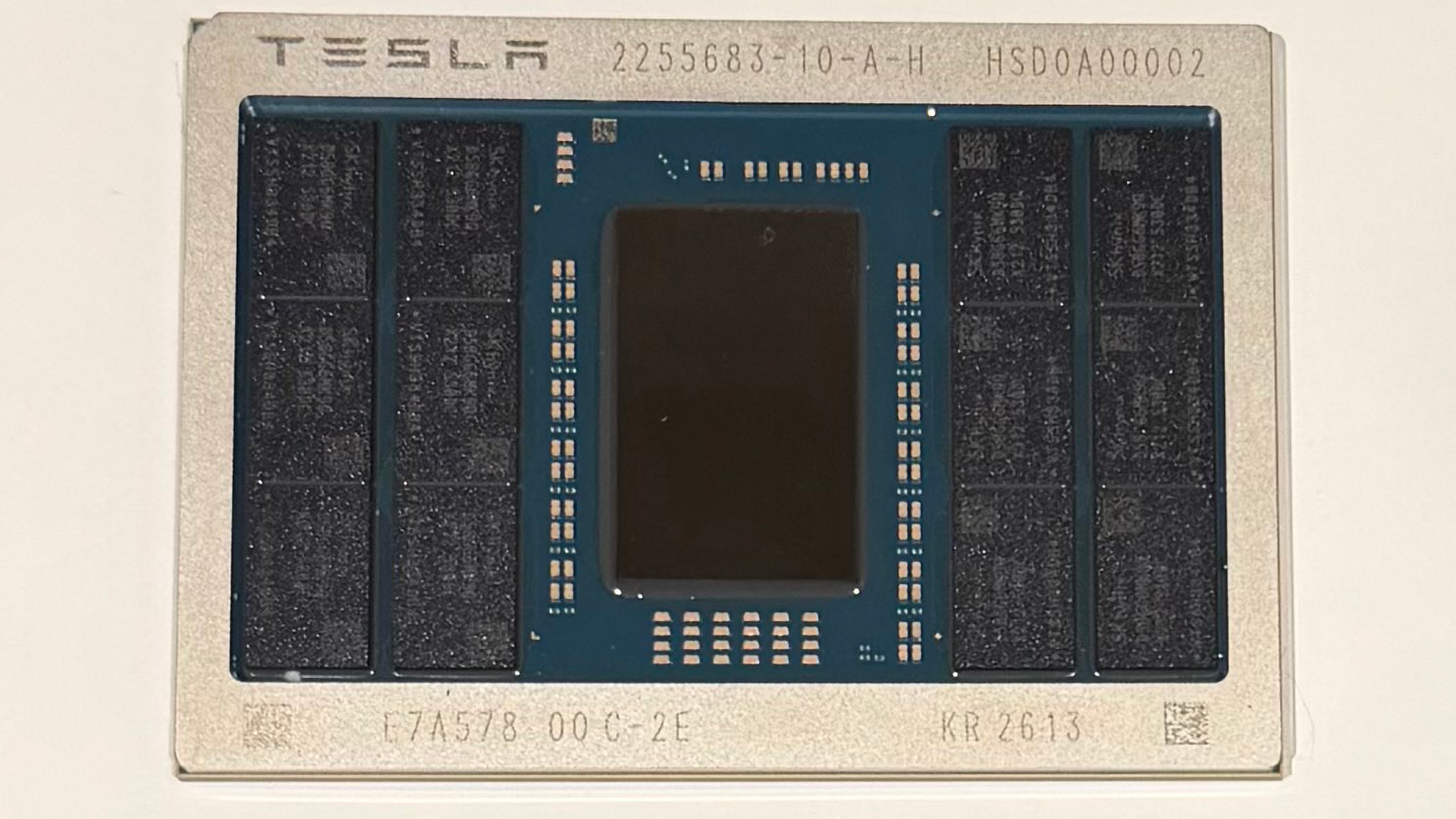

At the other end of the spectrum, some giants are responding to the compute crunch by taking chip production into their own hands. SpaceX’s confidential S-1 filing reportedly outlines plans to invest billions in manufacturing its own GPUs, rather than relying solely on external silicon suppliers. The move comes amid a lack of long-term supply agreements and follows the announcement that Elon Musk will use Intel’s 14A process node in a new TeraFab venture, with SpaceX managing the manufacturing facilities. While terminology differs (hyperscalers may label their designs as AI accelerators, ASICs, or custom devices), the intent is clear: secure dedicated AI hardware for internal use across Tesla, xAI, and other initiatives. This trend toward vertically integrated AI accelerator production reduces exposure to supply shocks and could give such players tighter optimization between models, software stacks, and underlying chips, even as it raises capital intensity and technical risk.

AI Chip Demand Is Redrawing the Global Equity Map

The scramble for AI compute is not only reshaping data centers; it is rewriting the hierarchy of global stock markets. Surging AI chip demand has propelled Nvidia’s valuation into multi-trillion-dollar territory, with investors expecting continued triple-digit earnings growth and robust revenue expansion tied to data center GPUs. Beyond individual names, hardware suppliers deeper in the stack are driving regional shifts. Markets heavily weighted toward leading-edge chipmakers have outperformed, with the market value of tech-centric exchanges overtaking that of exchanges dominated by financials. Shares of key foundries and memory manufacturers supplying AI platforms, described as operating in an oligopolistic, “new oil” industry, have posted outsized gains, lifting their home markets up the global rankings. Export data and investor flows underscore how critical AI semiconductors and sustainable data centers have become to economic narratives, cementing certain regions as epicenters of AI infrastructure even as their broader economies remain more modest in size.

What a More Diverse AI Infrastructure Future Means for Enterprises

Taken together, cleaner GPU clouds, in-house accelerator projects, and chip-driven equity shifts point to a more diversified AI infrastructure future. Instead of a world dominated solely by a few hyperscalers, enterprises and developers will increasingly choose among specialized AI GPU cloud providers like Verda, vertically integrated operators building their own GPUs, and mainstream platforms. Each path carries distinct trade-offs in cost, performance, supply security, and ESG credentials. Renewable-powered providers promise cleaner AI compute and transparent pricing, but may offer narrower geographic footprints. Vertically integrated giants can optimize hardware and models, yet lock users into proprietary ecosystems. Hyperscalers still provide global reach and rich managed services, while facing scrutiny over energy usage and concentration risk. For AI teams, infrastructure strategy is becoming a core business decision: aligning compute choices with sustainability targets, data sovereignty requirements, and long-term control over critical AI capabilities.