Investment Bankers Put AI to the Test — And Fail It

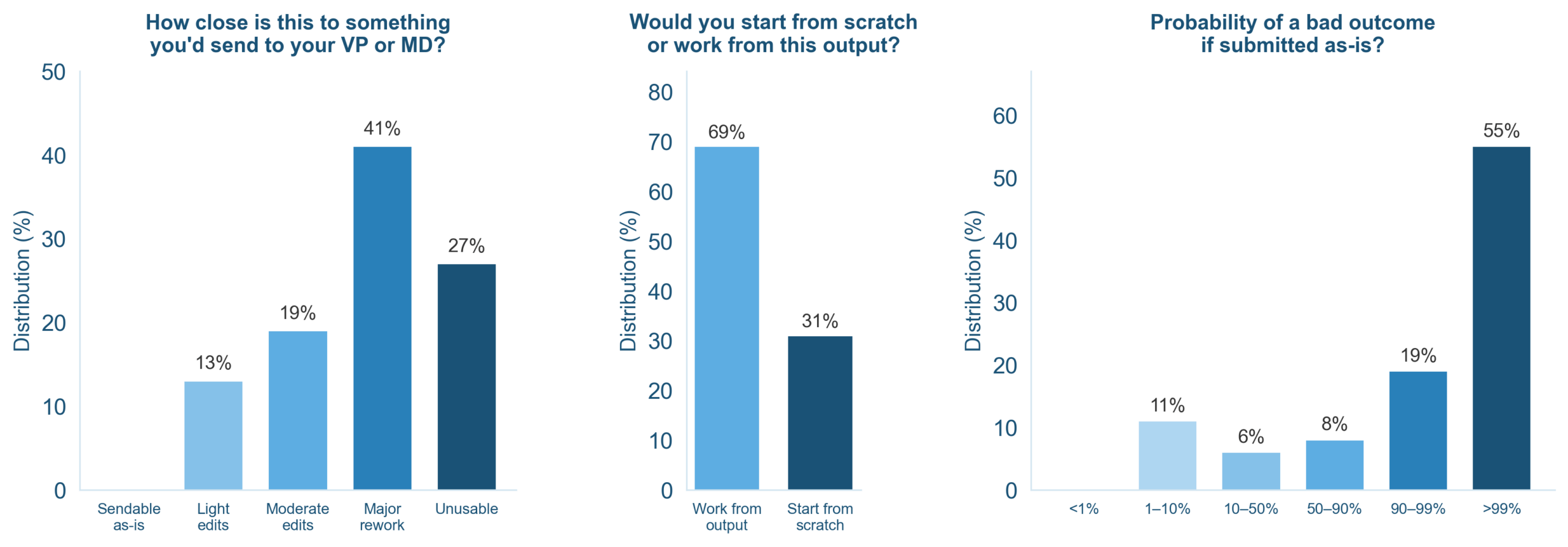

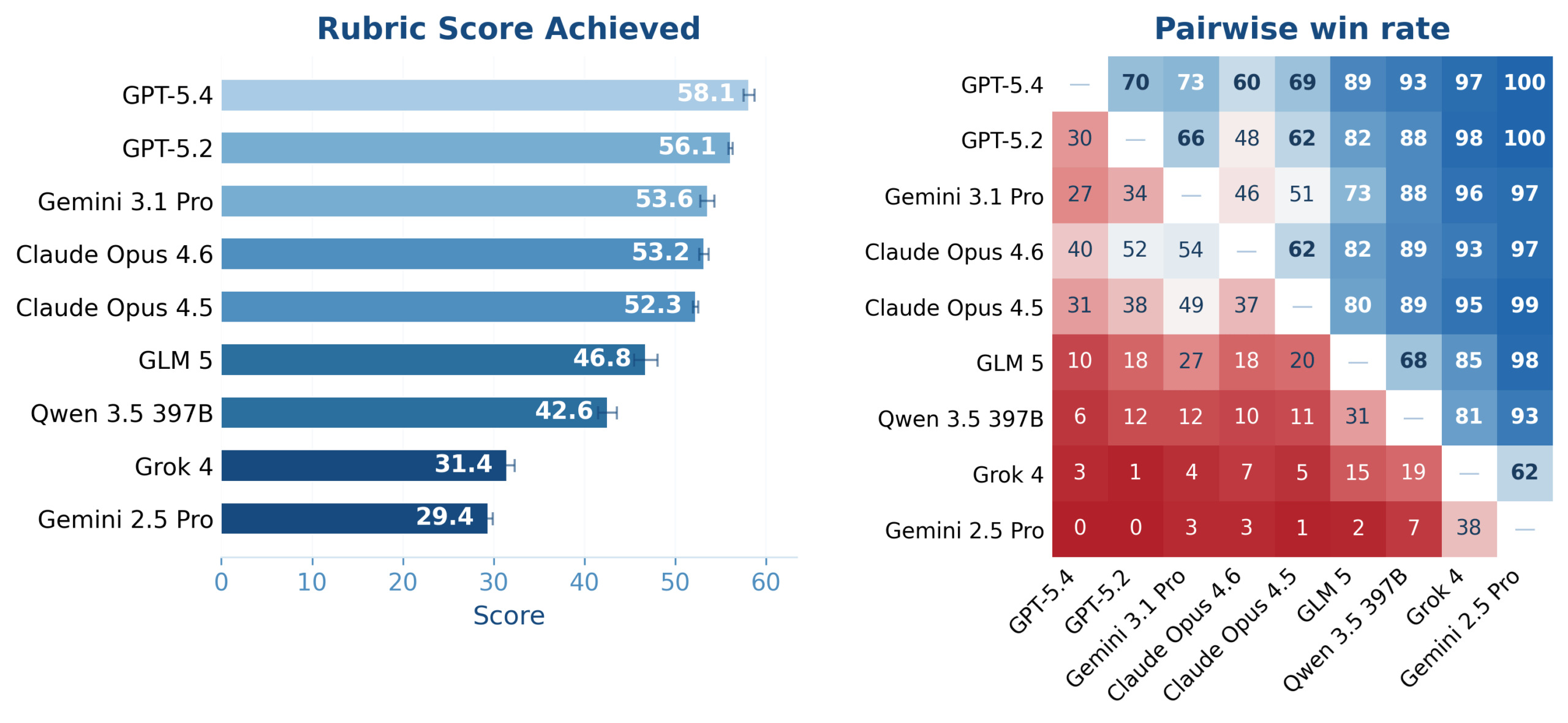

A new benchmark suggests that, for now, AI in investment banking still can’t be trusted to work alone. BankerToolBench, created by Handshake AI and McGill University, asked nine leading models, including GPT-5.4 and Claude Opus 4.6, to perform typical junior‑banker tasks. Around 500 current and former bankers from firms such as Goldman Sachs and JPMorgan helped design and grade 100 realistic tasks that often take humans hours to complete, from building Excel models to drafting pitch decks and memos. The verdict was stark: not a single AI-generated deliverable was judged ready to send to a client as-is. Bankers said 41 percent of outputs required major rework and 27 percent were completely unusable, while just 13 percent could pass with light edits. Yet more than half said they would still use the outputs as a starting point, a result that underscores both the appeal of these tools and the reliability risks they carry in high‑stakes, regulated work.

Why High-Stakes Professions Still Need Human Oversight of LLMs

BankerToolBench didn’t just judge style; it scored deliverables against detailed rubrics averaging 150 checkpoints, covering instruction following, technical correctness, client readiness, internal consistency, transparency, and risk and compliance. GPT-5.4 topped the benchmark overall with a score slightly above 58 out of 100, while Claude Opus 4.6 led on client readiness and on risk and compliance but lagged on technical correctness. Some tasks triggered hundreds of model calls, most involving tool use or code execution, yet subtle formula and logic errors still crept into spreadsheets and slides. This matters because, in finance and other regulated sectors, small inaccuracies can become big liabilities. When only a small minority of outputs can be salvaged with light edits, firms cannot skip human review. AI agents may be “pretty on the outside, broken underneath”: good at presentation but unreliable on the numbers and assumptions that underpin real decisions. For now, they augment analysts rather than replace them.

From Productivity Aid to Evidence Trail: ChatGPT Misuse in Crime

If BankerToolBench highlights AI’s limits, a disturbing criminal case shows its darker side. Prosecutors in Florida allege that a suspect in the killings of two missing university students used ChatGPT to ask about disposing of a body. While the investigation is ongoing, the allegation underscores an uncomfortable truth: tools designed for everyday assistance can also be turned to criminal ends, just as search engines and smartphones have been before. Misuse of this kind does not mean AI causes violence, but it shows how generative systems can become part of the planning process and, potentially, part of the evidentiary trail. It also raises questions about how platforms should log, restrict, and flag sensitive queries without resorting to blanket surveillance of legitimate users. The episode illustrates that the societal risks of AI are not only about hallucinations and bias, but also about how people choose to apply the technology in the real world.

Hiding the Machines: AI-Generated Email Plugins and Mental Offloading

As AI-generated text spreads, some users are now trying to make machine output look more human. Venture capitalist Ben Horowitz recently used Anthropic’s Claude to code a browser plugin called “Sinceerly” that intentionally injects typos and messy capitalization into polished AI-generated email drafts. Billed as an “anti‑Grammarly,” it lets users tune how chaotic the errors are, and even add a “sent from my iPhone” tag, to evade suspicion from bosses, teachers, or AI detectors. This urge to pass off synthetic writing as authentic comes as researchers warn that constant reliance on chatbots may be eroding critical thinking. MIT scientist Nataliya Kosmyna noticed students forgetting material more easily and began receiving cover letters that sounded suspiciously similar, as if written by an LLM. Studies on “cognitive offloading” suggest that delegating too much thinking to AI can dull memory and problem‑solving, especially in young people. When the technology both writes for us and hides its own fingerprints, digital literacy becomes a cognitive safety issue, not just an academic one.

Adopting AI Without Blind Trust or Backlash

Taken together, these stories show a technology that is powerful yet fragile, useful yet open to abuse. In investment banking, leading models can assemble complex documents but still flunk the precision test for client work, making human oversight non‑negotiable. In criminal investigations, chatbots can surface as tools allegedly used to plan harm, reminding policymakers that AI governance must address behavior, not just algorithms. Meanwhile, plugins that fake human typos and research linking chatbots to cognitive offloading highlight how easily authenticity and attention can erode. A balanced path forward does not mean rejecting AI altogether. It means treating models as fallible collaborators: humans remain responsible for final judgments, risk checks, and ethical choices. Organizations should build guardrails around sensitive uses, and schools and employers should teach people how to question, verify, and supplement AI outputs. The real danger is not that machines start thinking, but that humans stop doing so.