The Productivity Mirage: Faster Cycles, Fuzzier Proof

Engineering leaders widely report shorter development cycles after rolling out AI coding tools, yet most lack the engineering metrics needed to verify those gains. GitHub’s controlled trials cite 55% faster task completion with Copilot, while Gartner estimates 10% productivity gains from code generation tools today and forecasts 25–30% by 2028. A separate Gartner survey finds that only 34% of teams actually perceive high productivity gains. These numbers describe different scopes and time horizons (a single task, overall productivity, perceived gains), but they are often treated as interchangeable evidence that AI works. Meanwhile, the 2025 DORA research links AI adoption to higher throughput but lower delivery stability, and the nonprofit METR observed experienced open-source engineers taking 19% longer on tasks when using large language models. Because development cycles are measured so inconsistently, finance teams are still being asked to budget for AI coding tools on the basis of fragmented studies, anecdotes, and internal sentiment rather than consistent, repeatable metrics tied to real code output.

DORA’s Framework: From Engineering Metrics to AI Coding Tools ROI

Google Cloud’s DORA group is attempting to give engineering leaders a coherent way to quantify the ROI of AI coding tools. Its updated ROI of AI-Assisted Software Development report proposes a value model that connects the engineering metrics teams already track to business outcomes. The model starts with seven capabilities, such as a quality internal platform, disciplined version control, and AI-accessible internal data. Improvements in these areas flow into better DORA software delivery metrics, including lead time and deployment frequency. Those, in turn, influence non-financial outcomes like developer experience and user satisfaction, before finally surfacing as cost savings and revenue growth. The authors stress that these calculations carry high uncertainty by design and are meant to create a shared language between engineering, finance, and leadership. Crucially, they argue that AI value should be measured not by how much code the tools generate, but by which bottlenecks they remove across the delivery pipeline.
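To make the two delivery metrics named above concrete, here is a minimal Python sketch of how a team might compute lead time and deployment frequency from its own deployment records. The `Deployment` record, its field names, and both function names are assumptions made for illustration; they are not taken from the DORA report, which may define and bucket these metrics differently.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from statistics import median


@dataclass
class Deployment:
    # Hypothetical record shape, assumed for this example only.
    committed_at: datetime  # when the change was committed
    deployed_at: datetime   # when the change reached production


def median_lead_time_hours(deployments):
    """Median time from commit to production, in hours."""
    if not deployments:
        raise ValueError("need at least one deployment")
    hours = [
        (d.deployed_at - d.committed_at).total_seconds() / 3600
        for d in deployments
    ]
    return median(hours)


def deployments_per_week(deployments, window_start, window_end):
    """Average number of production deployments per week in a window."""
    if window_end <= window_start:
        raise ValueError("window_end must be after window_start")
    in_window = [
        d for d in deployments
        if window_start <= d.deployed_at < window_end
    ]
    weeks = (window_end - window_start) / timedelta(weeks=1)
    return len(in_window) / weeks
```

Computing both figures over the same window before and after an AI rollout gives a like-for-like comparison, though it cannot by itself attribute any change to the tools.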

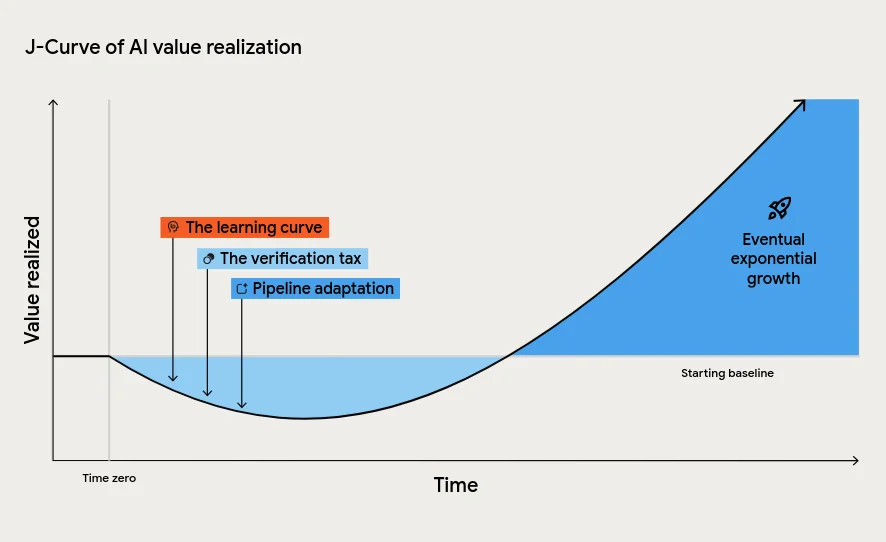

The J-Curve and the Verification Tax

A central idea in the DORA framework is the J-Curve of AI value realisation: performance often dips before it rises. Teams adopting AI coding tools typically face a learning curve as developers adapt workflows and prompts. They also pay a verification tax, because AI-generated code must be reviewed, tested, and integrated carefully. At the same time, downstream processes such as testing, change approval, and incident response must evolve to handle increased code volumes. Without these adjustments, AI can flood pipelines with changes that destabilise production, echoing DORA’s finding that throughput often rises while stability falls. The report calls this dip the “tuition cost of transformation,” warning leaders against misreading it as failure. Without consistent measurement of development cycles and patience for the J-Curve, organisations risk cancelling initiatives just as they approach sustainable gains, locking themselves into a series of short-lived experiments instead of durable productivity improvements.

Engineering Throughput Value: Measuring AI at the Commit Level

Navigara’s Engineering Throughput Value (ETV) offers another set of engineering metrics that teams can use to validate their AI transformations. ETV is a per-commit metric that evaluates engineering output directly in the codebase, scoring each team’s commits against its own pre-AI baseline. Rather than relying on self-reported productivity or external benchmarks, ETV compares like-for-like work within the same organisation over time. Navigara applies this commit-level analysis to open-source work from companies such as Microsoft, Google, Cloudflare, OpenAI, Meta, and Vercel, aiming to separate genuine AI-driven improvements from broader process or staffing changes. Founder Jirka Bachel argues that traditional DORA metrics are “old-school and outdated” for the AI era, and that new measures like ETV are needed to test claims that AI tools accelerate engineering. In a landscape where AI is frequently credited with unverified gains, ETV attempts to anchor the discussion of AI coding tool ROI in concrete, auditable data.
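The general idea of scoring a team against its own pre-AI baseline can be sketched in a few lines of Python. This is not Navigara's ETV formula, which the article does not describe; the per-commit scores, the use of a simple mean, and the function name `relative_to_baseline` are all assumptions chosen only to illustrate a like-for-like comparison within one team.

```python
from statistics import mean


def relative_to_baseline(baseline_scores, current_scores):
    """Ratio of the current mean per-commit score to the pre-AI mean.

    Both inputs are lists of per-commit scores for the SAME team,
    produced by the same (here unspecified, hypothetical) scoring method.
    A result above 1.0 means the current period scores higher than the
    team's own baseline; it says nothing about why.
    """
    if not baseline_scores or not current_scores:
        raise ValueError("both periods need at least one scored commit")
    baseline = mean(baseline_scores)
    if baseline == 0:
        raise ValueError("baseline mean is zero; ratio is undefined")
    return mean(current_scores) / baseline


# Illustrative, made-up scores for one team.
pre_ai = [2.0, 4.0]
post_ai = [3.0, 6.0]
ratio = relative_to_baseline(pre_ai, post_ai)
```

Because the baseline comes from the same team, differences in codebase or domain largely cancel out, but staffing or process changes between the two periods would still need to be accounted for separately.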

AI as Amplifier: Why Foundations and Systems Matter More Than Tools

Both Navigara’s ETV and DORA’s research point to the same conclusion: AI acts as an amplifier, not a cure-all. DORA’s reports show that AI intensifies the strengths of high-performing teams while magnifying the dysfunctions of struggling ones. Strong engineering foundations, such as robust internal platforms, automated testing, continuous integration, and clear workflows, are prerequisites for consistent AI returns. Without them, organisations see localised “pockets” of productivity that vanish in downstream chaos, or accept instability as a hidden tax on speed. At the same time, Navigara highlights how AI is sometimes blamed or credited for outcomes that stem from unrelated workforce or process decisions. Without disciplined engineering metrics against which AI initiatives can be evaluated, teams cannot tell whether gains are real, sustained, or merely temporary spikes from experimentation and overtime. The message is clear: measure first, then scale AI.