Mythos and the Promise of Frontier AI Security

Anthropic’s Mythos model arrived with a bold claim: it was so strong at AI vulnerability detection that open access would be risky. Through Project Glasswing, Anthropic offered vetted partners early Mythos-tier access to harden widely used infrastructure, from browsers and operating systems to chips, firewalls and cloud platforms. The pitch was that Mythos could comb massive codebases, uncover subtle flaws, and help organizations revisit long-ignored security backlogs. This narrative positioned Mythos as a milestone in frontier AI effectiveness. It also hinted that controlling who gets to use such models could redefine cybersecurity R&D. Yet from the start, details were thin. Was Mythos itself a bug-hunting breakthrough, or did its gains depend on how organizations integrated it into existing workflows, tooling and human review? The contrasting experiences of Mozilla and the cURL project would soon put that question under a microscope.

Mozilla’s Firefox Bug Surge: Breakthrough or Baseline Shift?

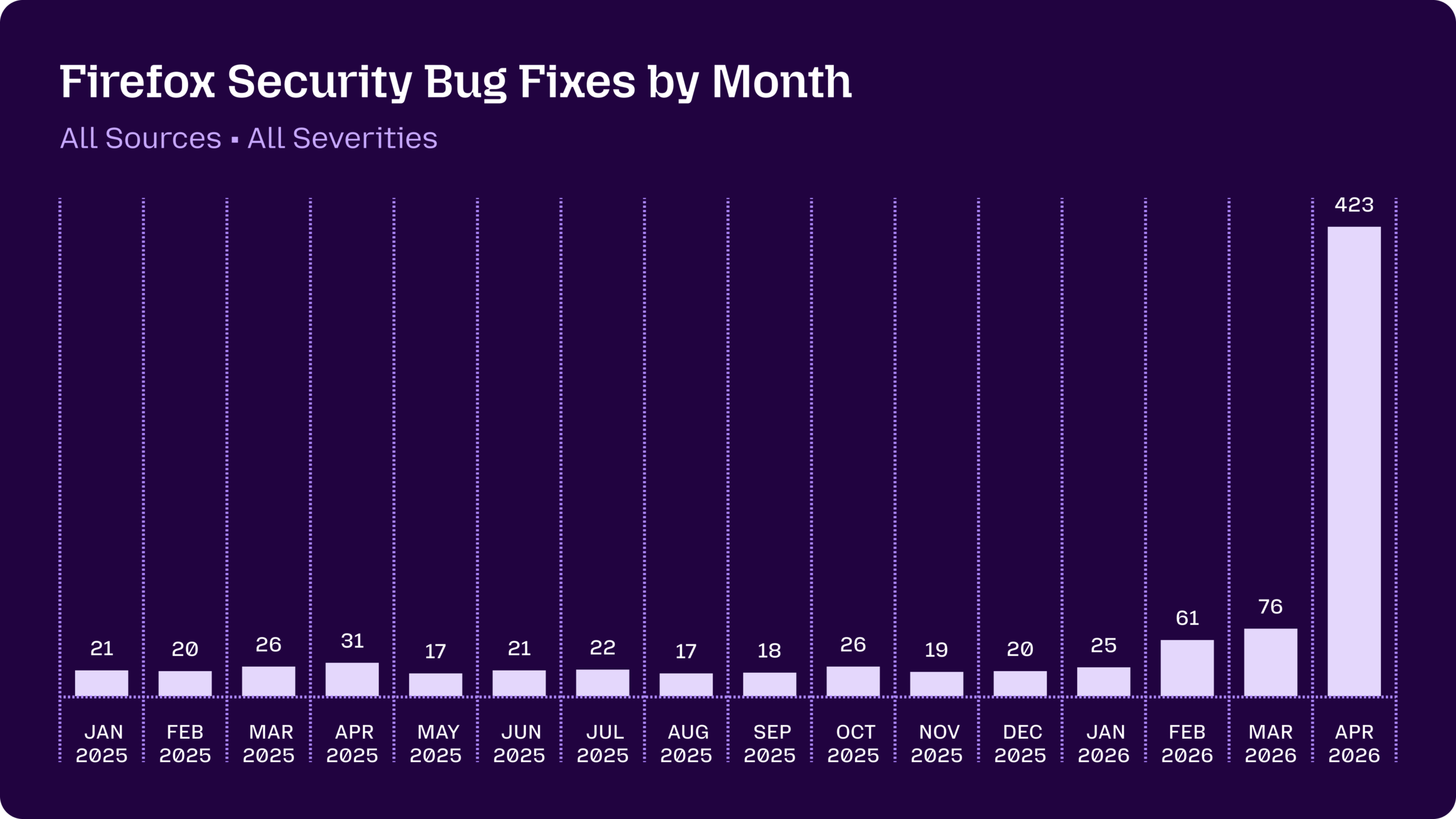

Mozilla’s data offers the strongest case that Mythos’s security capabilities can matter in practice. In a typical month, Firefox’s security fixes numbered from the teens to the mid‑twenties. A year earlier, 31 security vulnerabilities were patched in April. Over two weeks in January, Anthropic’s Claude Opus 4.6 scanned Firefox and uncovered 22 vulnerabilities, 14 of them high‑severity. Then came a dramatic spike. Mozilla gained early access to Claude Mythos Preview through Project Glasswing, and 423 security bugs were fixed in April. Mythos alone surfaced 271 of those issues, though only three became standalone CVEs. The rest were low‑severity findings, defense‑in‑depth tweaks and fixes in long‑dormant code paths. Mozilla engineers credited not just newer models but also improved “agentic harness” middleware that shaped prompts, filtered noise, and turned AI output into actionable reports. In this view, Mythos was a key ingredient, but the orchestration layer may have been just as important.

cURL’s Experience: Mythos as Marketing, Not Miracle

The cURL project’s encounter with Mythos painted a very different picture. cURL lead developer Daniel Stenberg joined Project Glasswing expecting hands‑on access to Anthropic’s frontier model. Instead, he received a one‑off scan report that someone else had generated. That report flagged five alleged “confirmed security vulnerabilities” in cURL’s codebase. The cURL security team spent several hours reviewing them. Three were dismissed as false positives that merely restated documented API limitations. A fourth was downgraded to a non‑security bug, and only one remained a genuine vulnerability. Even that issue was assessed as low severity and scheduled for disclosure alongside a routine release, hardly the sort of flaw that would “make anyone grasp for breath.” Mythos did identify some additional non‑security defects, and its explanations were considered solid. Still, Stenberg concluded the hype around Mythos was “primarily marketing,” arguing that it did not clearly outperform existing tools in practice.

Frontier AI Effectiveness: Model Power vs. Middleware Reality

The Firefox and cURL cases highlight a core ambiguity in evaluating frontier AI effectiveness for security. In Mozilla’s environment, Mythos and its predecessors were embedded in a carefully engineered pipeline, with an agentic harness mediating between the model and human engineers. This infrastructure boosted the signal‑to‑noise ratio, converting raw model output into focused, higher‑quality security reports and making it practical to chase down even low‑priority bugs that would usually languish. In contrast, cURL’s experience was essentially a single, externally run scan without iterative prompting, integration into existing tooling or tight feedback cycles. That raises the question: are Mythos‑tier gains primarily about model capability, or about how organizations wrap models in workflows, automation and expert review? The evidence suggests the middleware and operational context can be as decisive as the model itself in turning AI vulnerability detection into real security improvements.

What Mythos Means for the Future of Bug-Hunting AI

Taken together, Mythos looks less like a universal security breakthrough and more like a force multiplier that depends heavily on deployment context. Mozilla’s surge in Firefox fixes shows how Mythos‑tier models combined with robust orchestration can reshape how organizations tackle their vulnerability backlogs. The gains were especially visible in tricky areas such as sandbox escapes and decades‑old memory issues. At the same time, cURL’s low‑yield scan underscores that frontier models are not magic bullets. Used in isolation, they can still produce false positives or modest results. As Project Glasswing extends to cloud platforms, operating systems, libraries and security vendors, access to such models could redefine who can aggressively hunt for flaws across the shared attack surface. For now, though, the evidence is mixed. Mythos can deliver real security gains, yet its impact appears tightly coupled to human expertise and to the quality of the systems wrapped around it.