What GPT‑5.5 Actually Is and Why It Matters

GPT‑5.5 is OpenAI’s new flagship model, aimed squarely at professional, productivity‑driven use cases. Rather than chasing benchmark scores or raw speed alone, it focuses on planning, task decomposition and more autonomous problem solving. The model is built to interpret complex, loosely specified tasks and break them into coherent steps, which makes it more useful both as an AI coding assistant and as a general productivity engine. OpenAI highlights significantly improved programming and data‑analysis capabilities, including a notable gain on benchmarks such as Terminal‑Bench 2.0. GPT‑5.5 also emphasizes efficiency: it often needs fewer prompts and corrective turns to reach a usable answer, which directly benefits teams that pay for API usage or embed the model in OpenAI productivity tools and third‑party SaaS apps. With upgraded cybersecurity mechanisms and controlled access pathways for security professionals, GPT‑5.5 is positioned as a foundation for production code generation and enterprise‑grade AI workflow automation, not just casual chat.

How GPT‑5.5 Changes Developer Workflows

For developers, GPT‑5.5’s main promise is end‑to‑end assistance rather than isolated code snippets. Its improved coding skills show up in benchmarks and, more importantly, in realistic tasks drawn from developer platforms. In practice, that means the model is better at understanding entire repositories, refactoring across files and aligning code changes with higher‑level requirements. It can take over more of the boilerplate: spinning up service layers, wiring configuration or scaffolding APIs. It is also more reliable at generating unit and integration tests that actually run, and at updating documentation alongside code changes. Because it handles ambiguous prompts more gracefully, developers can describe the desired behavior in natural language and let the model propose implementations and test plans. Over time this shifts the workflow: engineers review, refine and integrate model‑generated code instead of writing everything from scratch, turning GPT‑5.5 into a persistent AI coding assistant embedded in IDEs, CI pipelines and internal tools.

Productivity Gains Beyond Engineering Teams

GPT‑5.5 is also designed as a general productivity engine for non‑developers. Its stronger planning abilities make it more than a drafting tool: it can outline complex documents, prioritize tasks and keep long contexts coherent, even when requirements are underspecified. Knowledge workers can offload first drafts, from reports and strategy memos to marketing copy, and then refine the output iteratively instead of starting from a blank page. In research workflows, its improved reasoning helps summarize multi‑source material, extract key arguments and suggest follow‑up questions. Combined with lower token consumption for the same tasks, this makes GPT‑5.5 a stronger candidate for persistent assistants built into OpenAI productivity tools or SaaS platforms. For AI workflow automation, it can coordinate multi‑step processes (data cleaning, analysis and reporting, for example) while requiring fewer manual corrections. The result is a model that can sit at the center of dashboards, CRMs and knowledge systems, orchestrating routine work while humans focus on review and decision‑making.
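Multi‑step automation of this kind usually needs explicit checkpoints, so that "fewer manual corrections" still leaves humans in charge of review. Here is a minimal sketch, assuming each step is a plain function standing in for a model call; the `Step`, `RunLog` and `run_pipeline` names, the checks and the sample data are all hypothetical and not part of any OpenAI API.

```python
from dataclasses import dataclass, field
from statistics import mean
from typing import Any, Callable, Optional


@dataclass
class Step:
    name: str
    run: Callable[[Any], Any]     # stand-in for a model-driven action
    check: Callable[[Any], bool]  # cheap automated sanity check on the output


@dataclass
class RunLog:
    completed: list = field(default_factory=list)
    needs_review: Optional[str] = None  # name of the step handed to a human


def run_pipeline(steps: list, data: Any) -> tuple:
    """Run steps in order; stop and flag for a human at the first failed check.

    Returns the last output that passed its check, plus the run log.
    """
    log = RunLog()
    for step in steps:
        output = step.run(data)
        if not step.check(output):
            log.needs_review = step.name  # hand off instead of guessing
            return data, log
        log.completed.append(step.name)
        data = output
    return data, log


# Hypothetical steps; in a real system each `run` might call the model.
steps = [
    Step("clean", lambda rows: [r for r in rows if r is not None],
         lambda rows: len(rows) > 0),
    Step("analyze", lambda rows: {"n": len(rows), "mean": mean(rows)},
         lambda stats: stats["n"] >= 3),
    Step("report", lambda s: f"{s['n']} records, mean {s['mean']:.1f}",
         lambda text: text.strip() != ""),
]

result, log = run_pipeline(steps, [4, None, 6, 8])
print(result)         # 3 records, mean 6.0
print(log.completed)  # ['clean', 'analyze', 'report']
```

Stopping at the first failed check, rather than letting later steps build on a bad intermediate result, is what keeps the human reviewer's job small and well defined.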

Security, Reliability and Cost: What Teams Need to Know

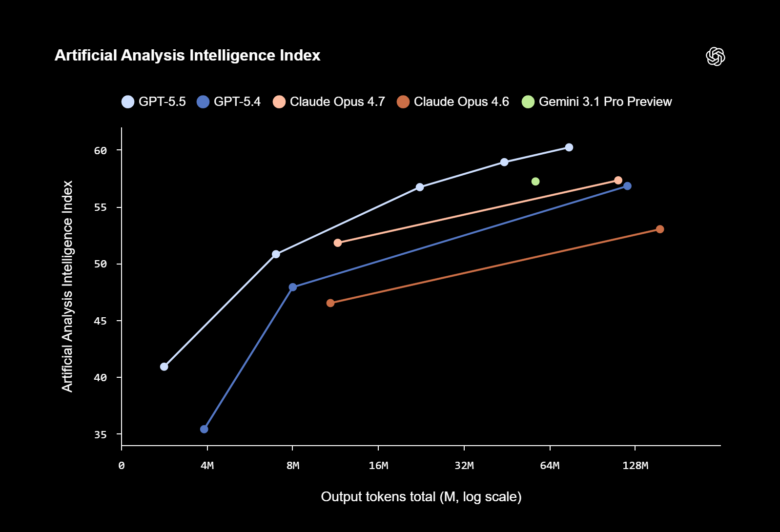

OpenAI has framed GPT‑5.5 as a model that must be safe enough for production use. It includes stronger security mechanisms, especially around cybersecurity tasks, where enhanced capabilities are paired with new protections to limit misuse. Security experts also get controlled access, letting them use GPT‑5.5 to harden digital infrastructure, for example by assisting with threat modeling or by analyzing logs and configurations for weak points. On the efficiency side, GPT‑5.5 often reaches results in fewer interactions, which cuts token usage and makes it a more cost‑effective API backbone than many current mainstream models. This mirrors a wider industry trend toward token‑efficient architectures, seen for example in Ling‑2.6‑flash, which uses a sparse Mixture‑of‑Experts design to cut inference cost sharply while preserving capability. For SaaS builders and enterprises, the implication is clear: newer models are not just smarter; they are increasingly optimized for sustained, large‑scale deployment as engines embedded inside products.
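Whether fewer interactions actually lower the bill is simple arithmetic that teams can run with their own numbers. The sketch below assumes a chat‑style API that bills input and output tokens separately per million and resends the whole conversation on every turn. All prices, token counts and turn counts are made‑up placeholders, not published GPT‑5.5 figures, and `task_cost` is a hypothetical helper.

```python
def task_cost(turns: int, prompt_tokens: int, reply_tokens: int,
              usd_per_m_input: float, usd_per_m_output: float) -> float:
    """Estimate the API cost of one task that takes `turns` prompt/reply rounds.

    Assumes every turn resends the full conversation so far as input,
    so each extra turn costs more than the one before it.
    """
    total_in = 0
    total_out = 0
    history = 0  # tokens accumulated from earlier turns
    for _ in range(turns):
        total_in += history + prompt_tokens
        total_out += reply_tokens
        history += prompt_tokens + reply_tokens
    return (total_in * usd_per_m_input + total_out * usd_per_m_output) / 1_000_000


# Placeholder numbers only: a cheaper model that needs 4 corrective turns
# versus a pricier one that reaches a usable answer in 2.
baseline = task_cost(turns=4, prompt_tokens=1500, reply_tokens=800,
                     usd_per_m_input=2.0, usd_per_m_output=8.0)
fewer_turns = task_cost(turns=2, prompt_tokens=1500, reply_tokens=800,
                        usd_per_m_input=3.0, usd_per_m_output=12.0)

print(f"baseline:    ${baseline:.4f} per task")     # baseline:    $0.0652 per task
print(f"fewer turns: ${fewer_turns:.4f} per task")  # fewer turns: $0.0351 per task
```

Because resent history grows with every turn, cutting turns can outweigh a higher per‑token price; with real prices and measured turn counts the comparison may well come out differently.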

How to Pilot GPT‑5.5 Without Disrupting Existing Workflows

Adopting GPT‑5.5 does not require a wholesale overhaul of current tools. A practical approach is to start with narrow, high‑leverage scenarios. For engineering teams, that might mean using GPT‑5.5 only for test generation or documentation at first, then expanding to refactoring and feature scaffolding once trust is built. Non‑technical teams can begin with content drafting or meeting‑notes synthesis before automating more complex workflows. Because GPT‑5.5 emphasizes autonomous task planning, it is well suited to pilot deployments as a companion inside existing platforms, such as IDE plugins, ticketing systems and BI dashboards, rather than as a standalone chatbot. Teams should log model decisions, track error patterns and define clear guardrails for sensitive data that complement the model’s upgraded security features. Over time, organizations can compare GPT‑5.5’s efficiency and reliability against their current code‑generation or automation stack, then selectively replace components where the new model demonstrably improves speed, quality or cost.
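Logging, error tracking and a sensitive‑data guardrail can be prototyped as a thin wrapper around whatever client a team already uses. This is a minimal sketch under stated assumptions: `fake_model` stands in for a real API call, `PilotLog` and `redact` are hypothetical names, and the two regular expressions are illustrative patterns, not a complete PII filter.

```python
import re
from collections import Counter
from dataclasses import dataclass, field
from typing import Callable, Optional

# Hypothetical guardrail patterns: e-mail addresses and long digit runs
# (e.g. account numbers). Illustrative only, not a complete PII filter.
EMAIL = re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+")
LONG_NUMBER = re.compile(r"\b\d{9,}\b")


def redact(text: str) -> str:
    """Mask sensitive-looking substrings before text is sent to the model."""
    text = EMAIL.sub("[EMAIL]", text)
    return LONG_NUMBER.sub("[NUMBER]", text)


@dataclass
class PilotLog:
    """Records every model call and counts failures by exception type."""
    records: list = field(default_factory=list)
    errors: Counter = field(default_factory=Counter)

    def call(self, model: Callable[[str], str], task: str,
             prompt: str) -> Optional[str]:
        safe_prompt = redact(prompt)  # guardrail runs before anything leaves
        try:
            reply = model(safe_prompt)
        except Exception as exc:
            self.errors[type(exc).__name__] += 1  # error patterns for review
            self.records.append({"task": task, "prompt": safe_prompt, "ok": False})
            return None
        self.records.append({"task": task, "prompt": safe_prompt,
                             "reply": reply, "ok": True})
        return reply


def fake_model(prompt: str) -> str:
    """Stand-in for a real GPT-5.5 API call (hypothetical)."""
    if "timeout" in prompt:
        raise TimeoutError("simulated upstream timeout")
    return f"Summary: {prompt}"


log = PilotLog()
log.call(fake_model, "meeting-notes",
         "Follow up with ana@example.com about invoice 123456789")
log.call(fake_model, "meeting-notes", "simulate a timeout")
print(log.records[0]["prompt"])  # Follow up with [EMAIL] about invoice [NUMBER]
print(dict(log.errors))          # {'TimeoutError': 1}
```

Keeping the log as plain records makes it easy to export later, when the pilot's error rates are compared against the existing stack.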