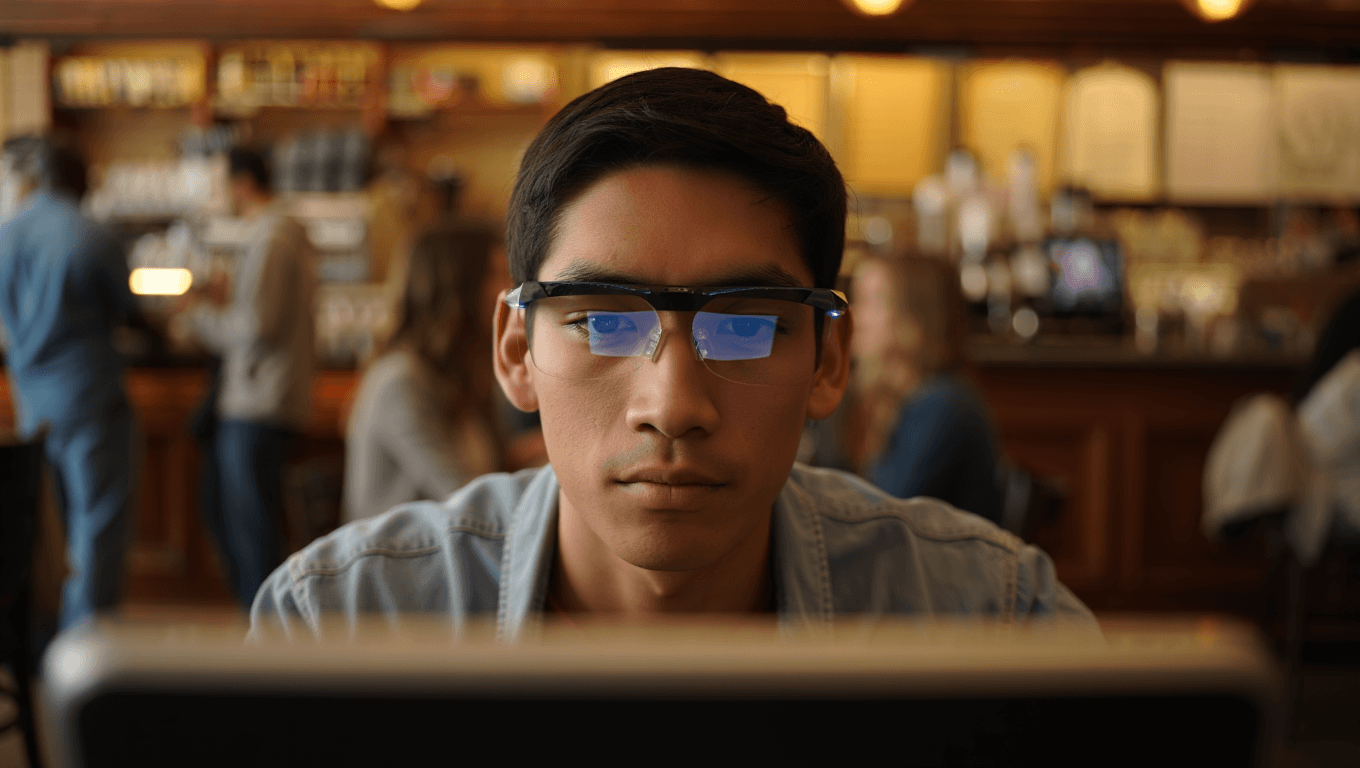

How Live-Captioning Glasses Turn Speech into On-Screen Subtitles

Live captioning glasses are emerging as one of the most practical forms of hearing assistance technology, especially in crowded rooms and on busy streets. Instead of forcing you to stare at a phone screen, these AI smart glasses project real-time subtitles directly into your field of view. Microphones pick up speech around you, AI models convert it to text, and a tiny display overlays the captions so you can maintain eye contact and situational awareness. Reviews from tech outlets suggest that accuracy has reached the point where following fast, overlapping conversations in public is practical rather than just a lab demo. This shift matters for anyone who struggles in noisy environments, not only people with hearing loss: captions can clarify muffled voices, unfamiliar accents, and speech in echo-prone spaces, making in-person interactions more inclusive and less exhausting.
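For readers curious about what happens between the microphone and the display, the flow described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions, not how any of the products covered here are actually built: `CaptionBuffer`, `fake_transcribe`, and `run_pipeline` are hypothetical names, the speech-to-text step is a stand-in that treats its input as already-recognized text, and the 20-character, two-line display size is invented for the example.

```python
from collections import deque
import textwrap


class CaptionBuffer:
    """Rolling window of caption lines for a small heads-up display (hypothetical)."""

    def __init__(self, max_chars=20, max_lines=2):
        self.max_chars = max_chars
        # deque with maxlen silently drops the oldest line when full
        self.lines = deque(maxlen=max_lines)

    def push(self, text):
        """Wrap a recognized phrase to the display width and append it."""
        for line in textwrap.wrap(text, width=self.max_chars):
            self.lines.append(line)

    def render(self):
        """Return the lines currently visible on the display."""
        return "\n".join(self.lines)


def fake_transcribe(audio_chunk):
    """Stand-in for a speech-to-text model: the 'audio' here is already text."""
    return audio_chunk.strip()


def run_pipeline(audio_chunks, display):
    """Microphone chunks -> text -> caption overlay, one chunk at a time."""
    for chunk in audio_chunks:
        text = fake_transcribe(chunk)
        if text:  # skip silence / empty recognitions
            display.push(text)
    return display.render()


if __name__ == "__main__":
    display = CaptionBuffer(max_chars=20, max_lines=2)
    chunks = ["Hello there, nice to meet you.", "   ", "Where is the station?"]
    print(run_pipeline(chunks, display))
```

The rolling buffer reflects one plausible design choice: a small display can only show a couple of short lines, so older captions scroll away as new speech arrives.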

Captify and Even: Caption-First Glasses Without Subscription Strings

Among the latest live captioning glasses, Captify and Even stand out for putting captions first rather than flashy mixed-reality tricks. Captify is repeatedly praised for delivering readable subtitles in noisy environments while avoiding heavy recurring fees, an important factor for users who rely on hearing assistance technology every day. Reviewers note that it prioritizes transcription accuracy and comfortable text placement, the two things that determine whether the overlay feels natural during long conversations. Even, another surprise hit, also follows a no-subscription model with its full feature set included, making it appealing to buyers tired of constant app payments. Together, these devices show how accessibility-focused AI smart glasses are shifting toward straightforward, buy-once tools that respect users’ budgets and attention, turning live captioning into a practical everyday accessory rather than an experimental luxury.

Meta Ray‑Ban and Clip-On Displays: Subtitles Built Into Your Style

Not every user wants specialized caption-only eyewear; some prefer real-time subtitles that blend into familiar frames. Meta’s Ray‑Ban line brings live captioning and translation into a mainstream brand, combining fashion with function. For people already invested in a single-vendor ecosystem, this offers a seamless way to add hearing assistance technology without changing their look. At the other end of the spectrum, Xreal and Aura-style clip-ons take a modular approach. These lightweight displays attach to existing glasses and pair with a phone app that handles speech-to-text, accepting some added latency in exchange for a lower overall cost and more flexible use. Travelers and occasional users may appreciate being able to pack a small clip-on rather than a full smart frame, which shows that accessibility-focused smart glasses can adapt to different lifestyles and budgets.

Rokid and Emerging AI Glasses: Powerful Features, New Questions

Beyond established brands, companies like Rokid and other AI glasses makers are pushing aggressive augmented reality demos that showcase bold caption overlays and virtual screens. These devices often combine live captioning with expansive displays for media, productivity, and translation, blurring the line between assistive tech and full AR headsets. Early reports suggest feature-rich experiences at comparatively attractive prices, but they also raise questions about privacy and about data collection rules that vary from place to place. Users considering these options should weigh not only display quality and caption accuracy, but also how their voice and conversation data might be handled by cloud services. Support, software updates, and transparency will be critical in determining whether these ambitious products become trusted companions or remain niche experiments in a rapidly evolving market.

What These 5 Glasses Mean for Everyday Conversations

The rise of these five live captioning glasses signals a turning point in how we experience spoken interaction in real-world settings. Instead of relying on lip reading, guessing, or stepping away to check a phone, users can keep their focus on faces and body language while captions quietly fill in the gaps. Early reviews suggest that accuracy in public spaces is now good enough for daily use, provided battery life and fit meet personal needs. With entry prices reported around USD 699 (approx. RM3,260) for some models, buyers are weighing the one-time cost against the benefits of subscription-free operation and long-term accessibility. As more people experiment with caption-capable smart glasses, wearing discreet captioning eyewear could become a wider social norm, making conversations more inclusive for anyone navigating noise, fatigue, or hearing differences.