Mythos Enters the Security Arena Amid High Expectations

Frontier AI models are being promoted as transformative tools for vulnerability detection and exploit generation. Anthropic’s Mythos has become the poster child, billed as so powerful at finding software flaws that it cannot be widely released. Security researchers used an early Mythos Preview to uncover a complex macOS exploit chain, linking two bugs into a privilege escalation path that bypassed built-in protections. The model has also been integrated into programs like Project Glasswing, which routes Mythos analyses to high-profile open source maintainers via intermediaries rather than granting them direct access. This framing positions Mythos as critical infrastructure for defending digital systems and suggests it can surface subtle flaws beyond the reach of traditional static analysis and fuzzing. Yet as more practitioners report their hands-on experience, a more nuanced picture of how effective AI bug hunting really is has begun to emerge.

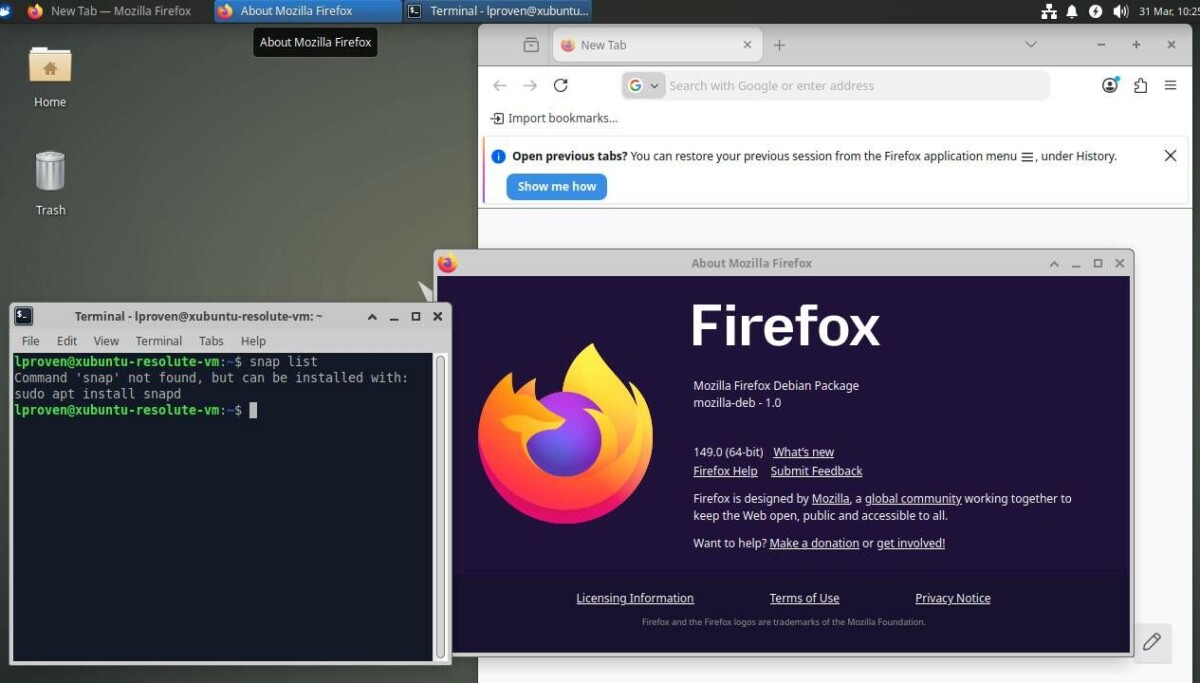

Firefox’s Bug Surge Highlights the Power of AI Harnesses

Mozilla’s Firefox team reported a dramatic spike in security fixes, from 76 issues in March to 423 in April, after bringing Mythos and its sibling model Opus 4.6 into their pipeline. Mythos was credited with identifying 271 bugs in Firefox 150, including a high-severity heap use-after-free dating back roughly two decades and several sandbox escape paths that are notoriously hard to spot with fuzzing alone. Yet Mozilla’s engineers stressed that the real breakthrough was not just the model but the agentic harness wrapped around it. By iteratively steering the model, filtering noise, and curating report formats, they turned early, low-signal AI outputs into usable security insights. Their experience underscores a key theme in applying frontier AI models to cybersecurity: middleware and workflow integration can matter as much as raw model power. That raises the question of whether organizations should prioritize access to frontier systems or better orchestration of the tools they already have.
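The "filtering noise" step described above can be pictured with a small sketch. It is not Mozilla's harness, whose internals the article does not describe. The `Report` fields, the file paths, the confidence score, and the 0.7 threshold are all hypothetical, chosen only to show how duplicate and low-confidence model outputs might be collapsed before a human looks at them.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Report:
    """One raw model finding. All fields are illustrative assumptions."""
    file: str
    line: int
    kind: str
    confidence: float  # hypothetical model-reported score in [0, 1]


def triage(reports, min_confidence=0.7):
    """Drop low-confidence reports and keep the best report per location."""
    best = {}
    for report in reports:
        if report.confidence < min_confidence:
            continue  # treat as noise
        key = (report.file, report.line, report.kind)
        # Keep only the highest-confidence duplicate for each location.
        if key not in best or report.confidence > best[key].confidence:
            best[key] = report
    # Highest-confidence findings first, so reviewers see them earliest.
    return sorted(best.values(), key=lambda r: r.confidence, reverse=True)


# Hypothetical raw output: two duplicates and one weak report.
RAW = [
    Report("dom/node.cpp", 120, "use-after-free", 0.92),
    Report("dom/node.cpp", 120, "use-after-free", 0.81),
    Report("net/cache.cpp", 44, "integer-overflow", 0.35),
]
TRIAGED = triage(RAW)
```

In this toy run, three raw reports reduce to a single finding. A real harness would presumably add further stages, such as re-prompting the model or reproducing the bug, before anything reaches a maintainer.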

cURL Maintainer Calls Mythos Hype ‘Primarily Marketing’

Not everyone is convinced that Mythos represents a step change in vulnerability detection. cURL creator Daniel Stenberg participated in Anthropic’s Project Glasswing, where an Anthropic operator ran Mythos against the cURL codebase and returned a report claiming five confirmed security vulnerabilities. After several hours of review, Stenberg’s security team concluded that only one issue was a genuine vulnerability, and even that was categorized as low severity and scheduled for disclosure alongside a future cURL release. The remaining items were either false positives or ordinary bugs already captured in documentation. Stenberg acknowledged that Mythos produced well-written descriptions and helped surface non-security defects, but argued that its performance was comparable to existing tools, not a game-changer. He characterized the surrounding narrative as “primarily marketing,” sharpening community skepticism about the effectiveness of AI bug hunting and highlighting the danger of overselling frontier models before their operational value is proven.
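The figures above translate into a simple precision number: one confirmed vulnerability out of five reported. The helper below is a minimal sketch of that arithmetic. The function name is an assumption for illustration, and a single report is far too small a sample to generalize from.

```python
def precision(confirmed: int, reported: int) -> float:
    """Fraction of reported findings that survived human review."""
    if reported <= 0:
        raise ValueError("reported must be positive")
    return confirmed / reported


# Figures from the cURL report described above: 5 claimed, 1 confirmed.
CURL_PRECISION = precision(confirmed=1, reported=5)
print(f"{CURL_PRECISION:.0%}")  # prints 20%
```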

Sandyaa Shows Open Source Can Deliver Autonomous Security Scanning

While frontier systems dominate headlines, open-source projects like Sandyaa suggest that capable alternatives exist without restricted models. Developed by SecureLayer7, Sandyaa uses large language models to perform autonomous security scanning of source code, from initial ingestion to exploit proof-of-concept generation. It recursively analyzes call chains and data flows, revisits code to refine hypotheses, and runs multiple verification phases, such as contradiction detection and exploitability proof, to cut false positives. For each confirmed bug, it emits a detailed write-up, a Python exploit, setup guidance, and an evidence file mapping claims to specific lines of code. Sandyaa targets a broad spectrum of issues, from memory safety flaws and logic bugs to injection vulnerabilities, cryptographic misuse, and unsafe APIs. Crucially, an attacker-control analyzer drops findings that cannot be reached from untrusted input. This architecture demonstrates that autonomous bug hunting does not strictly require access to frontier AI models and can be realized through carefully designed, open tooling.
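The idea behind an attacker-control filter can be sketched as a reachability check over a call graph. This is not Sandyaa's implementation, which the article does not detail. The `Finding` type, the function names, and the toy call graph are all hypothetical, and a real analyzer would presumably track data flow as well as call edges.

```python
from collections import deque
from dataclasses import dataclass


@dataclass(frozen=True)
class Finding:
    """A candidate bug located in one function (illustrative type)."""
    function: str
    description: str


def reachable_from(call_graph, entry_points):
    """Breadth-first search over caller -> callees edges."""
    seen = set(entry_points)
    queue = deque(entry_points)
    while queue:
        caller = queue.popleft()
        for callee in call_graph.get(caller, ()):
            if callee not in seen:
                seen.add(callee)
                queue.append(callee)
    return seen


def filter_attacker_controlled(findings, call_graph, entry_points):
    """Keep findings in functions reachable from untrusted entry points."""
    reachable = reachable_from(call_graph, entry_points)
    return [f for f in findings if f.function in reachable]


# Hypothetical call graph: only handle_request receives untrusted input.
CALL_GRAPH = {
    "handle_request": ["parse_header", "log_event"],
    "parse_header": ["copy_value"],
    "run_self_test": ["legacy_decode"],
}
FINDINGS = [
    Finding("copy_value", "possible buffer overflow"),
    Finding("legacy_decode", "possible integer overflow"),
]
KEPT = filter_attacker_controlled(FINDINGS, CALL_GRAPH, ["handle_request"])
```

Here the `legacy_decode` finding is dropped because no path leads to it from `handle_request`, while the `copy_value` finding survives. Filtering on reachability trades some recall for fewer reports that a maintainer would dismiss as unexploitable.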

ROI, Policy, and the Future of AI‑Assisted Security

The contrasting experiences with Mythos and tools like Sandyaa raise unresolved questions about the return on investment from frontier AI models in cybersecurity. Mozilla’s success hints at strong upside when advanced models are paired with sophisticated orchestration layers, while cURL’s muted results suggest diminishing marginal gains for mature, heavily audited projects. Meanwhile, open-source alternatives show that autonomous bug hunting and exploit writing are possible without privileged access to top-tier proprietary models. Despite this mixed track record, policymakers and technology firms increasingly frame frontier systems as critical infrastructure for defending software ecosystems and are collaborating on controlled deployments aimed at protecting widely used platforms. For enterprise security teams, the immediate challenge is strategic: whether to invest in expensive access to closed frontier models, double down on model-agnostic middleware and verification pipelines, or blend both approaches while rigorously measuring how effective AI bug hunting is in their own environments.