Agentic AI Readiness: Hype Meets Structural Reality

Agentic AI readiness is becoming a defining issue for enterprise AI adoption. While 62% of companies are already experimenting with autonomous AI agents, most initiatives remain stuck in pilot mode. A key brake is trust: 45% of business leaders cite data accuracy and bias concerns, and more than 40% of agentic AI projects are expected to be canceled by 2027. Fragmented and legacy data environments are a primary culprit. Agents operating on incomplete, siloed information are prone to hallucinations, which undermines confidence in using them for critical workflows. Without modern connectivity frameworks, such as standardized interfaces in the style of the Model Context Protocol (MCP), and unified, high-speed access to organizational data, even sophisticated agents are forced into speculative reasoning. The result is an AI landscape where technical capability races ahead while structural readiness, governance, and enterprise-grade reliability lag, widening the gap between proofs of concept and scaled production deployment.

Culture Shift: Lessons from Google Cloud Next and Macy’s

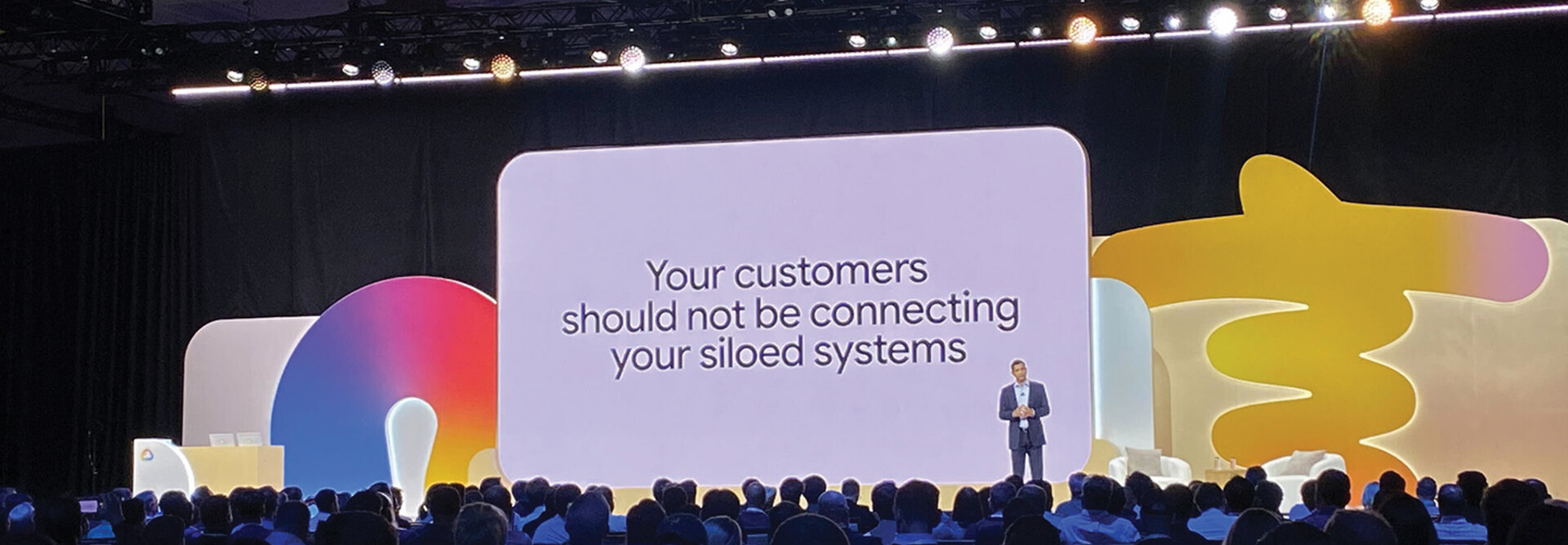

The path from pilot to production is not just technical; it is cultural. At Google Cloud Next, executives spotlighted Macy’s “Ask Macy’s” customer experience agent as a template for expanding AI agent adoption. Built on Gemini Enterprise for Customer Experience, the agent compresses discovery, purchase, service, and fulfillment into a single interaction, even verifying real-time inventory and bridging digital and physical commerce. Macy’s leaders emphasized that success required more than a new interface: teams had to trust agent recommendations, redesign workflows, and align metrics around outcomes rather than tickets handled. Google Cloud speakers framed agentic AI as a move from static knowledge bases to context-aware digital coworkers. That mindset shift—treating agents as part of a digital workforce—is now critical for enterprises that want to move beyond isolated pilots and embed AI agent governance, training, and accountability into everyday operations rather than leaving agents as side projects.

Anthropic’s Autonomous ‘AI Employees’ vs Enterprise Caution

The most striking contrast in enterprise AI adoption is between how AI companies operate internally and how traditional enterprises do. Anthropic disclosed that inside its own operations, fully autonomous “AI employees” are no longer hypothetical. Over 90% of code for new Claude models and features is now written by AI agents, with human engineers acting as architects and auditors rather than primary developers. Internal autonomy metrics improved sharply, with the number of human interventions per session dropping as agents took on more complex work. Yet outside this environment, most organizations report that only a fraction of their code is AI-written and that large-scale deployment of autonomous AI agents remains rare. Liability exposure, hallucination risks, and organizational inertia keep enterprises cautious. This divergence underscores a central challenge: the capability to automate exists, but the governance maturity, risk tolerance, and accountability structures required to trust AI “employees” in production are still developing.

New Guardrails: Token Spend Control and Agent Security

As organizations test autonomous AI agents in real workflows, operational fears are surfacing around runaway token spend and misbehaving agents. Portal26’s new Agentic Token Control module targets the first problem by giving enterprises real-time visibility into how many tokens their agents consume. Teams can set policy-based thresholds, throttle activity, or even pause or terminate agents when usage nears or exceeds budgeted limits. This addresses both cost and operational risk by preventing recursive loops and unstable workflows from spiraling. In parallel, Codenotary is extending its software supply chain protection into AI agent governance with AgentMon and AgentX. AgentMon provides real-time observability into networks of autonomous AI agents, tracking decision chains and behavior across infrastructure. Combined with AgentX’s focus on security and autonomous remediation, these tools aim to make continuous verification, trust, and automated incident response foundational capabilities rather than afterthoughts in agentic AI readiness.
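To make the idea of policy-based thresholds concrete, here is a minimal Python sketch of how a token budget could map to throttle, pause, and terminate decisions. It is an illustration only and does not reflect Portal26's actual product or API; the names `TokenPolicy`, `TokenMeter`, and `Action`, along with the default threshold fractions, are assumptions made for this example.

```python
from dataclasses import dataclass
from enum import Enum


class Action(Enum):
    ALLOW = "allow"
    THROTTLE = "throttle"
    PAUSE = "pause"
    TERMINATE = "terminate"


@dataclass
class TokenPolicy:
    """Hypothetical policy: thresholds are fractions of the token budget."""
    budget: int                 # total tokens the agent may consume
    throttle_at: float = 0.8    # usage nearing the budget: slow the agent down
    pause_at: float = 1.0       # budget reached: pause pending human review
    terminate_at: float = 1.2   # clear overrun (e.g. a recursive loop): stop

    def __post_init__(self) -> None:
        if self.budget <= 0:
            raise ValueError("budget must be positive")
        if not self.throttle_at <= self.pause_at <= self.terminate_at:
            raise ValueError("thresholds must be in ascending order")


class TokenMeter:
    """Tracks cumulative token usage for one agent and maps it to an action."""

    def __init__(self, policy: TokenPolicy) -> None:
        self.policy = policy
        self.used = 0

    def record(self, tokens: int) -> Action:
        """Add the tokens from one agent step and return the policy decision."""
        if tokens < 0:
            raise ValueError("tokens must be non-negative")
        self.used += tokens
        ratio = self.used / self.policy.budget
        # Check the most severe threshold first.
        if ratio >= self.policy.terminate_at:
            return Action.TERMINATE
        if ratio >= self.policy.pause_at:
            return Action.PAUSE
        if ratio >= self.policy.throttle_at:
            return Action.THROTTLE
        return Action.ALLOW
```

In this sketch, an orchestrator would call `record` after every model call and act on the returned value. A real deployment would likely also need per-team budgets, time windows, and alerting, none of which are modeled here.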

From Pilot to Production: Practical Steps to De-Risk Agentic AI

Bridging the gap between experimentation and production requires deliberate design rather than blind scaling. Organizations should begin with narrowly scoped, high-value use cases, such as customer support triage or internal knowledge retrieval, where autonomous AI agents can be evaluated against clear KPIs. Human-in-the-loop approvals should remain standard for consequential actions, allowing agents to draft decisions while humans retain final authority. Monitoring dashboards that expose token usage, decision chains, and error rates are essential; platforms such as Portal26 (for spend control) and Codenotary’s AgentMon (for behavioral observability) can be used for this. Equally important is defining responsibility models: who owns an agent’s output, who can pause or reconfigure it, and how incidents are escalated and remediated. Finally, enterprises must modernize data infrastructure so agents access consistent, high-quality context. With structured governance, observability, and culture change, enterprises can turn agentic AI from risky experiments into dependable, autonomous workflows.
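The human-in-the-loop pattern described above can be sketched as a small approval gate. This is a hedged illustration, not a reference implementation: `ProposedAction`, `ApprovalGate`, the `consequential` flag, and the audit log format are all hypothetical names chosen for this example, and the approver callback stands in for whatever review channel an organization actually uses.

```python
from dataclasses import dataclass, field
from typing import Callable, List, Optional


@dataclass
class ProposedAction:
    """An action drafted by an agent (hypothetical structure)."""
    agent_id: str
    description: str
    consequential: bool  # True if a human must sign off before execution


@dataclass
class ApprovalGate:
    """Runs low-risk actions directly; routes consequential ones to a human."""
    approver: Callable[[ProposedAction], bool]  # returns True to approve
    audit_log: List[str] = field(default_factory=list)

    def submit(
        self, action: ProposedAction, execute: Callable[[], str]
    ) -> Optional[str]:
        """Execute the action if permitted; return its result or None."""
        if action.consequential and not self.approver(action):
            # The agent only drafted the decision; the human declined it.
            self.audit_log.append(
                f"REJECTED {action.agent_id}: {action.description}"
            )
            return None
        result = execute()
        self.audit_log.append(
            f"EXECUTED {action.agent_id}: {action.description}"
        )
        return result
```

With an approver that declines everything, a routine step such as drafting a reply would still run, while a consequential step such as issuing a refund would be blocked and logged. The audit log gives a simple starting point for the responsibility model: it records which agent proposed what and whether it ran.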