From Prompting to Planning: Why Spec-Driven AI Is Emerging

AI coding automation has quickly moved beyond one-off prompts pasted into chat windows. As teams scaled their use of large models, they hit a familiar ceiling: improvisational “vibe” coding made it hard to enforce engineering standards, track work, or prevent subtle regressions. A new wave of spec-driven workflow tools aims to fix that by treating code generation as the final step in a structured pipeline rather than the first. Instead of asking an AI to implement a feature from a single natural-language request, these systems enforce an explicit progression from intent to specification, plan, and tasks, with review checkpoints built in. GitHub’s Spec-Kit and OpenAI’s Symphony agent orchestrator are two of the clearest examples. Both assume that AI agents should operate inside guardrails resembling familiar software processes (ticket queues, pull requests, and staged reviews) rather than free-form chat. The trade-off is less improvisational magic in exchange for throughput that can be tracked and measured.

How GitHub Spec-Kit Turns Ideas into Specs, Plans, and Tasks

GitHub Spec-Kit takes a feature idea and forces it through a structured pipeline before any code is written. The toolkit, open-sourced and now at v0.8.7 with tens of thousands of stars and thousands of forks, centers on a Specify CLI plus templates and helper scripts tailored for agentic development. Its core stages (Specify, Plan, Tasks, Implement) mirror how disciplined teams work: a specification captures the product scenario, the plan converts that scenario into technical direction, and tasks break the work into assignable units. Slash commands for establishing a project constitution (its governing principles), writing the specification, planning, breaking down tasks, converting tasks into issues, and implementing give developers a clearly defined command surface. Optional clarify, analyze, and checklist steps act as quality gates, prompting teams to fill in missing details, check that the artifacts are consistent with one another, and challenge assumptions. By the time an AI coding agent starts writing code, there is already an artifact trail that managers and reviewers can inspect without decoding a single giant prompt.
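To make the stage-and-gate idea concrete, here is a minimal Python sketch of a pipeline that refuses to advance when a checkpoint reports problems. It assumes a toy model in which each stage is a plain function from one text artifact to the next; the `Pipeline` and `Artifact` classes, the producer functions, and `spec_gate` are hypothetical and do not reflect how Spec-Kit’s CLI, templates, or scripts are actually implemented.

```python
from dataclasses import dataclass, field
from typing import Callable

# Hypothetical model of a Spec-Kit-style pipeline. The stage names come from
# the article; the artifact contents and gate logic are invented for
# illustration and are not Spec-Kit's own templates or scripts.


@dataclass
class Artifact:
    stage: str
    content: str


@dataclass
class Pipeline:
    # Ordered (stage name, producer) pairs; each producer turns the previous
    # artifact's content into the next artifact's content.
    stages: list[tuple[str, Callable[[str], str]]]
    # Optional quality gate per stage: returns problems found (empty = pass).
    gates: dict[str, Callable[[str], list[str]]] = field(default_factory=dict)
    # Every artifact produced so far, kept for reviewers to inspect.
    trail: list[Artifact] = field(default_factory=list)

    def run(self, idea: str) -> list[Artifact]:
        current = idea
        for name, produce in self.stages:
            current = produce(current)
            self.trail.append(Artifact(name, current))
            problems = self.gates.get(name, lambda _content: [])(current)
            if problems:
                # Halt here so later stages (including Implement) never run.
                raise ValueError(f"{name} checkpoint failed: {problems}")
        return self.trail


# Toy producers standing in for AI-generated artifacts.
def specify(idea: str) -> str:
    return f"SPEC: {idea}; scenario: user downloads a report as CSV"


def plan(spec: str) -> str:
    return f"PLAN for [{spec}]: add an export endpoint"


def tasks(plan_text: str) -> str:
    return "TASKS: 1) endpoint 2) CSV writer 3) tests"


def implement(task_list: str) -> str:
    return f"CODE implementing {task_list}"


def spec_gate(spec: str) -> list[str]:
    # Loose analogue of a clarify step: open questions block planning.
    return ["spec contains an unresolved question"] if "?" in spec else []


pipeline = Pipeline(
    stages=[("Specify", specify), ("Plan", plan), ("Tasks", tasks), ("Implement", implement)],
    gates={"Specify": spec_gate},
)
trail = pipeline.run("Let users export reports")
print([a.stage for a in trail])  # ['Specify', 'Plan', 'Tasks', 'Implement']
```

Each stage appends to `trail` before its gate runs, so a failed checkpoint still leaves the rejected artifact available for a reviewer to read. Calling `pipeline.run("Which formats?")` on a fresh pipeline would stop after Specify with a `ValueError`.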

Symphony: OpenAI’s Orchestrator for Automated Pull Requests

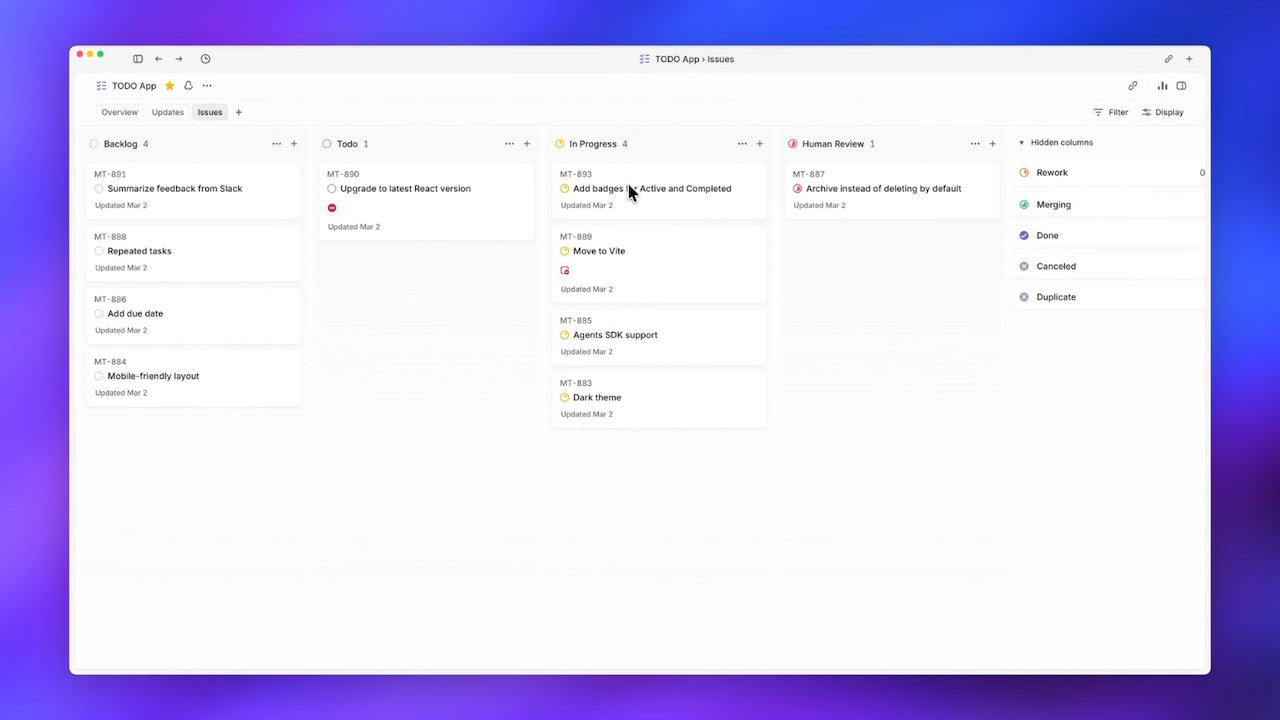

OpenAI Symphony approaches AI coding automation from the orchestration side. Released as an open-source specification plus an Elixir reference implementation, Symphony lets Codex agents pull tickets directly from the Linear issue tracker and keep working until their changes are merged. OpenAI’s internal teams hit a management bottleneck when trying to supervise more than a handful of parallel agent sessions; Symphony’s answer is to remove humans from the dispatch loop. Each open ticket gets its own Codex agent and dedicated workspace. Symphony treats Linear as a state machine, with tickets flowing through Todo, In Progress, Review, and Merging, and it respawns agents that crash mid-task so progress continues. Each agent builds a task tree that executes as a dependency graph, so independent work items can run in parallel. According to OpenAI, this design produced a sixfold increase in merged pull requests during the first three weeks of internal use, suggesting how much automated review and merging can add when supervision is encoded in the workflow itself rather than supplied by a person watching each session.
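The ticket lifecycle and dependency-graph execution can be sketched in a few lines of Python. This is an assumption-laden toy, not Symphony’s Elixir code: the `State` enum adds a hypothetical `DONE` state after Merging, `run_with_respawn` models crash recovery as a simple retry loop, and `parallel_batches` uses the standard library’s `graphlib.TopologicalSorter` to group tasks whose dependencies are already satisfied.

```python
from enum import Enum
from graphlib import TopologicalSorter

# Illustrative sketch, not Symphony's Elixir reference implementation. The
# first four states are the ones the article names; DONE, the linear
# transition table, and the retry policy are assumptions for this example.


class State(Enum):
    TODO = "Todo"
    IN_PROGRESS = "In Progress"
    REVIEW = "Review"
    MERGING = "Merging"
    DONE = "Done"  # hypothetical terminal state


NEXT_STATE = {
    State.TODO: State.IN_PROGRESS,
    State.IN_PROGRESS: State.REVIEW,
    State.REVIEW: State.MERGING,
    State.MERGING: State.DONE,
}


def advance(state: State) -> State:
    """Move a ticket one step along the workflow."""
    if state not in NEXT_STATE:
        raise ValueError(f"{state.value} is a terminal state")
    return NEXT_STATE[state]


def run_with_respawn(work, max_attempts: int = 3):
    """Re-run an agent callable that crashes (raises RuntimeError)."""
    for attempt in range(1, max_attempts + 1):
        try:
            return work(attempt)
        except RuntimeError:
            if attempt == max_attempts:
                raise  # give up and surface the last crash


def parallel_batches(deps: dict[str, set[str]]) -> list[list[str]]:
    """Group tasks so that every task in a batch has its dependencies met."""
    sorter = TopologicalSorter(deps)  # maps task -> tasks it depends on
    sorter.prepare()
    batches = []
    while sorter.is_active():
        ready = sorted(sorter.get_ready())  # these could run concurrently
        batches.append(ready)
        sorter.done(*ready)
    return batches


# Walk one ticket through the workflow.
state, path = State.TODO, [State.TODO.value]
while state is not State.DONE:
    state = advance(state)
    path.append(state.value)
print(path)  # ['Todo', 'In Progress', 'Review', 'Merging', 'Done']


# An agent that crashes on its first attempt and succeeds on the second.
def flaky_agent(attempt: int) -> str:
    if attempt == 1:
        raise RuntimeError("agent crashed mid-task")
    return "pull request opened"


print(run_with_respawn(flaky_agent))  # pull request opened

# A small task tree: schema first, then api, then tests; docs is independent.
deps = {"schema": set(), "docs": set(), "api": {"schema"}, "tests": {"api", "schema"}}
print(parallel_batches(deps))  # [['docs', 'schema'], ['api'], ['tests']]
```

Tasks within one batch do not depend on each other, which is where the parallelism comes from; an orchestrator could hand each task in a batch to its own agent and workspace instead of printing the batch.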

Checkpoints, Guardrails, and Automated Code Review

What unites GitHub Spec-Kit and OpenAI’s Symphony orchestrator is not just automation but the way both embed guardrails into everyday engineering flow. Spec-Kit inserts checkpoints before code generation: specifications that can be critiqued, plans that can be revised, and task lists that can be turned into trackable issues. Each step creates a natural review point where architects or leads can intervene if the direction looks wrong, preventing an AI agent from accelerating down a flawed path. Symphony, meanwhile, bakes review and merging into its ticket state machine. Tickets move through explicit Review and Merging stages, and agents can even create follow-up tickets when they uncover refactoring or performance work that should not be silently folded into a single pull request. In both cases, automated code review is not an add-on linting pass; it is a consequence of structuring AI work around specs, tickets, and PR lifecycles rather than ad-hoc prompts.
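A review checkpoint that also files follow-up tickets might look like the Python sketch below. The `Ticket` and `Finding` types, the `in_scope` flag, and the rule that any in-scope finding sends the ticket back to In Progress are hypothetical choices for illustration; neither tool is claimed to work this way internally.

```python
from dataclasses import dataclass, field
from itertools import count

# Hypothetical sketch of a review checkpoint. The Ticket/Finding shapes and
# the rule that in-scope findings block merging are assumptions made for
# illustration; they are not taken from Symphony or Spec-Kit.

_ticket_numbers = count(1)


@dataclass
class Ticket:
    title: str
    state: str = "Todo"
    number: int = field(default_factory=lambda: next(_ticket_numbers))


@dataclass
class Finding:
    summary: str
    in_scope: bool  # False for work that belongs in a separate pull request


def review_checkpoint(ticket: Ticket, approved: bool,
                      findings: list[Finding], backlog: list[Ticket]) -> str:
    """Gate the Review -> Merging transition and file follow-up tickets."""
    if ticket.state != "Review":
        raise ValueError("checkpoint applies only to tickets in Review")
    for finding in findings:
        if not finding.in_scope:
            # Out-of-scope work (refactoring, performance) becomes a new
            # ticket instead of being folded into this pull request.
            backlog.append(Ticket(f"Follow-up: {finding.summary}"))
    blocking = [f for f in findings if f.in_scope]
    # Merge only with explicit approval and no unresolved in-scope issues;
    # otherwise send the ticket back for another pass.
    ticket.state = "Merging" if approved and not blocking else "In Progress"
    return ticket.state


backlog: list[Ticket] = []
ticket = Ticket("Add CSV export", state="Review")
findings = [Finding("Extract shared CSV helper", in_scope=False)]
print(review_checkpoint(ticket, approved=True, findings=findings, backlog=backlog))  # Merging
print([t.title for t in backlog])  # ['Follow-up: Extract shared CSV helper']
```

Keeping out-of-scope findings as separate tickets keeps each pull request small enough to review, at the cost of a growing backlog that someone still has to triage.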

What Spec-Driven AI Means for Future Development Teams

These spec-driven workflow tools point toward a future in which AI agents feel less like chatbots and more like junior engineers embedded in familiar processes. Spec-Kit’s emphasis on product scenarios, predictable outcomes, and reviewable artifacts gives organizations a way to adopt AI coding automation without abandoning their existing engineering checkpoints. Its issue-conversion capabilities bridge planning, tracking, and implementation, making AI output auditable and schedulable. Symphony’s orchestration model, in which the ticket tracker becomes the supervisor and agents run until merge, tackles the human-attention bottleneck head-on. Together, they suggest that the next competitive edge will come not just from smarter models but from better structures around them: state machines, DAGs of tasks, and standardized spec-driven workflows. Teams that embrace this shift may ship more pull requests at lower coordination cost, while retaining the ability to pause, review, and course-correct before generated code lands in production.